Claude-context hit #1 on GitHub trending today with 7,476 stars and +871 in the last 24 hours. Created by Zilliz (makers of the Milvus vector database), it is an MCP plugin that brings semantic code search to AI coding agents and targets one of their biggest bottlenecks: context window limits. Zilliz reports about 40% lower token consumption from retrieving only the relevant code instead of stuffing the whole codebase into context. That speaks directly to the tokenmaxxing problem TechCrunch recently described: developers spending 10x on tokens for 2x productivity gains.

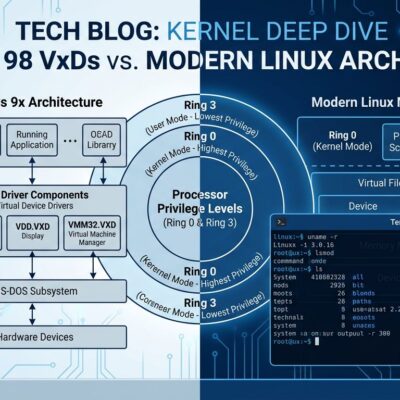

Context windows have hit 1-2M tokens (Claude Opus 4.6, Gemini 3.1 Pro), but production codebases routinely exceed these limits. Enterprise-scale projects often run 100K+ lines of code, translating to 2.5M+ tokens. Claude-context changes the equation: instead of trying to fit everything into context, it uses vector search to retrieve only what matters.

The Problem: Context Windows Can’t Keep Up with Enterprise Codebases

Even 1M-token context windows fall short for most production codebases. At roughly 25 tokens per line, a 1M-token window holds about 40,000 lines of code with documentation, while enterprise applications routinely exceed 100K lines, or 2.5M+ tokens. Worse, research shows 60-80% of tokens get wasted on “figuring out where things are” rather than answering the actual question.
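The arithmetic behind those figures can be sketched in a few lines. The 25-tokens-per-line ratio is an assumption derived from the numbers above (1M tokens for about 40,000 lines). Real ratios vary by language and coding style, so treat the output as a rough estimate.

```python
import math

# Assumption: ~25 tokens per line, implied by the article's figures
# (1,000,000 tokens / 40,000 lines). Not a measured constant.
TOKENS_PER_LINE = 25


def estimated_tokens(lines_of_code: int, tokens_per_line: int = TOKENS_PER_LINE) -> int:
    """Rough token count for a codebase of the given size."""
    return lines_of_code * tokens_per_line


def windows_needed(lines_of_code: int, window_tokens: int = 1_000_000) -> int:
    """How many full context windows the codebase would occupy."""
    return math.ceil(estimated_tokens(lines_of_code) / window_tokens)


print(estimated_tokens(40_000))   # 1000000
print(estimated_tokens(100_000))  # 2500000
print(windows_needed(100_000))    # 3
```

A 100K-line codebase would need three 1M-token windows under this estimate, which is why loading everything at once does not scale.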

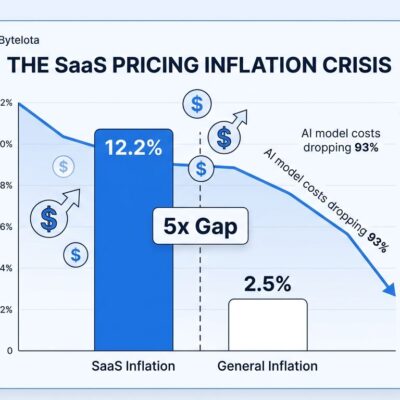

This creates the tokenmaxxing trap. Engineers with the largest token budgets achieve 2x throughput at 10x the token cost, according to TechCrunch’s recent analysis, which works out to roughly 5x the spend per unit of output. Models also struggle with the “Lost in the Middle” phenomenon, where information buried in very long contexts gets missed. More tokens do not mean better results.


Semantic Code Search via MCP: 40% Token Reduction

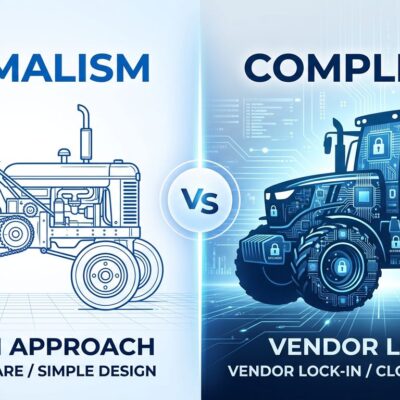

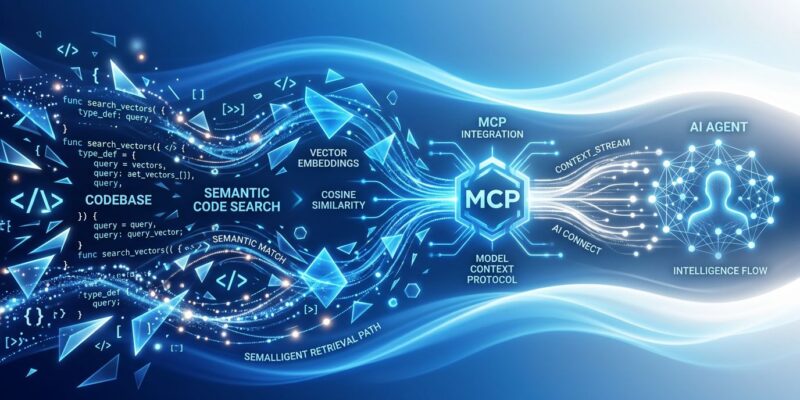

Claude-context uses hybrid search—combining BM25 keyword matching with dense vector semantic similarity—to retrieve only relevant code snippets. Instead of loading thousands of files into context, the AI agent asks “Find functions that handle user authentication” and claude-context returns the 3-5 most relevant functions from millions of lines of code. The Zilliz team measured a 40% token reduction with equivalent retrieval quality.

The architecture works in four steps: First, the codebase gets embedded and stored in a vector database (Zilliz Cloud or self-hosted Milvus). Second, hybrid search combines keyword precision with semantic understanding. Third, an MCP server provides standardized tools that any AI agent can invoke. Fourth, only relevant snippets get injected into the agent’s context window.
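The hybrid retrieval step can be illustrated with a minimal sketch. It is not claude-context's implementation: the snippet ids, the two-dimensional "embeddings", and the use of reciprocal rank fusion (RRF) to merge the two rankings are all assumptions chosen to keep the example small and self-contained.

```python
import math
from collections import Counter

# Hypothetical toy corpus: snippet id -> text.
DOCS = {
    "auth.login": "verify user password and create login session",
    "auth.token": "validate jwt token for user authentication",
    "session.refresh": "refresh expired login session",
    "billing.charge": "charge credit card for payment",
}

# Hypothetical hand-written vectors standing in for a real embedding model.
VECTORS = {
    "auth.login": (0.8, 0.2),
    "auth.token": (0.9, 0.1),
    "session.refresh": (0.7, 0.3),
    "billing.charge": (0.1, 0.9),
}


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score every document against the query with the BM25 formula."""
    tokenized = {doc_id: text.split() for doc_id, text in docs.items()}
    n = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized.values()) / n
    scores = {}
    for doc_id, tokens in tokenized.items():
        tf = Counter(tokens)
        score = 0.0
        for term in query.split():
            df = sum(1 for other in tokenized.values() if term in other)
            if df == 0:
                continue
            idf = math.log((n - df + 0.5) / (df + 0.5) + 1)
            freq = tf[term]
            norm = freq + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * freq * (k1 + 1) / norm
        scores[doc_id] = score
    return scores


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def rank(scores):
    """Ids with a positive score, best first."""
    ordered = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    return [doc_id for doc_id, score in ordered if score > 0]


def hybrid_search(query, query_vector, top_k=3, rrf_k=60):
    """Fuse keyword and dense rankings with reciprocal rank fusion."""
    keyword_rank = rank(bm25_scores(query, DOCS))
    dense_rank = rank({d: cosine(query_vector, v) for d, v in VECTORS.items()})
    fused = Counter()
    for ranking in (keyword_rank, dense_rank):
        for position, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1 / (rrf_k + position)
    return [doc_id for doc_id, _ in fused.most_common(top_k)]


results = hybrid_search("user authentication", (1.0, 0.0))
print(results)  # ['auth.token', 'auth.login', 'session.refresh']
```

In this toy run, `session.refresh` shares no keyword with the query but still surfaces through the dense ranking, while `billing.charge` is left out. That is the behavior hybrid search is meant to provide: keyword hits are rewarded, and semantically close snippets are not lost.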

Hybrid search is the key design choice here: it is more accurate than pure semantic search and faster than brute-force context loading. The MCP integration means it works with any MCP-compatible AI coding tool: Claude Code, Cursor, VS Code, Windsurf, and a dozen others.

MCP Becomes Industry Standard: From Anthropic Experiment to Linux Foundation

MCP (Model Context Protocol) evolved from Anthropic’s internal experiment to an industry standard in just 18 months. As of March 2026, it counts 97M monthly downloads, 10,000 live servers, and adoption by OpenAI (March 2025), Google DeepMind (April 2025), Microsoft, AWS, and Cloudflare. Think of MCP like USB-C for AI applications: a standardized way to connect AI to external systems.

In December 2025, Anthropic donated MCP to the Agentic AI Foundation (under the Linux Foundation), co-founded by Anthropic, Block, and OpenAI, with support from Google, Microsoft, AWS, and Cloudflare. The MCP Dev Summit in April 2026 drew 1,200 attendees. This isn’t an experiment anymore—it’s infrastructure.

Claude Code now supports MCP Tool Search: automatic lazy loading that reduces MCP context usage by 95%. The standardization means developers can build once, use everywhere. Claude-context represents the ecosystem’s maturation: production-ready tooling that solves real developer pain points.

Getting Started: Installation and Usage

Getting started requires Node.js v20.x-v23.x (NOT v24+, which is incompatible), a Zilliz Cloud account (free tier available), and an OpenAI API key for embeddings. For Claude Code users, installation is a single command:

    claude mcp add claude-context \
      -e OPENAI_API_KEY=sk-... \
      -e MILVUS_ADDRESS=https://your-cluster.vectordb.zillizcloud.com \
      -e MILVUS_TOKEN=your-token \
      -- npx @zilliz/claude-context-mcp@latest

Once configured, developers index their codebase with “Index this codebase” (via MCP tool), monitor progress with “Check the indexing status,” then query naturally: “Find payment processing logic” or “Show me error handling for API requests.” The MCP server provides four tools: index_codebase, search_code, clear_index, and get_indexing_status.
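Under the hood, an agent turns a natural-language request into an MCP tool call. The sketch below builds the JSON-RPC 2.0 message an MCP client would send using the protocol's `tools/call` method. The tool name `search_code` comes from the list above, but the argument names (`path`, `query`) and the repository path are assumptions for illustration, so check the server's tool schema for the real parameters.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for the MCP `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Argument names and the path are hypothetical placeholders.
request = build_tool_call(
    1,
    "search_code",
    {"path": "/path/to/repo", "query": "payment processing logic"},
)
print(json.dumps(request, indent=2))
```

In practice the AI coding tool builds and sends this message for you. The point is that every MCP-compatible client speaks the same request shape, which is what makes the server reusable across editors.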

Configuration examples exist for 12+ AI coding tools (Cursor, Windsurf, VS Code, Gemini CLI, and more). The MCP integration means it works with whichever tool you’re already using—no need to switch editors. Smart file filtering reduces index size and costs:

    # Include only specific files
    INCLUDE_PATTERNS="src/**/*.ts,lib/**/*.js"
    # Exclude common patterns
    EXCLUDE_PATTERNS="node_modules/**,dist/**,*.test.ts"

Is Claude-Context Worth It? Decision Criteria

Claude-context shines for large codebases (40K+ lines), team onboarding, legacy code exploration, and token cost optimization. If you’re spending $100+/month on AI coding, a 40% reduction means real savings. Enterprise codebases that exceed context limits get the most value—this tool scales where naive context stuffing fails.

Skip it for small projects under 10K lines that fit in context windows. The setup overhead (Zilliz account, API keys, configuration) isn’t worth it for one-time analysis. The external dependencies (vector DB + embedding API) also make it a poor fit for security-critical on-prem environments: self-hosting Milvus removes the vector database dependency, but the setup described here still sends code to an embedding API. Alternative approaches work better in these cases: naive context stuffing for small projects, manual file selection for high control, grep+AI for exact string matches, or GitHub Copilot Workspace for GitHub-native workflows.
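The criteria above can be condensed into a small helper. This is only a sketch of the article's rules of thumb: the function names are hypothetical, the "evaluate" band between 10K and 40K lines is a judgment call the article does not cover, and the savings estimate assumes the reported 40% reduction applies to your entire token bill.

```python
def recommend(lines_of_code: int, monthly_spend_usd: float, on_prem_only: bool = False) -> str:
    """Map the article's rules of thumb to a rough recommendation."""
    if on_prem_only:
        return "skip"      # external vector DB and embedding API dependencies
    if lines_of_code < 10_000:
        return "skip"      # small enough to fit in a context window
    if lines_of_code >= 40_000 or monthly_spend_usd >= 100:
        return "adopt"     # large codebase or meaningful token spend
    return "evaluate"      # assumption: the article gives no guidance here


def monthly_savings(monthly_spend_usd: float, reduction: float = 0.40) -> float:
    """Estimated savings if the reduction applied to the whole bill."""
    return monthly_spend_usd * reduction


print(recommend(120_000, 150))      # adopt
print(recommend(5_000, 20))         # skip
print(round(monthly_savings(100)))  # 40
```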


The Shift from Autocomplete to Autonomous Agents

The AI coding landscape is shifting from autocomplete (GitHub Copilot inline suggestions) to autonomous agents (Claude Code, Cursor Composer). Context management becomes critical for agents because they operate independently, not just suggesting the next line. Cursor alone has 1M+ users and 360K+ paying customers. Claude Code’s terminal-based agent approach is gaining traction. Windsurf introduced “Cascade” for back-and-forth agent collaboration.

The tokenmaxxing backlash is driving demand for smarter tools. MCP standardization enables this evolution—agents can access external context sources, not just what’s loaded in the editor. Many senior engineers now combine tools: Cursor Pro ($20/month) with Claude Code at moderate API usage ($60-100/month) totals $80-120/month. Context-aware agents beat context-blind autocomplete.

Key Takeaways

- Claude-context brings semantic code search to AI agents via MCP, achieving 40% token reduction through intelligent retrieval rather than brute-force context stuffing

- MCP has become an industry standard with 97M monthly downloads, 10,000 live servers, and Linux Foundation governance: this is infrastructure, not an experiment

- Best for large codebases (40K+ lines), team onboarding, legacy code exploration, and token budgets over $100/month where 40% reduction means real savings

- Free tier available via Zilliz Cloud for experimentation; works with any MCP-compatible AI coding tool (Claude Code, Cursor, VS Code, Windsurf)

- Represents the shift from autocomplete to context-aware autonomous agents—smarter tooling beats higher token spending

Claude-context is available now on GitHub. Start with Zilliz’s free tier to test on your codebase.