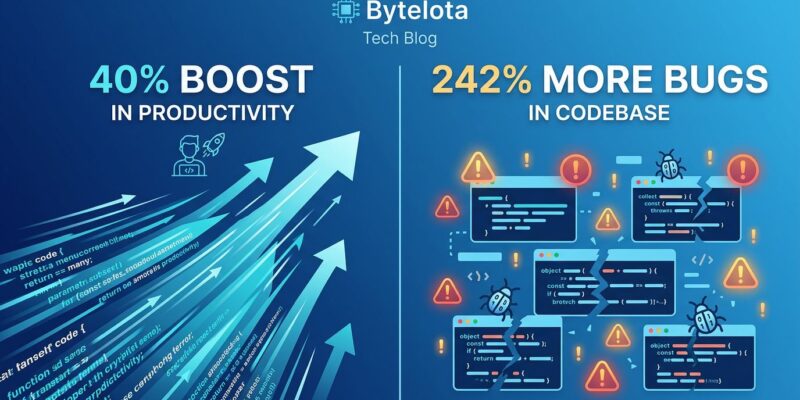

Developers now spend 11.4 hours per week reviewing AI-generated code versus 9.8 hours writing new code, a complete reversal from 2024. AI coding assistants promised to make developers more productive, and they delivered on speed: 30-40% faster output and 98% more merged pull requests. But they broke something else: stability. Quality and process metrics show 242% more incidents per PR, 54% more bugs per developer, and 441% longer review times. Welcome to the AI Productivity Paradox, where developers are busier than ever but organizational metrics stay flat.

The Core Paradox: Speed Up, Stability Down

At the individual level, AI coding tools are crushing it. Developers complete 21% more tasks and merge 98% more pull requests. Cursor, Claude Code, and GitHub Copilot have made writing code faster than ever.

But something strange happens when you zoom out to the organizational level: delivery metrics don’t budge. According to Faros AI’s research across thousands of organizations, the time saved in code creation gets reallocated to auditing and verification. AI-authored changes produce 10.83 issues per PR compared to 6.45 for human-only PRs — a 1.7× increase in problems. Code churn jumped from 33% to 40%, and 40% of AI-generated code gets rewritten within two weeks.

The result? Developers are busier, not more productive. Median PR review time is up 441%. Bugs per developer are up 54%. Incidents per PR are up 242.7%. The bottleneck shifted from writing to reviewing, and we’re spending more time fixing AI’s “subtly wrong” code — code that compiles and passes quick review but contains logic errors that senior engineers catch only after real scrutiny.
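
Percentage figures like these are easy to misread, so here is a minimal Python sketch of the arithmetic behind them. It uses only the numbers quoted above: 10.83 versus 6.45 issues per PR works out to about 1.68× (roughly 68% more issues), and a metric that is “up 441%” ends at 5.41× its baseline, not 4.41×. The helper names are illustrative only.

```python
# Helper names are illustrative, not taken from any analytics tool.

def ratio(new: float, old: float) -> float:
    """How many times larger `new` is than `old`."""
    return new / old


def pct_change(new: float, old: float) -> float:
    """Percentage change from `old` to `new`."""
    return (new / old - 1.0) * 100.0


def multiplier_from_pct_increase(pct: float) -> float:
    """Convert "up X%" into a multiplier of the baseline (up 100% means 2x)."""
    return 1.0 + pct / 100.0


# Issues per PR from the Faros AI figures: AI-authored vs. human-only.
print(f"{ratio(10.83, 6.45):.2f}x")                   # 1.68x
print(f"{pct_change(10.83, 6.45):.0f}% more issues")  # 68% more issues

# "Up X%" figures from this section, as multiples of their baselines.
for label, pct in [("review time", 441), ("incidents per PR", 242.7), ("bugs per developer", 54)]:
    print(f"{label}: {multiplier_from_pct_increase(pct):.2f}x baseline")
```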

The Great Equalizer: Low Performers Gain 4× More

Here’s the counterintuitive part: not all teams benefit equally from AI. Plandek’s analysis of 2,000 organizations found that low-performing teams see nearly 50% improvement in lead time to delivery, while high-performing teams see only 12.5% improvement — a 4× difference.

AI acts as an equalizer, not a multiplier. High-performing teams already had optimized workflows and senior engineers writing clean code, so AI adds only marginal value. Low-performing teams had more low-hanging fruit, such as boilerplate and repetitive tasks, plus more junior developers, who benefit massively from AI assistance.

But the performance gap remains. Even with AI, low-performing teams still deliver less than half the output per engineer of high-performing teams. AI democratizes productivity, but it doesn’t eliminate skill gaps.

Why Traditional DORA Metrics Fall Short

Traditional DORA metrics (Deployment Frequency, Lead Time for Changes, Change Failure Rate, and Failed Deployment Recovery Time) were designed for a world where humans wrote all the code. None of them distinguishes AI-generated code from human-written code, so on their own they can’t show what AI is contributing.

The problem: if deployment frequency goes up but change failure rate also increases, you still don’t know whether AI is helping or hurting. You can’t tell the difference between AI-assisted work and human-authored work unless you explicitly segment them.

Elite engineering teams in 2026 are adding an AI measurement layer. They track three things: what percentage of merged code was AI-assisted or AI-generated, how that percentage trends over time, and how AI-assisted code performs compared to human-written code. A team with a 20% AI code share and a team with a 70% AI code share are operating in fundamentally different modes, even if their DORA numbers are identical.
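
A minimal Python sketch of such an AI measurement layer, assuming each deployment record already carries a flag saying whether the change was AI-assisted (how that flag gets set, whether from editor telemetry or a commit trailer, is left open). The `Deployment` record, its fields, and the sample data are all hypothetical. The point is that one blended change failure rate can hide very different rates per segment.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    ai_assisted: bool   # assumed to be supplied by tooling: was the change AI-assisted?
    lines_changed: int  # size of the change, used here as a proxy for "code share"
    failed: bool        # did the deployment cause a failure in production?


def ai_code_share(deploys: list[Deployment]) -> float:
    """Fraction of changed lines that came from AI-assisted changes."""
    total = sum(d.lines_changed for d in deploys)
    ai = sum(d.lines_changed for d in deploys if d.ai_assisted)
    return ai / total if total else 0.0


def change_failure_rate(deploys: list[Deployment]) -> float:
    """DORA change failure rate: failed deployments / all deployments."""
    return sum(d.failed for d in deploys) / len(deploys) if deploys else 0.0


def segmented_cfr(deploys: list[Deployment]) -> dict[str, float]:
    """Change failure rate split by authorship, alongside the blended rate."""
    ai = [d for d in deploys if d.ai_assisted]
    human = [d for d in deploys if not d.ai_assisted]
    return {
        "ai_assisted": change_failure_rate(ai),
        "human": change_failure_rate(human),
        "blended": change_failure_rate(deploys),
    }


# Hypothetical sample data.
deploys = [
    Deployment(ai_assisted=True, lines_changed=300, failed=True),
    Deployment(ai_assisted=True, lines_changed=200, failed=False),
    Deployment(ai_assisted=False, lines_changed=400, failed=False),
    Deployment(ai_assisted=False, lines_changed=100, failed=False),
]
print(ai_code_share(deploys))  # 0.5
print(segmented_cfr(deploys))  # {'ai_assisted': 0.5, 'human': 0.0, 'blended': 0.25}
```

Here the blended rate of 0.25 looks moderate, while the split shows every failure came from AI-assisted changes. Weighting AI share by lines changed is only one option; counting PRs or commits instead would be equally defensible.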

New Frameworks Emerge: SPACE and DX Core 4

The measurement landscape is evolving. The SPACE framework (Satisfaction and well-being, Performance, Activity, Communication and collaboration, Efficiency and flow) takes a holistic view that goes beyond pure delivery metrics. It captures developer experience, which matters once reviewing AI code becomes more draining than writing new code.

But the most promising evolution is DX Core 4, introduced in December 2024 by Abi Noda and Laura Tacho. It unifies DORA, SPACE, and DevEx into four dimensions: Speed, Effectiveness, Quality, and Business Impact.

DX Core 4 combines system metrics (what actually happened) with self-reported metrics (how developers feel it went), which accounts for the perception gap between objective output and subjective experience. Across 3,500 developers, teams deploying DX Core 4 saw 65% AI adoption, a 31% increase in total throughput, and a 16% productivity lift attributed specifically to AI. Each one-point improvement in DX score saves 13 minutes per developer per week, and top-quartile teams show 4-5× higher performance across speed, quality, and engagement.
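
To get a feel for the 13-minute figure at scale, here is a back-of-the-envelope Python sketch. It assumes the saving scales linearly with both the score gain and headcount, which the reported number does not guarantee; the 3,500-developer input simply reuses the deployment size mentioned above.

```python
# Reported DX Core 4 figure: minutes saved per developer per week, per DX score point.
MINUTES_SAVED_PER_POINT = 13


def weekly_hours_saved(points_gained: float, developers: int) -> float:
    """Org-wide hours saved per week, assuming the saving scales linearly."""
    return points_gained * MINUTES_SAVED_PER_POINT * developers / 60


# Hypothetical scenario: a one-point gain across 3,500 developers.
print(f"{weekly_hours_saved(1, 3500):.1f} hours/week")  # 758.3 hours/week
```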

The Countermeasure: AI Code Review Tools

With 40% of all committed code now AI-generated, human-only code review has become a bottleneck. The market responded with an explosion of AI code review tools.

Anthropic launched Code Review in March 2026: a multi-agent system in which specialized AI reviewers analyze a pull request in parallel. Each agent targets a different class of issue: logic errors, boundary conditions, API misuse, authentication flaws, or compliance violations. A verification step then tries to disprove each finding, which keeps false positives low (fewer than 1% in internal use). The average review costs $15-25 and takes about 20 minutes.
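
The general shape of a multi-agent review with a verification pass can be sketched in a few lines of Python. This is not Anthropic’s implementation or API: the reviewers and the verifier below are toy string heuristics standing in for model calls, and every name (`Finding`, `review`, `boundary_reviewer`, and so on) is hypothetical. What the sketch does show is the structure: specialized reviewers run in parallel over the same diff, and a separate step tries to disprove each candidate finding before it is reported.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    issue_class: str  # e.g. "boundary", "auth"
    line: int         # 1-based line number in the diff
    message: str


# Hypothetical interfaces: in a real system each of these would wrap a model call.
Reviewer = Callable[[str], list[Finding]]
Verifier = Callable[[Finding, str], bool]


def review(diff: str, reviewers: list[Reviewer], verify: Verifier) -> list[Finding]:
    """Run specialized reviewers in parallel, then keep only findings that survive verification."""
    with ThreadPoolExecutor(max_workers=max(1, len(reviewers))) as pool:
        candidates = [f for batch in pool.map(lambda r: r(diff), reviewers) for f in batch]
    return [f for f in candidates if verify(f, diff)]


def boundary_reviewer(diff: str) -> list[Finding]:
    """Toy heuristic: `<= len(` in an index check is a classic off-by-one."""
    return [Finding("boundary", i, "possible off-by-one")
            for i, text in enumerate(diff.splitlines(), start=1) if "<= len(" in text]


def auth_reviewer(diff: str) -> list[Finding]:
    """Toy heuristic: flag any assignment to `password` as a possible hard-coded secret."""
    return [Finding("auth", i, "possible hard-coded credential")
            for i, text in enumerate(diff.splitlines(), start=1) if "password =" in text]


def verify(finding: Finding, diff: str) -> bool:
    """Toy verifier: try to disprove a finding; return True only if it survives."""
    text = diff.splitlines()[finding.line - 1]
    if finding.issue_class == "auth" and "os.environ" in text:
        return False  # disproved: the value comes from the environment, not a literal
    return True


diff = (
    "password = os.environ['DB_PASSWORD']\n"
    "for i in range(n):\n"
    "    if i <= len(items):\n"
    "        total += items[i]\n"
)
print(review(diff, [boundary_reviewer, auth_reviewer], verify))
# [Finding(issue_class='boundary', line=3, message='possible off-by-one')]
```

In the example, the auth reviewer’s hit on line 1 is a false positive that the verifier discards, because the password is read from the environment rather than hard-coded; only the boundary finding survives.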

Other players have staked out their own positions. Greptile indexes your entire codebase and achieves an 82% bug catch rate, but it generates the most noise. Qodo scores an F1 of 60.1% and auto-generates tests for untested code paths. CodeRabbit catches fewer bugs (a 44% catch rate) but produces almost no false positives and costs just $24/user/month.
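
F1 is the harmonic mean of precision (the share of reported findings that are real bugs) and recall (the share of real bugs caught, i.e. the catch rate). A small Python sketch, using invented counts rather than any vendor’s benchmark data, shows why a high catch rate alone does not guarantee a high F1 when it comes with a lot of noise.

```python
def precision_recall_f1(true_pos: int, false_pos: int, false_neg: int) -> tuple[float, float, float]:
    """Precision, recall (the "catch rate"), and F1 from counts of review findings."""
    precision = true_pos / (true_pos + false_pos)       # share of reported findings that are real
    recall = true_pos / (true_pos + false_neg)          # share of real bugs that were reported
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean of the two
    return precision, recall, f1


# Invented counts on a hypothetical benchmark of 100 real bugs (not vendor data).
noisy = precision_recall_f1(true_pos=80, false_pos=120, false_neg=20)
quiet = precision_recall_f1(true_pos=45, false_pos=2, false_neg=55)

for name, (p, r, f1) in [("noisy", noisy), ("quiet", quiet)]:
    print(f"{name}: precision={p:.2f} recall={r:.2f} F1={f1:.2f}")
# noisy: precision=0.40 recall=0.80 F1=0.53
# quiet: precision=0.96 recall=0.45 F1=0.61
```

With these made-up numbers, the noisy reviewer catches 80% of bugs yet scores a lower F1 than the quiet one that catches only 45%, because its false positives drag precision down.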

Cloudflare’s deployment shows the scale: 131,246 review runs across 48,095 merge requests in 5,169 repositories during the first 30 days, with a median review time of 3 minutes and 39 seconds.
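
Dividing Cloudflare’s reported totals gives a sense of the per-unit load. This Python sketch only rearranges the figures quoted above; the daily rate assumes runs were spread evenly over the 30 days.

```python
# Figures from Cloudflare's first 30 days, as quoted above.
runs, merge_requests, repos = 131_246, 48_095, 5_169
median_review_seconds = 3 * 60 + 39

print(f"{runs / merge_requests:.2f} review runs per merge request")  # 2.73
print(f"{merge_requests / repos:.1f} merge requests per repository")  # 9.3
print(f"{runs / 30:,.0f} review runs per day")                        # 4,375
print(f"{median_review_seconds} s median review")                     # 219
```

Roughly 2.7 runs per merge request suggests many merge requests were reviewed more than once, plausibly as new commits were pushed, though that is an inference rather than something the figures state.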

The new workflow is clear: AI generates code, AI reviews code, humans approve. AI reviewing AI isn’t optional anymore — it’s necessary.
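
That workflow can be expressed as a merge gate. The Python sketch below is a hypothetical policy, not any specific platform’s branch-protection API: a pull request can merge only after the AI review has finished with no unresolved findings and at least one human has approved.

```python
from dataclasses import dataclass, field


@dataclass
class PullRequest:
    """Hypothetical PR state, as a merge gate might see it."""
    ai_review_done: bool = False
    open_findings: list[str] = field(default_factory=list)  # unresolved AI-review findings
    human_approvals: int = 0


def can_merge(pr: PullRequest, required_approvals: int = 1) -> bool:
    """AI review must finish with no open findings, and a human must still approve."""
    return pr.ai_review_done and not pr.open_findings and pr.human_approvals >= required_approvals


print(can_merge(PullRequest(ai_review_done=True, human_approvals=1)))  # True
print(can_merge(PullRequest(ai_review_done=True, open_findings=["null deref"], human_approvals=1)))  # False
print(can_merge(PullRequest(ai_review_done=True)))  # False: no human approval yet
```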

Navigating the Paradox

The AI Productivity Paradox isn’t going away. Developers will keep using Cursor and Claude Code because they’re genuinely faster at writing code. But speed without stability is just chaos.

The path forward requires three shifts. First, invest in AI code review tools to match your AI coding assistants — you can’t have one without the other. Second, update your productivity metrics to account for AI impact. Add an AI segmentation layer to your DORA metrics or adopt frameworks like DX Core 4 that explicitly track AI’s contribution. Third, focus on sustainable velocity, not raw speed. A team that ships 50% more code with 242% more incidents isn’t more productive — it’s just creating more work.

AI is a tool, not a solution. The teams that win in 2026 aren’t the ones writing the most code. They’re the ones with the best guardrails.