PrismML released Ternary Bonsai on April 16, 2026: a family of AI models that need roughly one-ninth the memory of standard 16-bit models while staying close to them on benchmarks. The flagship 8B model runs in just 1.75GB (versus 16.4GB for a standard 16-bit equivalent) and delivers 27 tokens per second on an iPhone 17 Pro Max. It scores within 5% of Qwen3 8B despite being about 9x smaller. This isn’t theoretical research. These models run on your phone, process data locally, carry no per-inference API fee, and work offline.

How Ternary Weights Work

The breakthrough: ternary weights. Every parameter takes one of three values, {-1, 0, +1}, which encodes approximately 1.58 bits of information per weight (log₂3≈1.58). PrismML didn’t quantize an existing model down to ternary. They trained Ternary Bonsai natively in 1.58-bit from scratch, applying the constraint end-to-end across embeddings, attention layers, MLPs, and the language model head. With weights limited to these values, each weight-activation product in a matrix multiplication reduces to adding or subtracting the activation. No multiplies. Zero weights get skipped entirely.
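The add/subtract idea can be illustrated with a minimal sketch in plain Python. This is not PrismML's kernel: the function name `ternary_matvec` and the toy matrix are hypothetical, and practical ternary schemes usually keep a floating-point scale factor next to the ternary values, which is left out here for clarity.

```python
import math

def ternary_matvec(weights, x):
    """Multiply a ternary matrix by a vector using only adds and subtracts.

    Illustrative only: assumes every weight is exactly -1, 0, or +1 and
    ignores any scale factor a real model would apply afterwards.
    """
    out = []
    for row in weights:
        acc = 0.0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi   # +1: add the activation
            elif w == -1:
                acc -= xi   # -1: subtract the activation
            # 0: skip the activation entirely
        out.append(acc)
    return out

# Toy 2x3 ternary weight matrix and a 3-element activation vector
W = [[1, 0, -1],
     [0, 1, 1]]
x = [2.0, 3.0, 5.0]

y = ternary_matvec(W, x)          # [2 - 5, 3 + 5]
bits_per_weight = math.log2(3)    # information content of a 3-state weight

print(y)
print(f"{bits_per_weight:.3f} bits per weight")
```

The inner loop never multiplies: each weight only selects whether the activation is added, subtracted, or ignored.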

Benchmark Performance

Benchmarks back this up. Ternary Bonsai 8B scores 75.5 across six evaluations (MMLU Redux, MuSR, GSM8K, HumanEval+, IFEval, BFCLv3). In that comparison it trails only Qwen3 8B, which consumes 16.38GB, roughly nine times the memory. Compared to PrismML’s earlier 1-bit Bonsai 8B (1.15GB, score 70.5), the ternary version gains 5 benchmark points for 600MB of extra memory. The zero state matters. PrismML founder Sahin Lale put it bluntly: “Turns out adding 0 helps.”
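The tradeoff is easy to check by hand. The short sketch below only re-does the arithmetic on the figures quoted above; the variable names are illustrative and no new measurements are introduced.

```python
# Figures quoted in the article (sizes in GB, scores across six evaluations)
qwen3_8b_gb = 16.38
ternary_8b_gb, ternary_8b_score = 1.75, 75.5
onebit_8b_gb, onebit_8b_score = 1.15, 70.5

# How much more memory the 16-bit model needs than the ternary one
memory_ratio = qwen3_8b_gb / ternary_8b_gb

# What moving from 1-bit to ternary costs and buys
extra_gb = ternary_8b_gb - onebit_8b_gb
score_gain = ternary_8b_score - onebit_8b_score

print(f"Qwen3 8B uses {memory_ratio:.2f}x the memory of Ternary Bonsai 8B")
print(f"Ternary vs 1-bit: +{score_gain:.1f} points for +{extra_gb * 1000:.0f} MB")
```

The ratio comes out a little above nine, which is where the "9x" shorthand comes from.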

Real-World Use Cases

This unlocks deployment scenarios cloud APIs can’t touch. Healthcare apps can process patient records locally, keeping protected data off third-party servers and easing HIPAA exposure. Financial apps handle sensitive data on-device. Field workers in remote areas run AI without internet. Mobile developers embed 8B models in consumer apps. Privacy becomes a feature, not a limitation. Per-inference costs drop to zero: no API bills, no usage caps, no vendor lock-in. Network latency disappears since inference runs locally.

Performance on Apple Silicon

Apple Silicon handles it well. On M4 Pro, Ternary Bonsai 8B hits 82 tokens per second, roughly 5x faster than 16-bit models. On iPhone 17 Pro Max, 27 tokens per second is fast enough for real-time chat. Apple’s own Foundation Models achieve 30 tokens per second on iPhone 15 Pro, so Ternary Bonsai competes directly while offering open weights and cross-platform deployment.

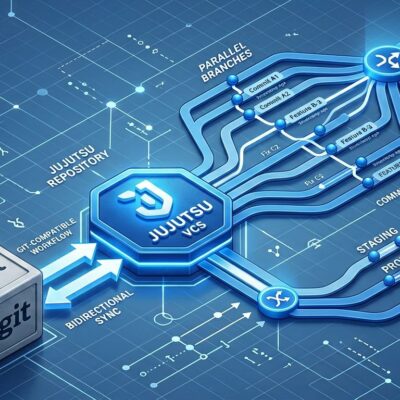

Technology Foundation

The technology builds on Microsoft’s BitNet b1.58 research from 2024, when researchers reported that their 1.58-bit model was comparable to 16-bit Llama 2. PrismML commercialized it. The company emerged from stealth in March 2026 with $16.25 million in seed funding from Khosla Ventures and Cerberus Ventures, plus compute grants from Google. All four founders hold Caltech PhDs. CEO Babak Hassibi is a computer scientist and mathematician at Caltech. The company is built on proprietary Caltech intellectual property.

Cloud vs Edge AI Debate

Ternary Bonsai challenges a core assumption: that AI requires massive cloud infrastructure. Cloud APIs offer cutting-edge performance, but they lock you into vendors, expose your data, charge per token, and add latency. Edge AI historically meant lower quality. Ternary Bonsai narrows that gap: near-cloud quality with data that never leaves the device, no per-token charges, and no network round trip. The tradeoff: about 5% lower benchmark performance for roughly 9x less memory. For privacy-sensitive or cost-constrained applications, that’s a win.

Availability and Future

The models are available on Hugging Face now. Three sizes: 8B (1.75GB), 4B (0.86GB), and 1.7B (0.37GB). Load them with the standard transformers library, run them locally, pay nothing. If specialized ternary hardware emerges (ASICs optimized for add/subtract-only operations), performance could jump 10x-100x beyond current CPU/GPU speeds. Apple could integrate “ternary blocks” into future chips the same way the Neural Engine handles 8-bit and 16-bit operations today.
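As a rough sanity check, the published sizes can be converted into effective bits per weight and compared with the theoretical log₂3 figure. The sketch below makes two assumptions that are not from PrismML: that parameter counts equal the nominal model names (8B, 4B, 1.7B) and that 1GB means 10⁹ bytes. The helper name `effective_bits_per_weight` is hypothetical.

```python
import math

# (nominal parameter count, published size in GB); parameter counts are
# assumed from the model names and may differ from the real checkpoints.
models = {
    "8B": (8.0e9, 1.75),
    "4B": (4.0e9, 0.86),
    "1.7B": (1.7e9, 0.37),
}

def effective_bits_per_weight(params, size_gb):
    """Storage bits spent per parameter, assuming 1 GB = 1e9 bytes."""
    return size_gb * 1e9 * 8 / params

effective = {
    name: effective_bits_per_weight(params, size_gb)
    for name, (params, size_gb) in models.items()
}
ideal = math.log2(3)  # information-theoretic minimum for three states

for name, bits in effective.items():
    print(f"{name}: {bits:.2f} bits/weight (ideal {ideal:.2f})")
```

Under these assumptions all three sizes land a little above 1.7 bits per weight. The gap to 1.58 is plausibly packing overhead and stored scale factors, though the source does not say how the files are laid out.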

Whether ternary models replace cloud APIs depends on your use case. They won’t match GPT-4’s reasoning on complex tasks. But for 80% of applications—chatbots, code completion, content generation, data analysis—they’re good enough. And “good enough” with privacy, zero cost, and offline operation beats “excellent” with vendor lock-in, per-token charges, and mandatory internet.

PrismML proved the math works. Now developers decide if AI independence matters enough to accept a 5% quality discount. For healthcare, finance, and anyone tired of API bills, the answer is probably yes.