AI coding tools are powerful but architecturally blind. They generate technically correct code that can still break production systems, because they lack the structural context human engineers naturally have. A study of 500 software engineering leaders found that 67% now spend more time debugging AI-generated code than they save writing it.

The problem isn’t model capability. It’s context. GitNexus addresses this by building knowledge graphs of entire codebases (every dependency, call chain, cluster, and execution flow) and exposing them to AI agents, so critical architectural relationships are put in front of the agent instead of left for it to discover. It’s trending #1 on GitHub today with 24,300+ stars because it targets the fundamental limitation in AI-assisted development: the context gap.

The AI Context Gap Is Real and Costly

Between one-third and two-thirds of AI-generated code contains errors requiring manual correction, according to research from Bilkent University. JetBrains surveyed 481 experienced developers across 71 countries and found that lack of context understanding, at 14.4%, is the top barrier to AI adoption.

The issue runs deeper than limited context windows. AI coding assistants treat each session as a clean slate—close your IDE or start a new chat, and all context is gone. Even with massive context windows, AI can’t reconstruct the architectural picture from raw text. A codebase isn’t just code; it’s a network of relationships: dependencies, call chains, module interactions, and system behavior patterns built up over time.

For teams maintaining microservices, one context-blind suggestion can break integration contracts between services, duplicate business logic across service boundaries, or introduce dependency conflicts affecting multiple teams. The METR study found that experienced developers using AI tools took 19% longer to complete their tasks, despite predicting beforehand that they would be 24% faster. Perception isn’t reality.

How GitNexus Solves It

GitNexus builds interactive knowledge graphs from GitHub repositories or ZIP files, and with the web UI the whole process runs in your browser. The tool indexes everything: dependencies, function calls, type inheritance, execution flows, and clusters of related code. It precomputes structure using a seven-phase pipeline whose phases include extracting symbols, linking imports across files, tracing call chains, and grouping related code into functional communities with the Leiden clustering algorithm.
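To make the import-linking and call-tracing phases concrete, here is a minimal sketch. It assumes a parser (such as Tree-sitter) has already produced, for each file, its defined symbols, its named imports, and its raw call sites. The `ParsedFile` shape, `buildCallGraph`, and the file names are hypothetical illustrations, not GitNexus's internal API, and Leiden clustering is left out entirely.

```typescript
// Hypothetical per-file parser output (not GitNexus's real schema).
interface ParsedFile {
  path: string;
  symbols: string[];                            // functions/classes defined here
  imports: { name: string; from: string }[];    // named imports and their source file
  calls: { caller: string; callee: string }[];  // raw call sites, by name only
}

interface Edge {
  from: string; // "path#symbol"
  to: string;
  kind: "CALLS";
}

function buildCallGraph(files: ParsedFile[]): Edge[] {
  // Index every defined symbol by file so bare names can be resolved.
  const defined = new Map<string, Set<string>>();
  for (const f of files) defined.set(f.path, new Set(f.symbols));

  const edges: Edge[] = [];
  for (const f of files) {
    // Link imports: keep only imports whose target file really defines the name.
    const importMap = new Map<string, string>();
    for (const imp of f.imports) {
      if (defined.get(imp.from)?.has(imp.name)) importMap.set(imp.name, imp.from);
    }
    // Trace calls: resolve each callee in the same file first, then via imports.
    for (const c of f.calls) {
      const target = defined.get(f.path)!.has(c.callee) ? f.path : importMap.get(c.callee);
      if (target !== undefined) {
        edges.push({ from: `${f.path}#${c.caller}`, to: `${target}#${c.callee}`, kind: "CALLS" });
      }
      // Unresolved callees (library calls, dynamic dispatch) are dropped in this sketch.
    }
  }
  return edges;
}

const files: ParsedFile[] = [
  {
    path: "auth.ts",
    symbols: ["login", "hashPassword"],
    imports: [{ name: "findUser", from: "db.ts" }],
    calls: [
      { caller: "login", callee: "hashPassword" },
      { caller: "login", callee: "findUser" },
      { caller: "login", callee: "console.log" },
    ],
  },
  { path: "db.ts", symbols: ["findUser"], imports: [], calls: [] },
];

const edges = buildCallGraph(files);
for (const e of edges) console.log(`${e.from} -> ${e.to}`);
// auth.ts#login -> auth.ts#hashPassword
// auth.ts#login -> db.ts#findUser
```

The cross-file edge only exists because the import was resolved first, which is why import linking comes before call tracing in a pipeline like this.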

The key innovation is precomputation. GitNexus doesn’t analyze code at query time; it builds the complete architectural map upfront and stores it locally. When AI agents query the graph, they get complete context in a single call rather than a chain of queries that can miss relationships. Because that context is already in the tool response, the agent doesn’t have to go looking for it.
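The difference between precomputed and query-time analysis can be sketched with a toy index: invert the call-edge list once at index time, so a single lookup returns both callers and callees. The `precomputeContext` function and the symbol names are illustrative assumptions; GitNexus's graph also covers imports, inheritance, clusters, and execution flows, which this sketch does not model.

```typescript
type SymbolId = string;

interface SymbolContext {
  callers: SymbolId[];
  callees: SymbolId[];
}

// Index time: walk the edge list once and record both directions per symbol.
function precomputeContext(edges: [SymbolId, SymbolId][]): Map<SymbolId, SymbolContext> {
  const ctx = new Map<SymbolId, SymbolContext>();
  const get = (id: SymbolId): SymbolContext => {
    let c = ctx.get(id);
    if (!c) {
      c = { callers: [], callees: [] };
      ctx.set(id, c);
    }
    return c;
  };
  for (const [from, to] of edges) {
    get(from).callees.push(to);
    get(to).callers.push(from);
  }
  return ctx;
}

const index = precomputeContext([
  ["login", "hashPassword"],
  ["login", "findUser"],
  ["signup", "hashPassword"],
]);

// Query time: one lookup, no follow-up queries to find the other direction.
console.log(JSON.stringify(index.get("hashPassword")));
// {"callers":["login","signup"],"callees":[]}
```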

This is zero-server architecture. Nothing leaves your machine. Whether you use the browser-based web UI or install the CLI, your code stays local. For proprietary codebases and security-conscious teams, this matters.

Two Ways to Use GitNexus

The web UI at gitnexus.vercel.app lets you try GitNexus in 30 seconds. Drop in a GitHub URL or ZIP file, wait for indexing, and explore the interactive knowledge graph. You can ask the built-in AI chat questions about the codebase structure, trace dependencies, and visualize relationships. The browser-based version is limited by memory to around 5,000 files, making it perfect for exploration and smaller projects.

For production use, install the CLI globally:

    npm install -g gitnexus

Run setup once to auto-configure your editors:

    gitnexus setup

Then index a repository:

    gitnexus analyze /path/to/your/repo

Start the MCP server to expose the knowledge graph to all your AI tools:

    gitnexus mcp

The CLI has no size limits, uses persistent storage via LadybugDB, and supports Tree-sitter parsing for JavaScript, TypeScript, Python, Go, Rust, Java, C++, C#, and more. A single MCP server serves all indexed repositories to every AI coding tool you use.

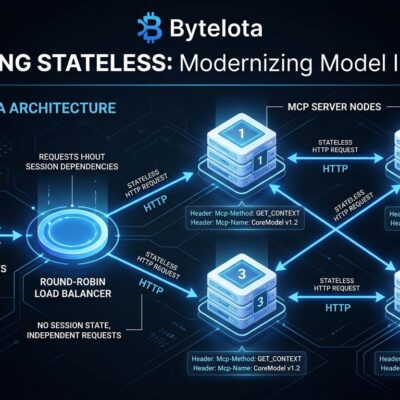

MCP Integration: Why It Works Everywhere

GitNexus uses the Model Context Protocol (MCP), an open standard introduced by Anthropic in November 2024. Think of MCP as USB-C for AI—one connection works with every tool. OpenAI officially adopted MCP in March 2025, followed by Microsoft, Google, and Amazon. As of March 2026, over 1,600 MCP servers are available covering databases, APIs, file systems, and cloud services.
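Under the hood, MCP messages are JSON-RPC 2.0: a client discovers a server's tools with `tools/list` and invokes one with `tools/call`, passing the tool `name` and an `arguments` object. The sketch below only builds those request payloads. It skips the `initialize` handshake and the transport, and the `target` argument for `impact` is a hypothetical name: the real input schema comes from the server's `tools/list` response.

```typescript
// Shape of a JSON-RPC 2.0 request, the message format MCP is built on.
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

let nextId = 1;

// Build an MCP "tools/call" request. The argument names are hypothetical.
function toolCall(name: string, args: Record<string, unknown>): JsonRpcRequest {
  return { jsonrpc: "2.0", id: nextId++, method: "tools/call", params: { name, arguments: args } };
}

// Discover available tools first, then call one by name.
const listReq: JsonRpcRequest = { jsonrpc: "2.0", id: nextId++, method: "tools/list" };
const impactReq = toolCall("impact", { target: "validateUser" });

console.log(JSON.stringify(listReq));
// {"jsonrpc":"2.0","id":1,"method":"tools/list"}
console.log(JSON.stringify(impactReq));
// {"jsonrpc":"2.0","id":2,"method":"tools/call","params":{"name":"impact","arguments":{"target":"validateUser"}}}
```

Because every MCP client speaks this same request shape, one server process can answer Claude Code, Cursor, and the other tools without per-vendor adapters.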

GitNexus exposes 16 MCP tools that AI coding assistants can call, including:

- query: combines BM25 search, semantic search, and reciprocal rank fusion for hybrid search
- context: provides 360-degree symbol views with categorized references
- impact: assesses blast radius with confidence scoring and depth grouping
- detect_changes: maps git diffs to affected processes
- rename: handles coordinated multi-file refactoring
- cypher: allows raw graph queries for advanced use cases
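The hybrid search behind `query` merges ranked lists with reciprocal rank fusion (RRF): each list contributes 1 / (k + rank) for every result it contains, and results are re-sorted by the summed score. This sketch assumes the BM25 and semantic rankings are already computed and uses k = 60, a common default; the constant GitNexus actually uses and the example symbol names are assumptions.

```typescript
// Reciprocal rank fusion: sum 1 / (k + rank) across every ranked list.
function reciprocalRankFusion(rankings: string[][], k = 60): [string, number][] {
  const scores = new Map<string, number>();
  for (const list of rankings) {
    list.forEach((doc, i) => {
      const rank = i + 1; // ranks are 1-based
      scores.set(doc, (scores.get(doc) ?? 0) + 1 / (k + rank));
    });
  }
  // Highest fused score first.
  return Array.from(scores.entries()).sort((a, b) => b[1] - a[1]);
}

// Hypothetical rankings for a query like "validate session token".
const bm25 = ["auth/session.ts#validateToken", "auth/jwt.ts#decode", "api/middleware.ts#requireAuth"];
const semantic = ["api/middleware.ts#requireAuth", "auth/session.ts#validateToken", "auth/refresh.ts#rotate"];

const fused = reciprocalRankFusion([bm25, semantic]);
for (const [doc, score] of fused) console.log(doc, score.toFixed(4));
// auth/session.ts#validateToken 0.0325
// api/middleware.ts#requireAuth 0.0323
// auth/jwt.ts#decode 0.0161
// auth/refresh.ts#rotate 0.0159
```

RRF only looks at ranks, not raw scores, which is why it can combine BM25 scores and embedding similarities that live on entirely different scales. Results that both retrievers agree on rise to the top.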

The integration works with Claude Code, Cursor, Codex, Windsurf, and OpenCode. Claude Code gets the deepest integration with PreToolUse hooks that enrich searches with graph context and PostToolUse hooks that auto-reindex after commits.

Who Should Use GitNexus

If you’re debugging AI-generated code more than you’re writing it, you have a context problem. GitNexus is built for developers using AI coding assistants who need those tools to understand architectural patterns, not just generate syntactically correct snippets.

Teams with large or complex codebases benefit from knowledge graphs during onboarding. Instead of spending weeks reading code, developers can explore the graph, trace execution flows, and understand module relationships visually. The Graph RAG agent can answer questions about “how does authentication work” or “what depends on this API” with actual architectural awareness.
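A question like "what depends on this API" is a reverse-dependency traversal, the same idea as the depth grouping the `impact` tool describes. A minimal sketch, assuming a precomputed map from each symbol to its callers: breadth-first search upstream from the target, recording how many hops away each dependent sits. Confidence scoring is not modeled, and `blastRadius` and the symbol names are hypothetical.

```typescript
// Map from a symbol to the symbols that call it (reverse call edges).
type ReverseGraph = Map<string, string[]>;

// BFS upstream from `target`; depth 1 = direct callers, depth 2 = their callers, etc.
function blastRadius(callers: ReverseGraph, target: string, maxDepth = 3): Map<number, string[]> {
  const byDepth = new Map<number, string[]>();
  const seen = new Set<string>([target]);
  let frontier = [target];
  for (let depth = 1; depth <= maxDepth && frontier.length > 0; depth++) {
    const next: string[] = [];
    for (const sym of frontier) {
      for (const caller of callers.get(sym) ?? []) {
        if (!seen.has(caller)) { // report each dependent once, at its shortest distance
          seen.add(caller);
          next.push(caller);
        }
      }
    }
    if (next.length > 0) byDepth.set(depth, next);
    frontier = next;
  }
  return byDepth;
}

const callers: ReverseGraph = new Map([
  ["validateUser", ["login", "signup"]],
  ["login", ["loginHandler"]],
  ["signup", ["signupHandler"]],
  ["loginHandler", ["router"]],
  ["signupHandler", ["router"]],
]);

const impact = blastRadius(callers, "validateUser");
impact.forEach((syms, depth) => console.log(`depth ${depth}: ${syms.join(", ")}`));
// depth 1: login, signup
// depth 2: loginHandler, signupHandler
// depth 3: router
```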

Privacy-conscious developers who won’t upload proprietary code to cloud services can use GitNexus’s zero-server architecture. Everything runs locally in the CLI or in-browser for the web UI. For microservices architectures, GitNexus supports repository groups that extract API contracts and trace cross-repo execution flows.
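For repository groups, one plausible way to connect services is to match HTTP calls in a consumer repo against route definitions in a provider repo. This is a hypothetical sketch, not GitNexus's contract extractor: the `Route` and `ClientCall` records, `linkContracts`, and the repo names are all assumptions, and it matches only the HTTP method plus a path template (no query strings or base URLs).

```typescript
// Hypothetical extracted records; route paths may contain :param segments.
interface Route { repo: string; method: string; path: string; handler: string }
interface ClientCall { repo: string; method: string; url: string; caller: string }

// True if a concrete path such as /users/42 matches a template such as /users/:id.
function matchesTemplate(template: string, path: string): boolean {
  const t = template.split("/");
  const p = path.split("/");
  if (t.length !== p.length) return false;
  return t.every((seg, i) => (seg.startsWith(":") ? p[i].length > 0 : seg === p[i]));
}

// Link each client call in one repo to the handler it would reach in another.
function linkContracts(routes: Route[], calls: ClientCall[]): string[] {
  return calls.map((call) => {
    const route = routes.find((r) => r.method === call.method && matchesTemplate(r.path, call.url));
    return route
      ? `${call.repo}:${call.caller} -> ${route.repo}:${route.handler}`
      : `${call.repo}:${call.caller} -> (no matching route)`;
  });
}

const routes: Route[] = [
  { repo: "user-service", method: "GET", path: "/users/:id", handler: "getUser" },
  { repo: "user-service", method: "POST", path: "/users", handler: "createUser" },
];
const calls: ClientCall[] = [
  { repo: "web-frontend", method: "GET", url: "/users/42", caller: "loadProfile" },
  { repo: "web-frontend", method: "DELETE", url: "/users/42", caller: "removeAccount" },
];

const linked = linkContracts(routes, calls);
linked.forEach((l) => console.log(l));
// web-frontend:loadProfile -> user-service:getUser
// web-frontend:removeAccount -> (no matching route)
```

An unmatched call like the `DELETE` above is exactly the kind of broken cross-service contract that is easy to miss when each repository is analyzed in isolation.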

The Trends Behind GitNexus

The tool’s trending status (#1 on GitHub today, after gaining 1,174 stars) reflects timing. AI coding assistants went mainstream in 2026, and the context gap became the bottleneck. GitNexus launched in August 2025 and went viral on February 22, 2026, gaining 7,300 stars in days. It now has 24,300+ stars.

The adoption of MCP as the standard protocol means GitNexus isn’t locked to one AI tool vendor. As more coding assistants adopt MCP (and they will, because it’s becoming the standard), GitNexus becomes more valuable through network effects. GraphRAG research suggests that graph-based retrieval can outperform vector-only RAG for code analysis, because structured relationships capture architectural dependencies that text similarity alone misses.

Try It Now

Visit gitnexus.vercel.app to try the web demo. For production use, install the CLI with npm install -g gitnexus and run gitnexus setup to configure your editors. The GitHub repository has full documentation.

GitNexus’s source code is publicly available under a noncommercial license. The project is maintained by Abhigyan Patwari, with commercial deployment available through Akonlabs for enterprise teams that need PR review integration, auto-updating documentation wikis, and automatic re-indexing.

The knowledge graph approach addresses what bigger models and longer context windows can’t: architectural awareness that persists across sessions and provides complete context in single queries. If your AI coding assistant keeps breaking your architecture, the problem isn’t the model. It’s the lack of structure.