AI coding tools reach break-even in just 3 days for most developers. At $100/month and an average developer hourly rate of $62, the math is simple: 1.6 hours of saved time covers the cost. With developers averaging 3.6 hours saved per week, tools like GitHub Copilot, Cursor, and Claude Code pay for themselves before the first week ends. Yet 92.6% adoption hasn’t translated to uniform productivity gains—McKinsey reports 46% faster routine tasks while one controlled study found developers taking 19% longer due to verification overhead. The ROI is real, but understanding the trade-offs separates hype from value.

The 3-Day Break-Even: Why $100/Month Pays for Itself

The ROI calculation is straightforward. The average US developer earns $129,300 annually, or roughly $62 per hour over a 2,080-hour work year. A typical $100/month AI coding subscription therefore requires just 1.6 hours of saved time to break even. DX Research surveyed 121,000 developers across 450+ companies and found users save an average of 3.6 hours per week. Dividing the 1.6 hours needed by the 3.6 hours saved per week gives about 0.44 weeks, or roughly 3 calendar days to payback.
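The arithmetic above can be reproduced with a few lines of Python. This is a minimal sketch, not an official calculator: the function names are illustrative, the 2,080-hour work year (40 hours × 52 weeks) is an assumption, and payback is measured in calendar days on the assumption that savings accrue evenly across the week.

```python
HOURS_PER_YEAR = 2080  # assumption: 40 hours/week * 52 weeks


def hourly_rate(annual_salary: float, hours_per_year: float = HOURS_PER_YEAR) -> float:
    """Convert an annual salary to an approximate hourly rate."""
    return annual_salary / hours_per_year


def break_even_hours(monthly_cost: float, rate: float) -> float:
    """Hours of saved time needed to cover one month of subscription."""
    return monthly_cost / rate


def payback_days(hours_needed: float, hours_saved_per_week: float) -> float:
    """Calendar days until saved time reaches hours_needed (even accrual assumed)."""
    return hours_needed / hours_saved_per_week * 7


rate = hourly_rate(129_300)
needed = break_even_hours(100, rate)
days = payback_days(needed, 3.6)
print(f"${rate:.2f}/hour, {needed:.2f} hours to break even, {days:.1f} calendar days")
# prints: $62.16/hour, 1.61 hours to break even, 3.1 calendar days
```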

However, break-even timelines vary by salary. A $180K senior developer at roughly $90/hour needs about 1.1 hours of saved time, which takes around 2 days. A $240K staff engineer at roughly $120/hour needs about 50 minutes, or around 1.6 days. Even at $60K (about $29/hour), break-even takes roughly 3.5 hours, just under one week of average savings. The annual picture amplifies this: at 3.6 hours saved per week, a $120K developer (about $58/hour) recovers roughly $10,800 in time value per year against $1,200 in subscription costs, a 9× return.
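To rerun these numbers for a different salary, a small helper is enough. The sketch below is illustrative only: the function names and defaults are assumptions, and the default 2,080-hour year can be swapped for 2,000 hours, which is the basis that yields the $90 and $120 hourly rates quoted above.

```python
def break_even(annual_salary, monthly_cost=100.0, hours_saved_per_week=3.6,
               hours_per_year=2080):
    """Return (hourly rate, hours needed per month, calendar days to payback)."""
    rate = annual_salary / hours_per_year
    hours_needed = monthly_cost / rate
    calendar_days = hours_needed / hours_saved_per_week * 7
    return rate, hours_needed, calendar_days


def annual_return_multiple(annual_salary, monthly_cost=100.0,
                           hours_saved_per_week=3.6, hours_per_year=2080):
    """Value of time saved over 52 weeks divided by 12 months of subscription."""
    rate = annual_salary / hours_per_year
    saved_value = rate * hours_saved_per_week * 52
    return saved_value / (monthly_cost * 12)


for salary in (60_000, 120_000, 180_000, 240_000):
    rate, hours, days = break_even(salary)
    multiple = annual_return_multiple(salary)
    print(f"${salary:,}: ${rate:.0f}/h, {hours:.2f} h needed, "
          f"{days:.1f} days, {multiple:.1f}x annual return")
```

Keeping hours saved per week as an explicit parameter makes it easy to test how sensitive the return is to that single survey figure.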

92% Adoption Doesn’t Mean 92% Productivity Gains

Adoption rates suggest universal acceptance: 92.6% of developers use AI coding assistants at least monthly, 75% use them weekly, and 51% rely on them daily. Moreover, AI-authored code now constitutes 26.9% of all production code, up from 22% last quarter. Yet productivity outcomes vary wildly depending on how developers integrate these tools into their workflows.

McKinsey studied 4,500 developers across 150 enterprises and found AI tools reduce routine coding tasks by 46% on average, with code review cycles shortened by 35%. GitHub’s controlled experiment showed developers using Copilot completed an HTTP server task in 1 hour 11 minutes versus 2 hours 41 minutes for the control group—55% faster. On the optimistic end, these numbers validate vendor claims.
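"55% faster" in the GitHub experiment reads most naturally as a reduction in completion time, which is not the same number as a throughput multiplier. A short sketch (function names are illustrative) shows both views of the same two durations:

```python
def time_reduction(control_minutes: float, treated_minutes: float) -> float:
    """Fraction of the control group's time that the treated group saved."""
    return 1 - treated_minutes / control_minutes


def speedup(control_minutes: float, treated_minutes: float) -> float:
    """How many tasks the treated group finishes per control-group task."""
    return control_minutes / treated_minutes


control = 2 * 60 + 41   # 2 hours 41 minutes
copilot = 1 * 60 + 11   # 1 hour 11 minutes
print(f"{time_reduction(control, copilot):.1%} less time, "
      f"{speedup(control, copilot):.2f}x throughput")
# prints: 55.9% less time, 2.27x throughput
```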

The pessimistic side tells a different story. METR’s controlled study with experienced developers found participants took 19% longer to complete tasks with AI assistance. The culprit: verification overhead. Developers spent extra time checking, debugging, and fixing AI-generated code, negating generation speed gains. One CTO summarized the paradox bluntly: “93% of developers use AI, but productivity is still 10%.”

The divide comes down to usage patterns. DX Research found daily users merge 60% more pull requests compared to occasional users. Frequency matters—developers who treat AI as an integrated workflow tool see substantial gains, while those who use it sporadically see minimal improvement. Adoption alone doesn’t create productivity; disciplined integration does.

Fast Code Generation Requires Slow, Careful Review

AI tools generate code quickly, but verification discipline determines net productivity. GitHub Copilot users completed tasks 55% faster in controlled settings, but that speed advantage evaporates without proper validation. Studies show projects with inadequately reviewed AI code exhibit 23% higher bug density. Furthermore, skipping verification increases code review time by 12% downstream—you don’t save time, you just move it later in the cycle.

Accenture reported an 84% increase in successful builds when developers applied proper validation frameworks. The winning approach combines manual inspection, sandbox testing, peer review, and automated checks against standards like OWASP and NIST. Layered validation catches false positives and hallucinations before they reach production. The process adds time up front, but it costs less than the higher bug density and longer review cycles that follow when AI output is treated as production-ready.

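The trade-off is unavoidable: fast generation demands disciplined verification. Developers who pair generation with efficient, layered review are positioned for the 46-55% faster end of the range. Developers who trust AI output blindly, or who verify it ad hoc after the fact, risk drifting toward the 19% slower outcome, where checking and fixing consumes everything generation saved. The tool doesn’t determine ROI; your validation discipline does.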
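One way to reason about this trade-off is a toy model in which AI shortens the writing phase and review adds time back. Everything here is an assumption for illustration: the function, the 10-hour baseline, and the review-hour values are made up to show how overhead can flip the sign, and none of them are measurements from the studies cited.

```python
def net_time_change(baseline_hours: float, generation_speedup: float,
                    review_hours: float) -> float:
    """Fractional change in task time vs. baseline; negative means faster.

    generation_speedup is the share of baseline time that AI generation removes;
    review_hours is the extra time spent checking and fixing the output.
    """
    with_ai = baseline_hours * (1 - generation_speedup) + review_hours
    return with_ai / baseline_hours - 1


# Hypothetical scenarios for a 10-hour task with a 55% generation speedup.
light_review = net_time_change(10, 0.55, review_hours=1.0)   # about -0.45
heavy_rework = net_time_change(10, 0.55, review_hours=7.4)   # about +0.19
print(f"light review: {light_review:+.0%}, heavy rework: {heavy_rework:+.0%}")
```

The point of the model is only that the same generation speedup can produce a net gain or a net loss depending on how much correction time follows.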

From $100/Month to $4,000/Month: Team Economics

Solo developer pricing looks reasonable at $100/month, but per-seat costs add up quickly. A 10-developer team faces anywhere from $190 to $4,000/month depending on tool and tier. GitHub Copilot Business at $19/seat costs $190/month for 10 developers. Cursor Teams at $40/seat runs $400/month. Claude Code mid-tier subscriptions can hit $1,000/month for the same team size, and the $4,000 ceiling works out to $400 per seat. Annualized, that is $2,280-$48,000 for 10 seats.

Enterprise scale amplifies costs dramatically. At list prices, a 500-developer organization pays $114,000 annually for GitHub Copilot Business ($19/seat), $234,000 for GitHub Copilot Enterprise ($39/seat), $240,000 for Cursor Teams at $40/seat ($192,000 corresponds to an effective $32/seat), or $234,000+ for Tabnine Enterprise. These are base subscription costs. Implementation overhead adds another $50,000-$250,000 annually for training, governance, monitoring, and enablement infrastructure.
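Per-seat totals are easy to get wrong by a tier, so it helps to compute them directly. The sketch below uses only the per-seat monthly prices quoted in this section; the function name and the dictionary layout are illustrative assumptions.

```python
def annual_seat_cost(seats: int, price_per_seat_month: float) -> float:
    """List-price annual cost: seats * monthly per-seat price * 12 months."""
    return seats * price_per_seat_month * 12


# Monthly per-seat prices as quoted in the text (USD).
prices = {
    "GitHub Copilot Business": 19,
    "GitHub Copilot Enterprise": 39,
    "Cursor Teams": 40,
}

for seats in (10, 500):
    for tool, price in prices.items():
        print(f"{seats:>3} seats, {tool}: ${annual_seat_cost(seats, price):,.0f}/year")
```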

Budget planning also requires understanding how list prices differ from what organizations actually pay. Many organizations negotiate volume discounts that reduce list prices by 20-40% for teams exceeding 100 seats. Annual contracts typically save 10-20% compared to monthly billing. For enterprises, custom pricing tiers often include features not reflected in public documentation. The listed $100/month solo rate doesn’t predict team costs, so run the per-seat math early and negotiate aggressively.
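The discount ranges above can be turned into a rough budget band. This sketch assumes the volume and annual-billing discounts stack multiplicatively, which is a simplifying assumption since real contracts vary; the function name and the $234,000 example list price (500 seats at $39) are used purely for illustration.

```python
def discounted_annual_cost(list_annual: float, volume_discount: float,
                           annual_billing_discount: float) -> float:
    """Apply two discounts in sequence (assumed to stack multiplicatively)."""
    return list_annual * (1 - volume_discount) * (1 - annual_billing_discount)


list_price = 500 * 39 * 12  # 500 seats at $39/seat/month
best_case = discounted_annual_cost(list_price, 0.40, 0.20)
worst_case = discounted_annual_cost(list_price, 0.20, 0.10)
print(f"list ${list_price:,}, negotiated range ${best_case:,.0f}-${worst_case:,.0f}")
```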

When AI Coding Tools ROI Works (And When It Doesn’t)

ROI certainty depends on three factors: developer hourly rate, task characteristics, and verification discipline. For developers earning $60K+ working on pattern-based tasks, ROI is nearly guaranteed. AI tools excel at boilerplate code—APIs, CRUD operations, tests, and documentation. McKinsey’s 46% reduction in routine coding tasks reflects this strength. Automated testing sees 35-40% productivity improvements because AI generates comprehensive test suites that humans find tedious.

ROI becomes uncertain for mission-critical code with zero error tolerance, novel algorithms where AI lacks training data, and highly regulated environments where compliance overhead outweighs productivity gains. Junior developers learning fundamentals face a different calculus—over-reliance on AI risks copy-paste without comprehension, creating technical debt that compounds over time.

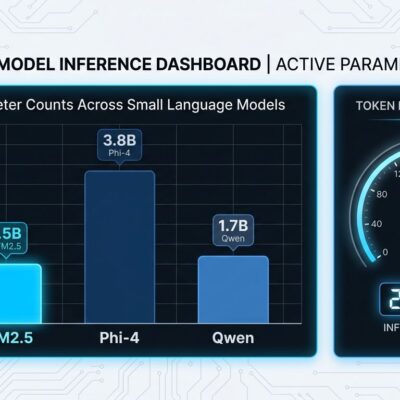

Tool choice also matters. GitHub Copilot dominates autocomplete with broad IDE support and 4.7 million paid subscribers. Cursor achieves 38ms p99 response times with 92% accuracy, optimized for real-time interactive coding. Claude Code’s agent-first approach suits complex discrete tasks where you describe intent and review results. A common pattern emerging in 2026: use Cursor for day-to-day editing and Claude Code for larger, autonomous tasks. Match tool philosophy to workflow, not just feature lists.

Key Takeaways

- For developers earning $60K+, break-even arrives within the first week at 3.6 hours saved per week (about 3 days at the $129,300 average salary), and annual ROI reaches roughly 9× for a $120K developer at that usage rate

- Daily users merge 60% more PRs compared to occasional users—frequency and workflow integration matter more than tool choice

- Verification is non-negotiable: projects with inadequately reviewed AI code show 23% higher bug density, which can erase the productivity gains entirely

- Budget for scale: $100/month solo becomes $1,000-$4,000/month for 10 seats, with enterprise costs for 500 seats hitting $114K-$234K+ annually before volume discounts

- Measure actual productivity through PR throughput and cycle time, not adoption rates—92.6% adoption doesn’t guarantee uniform gains
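As a starting point for the last takeaway, PR throughput and cycle time can be computed from opened and merged timestamps. This is a minimal sketch under stated assumptions: the `PullRequest` record and its field names are hypothetical and would need to be mapped to whatever your Git host exports, and the sample data is invented.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class PullRequest:
    opened: datetime   # hypothetical field: when the PR was opened
    merged: datetime   # hypothetical field: when the PR was merged


def weekly_throughput(prs: list[PullRequest], weeks: float) -> float:
    """Merged PRs per week over the observation window."""
    return len(prs) / weeks


def median_cycle_hours(prs: list[PullRequest]) -> float:
    """Median hours from open to merge."""
    return median((pr.merged - pr.opened).total_seconds() / 3600 for pr in prs)


# Invented sample: three PRs merged over a two-week window.
start = datetime(2026, 1, 5, 9, 0)
sample = [
    PullRequest(start, start + timedelta(hours=4)),
    PullRequest(start, start + timedelta(hours=10)),
    PullRequest(start, start + timedelta(hours=30)),
]
print(weekly_throughput(sample, weeks=2), median_cycle_hours(sample))
```

Comparing these two numbers before and after a rollout, for the same team and similar work, gives a more direct signal than adoption percentages.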

The ROI case for AI coding tools is clear for most professional developers, but it’s not automatic. Disciplined verification, daily usage habits, and realistic cost planning separate value from waste. If you’re earning $60K+ and working on pattern-based code, the payback math works within days. Skip verification or use tools sporadically, and the gains can disappear or even reverse into the 19% slowdown.