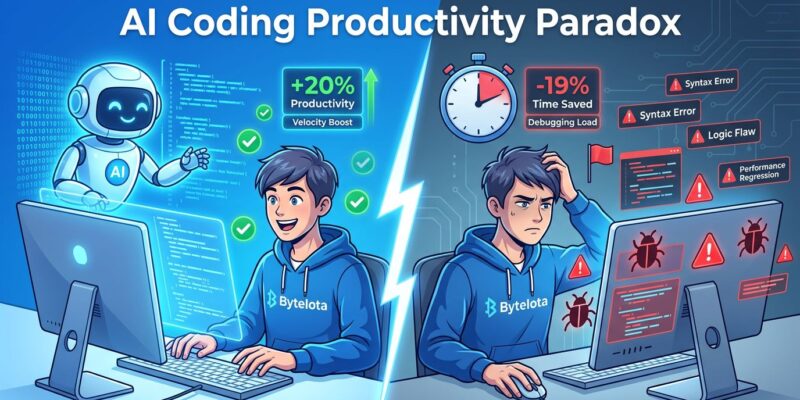

Developers using AI coding assistants believe they’re 20% faster. A randomized controlled trial published by METR in July 2025, covering 16 experienced open-source developers, measured them as 19% slower. That’s a 39-percentage-point gap between perception and reality, and it’s not an outlier. Faros AI’s 2025 analysis of 10,000+ developers confirms the paradox: individual output skyrockets (98% more pull requests merged, 21% more tasks completed), but PR review times increase 91% and team delivery stalls. Welcome to the AI productivity paradox of 2026, where typing faster doesn’t mean shipping faster.

The Research Is Clear: Perception Doesn’t Match Reality

METR’s randomized controlled trial recruited 16 experienced developers from large open-source repositories averaging 22,000+ stars and 1 million+ lines of code. These weren’t junior developers fumbling with unfamiliar syntax; they were maintainers who knew their codebases intimately. Together they worked through 246 real GitHub issues, each randomly assigned to either allow AI tools (Cursor Pro with Claude 3.5/3.7 Sonnet) or forbid them.

The result? When allowed to use AI, developers took 19% longer to complete tasks. The kicker: after the study, they estimated that AI had made them 20% faster. The perception-reality gap isn’t a rounding error. It’s a fundamental mismatch between how productivity feels during the typing phase and what end-to-end measurement shows.

Faros AI’s analysis of 10,000+ developers across 1,255 teams found the same pattern at scale. Individual metrics looked great: 21% more tasks completed, 98% more PRs merged, 47% more PRs handled per day. But team-level delivery? Unchanged. DORA metrics showed no improvement. The productivity gains evaporated somewhere between “code written” and “feature shipped.”

The Review Bottleneck Eats All the Gains

Here’s where AI’s speed boost dies: code review. Faros AI found that PR review times increased 91% when teams adopted AI coding tools. Meanwhile, PR sizes ballooned by 154%—more code to review, slower reviews per PR, and 98% more PRs flooding the queue. The math is brutal.

Before AI, a team of 10 developers might generate 50 PRs per week, each taking 30 minutes to review. That’s 25 hours of review load. After AI adoption? 99 PRs per week (98% increase), each taking 57 minutes (91% longer). The review load jumps to 94 hours per week. Consequently, review capacity becomes the limiting factor, and deployment velocity stalls despite individuals feeling productive.
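The review-load arithmetic above can be reproduced in a few lines. This is a minimal sketch: the team size, the 50-PR baseline, and the 30-minute review time are the same illustrative assumptions as in the paragraph, and only the 98% and 91% growth multipliers come from the Faros AI figures.

```python
def weekly_review_hours(prs_per_week: float, minutes_per_review: float) -> float:
    """Reviewer hours per week needed to review every PR once."""
    return prs_per_week * minutes_per_review / 60


# Illustrative baseline from the text: a 10-person team, 50 PRs/week, 30 min per review.
BASE_PRS = 50
BASE_MINUTES = 30

# Growth multipliers from Faros AI: +98% PRs, +91% review time per PR.
PR_GROWTH = 1.98
REVIEW_TIME_GROWTH = 1.91

before = weekly_review_hours(BASE_PRS, BASE_MINUTES)
after_prs = round(BASE_PRS * PR_GROWTH)            # 99 PRs per week
after_minutes = BASE_MINUTES * REVIEW_TIME_GROWTH  # about 57.3 minutes each
after = weekly_review_hours(after_prs, after_minutes)

print(f"before: {before:.1f} h/week")
print(f"after:  {after:.1f} h/week")
print(f"growth: {after / before:.2f}x")
```

With the unrounded 57.3-minute review time this gives about 94.5 hours against 25.0 before, a 3.78x increase; the text’s 94 hours comes from rounding the review time to 57 minutes. Either way, a reviewer pool sized for 25 hours a week cannot absorb the new load.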

Moreover, code quality degrades. Faros AI reported a 9% increase in bugs per developer. Code churn—the percentage of code discarded within two weeks—has doubled since 2021, according to GitClear’s analysis of 153 million lines of changed code. Fast code that gets rewritten isn’t productive. It’s wasted effort dressed up as velocity.
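The churn metric described above is a ratio: lines rewritten or deleted within two weeks of being written, divided by all lines written. A minimal sketch of that calculation, assuming per-line history has already been extracted from version control (that step is omitted). The `AuthoredLine` record and the inclusive 14-day boundary are illustrative assumptions, not GitClear’s implementation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

CHURN_WINDOW = timedelta(days=14)


@dataclass
class AuthoredLine:
    """One added line of code (hypothetical record shape)."""
    authored_at: datetime
    # When the line was next rewritten or deleted; None if it is still intact.
    replaced_at: Optional[datetime] = None


def churn_rate(lines: list[AuthoredLine], window: timedelta = CHURN_WINDOW) -> float:
    """Fraction of authored lines rewritten or deleted within `window` of being written."""
    if not lines:
        return 0.0
    churned = sum(
        1
        for line in lines
        if line.replaced_at is not None and line.replaced_at - line.authored_at <= window
    )
    return churned / len(lines)


t0 = datetime(2025, 1, 1)
sample = [
    AuthoredLine(t0, t0 + timedelta(days=3)),   # rewritten quickly: churn
    AuthoredLine(t0, t0 + timedelta(days=30)),  # rewritten much later: not churn
    AuthoredLine(t0),                           # still intact
    AuthoredLine(t0, t0 + timedelta(days=14)),  # exactly on the boundary: churn
]
print(churn_rate(sample))
```

Tracking this ratio per month makes the “fast code that gets rewritten” effect visible as a trend rather than an anecdote.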

Why Senior Developers Suffer Most

The paradox hits experienced developers hardest. Seniors already have efficient coding workflows—they know the patterns, the edge cases, the architectural constraints. AI doesn’t accelerate them during the manual coding phase; it adds friction through validation overhead.

Research shows senior developers spend 4.3 minutes reviewing each AI suggestion, compared to juniors’ 1.2 minutes. They’re not slower because they’re bad at using AI—they’re slower because they actually understand what correct code looks like. They catch the architectural mismatches, the missing edge cases, the security holes that AI misses. Thirty percent of senior developers report editing AI output so extensively that it erases any time savings.
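The validation overhead can be framed as a per-suggestion break-even: a suggestion only pays off if the typing time it saves exceeds the time spent reviewing and editing it. A minimal sketch using the 4.3- and 1.2-minute review figures quoted above. The 3-minute typing saving is a made-up illustrative number, not a measurement, and seniors, who already type efficiently, may save less than that.

```python
# Minutes spent reviewing each AI suggestion, as quoted in the text.
REVIEW_MINUTES = {"senior": 4.3, "junior": 1.2}

# Hypothetical: typing time one accepted suggestion saves. Not a measured figure.
TYPING_MINUTES_SAVED = 3.0


def net_minutes_saved(typing_saved: float, review: float, editing: float = 0.0) -> float:
    """Net minutes saved by one suggestion after its validation cost.

    A negative result means the suggestion cost more time than it saved.
    """
    return typing_saved - review - editing


for level, review in REVIEW_MINUTES.items():
    net = net_minutes_saved(TYPING_MINUTES_SAVED, review)
    print(f"{level}: {net:+.1f} min per suggestion")
```

Under these assumptions a junior nets about +1.8 minutes per suggestion while a senior loses about 1.3, and any extensive editing (the `editing` argument) pushes the senior figure further negative.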

Sonar’s 2026 State of Code survey quantified the trust gap: 96% of developers don’t fully trust the accuracy of AI-generated code, yet only 48% always verify it before committing. That’s a dangerous verification deficit. Furthermore, teams now spend 24% of their work week (about 1.2 days out of five) checking, fixing, and validating AI output. For seniors responsible for production systems, this validation tax is non-negotiable.

The Quality Tax: Security and Code Churn

AI-generated code doesn’t just feel fast—it’s also riskier. Veracode’s 2025 GenAI Code Security Report tested over 100 LLMs across 4 languages and found AI code contains 2.74 times more vulnerabilities than human-written code. The failure rate on secure coding benchmarks hit 45%. Common weaknesses include insufficient randomness (CWE-330), code injection (CWE-94), and cross-site scripting (CWE-79, with an 86% failure rate).
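Two of the weakness classes named above are easy to illustrate in Python. This is a minimal sketch with hypothetical function names, not examples taken from the Veracode report: each unsafe pattern sits next to a standard-library alternative.

```python
import ast
import random
import secrets

ALPHABET = "abcdefghijklmnopqrstuvwxyz0123456789"


# CWE-330, insufficient randomness: `random` is not cryptographically secure;
# its internal state can be recovered from observed outputs. Do not use for tokens.
def weak_reset_token() -> str:
    return "".join(random.choices(ALPHABET, k=16))


# Safer: `secrets` draws from the operating system's CSPRNG.
def strong_reset_token() -> str:
    return secrets.token_urlsafe(32)  # 32 random bytes, base64url-encoded


# CWE-94, code injection: eval() runs any expression the caller supplies,
# e.g. "__import__('os').system(...)". Do not use on untrusted input.
def parse_settings_unsafe(text: str):
    return eval(text)


# Safer: ast.literal_eval accepts only Python literals (numbers, strings,
# tuples, lists, dicts, sets, booleans, None) and rejects anything else.
def parse_settings_safe(text: str):
    return ast.literal_eval(text)
```

Both unsafe versions look perfectly reasonable at a glance, which is exactly why they slip through a hurried review.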

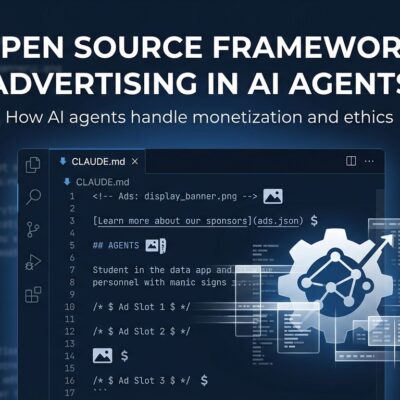

Here’s a typical AI autocomplete mistake:

```python
# AI autocomplete produces:
def display_user_input(request):
    user_name = request.GET['name']
    return f"<h1>Hello {user_name}</h1>"  # UNSANITIZED - XSS vulnerability

# Senior dev must catch the missing HTML escaping
# Time cost: 3-5 minutes to identify, test, and fix
# vs 30 seconds to write it securely from scratch
```

For experienced developers, the time spent fixing these issues often exceeds the time saved during initial generation. The paradox compounds: AI accelerates typing but creates downstream work that makes the overall process slower.
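For comparison, here is a minimal sketch of the secure version of the autocomplete snippet. It swaps the Django `request` for a plain dict (an assumption made so the example runs without a framework) and escapes with the standard library’s `html.escape`. In an actual Django view, rendering through a template, which auto-escapes variables by default, or calling `django.utils.html.escape` achieves the same thing.

```python
from html import escape


def display_user_input(query_params: dict) -> str:
    """Greet the user with the supplied name HTML-escaped.

    `query_params` is a plain dict standing in for a framework's query mapping
    such as Django's request.GET; a missing name becomes an empty string.
    """
    user_name = query_params.get("name", "")
    # escape() turns &, <, > and both quote characters into HTML entities,
    # so injected markup is displayed as text instead of being executed.
    return f"<h1>Hello {escape(user_name)}</h1>"


print(display_user_input({"name": "<script>alert(1)</script>"}))
```

The fix is one function call, which is the point of the comparison: writing it correctly up front is cheap, while finding its absence in review is not.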

What Developers Should Actually Do

The data doesn’t say “never use AI”—it says “use AI selectively.” The tools work well for boilerplate generation, test scaffolding, documentation, and unfamiliar domains. They struggle with core business logic, security-critical paths, performance-sensitive code, and architectural decisions.
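The selective-use advice above can be written down as a simple path-based policy, for example as a hint in a pre-review check. A minimal sketch: `ai_policy` and all glob patterns are hypothetical placeholders, not a standard tool or convention. Note that `fnmatch`’s `*` also matches `/`, so a pattern like `src/auth/*` covers nested files.

```python
from fnmatch import fnmatch

# Hypothetical team policy; adapt the patterns to your repository layout.
HUMAN_FIRST = ["src/billing/*", "src/auth/*", "*/crypto/*", "src/core/*"]
AI_FRIENDLY = ["tests/*", "docs/*", "*.md", "scripts/*"]


def ai_policy(path: str) -> str:
    """Return 'human-first', 'ai-ok', or 'case-by-case' for a file path.

    Human-first patterns are checked first, so security-critical and core
    business logic wins when a path matches both lists.
    """
    if any(fnmatch(path, pattern) for pattern in HUMAN_FIRST):
        return "human-first"
    if any(fnmatch(path, pattern) for pattern in AI_FRIENDLY):
        return "ai-ok"
    return "case-by-case"


for path in ["src/auth/session.py", "tests/test_orders.py", "src/ui/button.py"]:
    print(path, "->", ai_policy(path))
```

Checking the restrictive list first is a deliberate choice: a README inside an auth module still gets human-first treatment.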

The key is measurement. Don’t track output metrics like PRs created or lines of code written. Instead, track outcomes: deployment frequency, cycle time from commit to production, mean time to recovery. If review time is increasing, code churn is rising, or quality metrics are declining despite more PRs, AI isn’t helping—it’s creating a bottleneck.
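The outcome metrics named above can be computed from deployment records rather than from PR counts. A minimal sketch, assuming a hypothetical `Deployment` record exported from CI/CD logs and an incident tracker; as a simplification, recovery time is measured from the failing deploy rather than from incident detection.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deploy."""
    committed_at: datetime                  # earliest commit included in this deploy
    deployed_at: datetime                   # when it reached production
    caused_incident: bool = False
    restored_at: Optional[datetime] = None  # when service recovered, if it failed


def deployment_frequency(deploys: list[Deployment], days: int) -> float:
    """Average production deploys per day over an observation window."""
    return len(deploys) / days


def median_cycle_time(deploys: list[Deployment]) -> timedelta:
    """Median time from commit to production."""
    return median(d.deployed_at - d.committed_at for d in deploys)


def mean_time_to_recovery(deploys: list[Deployment]) -> Optional[timedelta]:
    """Mean time from a failing deploy to restored service; None if none failed."""
    outages = [
        d.restored_at - d.deployed_at
        for d in deploys
        if d.caused_incident and d.restored_at is not None
    ]
    if not outages:
        return None
    return sum(outages, timedelta()) / len(outages)


start = datetime(2026, 1, 5, 9, 0)
history = [
    Deployment(start, start + timedelta(hours=20)),
    Deployment(start, start + timedelta(hours=30), caused_incident=True,
               restored_at=start + timedelta(hours=32)),
    Deployment(start, start + timedelta(hours=50)),
]

print(f"{deployment_frequency(history, days=7):.2f} deploys/day")
print("median cycle time:", median_cycle_time(history))
print("MTTR:", mean_time_to_recovery(history))
```

Trended week over week next to PR volume and review time, these numbers show whether extra PRs are turning into shipped changes or just a longer queue.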

Context engineering helps. Research shows that providing AI with architectural context upfront—coding standards, design patterns, test templates—improves performance by 29-39%. The problem isn’t AI autocomplete; it’s vibe coding without structure. Precision prompting with proper context reduces the validation overhead that drives the paradox.
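In practice, context engineering means assembling project conventions into the prompt before the task itself. A minimal sketch, assuming a team keeps its standards, patterns, and test template as plain strings; `ProjectContext` and `build_prompt` are hypothetical names, and the call to an actual model is left out because it depends on the assistant in use. Nothing here reproduces the research behind the 29-39% figure.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectContext:
    """Hypothetical bundle of architectural context handed to the assistant."""
    coding_standards: list[str] = field(default_factory=list)
    design_patterns: list[str] = field(default_factory=list)
    test_template: str = ""


def build_prompt(task: str, ctx: ProjectContext) -> str:
    """Prepend project conventions so the model sees them before the request."""
    sections = []
    if ctx.coding_standards:
        sections.append("Coding standards:\n" + "\n".join(f"- {s}" for s in ctx.coding_standards))
    if ctx.design_patterns:
        sections.append("Design patterns in use:\n" + "\n".join(f"- {p}" for p in ctx.design_patterns))
    if ctx.test_template:
        sections.append("Write tests following this template:\n" + ctx.test_template)
    sections.append("Task:\n" + task)
    return "\n\n".join(sections)


ctx = ProjectContext(
    coding_standards=["Type-annotate all public functions", "No raw SQL outside the repository layer"],
    design_patterns=["Repository pattern for data access"],
    test_template="def test_<behavior>():\n    # arrange, act, assert\n    ...",
)
print(build_prompt("Add pagination to the orders endpoint", ctx))
```

Keeping the context in one versioned structure means every developer sends the same conventions, instead of each one improvising them per prompt.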

The Industry Needs Honesty, Not Hype

Ninety-three percent of developers use AI coding tools in 2026. That’s not proof the tools deliver productivity—it’s proof the hype cycle works. When experienced developers are measurably slower with AI despite believing they’re faster, we have a perception problem the industry refuses to address.