AI coding tools advertised at $10-$20 per month are costing engineering teams $200-$600 per engineer per month in practice, creating a budget crisis as organizations discover their 2026 AI tool spending is 10-30x higher than planned. With 85% of developers now using these tools, the gap between sticker price and actual cost isn’t a minor accounting issue: it’s blowing up annual budgets.

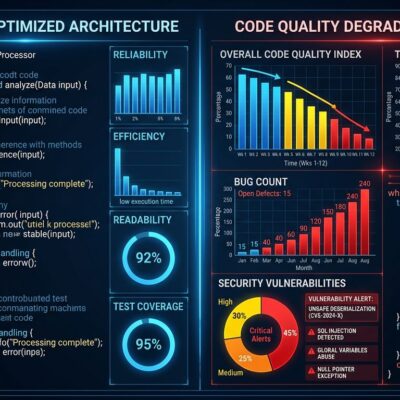

The disconnect stems from a three-layer cost structure most teams aren’t tracking: visible costs (seat licenses), semi-visible costs (token consumption), and hidden costs (quality overhead). That third layer—code review time, debugging AI-generated code, and rework cycles—makes up 60-80% of the real expense.

Why Your $20/Month AI Tool Actually Costs $600

The $200-$600/month reality breaks down into three layers. Visible costs (seat licenses) represent just 6-15% of total spending. Semi-visible costs (tokens and API charges) add another 10-30%. The killer? Hidden costs—code review overhead, debugging time, security review—consume 60-80% of the real budget.

Here’s a real example: A 50-person team pays $1,000/month for Cursor Pro seats ($20 × 50). Token overages add $7,500/month when developers use premium models. Code review overhead adds $18,000/month as teams spend 60-70% more time reviewing AI-generated code. Total cost: $26,500/month ($530/engineer), more than 26 times the advertised price.
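As a sanity check, the sketch below sums only the three line items quoted in the example and reports each layer's share. The names (`costs`, `summarize`) are illustrative, and any cost not itemized above, such as security review, is left out.

```python
# Monthly figures quoted in the example above (50-engineer team), in USD.
TEAM_SIZE = 50
SEAT_PRICE = 20  # advertised per-seat price

costs = {
    "seat_licenses": SEAT_PRICE * TEAM_SIZE,  # visible layer
    "token_overages": 7_500,                  # semi-visible layer
    "review_overhead": 18_000,                # hidden layer
}


def summarize(costs, team_size):
    """Return the total, the per-engineer cost, and each layer's share of the total."""
    total = sum(costs.values())
    shares = {name: amount / total for name, amount in costs.items()}
    return total, total / team_size, shares


total, per_engineer, shares = summarize(costs, TEAM_SIZE)
print(f"total: ${total:,}/month, per engineer: ${per_engineer:,.0f}/month")
for name, share in shares.items():
    print(f"{name}: {share:.0%}")
```

With these inputs the three items come to $26,500/month, or $530 per engineer, with review overhead making up roughly two thirds of the total.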

According to Fordel Studios research, “the actual fully loaded monthly cost per engineer ranges from $310-750” versus the “$20-40 per month” managers typically quote when budgeting. Larridin’s Developer Productivity Benchmarks warn that “ROI calculations that use only the seat license fee as the cost denominator produce misleadingly high results,” with organizations overstating ROI by 20-40%.

Pricing Models Changed 3 Times in 18 Months

AI coding tool pricing models evolved from request-based (early 2025) to credit-based (mid-2025) to quota-based (Q1 2026), with some vendors overhauling pricing twice in six months. That churn made budgeting nearly impossible.

Windsurf switched from credits to quotas on March 19, 2026, raising Pro from $15 to $20/month—its second pricing overhaul in six months. GitHub Copilot introduced tiered pricing (Pro at $10/month, Pro+ at $39/month), then paused new signups entirely on April 20, 2026, citing agentic workflow costs.

The credit multiplier problem compounds the chaos. Premium models like Claude Opus 4.6 and GPT-5.4 consume 5-20x the credits of a standard request. A $20/month Cursor Pro credit pool covers 225 Sonnet requests but only about 11-45 premium model requests (225 divided by 20 and by 5). Developers switching models mid-month burn through credits without realizing they’ve triggered pay-as-you-go billing.
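The multiplier arithmetic can be sketched in a few lines. This assumes a simple linear model in which one standard request costs one credit and a premium request costs `multiplier` credits; actual vendor accounting may differ, and the function names are hypothetical.

```python
import math

BASE_REQUESTS = 225  # standard-model requests the $20 pool covers (figure from the text)


def premium_requests(base_requests, multiplier):
    """Requests the same pool covers when each one costs `multiplier` standard credits."""
    return math.floor(base_requests / multiplier)


def remaining_credits(base_requests, usage):
    """Credits left after a mix of (request_count, multiplier) usage.

    A negative result means the pool is exhausted and overage billing would apply.
    """
    spent = sum(count * multiplier for count, multiplier in usage)
    return base_requests - spent


for multiplier in (1, 5, 20):
    print(f"{multiplier}x multiplier -> {premium_requests(BASE_REQUESTS, multiplier)} requests")

# Hypothetical month: 100 standard requests plus 10 requests at a 20x multiplier.
print(remaining_credits(BASE_REQUESTS, [(100, 1), (10, 20)]))
```

In the hypothetical month at the end, ten premium requests cost twice as many credits as a hundred standard ones, which is how a developer can slip into overage without noticing.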

Top Quartile Gets 4-6x ROI. Average Teams Get 2.5x.

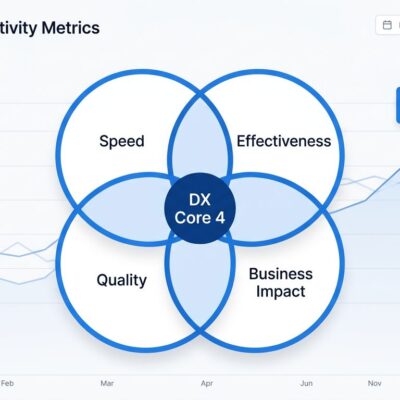

Top-quartile teams achieve 4-6x annual ROI on AI coding tools. Average teams hit 2.5-3.5x. Some see negative returns when quality costs are included. The gap isn’t the tools—it’s code review discipline, usage governance, and accurate cost tracking.

A Fortune 100 retailer achieved 17x ROI: $1.9 million/year in Copilot Enterprise licenses versus $33.75 million in productivity savings (450,000 hours saved at $75/hour). The key? Strong code review governance prevented the 1.7x defect rate increase that plagues teams without review practices.
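The retailer's figures can be reproduced with a short calculation. This is a minimal sketch using only the numbers quoted above; the denominator is license cost alone, since that is the only cost the example reports, and the function names are illustrative.

```python
def gross_roi(annual_benefit, annual_cost):
    """Benefit divided by cost (a 17x multiple means $17 back per $1 spent)."""
    return annual_benefit / annual_cost


def net_roi(annual_benefit, annual_cost):
    """Benefit net of cost, divided by cost."""
    return (annual_benefit - annual_cost) / annual_cost


HOURS_SAVED = 450_000
HOURLY_RATE = 75          # USD per hour
LICENSE_COST = 1_900_000  # USD per year

savings = HOURS_SAVED * HOURLY_RATE
print(f"savings: ${savings:,}")
print(f"gross ROI: {gross_roi(savings, LICENSE_COST):.1f}x")
print(f"net ROI:   {net_roi(savings, LICENSE_COST):.1f}x")
```

Adding token and review costs to `annual_cost` would lower both multiples, which is why the cost denominator matters as much as the savings estimate.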

Contrast that with a fintech company that deployed Cursor Teams for 200 developers, hit $22,000/month in token overages (70% from just 30 developers working on legacy codebases), and rolled back the deployment. Their ROI was negative. Most teams are average, not top-quartile, and planning around the 10x ROI in vendor case studies sets them up for disappointment.

The 70% Token Waste Problem (And Quality Tax)

One developer tracking 42 AI agent runs found 70% of tokens were waste, driven largely by resending the full conversation history with every API call. Sessions balloon from 5K tokens to 200K tokens as context bloats. One production incident saw an AI agent rack up $2,400 in overnight charges after getting stuck in an infinite loop.
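To see why resending history is so expensive, the sketch below models a session in which every call resends earlier turns. It assumes a fixed 5,000 tokens per turn and a hypothetical sliding window that keeps only the most recent turns; real sessions vary, and trimming history trades cost for lost context.

```python
from typing import Optional


def billed_input_tokens(turns: int, tokens_per_turn: int, window: Optional[int] = None) -> int:
    """Total input tokens billed across a session.

    Each call sends earlier turns plus the new one. With window=None the full
    history is resent; otherwise only the most recent `window` turns are sent.
    """
    total = 0
    for turn in range(1, turns + 1):
        turns_sent = turn if window is None else min(turn, window)
        total += turns_sent * tokens_per_turn
    return total


TURNS = 40
TOKENS_PER_TURN = 5_000  # the final full-history call is 40 * 5,000 = 200K tokens

full = billed_input_tokens(TURNS, TOKENS_PER_TURN)
trimmed = billed_input_tokens(TURNS, TOKENS_PER_TURN, window=4)
print(f"full history:  {full:,} input tokens")
print(f"4-turn window: {trimmed:,} input tokens")
print(f"reduction:     {1 - trimmed / full:.0%}")
```

The cost of full-history resending grows quadratically with the number of turns, so long agent sessions are where clearing or summarizing history pays off most.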

Quality overhead is just as brutal. Code review time increases 60-70% for AI-generated code. Security review overhead adds $150-300/engineer/month in regulated industries. A METR study found experienced developers took 19% longer to finish tasks with AI due to debugging and fixing AI-generated code, yet believed they were 20% faster. That is a massive perception-reality gap.

This isn’t an anti-AI stance. The tools deliver value. But the 70% token waste and quality overhead costs drive the $200-$600/month reality. Teams that optimize token usage (clearing conversation history, using smaller models for simple tasks) and implement code review governance can cut costs 30-50%.

How to Cut Costs 60-80% (Without Losing Value)

Teams can slash AI coding tool costs using a tiered approach: free tiers for light users, paid tiers for power users (top 20%), and bring-your-own-key (BYOK) tools for technical teams.

GitHub Copilot Free offers 2,000 completions plus 50 chats per month. Amazon Q provides unlimited completions plus 50 agentic requests. Both are free forever. For teams comfortable managing API keys, BYOK tools like Cline (VS Code extension) and Aider (CLI) cost $10-50/month in API charges for moderate use versus $200-600/month for full-service tools.

Cost optimization best practices: (1) Disable automatic overages in tool settings to hard-cap spending, (2) Monitor usage weekly to catch token waste before monthly bills arrive, (3) Tier your team—20% power users on paid plans, 80% on free tiers, (4) Set budget alerts at 50%, 75%, and 90% of monthly limits.
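The alert thresholds and the hard cap can be sketched as two small checks. This is an illustrative model only: the function names are hypothetical, and in practice the spend figure would come from whatever usage export or billing dashboard your tool provides.

```python
ALERT_THRESHOLDS = (0.50, 0.75, 0.90)  # fractions of the monthly limit, from the list above


def crossed_alerts(spend, monthly_limit, already_sent=frozenset()):
    """Return the thresholds that current spend has crossed and that were not yet alerted."""
    used = spend / monthly_limit
    return [t for t in ALERT_THRESHOLDS if used >= t and t not in already_sent]


def allow_request(spend, estimated_cost, monthly_limit):
    """Hard cap: refuse any request that would push spend past the monthly limit."""
    return spend + estimated_cost <= monthly_limit


# Hypothetical $1,000 monthly limit.
print(crossed_alerts(800, 1_000))                 # 50% and 75% alerts fire
print(crossed_alerts(950, 1_000, {0.50, 0.75}))   # only the 90% alert is new
print(allow_request(990, 20, 1_000))              # would exceed the cap
```

A cap checked before each request is also what would stop a looping agent from accumulating charges overnight, since the loop runs out of budget rather than running until someone notices.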

For a 10-person team, putting 2 power users on Cursor Pro ($40/month total) and 8 on Copilot Free ($0/month) delivers 80% of the value at $4-10/engineer all-in. Not every developer needs the $200/month Ultra tier.
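The seat-cost side of that tiering is simple to compute. The sketch below covers seat licenses only, using the $20 Cursor Pro price from earlier in the article; token charges and review overhead are not modeled, and `blended_seat_cost` is an illustrative name.

```python
def blended_seat_cost(tiers):
    """tiers: list of (headcount, monthly_price_per_seat) pairs.

    Returns (total_monthly_cost, cost_per_engineer).
    """
    headcount = sum(count for count, _ in tiers)
    total = sum(count * price for count, price in tiers)
    return total, total / headcount


tiered_total, tiered_per_engineer = blended_seat_cost([(2, 20), (8, 0)])
flat_total, flat_per_engineer = blended_seat_cost([(10, 20)])

print(f"tiered: ${tiered_total}/month, ${tiered_per_engineer:.2f}/engineer")
print(f"flat:   ${flat_total}/month, ${flat_per_engineer:.2f}/engineer")
```

The tiered mix comes to $4 per engineer in seats, the low end of the range above, against $20 per engineer if everyone is on the paid plan.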

Key Takeaways

- Budget realistically: Plan for $200-600/engineer/month all-in, not the $10-20 seat license price. Include token costs and quality overhead.

- Track all three layers: Visible costs (6-15%), semi-visible costs (10-30%), and hidden costs (60-80%). Most teams only track layer one.

- Expect uneven ROI: Top-quartile teams achieve 4-6x, average teams get 2.5-3.5x. Strong code review is the difference.

- Use free tiers strategically: GitHub Copilot Free and Amazon Q deliver value at $0/month. Reserve paid tiers for power users.

- Optimize token usage: Clear conversation history, use smaller models for simple tasks, disable auto-overages. Cut costs 30-50%.

The AI coding tools pricing crisis is real, but understanding the three-layer cost structure makes budgeting possible. Calculate your real cost before it catches you off guard.