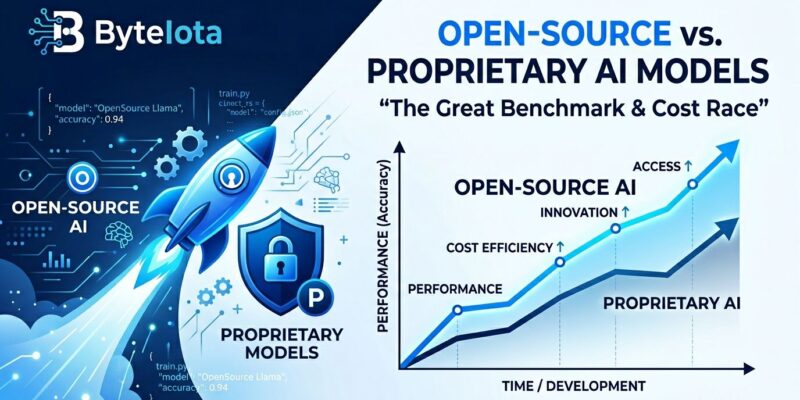

April 2026 marks the cost-performance inflection point in AI. MiniMax M2.5 scores 80.2% on SWE-bench Verified, landing just 0.6 percentage points behind Claude Opus 4.6’s 80.8%. The kicker? MiniMax costs roughly $0.15 per million input tokens. Claude runs $5. That’s a 33x price difference for near-identical benchmark scores. GLM-5 sits at 77.8%, only three points behind Claude, while DeepSeek V3.2 delivers 90% of GPT-5.4’s performance at one-fiftieth the cost. The “you get what you pay for” argument just died.

The performance gap between open-source and proprietary AI has collapsed to single digits while the cost gap stayed massive. This isn’t a prediction. It’s already happening, and the economics have fundamentally shifted.

The Numbers Don’t Lie: Performance Parity Arrived

Open-source models now compete within single-digit percentage points of frontier proprietary models on industry-standard benchmarks. MiniMax M2.5, released February 12, 2026, hit 80.2% on SWE-bench Verified. That beats GPT-5.2 (80.0%) and trails Claude Opus 4.6 by just 0.6 points. The model’s weights are on Hugging Face with vLLM and SGLang support for self-hosting.

GLM-5 comes from Z.ai, the first publicly traded foundation model company, valued at $31.3 billion after its January 2026 Hong Kong IPO. The model scored 77.8% on the same benchmark, three points behind Claude. It’s a 744-billion-parameter Mixture-of-Experts architecture with 40 billion active parameters per token and a 200,000-token context window. GLM-5.1, available through a $3-per-month coding plan, delivers 94.6% of Claude Opus 4.6’s coding performance.

DeepSeek V3.2 achieves roughly 90% of GPT-5.4’s performance at one-fiftieth the price. It required only 2.788 million H800 GPU hours for full training and leads open-source models on LiveCodeBench coding competitions. What cost $500 per month in 2025 now runs for $50 in 2026 with DeepSeek.

Qwen 3.5, released by Alibaba on February 16, brings 201 languages, a 256,000-token context window, and Apache 2.0 licensing. The 397B-A17B MoE model activates just 17 billion parameters per token for cost efficiency while staying competitive on benchmarks. Google’s Gemma 4, launched April 2, puts its 31B model at number three globally among open models on the Arena AI leaderboard. The 26B variant ranks sixth. These models outcompete others 20 times their size and run on smartphones.
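To put the "active parameters" figures in perspective, here is a small sketch that computes what share of each Mixture-of-Experts model is used per token. The parameter counts are the ones quoted in this article; the function name is illustrative. Treat the result as a rough proxy for per-token compute only, since self-hosting generally still means holding the full set of weights in memory.

```python
# Parameter counts in billions, as quoted in this article.
MODELS = {
    "GLM-5": {"total_b": 744, "active_b": 40},
    "Qwen 3.5 397B-A17B": {"total_b": 397, "active_b": 17},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's parameters that are activated for each token."""
    return active_b / total_b


for name, params in MODELS.items():
    share = active_fraction(**params)
    print(f"{name}: {share:.1%} of parameters active per token")
```

Both models touch only around one-twentieth of their weights on any given token, which is where the per-token cost efficiency comes from.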

For most production use cases, a three-to-ten point gap doesn’t justify paying 10 to 50 times more. The benchmark numbers confirm it: open-source is good enough.

The Cost Savings Are Transformative

A mid-sized financial services firm migrated customer service AI from GPT-4 to self-hosted Llama 3.3 70B. Monthly costs dropped from $45,000 to roughly $6,300, an 86% reduction that works out to approximately $464,400 in annual savings. Hardware investment totaled $200,000, including setup. The payback period is reported as 4.5 months, although dividing the $200,000 outlay by the $38,700 monthly savings gives closer to 5.2.
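The arithmetic behind this case is easy to check. The sketch below uses only the figures quoted above and assumes the simplest definition of payback: upfront outlay divided by monthly savings. Under that assumption the annual savings and the 86% reduction reproduce exactly, while the payback lands nearer 5.2 months than 4.5, so the quoted figure presumably rests on a different basis.

```python
def payback_months(upfront_cost: float, old_monthly: float, new_monthly: float) -> float:
    """Months until cumulative savings cover the upfront outlay.

    Assumes constant monthly costs and no financing or depreciation effects.
    """
    monthly_savings = old_monthly - new_monthly
    if monthly_savings <= 0:
        raise ValueError("no monthly savings, so the outlay is never recovered")
    return upfront_cost / monthly_savings


# Figures from the financial services example in this article.
OLD_MONTHLY = 45_000   # GPT-4 API spend
NEW_MONTHLY = 6_300    # self-hosted Llama 3.3 70B
HARDWARE = 200_000     # including setup

monthly_savings = OLD_MONTHLY - NEW_MONTHLY
annual_savings = monthly_savings * 12
reduction = monthly_savings / OLD_MONTHLY

print(f"Monthly savings: ${monthly_savings:,}")
print(f"Annual savings:  ${annual_savings:,}")
print(f"Cost reduction:  {reduction:.0%}")
print(f"Payback:         {payback_months(HARDWARE, OLD_MONTHLY, NEW_MONTHLY):.1f} months")
```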

A 10-person development team spent $528 per month on GPT-4o cloud APIs. Switching to local deployment with a dual RTX 4090 build ($4,615 in hardware, amortized over 24 months) costs $192 to $217 monthly, a first-year saving of 59% at the upper figure. The payback period is quoted as three to five months, though recovering $4,615 from a $528 monthly API bill would take closer to nine.
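The $192 to $217 range can be reconstructed from the amortization. In the sketch below, the $25 monthly running cost (electricity and similar) is an assumption inferred from the gap between the two quoted figures, not a number given in the source.

```python
HARDWARE_COST = 4_615        # dual RTX 4090 build, from the article
AMORTIZATION_MONTHS = 24     # from the article
API_MONTHLY = 528            # previous GPT-4o API spend, from the article
RUNNING_COSTS_MONTHLY = 25   # ASSUMPTION: inferred gap between $192 and $217


def local_monthly_cost(hardware: float, months: int, running: float = 0.0) -> float:
    """Straight-line amortized hardware cost plus monthly running costs."""
    return hardware / months + running


hardware_only = local_monthly_cost(HARDWARE_COST, AMORTIZATION_MONTHS)
with_running = local_monthly_cost(HARDWARE_COST, AMORTIZATION_MONTHS, RUNNING_COSTS_MONTHLY)
savings_share = 1 - with_running / API_MONTHLY

print(f"Hardware only:      ${hardware_only:.0f}/month")
print(f"With running costs: ${with_running:.0f}/month")
print(f"Savings vs. API:    {savings_share:.0%}")
```

The 59% savings figure corresponds to the upper end of the range; using the hardware-only cost would put it a few points higher.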

MiniMax M2.5 runs at about $0.15 per million input tokens compared to Claude Opus 4.6’s $5. GLM-5.1’s coding plan costs $3 monthly and delivers 94.6% of Claude’s performance. DeepSeek V3.2 operates at one-fiftieth the cost of GPT-5.4. Gartner forecast that by 2026, more than 50% of enterprise AI inference workloads would run on-premise or at the edge. They were right. Adoption jumped from under 10% in 2023 to over 50% now.
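To see what the per-token gap means for a real bill, here is a minimal sketch using the two input prices quoted above. The monthly token volume is a hypothetical workload, and output-token pricing is left out because the article only gives input prices.

```python
# Input prices in USD per million tokens, as quoted in this article.
PRICE_PER_M_INPUT = {
    "MiniMax M2.5": 0.15,
    "Claude Opus 4.6": 5.00,
}


def monthly_input_cost(tokens_per_month: int, price_per_million: float) -> float:
    """Input-token cost only; output tokens are billed separately and not modeled."""
    return tokens_per_month / 1_000_000 * price_per_million


# ASSUMPTION: a hypothetical workload of 2 billion input tokens per month.
TOKENS_PER_MONTH = 2_000_000_000

for model, price in PRICE_PER_M_INPUT.items():
    cost = monthly_input_cost(TOKENS_PER_MONTH, price)
    print(f"{model}: ${cost:,.0f}/month")

ratio = PRICE_PER_M_INPUT["Claude Opus 4.6"] / PRICE_PER_M_INPUT["MiniMax M2.5"]
print(f"Price ratio: {ratio:.0f}x")
```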

The ROI math for open-source AI isn’t marginal. It’s transformative. For most businesses, the cost difference alone makes open-source worth serious consideration before even accounting for sovereignty and customization benefits.

Beyond Cost: Sovereignty, Control, and Competitive Differentiation

CIO surveys show data sovereignty “has become a prerequisite rather than an afterthought.” Regulatory pressure from GDPR, HIPAA, SOC 2, and the EU AI Act is tightening. Healthcare, finance, and government sectors now require on-premise deployment. “Sovereign AI” has shifted from a nice-to-have to a procurement requirement.

The 2026 State of Open Source Report found 55% of respondents cite avoiding vendor lock-in as a driver for OSS adoption—a 68% year-over-year increase. Platform independence reduces strategic risk. There are no proprietary API migration costs when switching models.

Fine-tuning allows companies to integrate institutional knowledge directly into model weights. You can embed domain-specific expertise in medical, legal, or financial applications. You can encode proprietary workflows that competitors can’t replicate. The California Management Review notes this “creates AI capabilities that competitors cannot replicate through standard API access.” Customization becomes a competitive moat, not a cost center.

Apache 2.0 licensing (Qwen 3.5, Gemma 4) offers commercial freedom: modify, distribute, commercialize. Clear IP ownership for derivatives. No legal gray areas.

The question isn’t whether open-source AI is good enough anymore. It’s whether most teams can justify paying 50 times more for the last 5% of performance while surrendering data control.

The Trade-offs: When Proprietary Still Makes Sense

Open-source isn’t a universal replacement. Performance gaps of three to ten percentage points exist on benchmarks. That matters for cutting-edge research where the last 5 to 10% determines outcomes. Self-hosting requires ML and DevOps expertise. Infrastructure management involves hardware procurement, maintenance, and updates. Enterprise support is limited compared to managed services.

Latency varies. GPT-4o delivers a 0.4-second time to first token and 82 tokens per second. DeepSeek-V3 comes in at 1.8 seconds to first token and 38 tokens per second. Hosted open-model endpoints can be slower than proprietary APIs, though local deployment can flip that advantage.
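Those two numbers combine into a rough end-to-end estimate: time to first token plus output length divided by throughput. The sketch below applies that simple model to the figures above. The 500-token response length is a hypothetical example, and the model ignores network jitter, queuing, and prompt length.

```python
def response_seconds(ttft_s: float, tokens_per_s: float, output_tokens: int) -> float:
    """Estimated wall-clock time for a full response.

    Simple model: time to first token, then steady-state generation.
    """
    return ttft_s + output_tokens / tokens_per_s


# Latency figures as quoted in this article: (time to first token, tokens/second).
LATENCY = {
    "GPT-4o": (0.4, 82),
    "DeepSeek-V3": (1.8, 38),
}

OUTPUT_TOKENS = 500  # ASSUMPTION: hypothetical response length

for model, (ttft, tps) in LATENCY.items():
    total = response_seconds(ttft, tps, OUTPUT_TOKENS)
    print(f"{model}: {total:.1f} s for {OUTPUT_TOKENS} tokens")
```

At this response length the throughput term dominates, so the gap in tokens per second matters more than the gap in time to first token.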

Proprietary models still lead on bleeding-edge capabilities. GPT-5’s advanced reasoning and o1 models offer features unavailable elsewhere. Low-volume exploration and prototyping are cheaper with cloud APIs than building infrastructure. Compliance requirements sometimes favor managed services with specific certifications.

Honest assessment of trade-offs builds credibility. Understanding where each approach wins enables better decisions.

The Hybrid Future: Strategic Workload Routing

Enterprises aren’t replacing proprietary models entirely. They’re routing workloads. Open-source handles high-volume production tasks, data-sensitive applications, and custom fine-tuning needs. Internal tools and workflows can see savings on the order of the 86% in the financial services example above. Proprietary models serve cutting-edge experimentation, low-volume specialized tasks, and enterprise support requirements.
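The routing rules in this paragraph can be sketched as a small decision function. Everything here is illustrative: the `Workload` fields, the rule ordering, and especially the volume threshold are assumptions for the sake of the example, not a description of any particular company's router.

```python
from dataclasses import dataclass

OPEN_SOURCE = "open-source (self-hosted)"
PROPRIETARY = "proprietary API"

# ASSUMPTION: hypothetical cutoff for "high-volume"; tune to your own cost data.
HIGH_VOLUME_THRESHOLD = 100_000  # requests per month


@dataclass
class Workload:
    name: str
    monthly_requests: int
    data_sensitive: bool = False
    needs_fine_tuning: bool = False
    needs_frontier_capability: bool = False


def route(workload: Workload) -> str:
    """Pick a deployment target using the trade-offs described in the article."""
    # Data that must stay in-house, or custom weights, rule out a shared API first.
    if workload.data_sensitive or workload.needs_fine_tuning:
        return OPEN_SOURCE
    # Cutting-edge experimentation goes to the frontier models.
    if workload.needs_frontier_capability:
        return PROPRIETARY
    # Otherwise volume decides: infrastructure only pays off at scale.
    if workload.monthly_requests >= HIGH_VOLUME_THRESHOLD:
        return OPEN_SOURCE
    return PROPRIETARY


workloads = [
    Workload("support chatbot", monthly_requests=2_000_000),
    Workload("patient record summaries", monthly_requests=5_000, data_sensitive=True),
    Workload("research prototype", monthly_requests=300, needs_frontier_capability=True),
]
for w in workloads:
    print(f"{w.name}: {route(w)}")
```

Checking data sensitivity before anything else reflects the point made earlier that sovereignty is increasingly a hard requirement rather than a preference; a team without that constraint might reasonably order the rules differently.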

Kai Waehner wrote in early April 2026: “The real differentiator in 2026 is deployment trade-offs—data privacy, vendor lock-in avoidance, latency control, total cost of ownership.” Hybrid architectures optimize costs while maintaining access to frontier capabilities.

The market dynamics have shifted. Open-source catches up on benchmarks (80.2% vs 80.8%). Proprietary focuses on convenience and cutting-edge features. Value moves from raw performance to applications and workflows.

In April 2026, the performance gap between open-source and proprietary AI collapsed to single-digit percentage points while the cost gap stayed stuck at 10 to 50 times. That asymmetry is reshaping AI strategy. The either/or debate is over. Smart teams ask “which workload gets which model?” instead.