Observability costs are exploding in 2026. Datadog bills are growing 30-50% year-over-year for most teams, with mid-market companies paying $123,000 annually just to monitor their infrastructure. AI workloads are making it worse—teams report 40-200% bill increases when they add LLM monitoring. The culprit is Datadog’s multi-dimensional pricing model, which charges per-host, per-product, and per-GB, creating unpredictable bills that scale super-linearly with infrastructure growth. If your observability bill surprises you every quarter, you’re not alone—and it’s not an accident.

Datadog’s Pricing Model: Why Bills Scale Super-Linearly

Datadog doesn’t charge a simple monthly fee. It charges separately for each observability dimension, and those charges multiply. Infrastructure monitoring costs $15-23 per host per month. APM runs $31-40 per host, plus $1.70 per million additional spans. Logs hit you twice—$0.10 per GB for ingestion, then another $1.70-2.55 per million events for indexing. RUM costs $1.50 per 1,000 sessions. Database monitoring adds $70 per host. Each product stacks on top of the others.
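To see how the per-product charges stack, here is a minimal sketch of a bill estimator. It uses the low end of each price range quoted above; the `Usage` fields, the function name, and the example workload are hypothetical and chosen only for illustration. It does not reproduce Datadog's actual billing logic.

```python
from dataclasses import dataclass

# Low-end monthly list prices quoted in the paragraph above (USD).
INFRA_PER_HOST = 15.00
APM_PER_HOST = 31.00
SPANS_PER_MILLION = 1.70
LOG_INGEST_PER_GB = 0.10
LOG_INDEX_PER_MILLION_EVENTS = 1.70
RUM_PER_1K_SESSIONS = 1.50
DBM_PER_HOST = 70.00


@dataclass
class Usage:
    """Hypothetical monthly usage figures for one team."""
    hosts: int
    apm_hosts: int
    extra_spans_millions: float
    log_gb: float
    indexed_log_events_millions: float
    rum_sessions_thousands: float
    db_hosts: int


def estimate_monthly_bill(u: Usage) -> dict:
    """Return one line item per product plus a total, in USD."""
    lines = {
        "infrastructure": u.hosts * INFRA_PER_HOST,
        "apm": u.apm_hosts * APM_PER_HOST
        + u.extra_spans_millions * SPANS_PER_MILLION,
        # Logs are charged twice: once on ingestion, once on indexing.
        "logs": u.log_gb * LOG_INGEST_PER_GB
        + u.indexed_log_events_millions * LOG_INDEX_PER_MILLION_EVENTS,
        "rum": u.rum_sessions_thousands * RUM_PER_1K_SESSIONS,
        "database": u.db_hosts * DBM_PER_HOST,
    }
    lines["total"] = sum(lines.values())
    return lines


# Illustrative workload only; none of these numbers come from the article.
example = Usage(hosts=50, apm_hosts=50, extra_spans_millions=100,
                log_gb=2000, indexed_log_events_millions=500,
                rum_sessions_thousands=200, db_hosts=5)
for product, cost in estimate_monthly_bill(example).items():
    print(f"{product:>15}: ${cost:,.2f}")
```

For this made-up workload the total comes to $4,170 per month, and no single product accounts for even half of it. That is the stacking effect described above.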

The real pain comes from hidden cost drivers. Datadog counts every 5 containers as 1 host, so a densely packed container fleet can be billed as more hosts than the machines it actually runs on, and efficient infrastructure paradoxically increases your bill. Kubernetes clusters can generate 50,000+ custom metrics, and Datadog charges $0.05 per metric per month after the first 100 per host. The same request generates billable events across metrics, traces, and logs simultaneously, a cross-product overlap that quietly multiplies costs. There are no default cost guardrails. Volume drifts upward as services deploy, and by the time you notice, you’re thousands over budget.
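The custom metric charge can be worked through as a small calculation. The sketch below applies the rule stated above ($0.05 per metric per month beyond the first 100 per host). The function name and the 100-host fleet are assumptions for illustration, and real invoices may meter custom metrics differently.

```python
FREE_METRICS_PER_HOST = 100      # allowance quoted above
PRICE_PER_CUSTOM_METRIC = 0.05   # USD per metric per month, quoted above


def custom_metric_overage(custom_metrics: int, hosts: int) -> float:
    """Monthly charge for custom metrics beyond the per-host allowance."""
    allowance = FREE_METRICS_PER_HOST * hosts
    billable = max(0, custom_metrics - allowance)
    return billable * PRICE_PER_CUSTOM_METRIC


# Hypothetical fleet: 50,000 custom metrics spread over 100 hosts.
print(f"${custom_metric_overage(50_000, 100):,.2f} per month")
```

With 100 hosts the allowance covers 10,000 metrics, so 40,000 are billable and the overage is about $2,000 per month. Staying under the allowance costs nothing, which is why metric counts are worth watching.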

A mid-market company with 100 engineers and 50 services pays approximately $10,310 per month—$123,720 annually. The breakdown: $1,150 for infrastructure, $920 for containers, $1,450 for custom metrics, $1,875 for logs, $3,275 for APM and span overages, $1,290 for RUM and synthetic monitoring, and $350 for database monitoring. Enterprise organizations regularly exceed $500,000 to $1,000,000 annually. Datadog’s multi-dimensional pricing isn’t transparent—it’s a discovery process.
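The breakdown above can be checked with a few lines of arithmetic. This sketch only re-adds the figures from the paragraph; the dictionary keys are labels chosen here for readability.

```python
# Monthly line items from the mid-market example above (USD).
BREAKDOWN = {
    "infrastructure": 1_150,
    "containers": 920,
    "custom_metrics": 1_450,
    "logs": 1_875,
    "apm_and_span_overages": 3_275,
    "rum_and_synthetics": 1_290,
    "database_monitoring": 350,
}

monthly_total = sum(BREAKDOWN.values())
annual_total = monthly_total * 12
shares = {name: cost / monthly_total for name, cost in BREAKDOWN.items()}
largest = max(BREAKDOWN, key=BREAKDOWN.get)

print(f"monthly: ${monthly_total:,}  annual: ${annual_total:,}")
for name, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{name:>24}: {share:.1%}")
```

The line items do add up to $10,310 per month and $123,720 per year. APM and span overages form the largest item at roughly a third of the bill.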

AI Workloads: The 40-200% Cost Multiplier

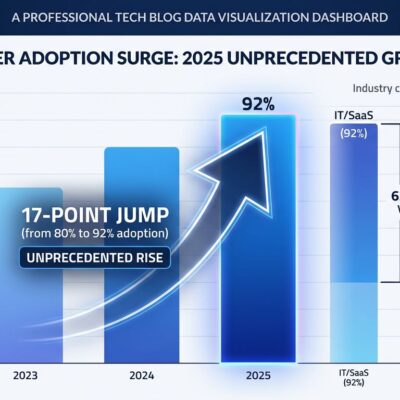

AI adoption in 2026 has introduced a new observability crisis. AI workloads generate 10-50x more telemetry than traditional services, and teams are blindsided when they add LLM monitoring. A single LLM inference request produces thousands of tokens requiring cost tracking, high-dimensional embedding vectors unsuitable for traditional log systems, multi-step trace trees from chain-of-thought reasoning, custom evaluation metrics like hallucination rates and relevance scores, and retry/fallback logs from model timeouts and rate limits.

Consider a customer support bot handling 50,000 daily messages with 4 LLM calls per message, or 200,000 LLM calls per day. It generates 400 million tokens, 1 million trace spans, 4 million metric data points, and 400 MB of logs daily. Traditional pricing models assume telemetry scales linearly with traffic. AI breaks this assumption: a chatbot interaction with 4 LLM calls generates 4-8x more spans than a traditional request-response flow. Teams report 40-200% observability bill increases after adding AI workload monitoring to existing Datadog deployments.
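Dividing the support bot's daily volumes by its call count shows what each LLM call implies. The sketch below uses only the figures from the example above, with one assumption: that 400 MB means decimal megabytes.

```python
DAILY_MESSAGES = 50_000
LLM_CALLS_PER_MESSAGE = 4

DAILY_TOKENS = 400_000_000
DAILY_SPANS = 1_000_000
DAILY_METRIC_POINTS = 4_000_000
DAILY_LOG_BYTES = 400 * 1_000_000  # 400 MB, assuming decimal megabytes

daily_llm_calls = DAILY_MESSAGES * LLM_CALLS_PER_MESSAGE

# Telemetry implied per individual LLM call.
per_call = {
    "tokens": DAILY_TOKENS / daily_llm_calls,
    "spans": DAILY_SPANS / daily_llm_calls,
    "metric_points": DAILY_METRIC_POINTS / daily_llm_calls,
    "log_bytes": DAILY_LOG_BYTES / daily_llm_calls,
}
spans_per_message = DAILY_SPANS / DAILY_MESSAGES

print(f"LLM calls per day: {daily_llm_calls:,}")
for name, value in per_call.items():
    print(f"{name} per call: {value:,.0f}")
print(f"spans per user message: {spans_per_message:,.0f}")
```

Each call works out to about 2,000 tokens, 5 spans, 20 metric data points, and 2 KB of logs. A single user message therefore produces around 20 spans.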

The “Observability Tax” – When 7 Tools Cost More Than Their Value

Many teams think they’re optimizing by using “best-of-breed” tools for each observability function. In practice, they’re paying an observability tax—cumulative financial and operational costs that exceed any individual tool’s value. A typical mid-market stack for 100 hosts and 75 engineers uses Datadog ($5,490/month), PagerDuty ($3,075/month), Atlassian StatusPage ($399/month), Sentry ($442/month), Pingdom ($249/month), Loggly ($349/month), and Grafana Cloud ($299/month), totaling $10,303 per month or $123,636 annually in direct costs.

Operational costs are worse. Incident response burns approximately one hour per incident from context switching between 5-6 platforms. Connecting seven tools creates 12 or more integration failures annually, each consuming 2-8 hours of senior engineering time. Vendor management (contracts, security reviews, SOC 2 evaluations, compliance activities) consumes 100+ hours annually across finance, legal, and security teams. Tool expertise becomes fragmented, creating knowledge silos and single points of failure.

Organizations consolidating to unified platforms report 40-70% direct savings, plus reduced mean-time-to-resolution, elimination of integration maintenance, unified billing, and standardized training. “Best individual tool” doesn’t equal “best system.” The cost of having 7 tools exceeds the cost of any single tool being slightly better at one thing.
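The observability tax can be tallied from the numbers in this section. The sketch below treats the "12+" integration failures and "100+" vendor management hours as floors, so the hour totals are lower bounds. It leaves out per-incident context switching because the article gives no incident count.

```python
# Monthly direct costs of the seven-tool stack described above (USD).
STACK = {
    "Datadog": 5_490,
    "PagerDuty": 3_075,
    "Atlassian StatusPage": 399,
    "Sentry": 442,
    "Pingdom": 249,
    "Loggly": 349,
    "Grafana Cloud": 299,
}

monthly_direct = sum(STACK.values())
annual_direct = monthly_direct * 12

# Reported consolidation savings of 40-70% applied to the annual direct cost.
savings_low = annual_direct * 0.40
savings_high = annual_direct * 0.70

# Lower-bound engineering overhead per year, in hours.
INTEGRATION_FAILURES = 12          # "12+" in the text, used as a floor
HOURS_PER_FAILURE = (2, 8)
VENDOR_MGMT_HOURS = 100            # "100+" in the text, used as a floor
overhead_hours = tuple(
    INTEGRATION_FAILURES * h + VENDOR_MGMT_HOURS for h in HOURS_PER_FAILURE
)

print(f"direct: ${monthly_direct:,}/month, ${annual_direct:,}/year")
print(f"consolidation savings: ${savings_low:,.0f} to ${savings_high:,.0f}/year")
print(f"overhead: at least {overhead_hours[0]} to {overhead_hours[1]} hours/year")
```

On these figures, consolidation would save roughly $49,000 to $87,000 per year in direct costs. It would also free at least 124 to 196 hours of staff time.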

Vendor Alternatives: Datadog Costs 21x More

Datadog is expensive, but the magnitude surprises most teams. For a 150-engineer team, Datadog costs $42,468 per month. Uptrace costs $2,039 for the same team, which makes Datadog about 21x more expensive. Datadog also costs 3.5x more than SigNoz ($12,240), 2x more than Grafana Cloud ($20,955), and 1.7x more than New Relic ($24,635). The pricing spread is dramatic.
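The multipliers follow directly from the monthly figures. This short sketch recomputes them from the prices quoted above, with no other inputs.

```python
# Monthly cost for the 150-engineer team quoted above (USD).
MONTHLY_COST = {
    "Datadog": 42_468,
    "Uptrace": 2_039,
    "SigNoz": 12_240,
    "Grafana Cloud": 20_955,
    "New Relic": 24_635,
}

datadog = MONTHLY_COST["Datadog"]
ratios = {
    vendor: datadog / cost
    for vendor, cost in MONTHLY_COST.items()
    if vendor != "Datadog"
}

for vendor, ratio in sorted(ratios.items(), key=lambda kv: -kv[1]):
    saved = datadog - MONTHLY_COST[vendor]
    print(f"{vendor:>14}: Datadog costs {ratio:.1f}x more (${saved:,}/month)")
```

The unrounded ratios are about 20.8, 3.5, 2.0, and 1.7, so the headline 21x is the Uptrace figure rounded up.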

Self-hosted options like Grafana + Loki + Tempo are 70-90% cheaper than Datadog but require DevOps capacity to manage. A hybrid approach—self-hosted systems for high-volume data combined with SaaS tools for specialized capabilities—can cut costs by 60-70%. The trade-off is operational overhead versus billing predictability. For teams with the expertise, self-hosting makes financial sense. For smaller teams, consolidating to a single mid-tier vendor eliminates the observability tax without adding operational burden.

Cost Optimization: 20-50% Savings Without Losing Visibility

Elastic’s 2026 observability survey found that 96% of organizations are actively controlling costs, with 70% seeking to optimize existing spend rather than simply cutting data. The shift is from “collect everything” to “collect what matters.” Teams commonly achieve 20-40% log reduction and 25-50% lower storage costs through proven techniques.

Sampling strategies are the fastest win. Tail-sampling keeps every trace that contains an error or a slow path, plus a 5-10% baseline of everything else, which captures critical data while reducing volume. Head-sampling high-volume endpoints and sending representative samples instead of full telemetry streams cuts costs without sacrificing visibility. Data retention policies matter too: setting hot retention to 14 days for application logs, with explicit exceptions for production services, balances accessibility and cost. Cold archiving handles compliance requirements cheaply.
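A tail-sampling decision can be sketched in a few lines. This is an illustrative policy, not the API of any particular collector. The function name, the 1,000 ms slow threshold, and the hash-based bucketing are assumptions; the 5% baseline is the low end of the range mentioned above.

```python
import hashlib


def keep_trace(trace_id: str, has_error: bool, duration_ms: float,
               slow_threshold_ms: float = 1000.0,
               baseline_rate: float = 0.05) -> bool:
    """Decide, once a trace is complete, whether to export it."""
    # Always keep the traces people actually debug with.
    if has_error or duration_ms >= slow_threshold_ms:
        return True
    # Keep a deterministic baseline of healthy traces: hash the trace ID
    # into [0, 1) so the same trace always gets the same decision.
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < baseline_rate


# Illustrative run over synthetic healthy traces.
kept = sum(keep_trace(f"trace-{i}", False, 20.0) for i in range(10_000))
print(f"kept {kept} of 10,000 healthy traces")
print(keep_trace("checkout-1", has_error=True, duration_ms=20.0))
```

Hashing the trace ID rather than calling a random number generator means every service that sees the same trace makes the same decision. That keeps sampled traces complete.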

First-mile processing analyzes data in real time before it reaches expensive storage. Send summaries and representative samples to your observability platform while keeping full raw data in low-cost storage like S3. Cardinality controls prevent cost explosions: avoid high-cardinality label combinations in metrics and limit custom metric proliferation. Volume reduction through exclusion filters, adjusted sampling rates, and removing unused integrations delivers immediate savings.
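Cardinality grows multiplicatively: one metric name produces up to one time series per combination of label values. The sketch below estimates that worst case and drops the highest-cardinality labels until a budget is met. The label names, counts, and the 10,000-series budget are hypothetical.

```python
from math import prod


def series_count(label_cardinalities: dict) -> int:
    """Worst-case time series for one metric: product of label value counts."""
    return prod(label_cardinalities.values())


def drop_labels_to_fit(label_cardinalities: dict, budget: int):
    """Drop the highest-cardinality labels until the metric fits the budget."""
    kept = dict(label_cardinalities)
    dropped = []
    while kept and series_count(kept) > budget:
        worst = max(kept, key=kept.get)
        dropped.append(worst)
        del kept[worst]
    return kept, dropped


# Hypothetical labels on a request-latency metric.
labels = {"service": 50, "endpoint": 20, "status": 5, "user_id": 10_000}
print(f"before: {series_count(labels):,} series")

kept, dropped = drop_labels_to_fit(labels, budget=10_000)
print(f"after dropping {dropped}: {series_count(kept):,} series")
```

In this example a single `user_id` label turns 5,000 potential series into 50 million. Removing it brings the metric back under budget without touching the other labels.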

Key Takeaways

- Datadog’s multi-dimensional pricing (per-host + per-product + per-GB) creates unpredictable bills scaling super-linearly with growth. Mid-market teams pay $123K/year; enterprises exceed $500K-$1M.

- AI workloads generate 10-50x more telemetry, causing 40-200% bill increases. A support bot handling 50K daily messages produces 400M tokens, 1M spans, and 4M metric data points daily.

- 7-tool observability stacks cost more than their value—$123K/year in direct costs plus 100+ hours of vendor management overhead. Consolidation saves 40-70%.

- Vendor alternatives exist at dramatically lower costs: Uptrace is 21x cheaper than Datadog, SigNoz is 3.5x cheaper, and Grafana Cloud is 2x cheaper for the same scale.

- Cost optimization delivers 20-50% savings through sampling (tail-sampling for errors), retention policies (14-day hot storage), first-mile processing (analyze before storing), and cardinality controls (limit high-cardinality labels).

Observability shouldn’t cost more than the infrastructure it monitors. If your bill is growing faster than your infrastructure, it’s time to audit your observability strategy.