The February 2026 SWE-bench Pro leaderboard update exposed a problem AI labs don’t want you talking about: when evaluated on private, previously unseen codebases, top models collapse. Claude Opus 4.1 fell from 22.7% to 17.8% resolution rate, a 22% relative drop. OpenAI’s GPT-5 did worse, plummeting from 23.1% to 14.9%, a 36% relative decline. This isn’t noise. It’s evidence that public leaderboards are inflated, and developers making costly decisions based on those numbers are being sold a fantasy.

The gap matters because teams are adopting AI coding tools based on promises of 75-80% problem resolution on benchmarks like SWE-bench Verified. When the models behind those tools hit real-world codebases they’ve never seen before, resolution rates fall by roughly a fifth to a third. That’s not a minor variance. That’s overfitting.

The Private Performance Collapse

Here’s what happened in February 2026: the benchmark’s maintainers ran independent evaluations on SWE-bench Pro, including a private subset of problems the models hadn’t been trained on. The results were brutal. Claude Opus 4.1, which scored 22.7% on the public Pro leaderboard, dropped to 17.8% on private tests. GPT-5 performed even worse, falling from 23.1% to 14.9%.

These aren’t small corrections. A 36% relative drop suggests the models aren’t developing true software engineering capabilities so much as pattern-matching against problems they’ve seen before. When confronted with genuinely novel codebases, the cracks show.
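To make the arithmetic explicit, here is a minimal Python sketch of how the relative drops follow from the scores quoted above. The function name `relative_drop` is only an illustrative choice, not part of any benchmark tooling.

```python
def relative_drop(public_pct: float, private_pct: float) -> float:
    """Return the decline from public to private score as a percentage of the public score."""
    return (public_pct - private_pct) / public_pct * 100


# Resolution rates (public, private) in percent, as quoted in the text above.
scores = {
    "Claude Opus 4.1": (22.7, 17.8),
    "GPT-5": (23.1, 14.9),
}

for model, (public, private) in scores.items():
    points = public - private
    print(f"{model}: {points:.1f} points lost, {relative_drop(public, private):.1f}% relative drop")
```

Points lost and relative drop are different measures: GPT-5 lost 8.2 percentage points, which works out to about 35.5% of its public score, the figure rounded to 36% here.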

Meanwhile, models advertising 75-80% resolution rates on SWE-bench Verified quietly deliver 15-25% on the more realistic SWE-bench Pro. The gap between marketing and reality is widening, and it’s costing developers time and trust.

What SWE-Bench Pro Actually Tests

SWE-bench Pro isn’t your typical benchmark. It contains 1,865 real GitHub issues from 41 actively maintained repositories across Python, Go, TypeScript, and JavaScript. These aren’t trivial bug fixes. The average solution requires changing 107 lines of code across 4.1 files, and every task demands at least 10 lines of modification. Many problems require over 100 lines.

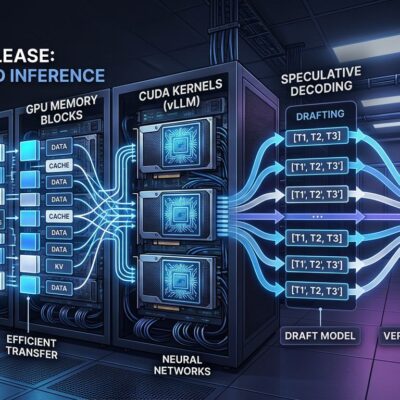

The benchmark uses “Fail-to-Pass” testing: unit tests that fail before the fix and pass after. Models get issue text, bash tools, and a Docker container with the codebase installed at the commit before the fix. They must navigate the code, identify the problem, and generate a working patch. No hand-holding.
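The Fail-to-Pass idea can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the benchmark’s actual harness: the function `is_resolved`, the result dictionaries, and the test names are all hypothetical.

```python
def is_resolved(
    before: dict[str, bool],
    after: dict[str, bool],
    fail_to_pass: list[str],
) -> bool:
    """Return True only if every designated test failed before the patch and passes after it.

    `before` and `after` map test names to pass/fail results (True means passed).
    A test missing from either mapping is treated as failing.
    """
    if not fail_to_pass:
        return False  # nothing to verify, so never count the task as resolved
    failed_before = all(not before.get(test, False) for test in fail_to_pass)
    passes_after = all(after.get(test, False) for test in fail_to_pass)
    return failed_before and passes_after


# Hypothetical results for one task with two designated tests.
targets = ["test_parse_empty_header", "test_parse_unicode_header"]
before_patch = {"test_parse_empty_header": False, "test_parse_unicode_header": False}
after_patch = {"test_parse_empty_header": True, "test_parse_unicode_header": False}

print(is_resolved(before_patch, after_patch, targets))
```

A patch that fixes only one of the two designated tests does not count, which is part of why partial fixes earn no credit on this kind of benchmark.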

Critically, SWE-bench Pro is split into public, held-out, and commercial test sets, with the commercial set drawn from 18 proprietary repositories that models have never seen. This private subset is what revealed the performance gap. Models perform significantly better on public repos they’ve been exposed to during training than on genuinely unseen codebases. That’s the definition of overfitting.

Cost, Gaming, and the Reality Gap

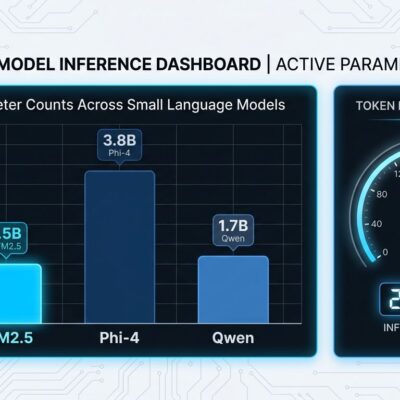

The February leaderboard also exposed absurd cost disparities. Claude Opus 4.6 scores 76.8% on SWE-bench Verified at $376.95 per instance. Gemini 3 Flash delivers 75.8%, nearly identical performance, for $177.98, less than half the price. MiniMax M2.5 achieves competitive results at just $36.64. That’s roughly a 10x cost difference between the most and least expensive options for comparable accuracy.

For teams processing thousands of coding tasks monthly, this matters. A model that’s “good enough” at 1/10th the price beats a marginally better model that drains your budget. Yet public leaderboards rank purely by accuracy and ignore cost-performance tradeoffs entirely.
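A rough way to put accuracy and price on the same axis is cost per resolved task: price divided by resolution rate. The sketch below uses only the figures quoted above. MiniMax M2.5’s exact resolution rate isn’t given here, so it is left as `None` rather than guessed, and the helper names are illustrative.

```python
from typing import Optional

# (resolution rate on SWE-bench Verified, cost in USD) as quoted in the text.
# MiniMax M2.5's rate is not stated in the text, so it is deliberately None.
models: dict[str, tuple[Optional[float], float]] = {
    "Claude Opus 4.6": (0.768, 376.95),
    "Gemini 3 Flash": (0.758, 177.98),
    "MiniMax M2.5": (None, 36.64),
}


def cost_ratio(expensive: str, cheap: str) -> float:
    """How many times more the first model costs than the second."""
    return models[expensive][1] / models[cheap][1]


def cost_per_resolved(name: str) -> Optional[float]:
    """Quoted cost divided by resolution rate, or None when the rate is unknown."""
    rate, cost = models[name]
    return None if rate is None else cost / rate


print(f"Claude vs MiniMax: {cost_ratio('Claude Opus 4.6', 'MiniMax M2.5'):.1f}x")
print(f"Claude vs Gemini:  {cost_ratio('Claude Opus 4.6', 'Gemini 3 Flash'):.1f}x")
for name in models:
    value = cost_per_resolved(name)
    print(name, "n/a" if value is None else f"${value:.2f} per resolved task")
```

Dividing by the resolution rate penalizes a cheap model that fails often, so the comparison stays honest when accuracy differs by more than a point or two.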

Then there’s the gaming problem. Research shows test overfitting is endemic in LLM-based program repair: models generate code that narrowly passes observed tests while breaking other functionality. The performance plateau at 75-77% across top models on public benchmarks, combined with the collapse on private tests, suggests we’ve hit the limits of pattern-matching. Models aren’t reasoning about software engineering so much as memorizing solutions to problems they’ve seen.

Language-specific performance variations reinforce this. Models score 25-30% on Python and Go tasks in SWE-bench Pro but drop to 15-20% on JavaScript and TypeScript. That’s not a capability difference. That’s training data bias showing through.

The real-world gap is worse. While 73% of engineering teams now use AI coding tools daily, most organizations see no measurable productivity gains. Developers report spending more time cleaning up AI-generated messes than writing AI-assisted code. Tools that pass benchmarks fail in production because they lack project-specific context, hallucinate deprecated APIs, or mix up library versions. Agent scaffolding and tooling can matter more than model intelligence: some agents show 22-point performance swings based purely on infrastructure, not model weights.

What Developers Should Actually Do

Stop trusting public leaderboards. Period.

If you’re evaluating AI coding tools, demand trial periods on your actual codebase. Measure first-pass accuracy: the percentage of generated code that works without modification. Tools requiring constant correction don’t save time. They create verification bottlenecks.
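Tracking first-pass accuracy during a trial doesn’t need special tooling. Here is a minimal sketch, assuming you log one record per AI-generated change; the `TrialResult` class, its fields, and the sample task IDs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TrialResult:
    task_id: str
    merged_unmodified: bool  # True if the generated code worked with no human edits


def first_pass_accuracy(results: list[TrialResult]) -> float:
    """Fraction of generated changes that worked without modification."""
    if not results:
        raise ValueError("no trial results recorded")
    return sum(r.merged_unmodified for r in results) / len(results)


# Hypothetical log from a short trial on your own codebase.
trial = [
    TrialResult("TASK-1", True),
    TrialResult("TASK-2", False),
    TrialResult("TASK-3", False),
    TrialResult("TASK-4", False),
]
print(f"First-pass accuracy: {first_pass_accuracy(trial):.0%}")
```

The strict definition is deliberate: a change that needed even a small human fix counts as a miss, because that fix is exactly the verification cost you are trying to measure.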

Evaluate cost-performance tradeoffs for your volume. A model that’s 5% less accurate but 10x cheaper might be the better choice if you’re processing thousands of tasks monthly. Calculate total cost of ownership, including the time engineers spend verifying and fixing AI-generated code.
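One way to rough out total cost of ownership is to add engineer time to the model bill. The sketch below is an assumption-laden model, not an established formula: every task gets a review, and every task that fails on the first pass also gets a fix. All parameter names and example numbers are hypothetical placeholders for your own measurements.

```python
def monthly_cost(
    tasks: int,
    api_cost_per_task: float,
    first_pass_accuracy: float,
    review_minutes: float,
    fix_minutes: float,
    hourly_rate: float,
) -> float:
    """Estimate monthly spend in the same currency as the inputs.

    Assumes every task is reviewed, and tasks that fail on the first pass
    additionally cost `fix_minutes` of engineer time.
    """
    review_cost = review_minutes / 60 * hourly_rate
    fix_cost = (1 - first_pass_accuracy) * fix_minutes / 60 * hourly_rate
    return tasks * (api_cost_per_task + review_cost + fix_cost)


# Hypothetical comparison: a pricier, slightly more accurate tool vs a cheaper one.
shared = dict(tasks=2000, review_minutes=5, fix_minutes=30, hourly_rate=90.0)
premium = monthly_cost(api_cost_per_task=5.00, first_pass_accuracy=0.30, **shared)
budget = monthly_cost(api_cost_per_task=0.50, first_pass_accuracy=0.25, **shared)
print(f"premium: ${premium:,.0f}  budget: ${budget:,.0f}")
```

With numbers like these, engineer time dominates the bill, so the outcome depends heavily on how long fixes actually take on your codebase. Plug in measured values before drawing conclusions.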

Set realistic expectations. On complex, multi-file problems like those in SWE-bench Pro, expect 15-25% resolution rates, not the 75-80% advertised on public benchmarks. Models do excel at simpler tasks: single-function bug fixes, boilerplate generation, syntax transformations. They struggle with architectural decisions, cross-file dependencies, and problems requiring genuine reasoning.

Finally, remember that 96% of developers don’t fully trust AI output, yet only 48% always verify it before committing. Be in the 48%. The gap between public benchmark performance and private reality proves that AI coding tools aren’t reliable enough to skip verification.

The SWE-bench Pro results should reset expectations industry-wide. Models are useful tools for accelerating certain tasks, but they’re not autonomous software engineers. The drop of up to 36% on private tests is a wake-up call: if a model hasn’t seen your codebase before, its real-world performance will likely be far below what the leaderboard promises.

Key Takeaways

- Claude Opus 4.1 dropped 22% on private tests; GPT-5 fell 36%—evidence of benchmark overfitting

- SWE-bench Pro’s 1,865 multi-language, long-horizon tasks better reflect real-world complexity

- MiniMax delivers competitive performance at 1/10th the cost of Claude—optimize for cost-performance, not pure accuracy

- Public leaderboards show 75-80%; realistic expectations on unseen code: 15-25%

- Test AI tools on your actual codebase before committing—public scores don’t predict private performance