Cloud waste hit 29% in 2026, the first increase in five years, according to Flexera’s March survey of 753 cloud decision-makers. StormForge’s survey of 134 IT professionals paints an even starker picture: organizations waste 47% of their cloud budgets on average. With global cloud spending projected to exceed $840 billion by the end of 2026, Flexera’s 29% figure implies roughly $240 billion lost each year to idle virtual machines, over-provisioned instances, and forgotten development environments. And while 82% of cloud decision-makers cite managing spend as their primary challenge, 29.6% of platform teams don’t measure success at all.

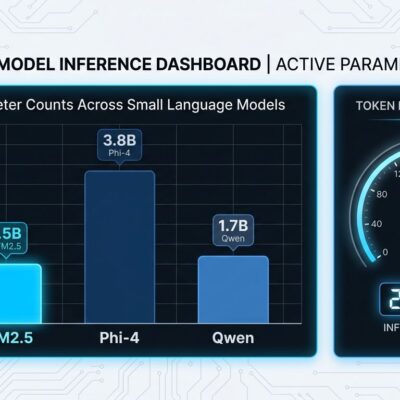

The culprit behind this reversal? Surging AI workloads. Organizations over-provision GPU instances and fail to optimize inference workloads, creating expensive new patterns of waste. For developers and platform engineers, cloud cost optimization is no longer optional—it’s becoming a core responsibility alongside performance and reliability.

Where $240B Goes: The Waste Breakdown

Cloud waste comes from three main sources, each a fixable problem once you can see it. Idle or stopped resources (instances, volumes, IP addresses, and snapshots that stay provisioned and billed) account for 10-15% of monthly invoices. These include dev/test environments forgotten after projects end, workloads that run around the clock when they could be shut down on a schedule, and resources created for troubleshooting that never got cleaned up.
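A first pass at finding idle resources can be a simple filter over an inventory export. This is a minimal sketch, assuming a hypothetical `Resource` record (ID, kind, state, last observed activity, average CPU) rather than any provider's API. It treats a resource as idle when it shows no activity for a lookback window and is also stopped, unattached, or running at near-zero CPU. The thresholds are illustrative defaults, not recommendations.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Resource:
    # Hypothetical inventory record; field names are illustrative, not a provider API.
    resource_id: str
    kind: str                     # "instance", "volume", "ip", or "snapshot"
    state: str                    # e.g. "running", "stopped", "attached", "unattached"
    last_activity: datetime       # last observed use (I/O, attach, login, deploy, ...)
    avg_cpu_percent: float = 0.0  # mean CPU over the lookback window (instances only)


def find_idle(resources, now, cpu_threshold=5.0, idle_days=14):
    """Return IDs of resources with no activity for `idle_days` that also look unused."""
    cutoff = now - timedelta(days=idle_days)
    idle = []
    for r in resources:
        if r.last_activity >= cutoff:
            continue  # touched recently: leave it alone
        if r.kind == "instance":
            # Stopped instances, or running ones doing almost nothing.
            looks_idle = r.state == "stopped" or r.avg_cpu_percent < cpu_threshold
        elif r.kind in ("volume", "ip"):
            # Still billed while nothing is attached to them.
            looks_idle = r.state == "unattached"
        else:
            # Snapshots: age alone is the signal in this simple sketch.
            looks_idle = True
        if looks_idle:
            idle.append(r.resource_id)
    return idle


now = datetime(2026, 3, 1, tzinfo=timezone.utc)
stale, fresh = now - timedelta(days=30), now - timedelta(days=1)
inventory = [
    Resource("i-busy", "instance", "running", fresh, 60.0),
    Resource("i-forgotten", "instance", "running", stale, 1.2),
    Resource("vol-orphan", "volume", "unattached", stale),
    Resource("vol-in-use", "volume", "attached", stale),
]
print(find_idle(inventory, now))  # ['i-forgotten', 'vol-orphan']
```

In practice the inventory would come from a provider's APIs or billing export. The point is that the rule is cheap to encode and run on a schedule.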

Over-provisioned compute wastes another 10-12% of spending. A team provisions 8 vCPUs but uses 2, keeping “safety margin” capacity that never gets touched. That is more than a minor inefficiency: it means paying full price for capacity that does nothing. Orphaned storage artifacts (unused disks, snapshots from deleted applications, redundant backup copies) add another 3-6% of avoidable spend.
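Rightsizing the 8-vCPUs-but-using-2 case comes down to sizing for observed peak demand plus explicit headroom instead of an open-ended safety margin. This sketch assumes CPU utilization samples expressed as a percentage of the current instance, a nearest-rank p95, 30% headroom, and a hypothetical menu of power-of-two vCPU sizes. Real tooling typically weighs more signals than CPU alone.

```python
import math

# Hypothetical menu of instance sizes in vCPUs; real families differ by provider.
SIZES = [1, 2, 4, 8, 16, 32]


def p95(samples):
    """95th percentile by the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(95 * len(ordered) / 100)
    return ordered[rank - 1]


def recommend_vcpus(cpu_samples_pct, current_vcpus, headroom=0.3):
    """Smallest size that covers p95 demand plus `headroom` (0.3 = 30% spare)."""
    busy_vcpus = p95(cpu_samples_pct) / 100 * current_vcpus
    needed = busy_vcpus * (1 + headroom)
    for size in SIZES:
        if size >= needed:
            return size
    return SIZES[-1]  # demand exceeds the menu: stay at the largest size


# An 8-vCPU instance that peaks around 25% (about 2 vCPUs of real work).
samples = [20.0] * 90 + [25.0] * 10
print(recommend_vcpus(samples, current_vcpus=8))  # 4
```

With 30% headroom, the 2 vCPUs of real demand round up to a 4-vCPU size: the instance is halved rather than cut all the way to 2, trading some savings for protection against spikes.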

The root cause isn’t technical. It’s visibility: 54% of organizations lack clear insight into where their cloud money goes. Without cost attribution across teams and projects, waste proliferates silently. Flexera’s March 2026 report specifically calls out AI workloads as the new waste driver: organizations spin up GPU instances for training, forget to shut them down after experiments, and over-provision inference capacity “just in case.”
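Cost attribution is, at its core, a group-by over billing line items. This is a minimal sketch, assuming each line item has already been parsed into a dict with a `cost` and a `tags` mapping (a hypothetical shape, not any provider's export schema). It rolls cost up by one tag key, reports how much spend is unattributed because the tag is missing, and lists which of the team/project/environment/owner tags an item lacks.

```python
from collections import defaultdict

# Tag keys every resource should carry (team, project, environment, owner).
REQUIRED_TAGS = ("team", "project", "environment", "owner")


def attribute_costs(line_items, key="team"):
    """Sum cost per value of tag `key`; items without the tag go to 'untagged'."""
    totals = defaultdict(float)
    for item in line_items:
        totals[item["tags"].get(key, "untagged")] += item["cost"]
    return dict(totals)


def untagged_share(line_items, key="team"):
    """Fraction of total spend that cannot be attributed via tag `key`."""
    total = sum(item["cost"] for item in line_items)
    if total == 0:
        return 0.0
    return attribute_costs(line_items, key).get("untagged", 0.0) / total


def missing_tags(item):
    """Required tag keys absent from one line item, for policy reports."""
    return [k for k in REQUIRED_TAGS if k not in item["tags"]]


items = [
    {"cost": 120.0, "tags": {"team": "search", "project": "ranker",
                             "environment": "prod", "owner": "ana"}},
    {"cost": 80.0, "tags": {"team": "ml", "environment": "dev"}},
    {"cost": 50.0, "tags": {}},
]
print(attribute_costs(items))  # {'search': 120.0, 'ml': 80.0, 'untagged': 50.0}
print(untagged_share(items))   # 0.2
print(missing_tags(items[1]))  # ['project', 'owner']
```

Tracking the untagged share over time is a simple visibility metric: if it is not shrinking, attribution gaps are hiding waste.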

Proof It’s Fixable: $100M Saved from a Single App

An insurance company cut $100 million in annual spend from a single application. It didn’t rely on exotic techniques, just standard FinOps practices: rightsizing instances, eliminating idle resources, buying reserved capacity for stable workloads, and implementing auto-scaling. The example is striking precisely because those steps are repeatable.

An enterprise Azure customer documented $22 million in savings, a 36% cost reduction, by systematically applying optimization recommendations. MakeMyTrip reduced compute costs by 22% through container optimization on Amazon ECS and EKS. Reco.se implemented observability and resource allocation strategies, cutting infrastructure expenses by 21% year-over-year. These aren’t vendor marketing claims. They’re documented case studies showing cloud waste is fixable at scale.

Organizations implementing structured optimization programs report 25-30% cost reductions within 3-6 months. Most achieve positive ROI within the first quarter, with ongoing annual savings of 25-35%. If a single insurance application yielded $100M in annual savings, how much waste is hiding in a typical enterprise portfolio? With payback that fast, the investment is a no-brainer.
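The “positive ROI within the first quarter” claim is easy to sanity-check against your own numbers. This sketch assumes a one-off program cost, a flat monthly cloud bill, and savings that ramp linearly to a target rate over a few months. Every input in the example is hypothetical and not drawn from any of the case studies.

```python
def payback_month(program_cost, monthly_spend, target_rate, ramp_months=3, horizon=24):
    """First month in which cumulative savings cover `program_cost`, or None.

    Savings ramp linearly from 0 to `target_rate` of `monthly_spend` over
    `ramp_months`, then stay flat (a simplifying assumption).
    """
    cumulative = 0.0
    for month in range(1, horizon + 1):
        rate = target_rate * min(month / ramp_months, 1.0)
        cumulative += monthly_spend * rate
        if cumulative >= program_cost:
            return month
    return None


# Hypothetical: $1M/month bill, 25% target savings, $400k program cost.
print(payback_month(400_000, 1_000_000, 0.25))  # 3
```

At these inputs the program pays for itself in month 3, within the first quarter; a slower ramp, a higher program cost, or a smaller bill pushes payback out.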

From Dashboards to Autopilot: FinOps Goes AI

Sixty percent of enterprises now use AI-assisted FinOps workflows, and 75% are projected to adopt automation by the end of 2026. This is a fundamental shift from reactive cost reporting (dashboards showing what you spent last month) to proactive, autonomous optimization, in which systems rightsize, shut down, and clean up resources without human intervention. The FinOps Foundation is blunt: “Dashboards are table stakes of yesterday—reactive. You have to move to proactive, real-time automation.”

Automation platforms use machine learning to continuously analyze utilization patterns, generate recommendations, and apply changes during maintenance windows. This solves the scalability problem that killed manual optimization: humans can’t review thousands of resources across dozens of accounts every week. Early adopters using automated rightsizing, scheduled shutdowns, and policy enforcement report an 80% reduction in time-to-remediation.
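The “recommend, then apply during maintenance windows” loop can be sketched independently of how the recommendations are produced. Here the `Recommendation` records are hypothetical stand-ins for the output of whatever model or provider tool generates them. Changes are applied only inside a fixed nightly window and only above a confidence threshold, and `dry_run` defaults to on so the loop can be trialed safely. The window and threshold values are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, time


@dataclass
class Recommendation:
    # Hypothetical shape for one optimization recommendation.
    resource_id: str
    action: str             # e.g. "rightsize", "stop", "delete_snapshot"
    monthly_savings: float  # estimated savings in dollars
    confidence: float       # 0.0-1.0, from whatever produced the recommendation


def in_window(now, start=time(2, 0), end=time(5, 0)):
    """True inside the maintenance window (assumes it does not cross midnight)."""
    return start <= now.time() < end


def remediate(recs, now, apply_fn, min_confidence=0.8, dry_run=True):
    """Apply high-confidence recommendations, biggest savings first.

    Returns the IDs that were applied (or would be, when dry_run is True).
    Low-confidence items are skipped and left for human review.
    """
    if not in_window(now):
        return []
    selected = []
    for rec in sorted(recs, key=lambda r: r.monthly_savings, reverse=True):
        if rec.confidence < min_confidence:
            continue
        if not dry_run:
            apply_fn(rec)
        selected.append(rec.resource_id)
    return selected


recs = [
    Recommendation("i-a", "rightsize", 300.0, 0.90),
    Recommendation("i-b", "stop", 900.0, 0.95),
    Recommendation("snap-c", "delete_snapshot", 50.0, 0.50),
]
applied = []
print(remediate(recs, datetime(2026, 3, 1, 3, 0), applied.append, dry_run=False))
# ['i-b', 'i-a']
```

Keeping the policy (window, threshold, ordering) separate from the recommendation source makes it possible to swap in a different optimizer without changing how changes reach production.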

This matters for platform engineers building internal developer platforms. Gartner forecasts that 80% of large organizations will have platform teams by 2026, and cost optimization is becoming part of their job. The tooling has matured from nice-to-have to essential. Just as CI/CD automated deployment pipelines, FinOps automation is making cost optimization continuous and autonomous.

What Actually Works: The Optimization Playbook

The most effective optimization follows a maturity progression: gain visibility, optimize manually, automate tactically, then deploy AI-assisted continuous optimization. Organizations that skip steps tend to fail, because you can’t automate what you can’t see.

- Tag everything for cost allocation from day one. Without tagging by team, project, environment, and owner, you can’t attribute costs or hold teams accountable.

- Implement scheduled shutdowns for dev/test environments. They don’t need to run 24/7, and automated schedules save 50-70% on non-production workloads.

- Use Reserved Instances and Savings Plans for stable, predictable workloads to capture 30-70% discounts.

- Deploy auto-scaling for variable demand to eliminate perpetual over-provisioning.

- Run automated cleanup scripts monthly for orphaned volumes, snapshots, and IP addresses to save 3-6%.
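The 50-70% figure for scheduled shutdowns follows from simple arithmetic on hours per week. This sketch assumes a weekday-only schedule with a fixed daily on-window (hypothetical defaults of 08:00-20:00, Monday to Friday) and counts compute hours only, ignoring storage, which typically keeps billing while instances are stopped. `should_run` is the kind of predicate a scheduler would check before starting or stopping an environment.

```python
from datetime import datetime

HOURS_PER_WEEK = 24 * 7


def schedule_savings(on_hours_per_day, workdays_per_week=5):
    """Fraction of always-on compute hours saved by a weekday-only schedule."""
    return 1 - (on_hours_per_day * workdays_per_week) / HOURS_PER_WEEK


def should_run(ts, start_hour=8, end_hour=20, workdays=range(5)):
    """True when a non-production environment should be up (Mon=0 ... Fri=4)."""
    return ts.weekday() in workdays and start_hour <= ts.hour < end_hour


for hours in (10, 12, 16):
    print(hours, f"{schedule_savings(hours):.0%}")
# 10 70%
# 12 64%
# 16 52%

print(should_run(datetime(2026, 3, 2, 9)))  # True (Monday 09:00)
```

A 12-hour weekday schedule runs 60 of 168 weekly hours, saving about 64%; the 50-70% range corresponds roughly to weekday windows of 10 to 16 hours.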

Start simple. Don’t wait for perfect visibility to act on obvious waste. Shutting down forgotten dev instances saves 5-10% immediately. Then build better analytics and progressively automate. Most teams over-think this—the quick wins are obvious once you look.

Are Cloud Providers Incentivized to Keep You Wasteful?

All major cloud providers—AWS, Azure, GCP—offer optimization tools. AWS Compute Optimizer analyzes utilization and recommends rightsizing. Azure Advisor integrates with Cost Management for VM optimization suggestions. GCP Recommender identifies idle resources and suggests committed use discounts. Yet industry average waste remains 28-35%, and it just increased for the first time in five years.

The question: are providers truly incentivized to minimize your spending, or just to reduce it enough to keep you from leaving? Cloud providers make more money when customers waste resources. They provide tools because satisfied customers stay, but the tools are advisory; they don’t aggressively automate implementation. That may explain the appeal of third-party AI-assisted FinOps platforms over relying solely on provider-native optimization. The tooling exists. The incentive alignment doesn’t.

Key Takeaways

- Cloud waste hit 29% in 2026 (Flexera), the first increase in five years, driven by AI workload over-provisioning. StormForge reports 47% average waste in its survey of 134 IT professionals.

- The waste is fixable: idle resources (10-15%), over-provisioned compute (10-12%), orphaned storage (3-6%). Organizations implementing FinOps programs achieve 25-30% cost reduction in 3-6 months.

- Real-world proof: $100M saved from one insurance app, $22M saved by an enterprise Azure customer (36% reduction), and a 22% compute cost reduction at MakeMyTrip.

- FinOps automation is the inflection point: 60% of enterprises now use AI-assisted workflows, with 75% projected to adopt automation by the end of 2026. Manual optimization doesn’t scale.

- Platform engineers own this now: with Gartner forecasting that 80% of large organizations will have platform teams by 2026, cost optimization is becoming their responsibility alongside developer experience and reliability.

The data is clear: cloud waste is massive, growing, and fixable. Start with visibility through tagging, eliminate obvious idle resources, then progressively automate. With most programs reaching positive ROI within the first quarter and cutting costs 25-30% within 3-6 months, this is essential, not optional.