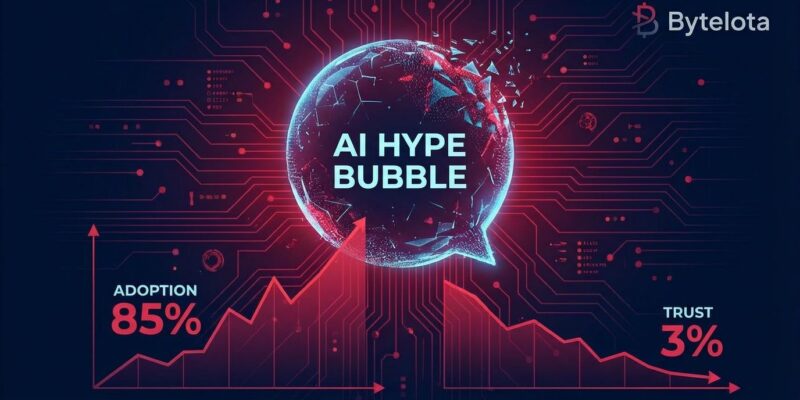

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, and inadequate risk controls. This isn’t happening despite high adoption; it’s happening because of it. Stack Overflow’s 2025 survey shows 84% of developers now use AI tools, yet only 3% highly trust the output. Goldman Sachs asks whether generative AI is “too much spend, too little benefit.” Sequoia Capital asks “Where is all the revenue?” and warns the AI bubble is “reaching a tipping point.” The gap between AI adoption (84%) and high trust in AI output (3%) tells the real story: developers use these tools daily but don’t believe they work well.

85% Adoption, 3% Trust: The Numbers Don’t Add Up

Developer AI adoption has reached 84-85% according to Stack Overflow and JetBrains surveys from 2025, with 51% using AI daily. Yet only 3% of developers “highly trust” AI-generated output. More concerning: 46% actively distrust AI accuracy—outnumbering the 33% who trust it. Positive sentiment dropped from 70%+ in 2023-2024 to just 60% in 2025.

The top frustration reveals why: 66% of developers say AI delivers “solutions that are almost right, but not quite.” The second frustration is that “debugging AI-generated code is more time-consuming” (45%). Experienced developers show the greatest skepticism: only 2.5% highly trust AI-generated results. High adoption doesn’t mean high value; it may simply mean developers feel pressured to use tools they don’t trust. When two-thirds say AI code is “almost right, but not quite,” that’s not a productivity gain. It’s a debugging nightmare.

Why 40% Will Fail: Costs, ROI, and Risk

Gartner’s June 2025 prediction names three specific reasons for cancellation: escalating costs, unclear business value, and inadequate risk controls. The research firm also exposed a dirty secret: by its estimate, only about 130 of the thousands of “agentic AI vendors” are real. The rest are “agent washing”: rebranding chatbots and RPA as autonomous agents without the capabilities to back it up.

Investment data supports the pessimism. Gartner’s January 2025 poll of 3,412 webinar attendees found that 19% of organizations had made significant investments in agentic AI, 42% had made conservative investments, and 31% were in wait-and-see mode. Gartner’s analysts explain: “Most agentic AI projects right now are early stage experiments or proof of concepts that are mostly driven by hype and are often misapplied. This can blind organizations to the real cost and complexity of deploying AI agents at scale.” The cancellations are coming because the math doesn’t work: high costs + unclear ROI + risk issues = project failure.

Goldman Sachs and Sequoia Question the Business Case

Goldman Sachs’ equity research titled “Gen AI: too much spend, too little benefit?” argues that “AI technology is exceptionally expensive, and to justify those costs, the technology must be able to solve complex problems, which it isn’t designed to do.” Sequoia Capital echoed this, questioning “Where is all the revenue?” and warning that “the AI bubble is reaching a tipping point” after massive infrastructure spending produced no proportional revenue.

MIT Technology Review adds that AI “systems do not have judgment, cannot tell right from wrong, and cannot tell true from false.” Harvard’s Ash Center argues that the damage will outlast the hype: even as the bubble deflates, “carbon can’t be put back in the ground, workers continue to face AI’s disciplining pressures, and the poisonous effect on our information commons will be hard to undo.”

When top-tier investment banks and VCs question AI’s business case, developers should pay attention. These aren’t random skeptics—they’re the firms funding AI startups and advising enterprises. If they can’t find the revenue, your AI project might be in the 40% that gets canceled.

The Divide: Code Completion Works, Autonomous Agents Fail

AI succeeds at narrow, low-stakes tasks but fails at ambitious, autonomous ones. Stack Overflow data shows developers confidently use AI for searching answers (54%), generating test data (36%), learning new tech (33%), and documentation (31%). But most say they won’t use AI for deployment and monitoring (76%), project planning (69%), or code review and commits (59%).

The pattern is consistent: autocomplete and code completion tools work well because they’re simple, narrow, and easy to verify. Autonomous agents attempting end-to-end workflows often fail. Even autocomplete has limits: one CTO reported it would “fail catastrophically” on complex tasks. Another noted Claude Code completed a 4-hour task in 2 minutes with better quality, but such successes appear to be exceptions rather than typical performance.

This data gives developers a clear roadmap: use AI for autocomplete, docs, and search. Avoid it for deployment, planning, and high-stakes decisions. The 40% cancellation prediction applies to ambitious autonomous projects, not simple code completion tools.
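One way a team might encode this roadmap is as an explicit task policy. The sketch below is only an illustration: the task names, the `Policy` enum, and the `policy_for` helper are hypothetical and loosely mirror the survey categories above; they do not come from any tool or from the survey itself.

```python
from enum import Enum


class Policy(Enum):
    ASSIST = "assist"          # AI may draft; a developer reviews the result
    HUMAN_ONLY = "human_only"  # keep AI out of this task


# Hypothetical task categories, loosely following the survey's groupings.
TASK_POLICY = {
    "autocomplete": Policy.ASSIST,
    "search": Policy.ASSIST,
    "test_data": Policy.ASSIST,
    "documentation": Policy.ASSIST,
    "deployment": Policy.HUMAN_ONLY,
    "monitoring": Policy.HUMAN_ONLY,
    "project_planning": Policy.HUMAN_ONLY,
}


def policy_for(task: str) -> Policy:
    """Look up the policy for a task; unknown tasks default to HUMAN_ONLY."""
    return TASK_POLICY.get(task, Policy.HUMAN_ONLY)
```

Defaulting unknown tasks to `HUMAN_ONLY` is a deliberate design choice in this sketch: anything not explicitly cleared for AI assistance stays with a person.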

How to Avoid the 40%

Projects that will survive focus on domain-specific AI with clear ROI metrics, seamless workflow integration, and human-in-the-loop verification. Projects that will fail are generic, hype-driven, with poor integration and inflated expectations. The key is treating AI as an assistant, not a replacement, and accepting longer ROI timelines than traditional tech.

Lenovo’s global CIO Arthur Hu says “patience remains a virtue” in AI investing, noting that “the ROI time horizon for generative AI is longer than other technology applications.” Analysis shows domain-specific AI—accounting, legal, medical with industry expertise—outperforms generic solutions. Human verification is non-negotiable: 75% of developers still ask people when they don’t trust AI answers.
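Human-in-the-loop verification can be as simple as a gate that refuses to apply an AI suggestion until a person approves it. The following is a minimal sketch under that assumption; `Suggestion`, `apply_with_review`, and the stand-in reviewer are hypothetical names invented for illustration, and a real workflow would route the review through a pull request or similar process.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Suggestion:
    """An AI-generated change awaiting human review (hypothetical type)."""
    task: str
    content: str


def apply_with_review(
    suggestion: Suggestion,
    reviewer: Callable[[Suggestion], bool],
    apply: Callable[[Suggestion], None],
) -> bool:
    """Apply the suggestion only if the reviewer approves it."""
    if reviewer(suggestion):
        apply(suggestion)
        return True
    return False


# Usage: a stand-in reviewer that rejects anything touching deployment.
applied: List[str] = []


def cautious_reviewer(s: Suggestion) -> bool:
    return s.task != "deployment"


def record(s: Suggestion) -> None:
    applied.append(s.content)


doc_ok = apply_with_review(Suggestion("documentation", "Add README section"), cautious_reviewer, record)
deploy_ok = apply_with_review(Suggestion("deployment", "Roll out to prod"), cautious_reviewer, record)
```

The point of the gate is that the approval step cannot be skipped: nothing reaches `apply` without passing through the reviewer first.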

Key takeaways:

- The adoption-trust paradox (85% usage, 3% high trust) helps explain why 40% of agentic AI projects are predicted to be canceled: high adoption doesn’t equal high value

- Gartner’s three cancellation reasons are specific: escalating costs, unclear business value, inadequate risk controls—not vague concerns

- Goldman Sachs and Sequoia question the business case: “exceptionally expensive” with unclear revenue is a red flag

- Code completion and narrow AI tools succeed; developers overwhelmingly reject AI for deployment, monitoring, and planning, where autonomous agents often fail

- Survival strategy: domain-specific AI, clear ROI metrics, human-in-the-loop verification, and realistic timelines—treat AI as assistant, not replacement