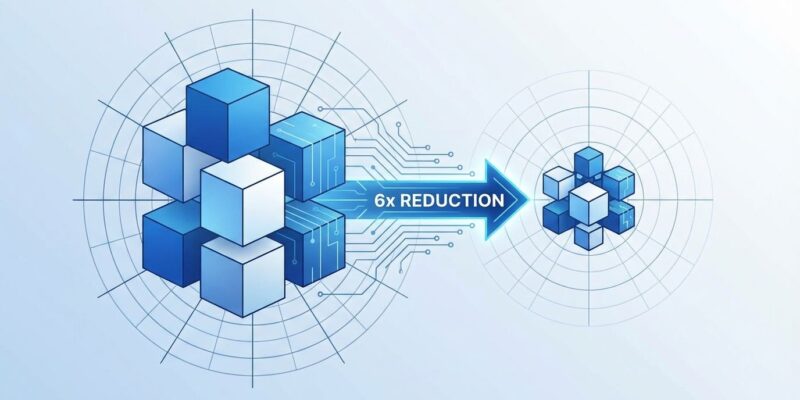

Google Research announced today a compression algorithm that reduces AI model Key-Value (KV) cache memory by 6x and delivers up to 8x speedup on NVIDIA H100 GPUs, all without measurable accuracy loss. TurboQuant addresses a bottleneck that keeps large language models off consumer hardware: memory constraints under which the KV cache alone of a 70-billion-parameter model with a 32,000-token context consumes more than 85GB of GPU memory in FP16 precision.

The achievement matters because memory bandwidth, not compute power, decides whether AI inference is feasible. While marketing teams obsess over TOPS benchmarks, engineers know the truth: compute units sit idle waiting for data.

Memory Bottleneck: The Real Constraint

Memory bandwidth is the decisive limitation for AI inference. Mobile devices offer 50-90 GB/s while data center accelerators provide 2-3 TB/s, a 30-50x gap that leaves on-device inference memory-bound regardless of available compute. Mobile NPUs deliver an impressive 35-60 TOPS, but they starve waiting for weights.
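A rough way to see why bandwidth dominates: during token-by-token generation, each new token has to stream the model's weights from memory, so bandwidth divided by bytes read per token gives a ceiling on decode speed. The sketch below is a back-of-envelope estimate only. The 7B-parameter, 4-bit model is a hypothetical example not taken from the announcement, and `max_decode_tokens_per_second` is an illustrative helper that ignores KV cache reads, caching effects, and batching.

```python
def max_decode_tokens_per_second(bandwidth_gb_s: float, gb_read_per_token: float) -> float:
    """Upper bound on decode speed when each token must stream this much data from memory."""
    return bandwidth_gb_s / gb_read_per_token


# Assumption: a 7B-parameter model at 4 bits (0.5 bytes) per weight, about 3.5 GB.
model_gb = 7e9 * 0.5 / 1e9

mobile = max_decode_tokens_per_second(70, model_gb)        # midpoint of 50-90 GB/s
datacenter = max_decode_tokens_per_second(2500, model_gb)  # midpoint of 2-3 TB/s

print(f"mobile: ~{mobile:.0f} tok/s, data center: ~{datacenter:.0f} tok/s")
```

Under these assumptions the ceiling is about 20 tokens per second on mobile-class memory and about 714 on data-center-class memory, whatever the TOPS rating of the compute units.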

The Key-Value cache amplifies this problem. Transformer models store attention keys and values for every previous token to accelerate generation, and this cache grows linearly with context length. Llama-2-70B processing a 32k context window requires more than 85GB of GPU memory just for the KV cache in FP16 format—exceeding the capacity of most consumer GPUs and many data center accelerators.
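The linear growth can be checked with a few lines of arithmetic. The sketch below computes FP16 KV cache size from a model's shape. The layer count, head counts, and head dimension are assumptions taken from the publicly documented Llama-2-70B configuration rather than from the announcement, and `kv_cache_bytes` is a hypothetical helper.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value=2, batch=1):
    """Size of the KV cache: one key and one value vector per layer, head, and token."""
    keys_and_values = 2
    return keys_and_values * layers * kv_heads * head_dim * tokens * bytes_per_value * batch


# Assumed Llama-2-70B shape: 80 layers, 64 attention heads, head dimension 128.
all_heads = kv_cache_bytes(layers=80, kv_heads=64, head_dim=128, tokens=32_768)

# Assumption: with grouped-query attention only 8 key-value heads are cached.
grouped = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, tokens=32_768)

print(f"all heads cached: {all_heads / 1e9:.1f} GB")
print(f"8 KV heads (GQA): {grouped / 1e9:.1f} GB")
```

Caching all 64 heads gives about 85.9GB, which is consistent with the figure quoted above. With 8 key-value heads under grouped-query attention, the same context would need about 10.7GB, so the exact number depends on which attention layout is assumed.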

With 72% of enterprises running production AI workloads as of Q1 2026, these constraints aren’t theoretical. They determine which models deploy, how long contexts can extend, and whether inference runs in the cloud or on-device.

How TurboQuant Compression Works

TurboQuant uses a two-stage compression process that fundamentally differs from standard quantization. Stage one, PolarQuant, converts vectors from Cartesian to polar coordinates, representing data as radius and angle rather than x-y positions. The key insight: angular patterns in the vectors being compressed are concentrated and predictable, allowing the algorithm to exploit this geometric property and eliminate expensive normalization steps that traditional quantization requires.
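To illustrate the coordinate change only, the following toy sketch pairs up vector entries, converts each pair to a radius and an angle, and stores the angle in a fixed number of bits. This is not the published PolarQuant algorithm, which is more involved. The uniform angle bins, the full-precision radius, and all function names here are assumptions made for illustration.

```python
import math


def quantize_angle(theta: float, bits: int) -> int:
    """Map an angle in [-pi, pi] to one of 2**bits uniformly spaced bins."""
    levels = 2 ** bits
    step = 2 * math.pi / levels
    return int((theta + math.pi) // step) % levels


def dequantize_angle(code: int, bits: int) -> float:
    """Return the centre of the bin identified by code."""
    step = 2 * math.pi / (2 ** bits)
    return -math.pi + (code + 0.5) * step


def roundtrip(vec, bits=3):
    """Quantize the angle of each (x, y) pair in an even-length vector, then reconstruct."""
    out = []
    for i in range(0, len(vec), 2):
        radius = math.hypot(vec[i], vec[i + 1])     # kept at full precision in this toy
        theta = math.atan2(vec[i + 1], vec[i])
        theta_hat = dequantize_angle(quantize_angle(theta, bits), bits)
        out += [radius * math.cos(theta_hat), radius * math.sin(theta_hat)]
    return out


original = [1.0, 0.0, 0.0, 2.0, -3.0, -4.0]
print(roundtrip(original, bits=3))
```

In this toy version the length of each pair is preserved exactly and only its direction is rounded, by at most half a bin width.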

Stage two, QJL (Quantized Johnson-Lindenstrauss), applies the Johnson-Lindenstrauss Transform to residual errors, reducing each remaining value to a single sign bit—positive or negative. This step carries zero memory overhead while correcting bias from the first stage.
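The sign-bit idea can be sketched in isolation. The example below projects a key vector onto random Gaussian directions, keeps only the sign of each projection, and then estimates the inner product with an unquantized query. It is a minimal illustration of a sign-quantized random projection, not the TurboQuant implementation: it is applied to a raw vector rather than to stage-one residuals, it stores the key's norm alongside the bits, and the function names, dimensions, and seed are assumptions.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def qjl_encode(key, projections):
    """Keep one sign bit per random projection of the key, plus the key's norm."""
    signs = [1 if dot(p, key) >= 0 else -1 for p in projections]
    return signs, math.sqrt(dot(key, key))


def qjl_inner_product(query, signs, key_norm, projections):
    """Estimate <query, key> from the sign bits and the unquantized query."""
    total = sum(s * dot(p, query) for s, p in zip(signs, projections))
    # For Gaussian p: E[sign(p.k) * (p.q)] = sqrt(2/pi) * <q, k> / ||k||
    return math.sqrt(math.pi / 2) * key_norm * total / len(projections)


rng = random.Random(0)
dim, num_projections = 16, 4096
projections = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_projections)]
key = [rng.uniform(-1, 1) for _ in range(dim)]
query = [rng.uniform(-1, 1) for _ in range(dim)]

signs, key_norm = qjl_encode(key, projections)
exact = dot(query, key)
estimate = qjl_inner_product(query, signs, key_norm, projections)
print(f"exact={exact:.3f} estimate={estimate:.3f}")
```

The estimate is unbiased and its error shrinks as the number of projections grows, which is why a sign-bit stage can correct bias left over from a coarser first stage.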

The result: 3-bit compression with no measurable accuracy loss across question answering, code generation, and summarization tasks. No retraining. No fine-tuning. The algorithm is data-oblivious and operates near theoretical lower bounds according to the ICLR 2026 paper.

Beating the GPTQ and AWQ 4-Bit Standard

The AI industry converged on 4-bit quantization as the deployment standard. GPTQ and AWQ, with more than 19 million combined downloads, compress models to 4 bits with typical accuracy degradation of 1-5%. GPTQ requires 2-4 hours of calibration for a 7-billion-parameter model. AWQ performs better—10-30 minutes calibration, 95-99% accuracy retention, and 1.3-1.5x faster inference than FP16—but still incurs quality loss.

TurboQuant’s 3-bit compression represents a 25% additional memory reduction beyond the 4-bit standard (3 bits per value instead of 4). More importantly: zero accuracy loss where existing methods trade performance for size, and zero calibration time where GPTQ burns hours.

The memory reduction compounds. Because the 6x saving applies to the KV cache rather than to the model weights, it means extending context windows up to 6x longer within the same cache budget, processing up to 6x larger batches per inference server, or freeing memory that can go toward a larger model on the same hardware. Each multiplier translates directly to deployment economics.

Real-World Deployment Economics

Memory efficiency determines deployment costs. Companies implementing dynamic batching and smaller models report 40% cloud cost reductions. TurboQuant’s 6x memory reduction allows sub-30B models to break even versus cloud APIs within three months, and enables 70B models to run on two A100-80GB GPUs costing $30,000 instead of requiring H100 clusters.

For context: Cloud APIs charge $0.50-$3.00 per million tokens for Gemini 3 Flash and $1.00-$5.00 for Claude Haiku 4.5. At scale, the $50+ billion inference chip market in 2026 reflects how much organizations are spending to make AI inference feasible.
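A break-even claim of this kind comes down to simple arithmetic: hardware cost divided by the monthly API spend it replaces. The sketch below uses the $30,000 hardware figure and the $5.00 upper-end price quoted above. The monthly volume of 2,000 million tokens and the zero operating cost are hypothetical inputs chosen for illustration, and `breakeven_months` is an illustrative helper, not a pricing model.

```python
import math


def breakeven_months(hardware_cost_usd, monthly_tokens_millions,
                     api_price_per_million_usd, monthly_operating_cost_usd=0.0):
    """Months until avoided API spend covers the up-front hardware cost."""
    monthly_savings = (monthly_tokens_millions * api_price_per_million_usd
                       - monthly_operating_cost_usd)
    if monthly_savings <= 0:
        return math.inf  # self-hosting never pays off at this volume
    return hardware_cost_usd / monthly_savings


# Assumptions: 2,000 million tokens per month, $5.00 per million, no operating cost.
months = breakeven_months(30_000, 2_000, 5.00)
print(f"break-even after {months:.1f} months")
```

With these inputs the hardware pays for itself in three months. Lower volumes, cheaper API tiers, or realistic power and staffing costs push the break-even point out accordingly.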

TurboQuant’s compression enables consumer deployment scenarios that weren’t viable before. That 85GB Llama-2-70B KV cache becomes approximately 14GB—fitting comfortably on high-end consumer GPUs. Extended context windows of 100,000+ tokens become accessible on affordable hardware instead of requiring specialized infrastructure.
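The arithmetic behind these figures is a single division, sketched below with the article's own numbers. The constant and function names are illustrative, and the calculation covers the KV cache only. Model weights need their own memory on top of it.

```python
FP16_CACHE_GB = 85.0       # reported FP16 KV cache for a 32k-token context
CONTEXT_TOKENS = 32_000
COMPRESSION_RATIO = 6.0    # reported KV cache reduction

compressed_gb = FP16_CACHE_GB / COMPRESSION_RATIO


def tokens_within_budget(budget_gb, cache_gb, cache_tokens):
    """Tokens whose cache fits in budget_gb, given that cache_tokens tokens need cache_gb."""
    return round(budget_gb * cache_tokens / cache_gb)


before = tokens_within_budget(FP16_CACHE_GB, FP16_CACHE_GB, CONTEXT_TOKENS)
after = tokens_within_budget(FP16_CACHE_GB, compressed_gb, CONTEXT_TOKENS)

print(f"compressed cache: {compressed_gb:.1f} GB")
print(f"tokens in an 85 GB cache budget: {before} -> {after}")
```

The compressed cache comes to about 14.2GB, and the same 85GB budget that held 32,000 tokens would hold 192,000 after compression.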

Developer Skepticism and Concerns

The impressive benchmarks come with caveats. Developers on Hacker News immediately questioned the claims. The paper reports accuracy-versus-space metrics but “conveniently avoids reporting inference wall-clock time,” one commenter noted. Several developers argued that “polar coordinates are absolute poison for parallel GPU compute,” questioning whether theoretical compression translates to real-world performance on modern architectures.

Others pointed out that baseline numbers “look seriously underreported” and flagged missing citations to 2021 NeurIPS research on geometric rotation techniques. The Google Research blog post itself drew criticism as “AI-generated slop” with awkward phrasing and repetitive emphasis on “zero overhead.”

The rapid response tells both sides of the story. Implementations appeared in llama.cpp within hours of the announcement—a strong signal of developer interest despite skepticism. Real-world validation remains pending. The critical test: whether GPU performance matches the theoretical claims when engineers try TurboQuant in production workloads.

What Comes Next for Model Compression

TurboQuant will be presented at ICLR 2026 with the companion PolarQuant paper appearing at AISTATS 2026. The theoretical foundation is solid—operating near provable lower bounds—but production adoption depends on whether the polar coordinate approach performs well on parallel GPU architectures.

If the speedup claims hold under real-world conditions, 3-bit quantization could become the new deployment standard, replacing the current 4-bit baseline. Watch for integrations into production inference frameworks like vLLM, Text Generation Inference, and llama.cpp. The same-day llama.cpp implementation suggests the open-source community is ready to validate the claims quickly.

The broader implication: We’re approaching practical limits of model compression without fundamentally rethinking model architectures. TurboQuant may represent one of the last major compression gains achievable through quantization alone. Beyond this point, efficiency improvements will require different approaches—sparsity, mixture-of-experts, or architectural innovations we haven’t seen yet.