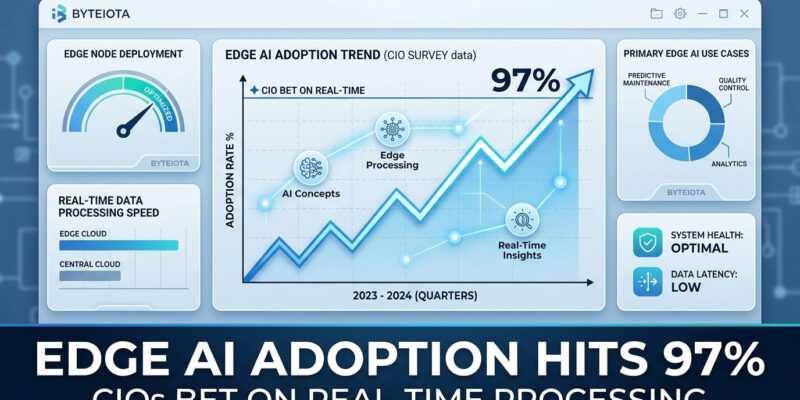

Edge AI Adoption Hits 97%: CIOs Bet on Real-Time Processing

Edge AI crossed from emerging technology to enterprise mandate in 2026. ZEDEDA’s survey of 301 US CIOs found that 97% have Edge AI either deployed or on their roadmap; only 3% have no plans. This isn’t pilot purgatory, either: 30% report full deployment, 22% are in limited production, and 34% are testing with plans to deploy within 24 months. The reported returns explain why. Manufacturing operations report 40% reductions in downtime, retail achieves real-time inventory accuracy that cloud-only architectures struggle to deliver, and healthcare enables patient monitoring with millisecond-scale response times that save lives.

This represents a fundamental architectural shift: inference is moving from cloud to edge, driven by latency requirements (autonomous vehicles need sub-10ms), bandwidth costs (a 95% reduction from processing locally), and privacy mandates (such as HIPAA compliance). Enterprise budgets confirm the shift: 90% of organizations are increasing Edge AI spending, with 30% reporting budget increases of 25% or more.

Manufacturing, Retail, Healthcare Lead Edge AI Deployment

Industry leaders aren’t testing anymore; they’re deploying at scale. Manufacturing operations hit 40% full deployment, retail reaches 50%, and healthcare shows 90% implementation rates. These numbers show where ROI is proven and replicable.

Manufacturing operations implementing edge-based predictive maintenance reduce unplanned downtime by 40% through real-time anomaly detection. Computer vision systems inspect 100+ parts per minute at production line speed, detecting defects in milliseconds. Meanwhile, equipment sensors predict failures before downtime occurs. The ROI timeline is concrete: quality inspection and predictive maintenance projects show 6-12 month payback, while complex multi-process implementations require 18-24 months.

Retail’s 50% deployment rate is driven by automated checkout and inventory management. Computer vision tracks products picked and returned for cashierless checkout. AI cameras scan shelves, detect out-of-stock items, and trigger replenishment in real time. The economic case is straightforward: processing video locally and sending only insights to the cloud reduces bandwidth costs by 95%.

Healthcare’s 90% implementation rate reflects life-or-death latency requirements. Wearables monitor ECG and glucose continuously, detecting arrhythmias or hypoglycemia on-device. ICU systems detect sepsis and cardiac arrest up to 6 hours before crisis. CT scanners flag intracranial hemorrhages instantly. Edge AI systems achieve 15-45ms response times, enabling real-time intervention and preventing 70-85% of the safety incidents that cloud-based systems detect too late to address.

Why Inference Is Moving from Cloud to Edge

The architectural shift isn’t theoretical; it’s economic and operational. Hybrid cloud-edge architecture is now used by 47% of enterprises, with inference moving to the edge while the cloud retains training and analytics. The driver is latency: edge systems cut response time from 100ms+ (cloud round-trip) to single-digit milliseconds.

Latency requirements rule out cloud-only architectures for entire categories of applications. Autonomous vehicles need sub-10ms response. Industrial automation requires sub-5ms for safety-critical controls. Augmented reality demands sub-20ms for user experience. Healthcare diagnostics need 15-45ms for real-time intervention. At 100ms or more, a cloud round trip exceeds every one of these budgets, and the tightest of them by an order of magnitude or more.
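As a rough illustration of how those budgets drive placement, the sketch below compares each latency budget against an assumed cloud round trip. The dictionary keys, the `placement` function, and the 100ms default are illustrative assumptions built from the figures in this article, not a measured benchmark.

```python
# Latency budgets in milliseconds, taken from the figures quoted in the text.
# "Sub-N ms" is treated as an upper bound of N; for the 15-45 ms healthcare
# range, the strictest end (15 ms) is used.
LATENCY_BUDGET_MS = {
    "autonomous_vehicle": 10,
    "industrial_safety_control": 5,
    "augmented_reality": 20,
    "healthcare_diagnostics": 15,
}

# Assumed cloud round trip, matching the "100ms+" figure cited in the article.
CLOUD_ROUND_TRIP_MS = 100.0


def placement(workload: str, cloud_round_trip_ms: float = CLOUD_ROUND_TRIP_MS) -> str:
    """Return "edge" when the cloud round trip alone exceeds the budget."""
    budget = LATENCY_BUDGET_MS[workload]
    return "edge" if cloud_round_trip_ms > budget else "cloud"


for name in LATENCY_BUDGET_MS:
    print(f"{name}: {placement(name)}")
```

With the assumed 100ms round trip, every workload in the table lands on the edge. Passing a smaller `cloud_round_trip_ms` (for example, a nearby regional endpoint) shows where the decision flips.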

Bandwidth economics reinforce the shift. Video surveillance, IoT sensor networks, and manufacturing telemetry generate massive data volumes. Processing locally and sending only events or metadata to the cloud reduces transmission costs by 95%. As a result, organizations avoid the data transfer and storage costs that made high-volume data streams economically unviable.
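To make the 95% figure concrete, here is a back-of-the-envelope calculation. The camera count and per-camera bitrate are made-up inputs chosen for illustration; only the 95% reduction comes from the article, and the sketch assumes cost scales linearly with the volume uploaded.

```python
def monthly_upload_gb(cameras: int, mbps_per_camera: float, days: int = 30) -> float:
    """Total upload volume in decimal gigabytes for continuous streaming."""
    seconds = days * 24 * 3600
    megabits = cameras * mbps_per_camera * seconds
    return megabits / 8 / 1000  # megabits -> megabytes -> gigabytes


# Hypothetical site: 100 cameras streaming at 4 Mbps each, around the clock.
raw_gb = monthly_upload_gb(cameras=100, mbps_per_camera=4.0)

# Reduction quoted in the article when only events or metadata leave the site.
REDUCTION = 0.95
edge_gb = raw_gb * (1 - REDUCTION)

print(f"Raw video to cloud:   {raw_gb:,.0f} GB/month")
print(f"Edge-filtered upload: {edge_gb:,.0f} GB/month")
```

Under these assumptions the raw stream comes to 129,600 GB a month, and the edge-filtered stream to about 6,480 GB.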

Privacy mandates complete the picture. Healthcare patient data must comply with HIPAA. Financial transactions require PCI-DSS. Proprietary manufacturing processes stay on-premises. Streaming raw data to the cloud widens compliance exposure, while processing locally keeps regulated data on-site and improves performance at the same time.

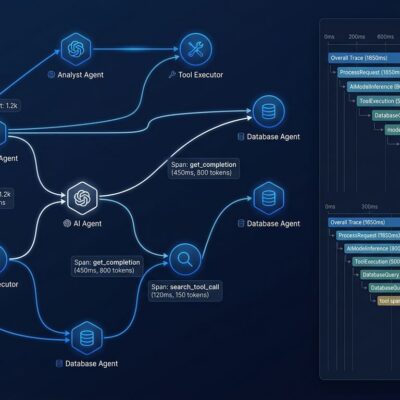

The hybrid pattern enterprises actually use: train models in cloud (unlimited compute, data aggregation), deploy inference to edge (latency, privacy, cost), sync insights back to cloud (long-term analytics). This isn’t edge replacing cloud—it’s a fundamental rethinking of where compute happens based on workload characteristics.
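The infer-locally, sync-insights pattern can be sketched in a few lines. Everything here is a stand-in: `EdgeNode`, its `model` callable (a simple threshold in place of a cloud-trained model), and the `upload` callable (in place of a real uplink client) are hypothetical names used only to show the data flow.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class EdgeNode:
    # Stand-in for a model trained in the cloud and shipped to the device.
    model: Callable[[float], bool]
    # Hypothetical uplink; a real deployment would use a network client here.
    upload: Callable[[List[dict]], None]
    batch_size: int = 3
    _pending: List[dict] = field(default_factory=list)

    def handle(self, reading: float) -> bool:
        """Run inference locally; queue only flagged readings for the cloud."""
        flagged = self.model(reading)
        if flagged:
            self._pending.append({"reading": reading, "anomaly": True})
            if len(self._pending) >= self.batch_size:
                self.flush()
        return flagged

    def flush(self) -> None:
        """Send queued insights upstream and clear the local buffer."""
        if self._pending:
            self.upload(list(self._pending))
            self._pending.clear()


received: List[dict] = []  # plays the role of the cloud analytics store
node = EdgeNode(model=lambda x: x > 0.8, upload=received.extend)
for value in [0.1, 0.9, 0.2, 0.95, 0.3, 0.85, 0.4]:
    node.handle(value)
node.flush()
print(f"{len(received)} of 7 readings left the device")
```

Only the three readings above the threshold are uploaded; the raw stream never leaves the device, which is the property that serves the latency, privacy, and cost goals at once.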

Edge Orchestration: Where Deployments Get Hard

ROI is proven, but deployment complexity remains the primary obstacle. “Edge Orchestration”—deploying, updating, and securing AI models across thousands of heterogeneous devices—is where ambitious projects fail. Edge environments feature diverse hardware (x86, ARM, GPUs, NPUs), operating systems, power constraints, and network reliability issues.

The gap between pilot and production is massive: deploying to one device is straightforward, but managing updates, security, and monitoring across thousands is far harder. Without edge orchestration platforms like ZEDEDA, AWS IoT Greengrass, or Azure IoT Edge, operational costs for provisioning, troubleshooting, and patching become unsustainable.
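One common way to contain fleet-wide update risk is a staged (canary) rollout: update a small wave first and halt if too many devices fail. The sketch below is a generic illustration of that idea, not the API of any platform named above; `staged_rollout`, `apply_update`, and the device names are hypothetical.

```python
from typing import Callable, Dict, List


def staged_rollout(
    devices: List[str],
    apply_update: Callable[[str], bool],
    wave_sizes: List[int],
    max_failure_rate: float = 0.1,
) -> Dict[str, List[str]]:
    """Update devices wave by wave; stop when a wave fails too often."""
    result: Dict[str, List[str]] = {"updated": [], "failed": [], "skipped": []}
    remaining = list(devices)
    for size in wave_sizes:
        wave, remaining = remaining[:size], remaining[size:]
        if not wave:
            break
        failures = 0
        for device in wave:
            if apply_update(device):
                result["updated"].append(device)
            else:
                result["failed"].append(device)
                failures += 1
        if failures / len(wave) > max_failure_rate:
            break  # halt: the rest of the fleet stays on the old version
    # Devices never reached, whether due to a halt or to short wave_sizes.
    result["skipped"] = remaining
    return result


fleet = [f"device-{i:03d}" for i in range(20)]
# Hypothetical updater: pretend two devices reject the new model.
bad = {"device-005", "device-006"}
outcome = staged_rollout(fleet, lambda d: d not in bad, wave_sizes=[2, 8, 10])
print({k: len(v) for k, v in outcome.items()})
```

In this run the two-device canary wave succeeds, the second wave exceeds the 10% failure threshold, and the final ten devices are never touched. That containment is what a one-shot push to the whole fleet gives up.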

Here’s the paradox: 53% of respondents cite improving security and data privacy as the No. 1 reason for Edge AI investments, yet 42% identify security risks and data protection as the most significant deployment challenge. Securing thousands of distributed edge devices is harder than securing centralized cloud infrastructure. The answer isn’t abandoning edge; it’s investing in proper orchestration tooling and operational processes.

This complexity explains the ROI timeline variance. Proven use cases like quality inspection and predictive maintenance deliver ROI in 6-12 months because deployment patterns are established. Complex multi-process implementations require 18-24 months as organizations build expertise and tooling. The message from industry analysts is clear: “Many overly ambitious edge AI projects will give way to targeted, efficient initiatives designed to deliver measurable business outcomes.”

What Edge AI Adoption Means for Developer Careers

Edge AI represents a major skills shift and career opportunity. With 90% of enterprises increasing budgets and adoption reaching 97%, demand is surging for developers who understand edge-aware architecture. The market is growing from $24.91 billion in 2025 to a projected $143 billion by 2034—a trajectory mirroring cloud computing’s rise 15 years ago.
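For a sense of the pace that projection implies, the compound annual growth rate can be worked out from the two figures above. This is plain arithmetic on the quoted numbers, assuming nine compounding years between 2025 and 2034.

```python
start_value = 24.91  # USD billions, 2025 (figure quoted in the article)
end_value = 143.0    # USD billions, 2034 (projection quoted in the article)
years = 2034 - 2025

# CAGR = (end / start) ** (1 / years) - 1
cagr = (end_value / start_value) ** (1 / years) - 1
print(f"Implied CAGR: {cagr:.1%}")
```

That works out to roughly 21% a year.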

Key skills in demand: model optimization (quantizing models from FP32 to INT8, pruning, efficient architectures like MobileNet), edge orchestration (managing deployment and updates across distributed fleets), real-time inference (sub-10ms processing for safety-critical applications), and hybrid architecture design (knowing when to process at edge versus cloud).
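To show what FP32-to-INT8 quantization means in practice, here is a minimal pure-Python sketch of symmetric per-tensor quantization. It is a teaching example under simplifying assumptions (one scale for the whole tensor, no calibration data); production toolchains use more elaborate schemes, and the function names here are illustrative.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step, then clamp to the INT8 range used.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer codes."""
    return [v * scale for v in q]


weights = [0.02, -1.27, 0.64, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 codes:", q)
print(f"scale: {scale:.4f}, max round-trip error: {max_error:.6f}")
```

Each weight now fits in one byte instead of four, and the round-trip error is bounded by half a quantization step (`scale / 2`). Whether that error is acceptable depends on the model, which is why quantized models are normally re-validated before deployment.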

The budget signal is clear. In manufacturing, 36% of companies report significant budget increases. Retail shows 50% full deployment. Healthcare hits 90% implementation. Enterprise hiring and compensation tend to follow these investment areas. Developers building edge optimization and hybrid architecture skills now position themselves for the wave of enterprise deployments happening over the next 24 months.

Key Takeaways

- Edge AI adoption is mainstream: 97% of CIOs have Edge AI deployed or on their roadmap, and 30% are fully deployed in production environments

- Industry leaders show the pattern: Manufacturing 40% deployed (predictive maintenance), Retail 50% (automated checkout), Healthcare 90% (patient monitoring)—all reporting measurable ROI in 6-12 months for proven use cases

- The architectural shift is economics, not hype: Latency requirements (sub-10ms for autonomous vehicles), bandwidth costs (95% reduction), and privacy compliance (HIPAA, PCI-DSS) make cloud-only architectures unviable for real-time AI

- Hybrid cloud-edge is the actual pattern: 47% of enterprises use hybrid architectures—train in cloud, infer at edge, sync insights back—not edge replacing cloud

- Complexity is real but solvable: Edge orchestration challenges require investment in platforms (ZEDEDA, AWS IoT Greengrass) and operational expertise, explaining why complex projects need 18-24 months versus 6-12 for proven use cases

- Developer opportunity is substantial: The market is projected to grow from $24.91B in 2025 to $143B by 2034, and with 90% of enterprises increasing budgets, demand is rising for edge optimization, real-time ML, and hybrid architecture skills

Edge AI isn’t replacing cloud computing—it’s transforming where and how we deploy real-time intelligence. Enterprises betting billions on this architectural shift are proving ROI with measurable outcomes. The question for developers isn’t whether to learn edge AI, but how quickly to build skills for the deployment wave already underway.