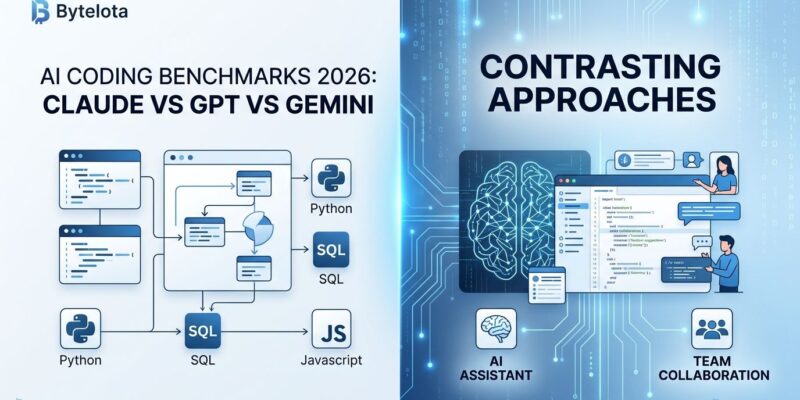

The AI coding tool wars are over, and nobody won. March 2026 benchmark results show Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro trading victories across different tasks, with top models landing within 1-2 points of each other on major benchmarks. Meanwhile, prices have crashed 40-80% year-over-year, and the smartest developers aren’t picking sides anymore. They’re running 2-3 models in a routing setup, letting the task dictate the tool. The question isn’t “which model is best?” It’s “which model is best for this job?”

Performance Convergence: No Clear Winner

The March 2026 benchmarks tell a complicated story. Claude Opus 4.6 leads SWE-bench Verified with 80.8% on real GitHub issues, just ahead of Gemini 3.1 Pro at 80.6%. Switch to Terminal-Bench 2.0 for agentic execution tasks, however, and GPT-5.3-Codex leads with 77.3%, followed by GPT-5.4 at 75.1%. Then Gemini 3.1 Pro takes the crown on ARC-AGI-2 abstract reasoning at 77.1%, more than doubling its predecessor’s score and leaving Claude (68.8%) and GPT-5.2 (52.9%) behind.

Here’s the full breakdown from independent LM Council benchmarks:

SWE-bench Verified (Real GitHub Issues):

- Claude Opus 4.6: 80.8%

- Gemini 3.1 Pro: 80.6%

- GPT-5: 74.9%

SWE-bench Pro (Harder, Less Gameable):

- GPT-5.4: 57.7%

- Gemini 3.1 Pro: 54.2%

- Claude Opus 4.6: ~45%

Terminal-Bench 2.0 (Agentic Execution):

- GPT-5.3-Codex: 77.3%

- GPT-5.4: 75.1%

- Claude Opus 4.6: 65.4%

The gap between the top two models on each list? Between 0.2 and 3.5 points. As LogRocket’s March 2026 analysis noted: “Determining which model is strongest at coding has become harder now that we’re in 2026, as results vary not just by model but also by agentic implementation. Benchmarks fragment by task type causing rank reversals.”
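To make the rank reversals concrete, here is a minimal Python sketch that finds the leader and the top-two gap for each benchmark listed above. The scores are copied from those lists. The names `SCORES` and `leader_and_gap` are illustrative, and the "~45%" SWE-bench Pro figure is entered as 45.0, which is an approximation.

```python
# Scores (percent) copied from the lists above.
SCORES = {
    "SWE-bench Verified": {
        "Claude Opus 4.6": 80.8,
        "Gemini 3.1 Pro": 80.6,
        "GPT-5": 74.9,
    },
    "SWE-bench Pro": {
        "GPT-5.4": 57.7,
        "Gemini 3.1 Pro": 54.2,
        "Claude Opus 4.6": 45.0,  # listed as "~45%"; approximate
    },
    "Terminal-Bench 2.0": {
        "GPT-5.3-Codex": 77.3,
        "GPT-5.4": 75.1,
        "Claude Opus 4.6": 65.4,
    },
}


def leader_and_gap(scores: dict) -> tuple:
    """Return (leading model, gap in points between first and second place)."""
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    (leader, top), (_, second) = ranked[0], ranked[1]
    return leader, round(top - second, 1)


if __name__ == "__main__":
    for benchmark, scores in SCORES.items():
        leader, gap = leader_and_gap(scores)
        print(f"{benchmark}: {leader} leads by {gap} points")
```

Each benchmark produces a different leader, which is the point: the ranking depends on which list you read.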

This matters because simple “X beats Y” comparisons are dead. Long-form codebase work favors Claude’s 1M context window. Terminal automation runs best on GPT-5.3-Codex. Abstract reasoning tasks lean Gemini. The tool needs to match the task.

The Price War: AI Coding Gets Radically Cheaper

While models converged on performance, they diverged on price, and Gemini made the most aggressive play. At $2 input / $12 output per million tokens, Gemini 3.1 Pro undercuts GPT-5.2 ($1.75/$14) on output price, though not on input, and undercuts Claude Opus 4.6 (premium tier, highest cost). For ultra-budget needs, Grok 4.1 comes in at $0.20/$0.50.
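Per-million-token prices only become comparable once you plug in a workload. The sketch below computes the cost of a single request from the prices quoted above. The model keys, the `request_cost` helper, and the 20,000-in / 4,000-out workload are assumptions chosen for illustration.

```python
# USD per million tokens as (input, output), as quoted in this article.
PRICES = {
    "gemini-3.1-pro": (2.00, 12.00),
    "gpt-5.2": (1.75, 14.00),
    "grok-4.1": (0.20, 0.50),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request for the given token counts."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 20,000 input tokens and 4,000 output tokens.
    for model in PRICES:
        print(f"{model}: ${request_cost(model, 20_000, 4_000):.4f}")
```

With these prices, Gemini 3.1 Pro comes out cheaper than GPT-5.2 only when a request has fewer than 8 input tokens per output token. At exactly 8:1 the two costs are equal, and input-heavy requests favor GPT-5.2.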

The price-performance winner? Gemini 3.1 Pro delivers 77.1% ARC-AGI-2 and 80.6% SWE-bench Verified at a fraction of premium model costs. It matches Claude on key benchmarks while costing significantly less.

Prices across the board dropped 40-80% year-over-year while performance improved, and the developer conversation has shifted with them. It’s no longer “which tool is smartest?” Now it’s “which tool won’t torch my credits?”

Knowing when premium models are worth 3-10x the price is critical for budget-conscious teams. For routine documentation and simple refactors, cheap models work fine. For complex architecture and whole-codebase reasoning, premium models earn their keep. The trick is matching cost to complexity.

Multi-Model Routing: The Strategy Enterprises Are Using

The smartest developers in March 2026 aren’t picking one model. They’re running two or three in a routing setup: a cheap default for routine work, a strong mid-tier for most serious tasks, and a premium option for the hard stuff.

IDC’s analysis backs this up:

- 37% of enterprises use 5+ models in production (2026)

- IDC predicts 70% of top AI-driven enterprises will use routing by 2028

- Routing cuts costs 60-85% while maintaining or improving performance

- One case study showed 28% faster resolution times and 19% better customer satisfaction
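To see how a saving of that order could arise arithmetically, here is a rough sketch that compares a routed workload against sending everything to a premium model. Every number in it is an assumption: the relative costs (cheap = 1x, mid = 3x, premium = 10x) are picked from the 3-10x range mentioned earlier, and the 70/20/10 traffic split is hypothetical. It illustrates the calculation and does not reproduce IDC's figures.

```python
def blended_cost(shares: dict, relative_cost: dict) -> float:
    """Average per-request cost, in multiples of the cheap tier's cost."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(shares[tier] * relative_cost[tier] for tier in shares)


# Assumed cost multipliers relative to the cheap tier (hypothetical).
RELATIVE_COST = {"cheap": 1.0, "mid": 3.0, "premium": 10.0}

# Assumed share of requests sent to each tier (hypothetical).
SHARES = {"cheap": 0.7, "mid": 0.2, "premium": 0.1}

routed = blended_cost(SHARES, RELATIVE_COST)
all_premium = RELATIVE_COST["premium"]
savings = 1 - routed / all_premium

# prints: blended cost: 2.30x, savings vs all-premium: 77%
print(f"blended cost: {routed:.2f}x, savings vs all-premium: {savings:.0%}")
```

The result is sensitive to the split. If more traffic genuinely needs the premium tier, the saving shrinks accordingly.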

How does routing work in practice?

- Cheap model (Gemini, Grok) handles documentation, simple refactors, boilerplate code

- Mid-tier (GPT-5, Claude Sonnet) tackles feature development, debugging, code reviews

- Premium (Claude Opus 4.6) solves complex architecture, large-scale refactors, intricate debugging

Implementing a router takes 50-100 lines of code in most frameworks. Rule-based approaches use simple switch/case logic. More sophisticated setups use an LLM classifier to route each request.
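A rule-based router along these lines might look like the following Python sketch. It uses the three tiers described above, but the task labels, keyword rules, and model identifiers are placeholders invented for illustration, not real API model names. The `classify` parameter is where an LLM-based classifier could be swapped in for the keyword rules.

```python
from typing import Callable

# Tier -> example model. Identifiers are placeholders, not real API names.
TIER_MODELS = {
    "cheap": "grok-4.1",
    "mid": "gpt-5",
    "premium": "claude-opus-4.6",
}

# Task type -> tier, following the three tiers above (labels are hypothetical).
TASK_TIERS = {
    "documentation": "cheap",
    "simple_refactor": "cheap",
    "boilerplate": "cheap",
    "feature_development": "mid",
    "debugging": "mid",
    "code_review": "mid",
    "architecture": "premium",
    "large_refactor": "premium",
}

# Hypothetical keyword rules, checked in order; the first match wins.
KEYWORD_RULES = [
    ("architecture", "architecture"),
    ("readme", "documentation"),
    ("docstring", "documentation"),
    ("boilerplate", "boilerplate"),
    ("review", "code_review"),
    ("bug", "debugging"),
]


def classify_by_keyword(prompt: str) -> str:
    """Rule-based classifier: return the task type of the first keyword found."""
    lowered = prompt.lower()
    for keyword, task_type in KEYWORD_RULES:
        if keyword in lowered:
            return task_type
    return "unknown"


def route(prompt: str, classify: Callable[[str], str] = classify_by_keyword) -> str:
    """Pick a model for the prompt; unknown task types fall back to the mid tier."""
    tier = TASK_TIERS.get(classify(prompt), "mid")
    return TIER_MODELS[tier]


if __name__ == "__main__":
    print(route("Write a README for this package"))
    print(route("Propose an architecture for the billing service"))
```

Falling back to the mid tier for unrecognized tasks is a design choice here. A stricter setup might default to the cheap tier and escalate only on failure.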

Companies are treating AI model selection like air traffic control, dynamically routing each request to the optimal destination. Single-model loyalty is obsolete. The routing revolution is here.

Open-Weight Models Are Finally Competitive

Free and cheap alternatives used to mean compromising on quality. Not anymore. February 2026 brought a wave of open-weight models that can hang with proprietary titans.

Qwen3-Coder-Next (80B parameters, 3B active) matches Claude Sonnet 4.5 on SWE-bench Pro. MiniMax M2.5 hit 80.2% SWE-bench Verified at just $0.30/$1.20 per million tokens. GLM-5 (Reasoning) excels at coding and reasoning with a Quality Index of 49.64.

The performance gap has closed dramatically. Open-weight models now hit 90% on LiveCodeBench and 97% on AIME 2025, rivaling the best proprietary models. As multiple analyses concluded: open-weight models are “finally good enough to matter.”

This matters for teams that want self-hosting for privacy or security, for startups on tight budgets, and for anyone avoiding vendor lock-in. All of them get competitive performance without the proprietary price tag or restrictions.

Task-Specific Recommendations: Which Model for What

Forget one-size-fits-all advice. Based on March 2026 benchmarks from SitePoint and other independent analyses, here’s what the data actually suggests:

For long-form, large codebases:

Use Claude Opus 4.6. Its 1M context window and 128K output enable whole-repo understanding and complex multi-file changes. Leads SWE-bench Verified at 80.8%.

For terminal execution and agentic tasks:

Use GPT-5.3-Codex. Tops Terminal-Bench 2.0 at 77.3%. Best for CLI automation and DevOps scripts.

For budget-conscious teams:

Use Gemini 3.1 Pro. At $2/$12, it delivers 80.6% SWE-bench Verified and 77.1% ARC-AGI-2. Best price-to-performance ratio.

For abstract reasoning and problem-solving:

Use Gemini 3.1 Pro. Its 77.1% ARC-AGI-2 score dominates logic puzzles and algorithm design.

For balanced, general-purpose work:

Use GPT-5.4. Strong across multiple benchmarks. Good for varied workloads when you don’t want to switch tools constantly.

The Real Advantage Isn’t Speed

Here’s what the benchmarks don’t capture: “The real advantage of these tools is not speed alone, but reduced mental load. When AI handles context, repetition, and scaffolding, developers can focus on design, correctness, and long-term thinking.”

That’s the game-changer. Not autocomplete, but cognitive offloading. Not faster coding, but better thinking. With 84% of developers using AI tools and 41% of all code now AI-generated or assisted, the question isn’t whether to adopt these tools. It’s how to use them strategically.

Match tools to tasks. Route intelligently to cut costs. Consider open-weight alternatives. Don’t fall for tribal “my model beats yours” arguments when the data shows task-dependent performance.

The March 2026 benchmarks killed the simple answer. But they gave us something better: a framework for making smart choices based on what we’re actually building.