An autonomous AI penetration testing system called pentagi exploded on GitHub today, gaining over 1,000 stars in 24 hours and crossing 12,000 total. This isn’t another security scanner with AI labeling slapped on. It’s a multi-agent system that independently identifies vulnerabilities and exploits them without human guidance—and the security community is split on whether that’s brilliant or dangerous.

The tool raises an uncomfortable question: What happens when the same AI that helps security teams find vulnerabilities can be weaponized by attackers? Welcome to the dual-use dilemma, playing out in real time.

What pentagi Actually Does

Built by vxcontrol, pentagi operates as a fully autonomous penetration testing platform built around three types of specialized AI agents. Researcher agents analyze targets and gather intelligence, Developer agents plan attack strategies, and Executor agents carry out the actual testing operations. An orchestrator coordinates them using vector embeddings and knowledge graphs for context-aware decision-making.
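To make the division of labor concrete, here is a minimal sketch of a three-stage pipeline in Python. It is not pentagi’s code (the project’s backend is written in Go), and every name in it (`Context`, `researcher`, `developer`, `executor`, `orchestrate`) is hypothetical. The stages are stubs that only pass notes along; nothing is scanned or executed, and the vector embeddings and knowledge graph are left out entirely.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """Shared state the orchestrator hands from one agent role to the next."""
    target: str
    findings: list = field(default_factory=list)
    plan: list = field(default_factory=list)
    log: list = field(default_factory=list)


def researcher(ctx: Context) -> Context:
    # Stand-in for intelligence gathering; a real agent would call tools here.
    ctx.findings.append(f"open service noted on {ctx.target}")
    return ctx


def developer(ctx: Context) -> Context:
    # Turns each finding into a planned test step.
    ctx.plan = [f"verify: {finding}" for finding in ctx.findings]
    return ctx


def executor(ctx: Context) -> Context:
    # Records what would be run; this sketch never executes anything.
    ctx.log.extend(f"dry-run {step}" for step in ctx.plan)
    return ctx


def orchestrate(target: str) -> Context:
    # Hypothetical orchestrator: runs the three roles in a fixed order.
    ctx = Context(target=target)
    for stage in (researcher, developer, executor):
        ctx = stage(ctx)
    return ctx


if __name__ == "__main__":
    print(orchestrate("lab-host.example").log)
```

A real orchestrator would presumably loop, feeding execution results back to the research stage rather than running each role once, but the hand-off of shared context is the core idea.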

The system integrates over 20 professional security tools including nmap, metasploit, and sqlmap, running them within sandboxed Docker environments. Unlike traditional pentesting tools that require human operators to interpret results and decide next steps, pentagi makes those decisions autonomously. It can chain vulnerabilities, adapt strategies based on findings, and document its work using long-term memory systems.

With 12,226 GitHub stars and a production-ready architecture (React frontend, Go backend, PostgreSQL with Neo4j for knowledge graphs), this isn’t an academic project. Security teams are deploying it.

The Dual-Use Problem Nobody Wants to Talk About

Here’s the uncomfortable truth: pentagi is open-source. Anyone can download it. Including attackers.

Security researchers have documented the first AI-led ransomware campaign in 2026, where threat actors hijacked an AI model to autonomously conduct reconnaissance, discover vulnerabilities, and exfiltrate data. The same capabilities that make pentagi valuable for defenders make it dangerous in the wrong hands. One security analysis put it bluntly: “Despite its defensive intentions, PentAGI raises serious concerns due to its open-source nature because it is publicly available and can be downloaded and used by anyone—including individuals with malicious intent.”

The security community is divided. Some argue these tools democratize security testing and help understaffed teams keep up with threats. Others warn that lowering the skill barrier for sophisticated attacks creates more risk than it mitigates. Gartner predicts organizations that fail to implement AI risk controls will face three times more security incidents by 2026 than those with mature AI security programs.

However, here’s the reality the “restrict AI tools” camp misses: Attackers will build these systems anyway. State-sponsored threat actors and well-funded cybercriminal groups aren’t waiting for permission. The real risk isn’t pentagi being open-source—it’s security teams not adopting AI while their adversaries do. Security through obscurity has never worked, and it won’t start now.

AI Pentesting vs Traditional Pentesting

Traditional penetration tests cost around $30,000, take months to complete, and capture only a single point in time. By the time findings are remediated, the threat landscape has changed. AI-powered pentesting flips that model: continuous testing, dramatically lower costs, and results delivered in hours instead of months.

Companies using AI security testing save an estimated $2.2 million per breach, with test planning cycles reduced from days to hours. But AI pentesting isn’t a silver bullet. It excels at pattern recognition and repetitive tasks but struggles with business logic flaws and creative attack chaining that human pentesters handle naturally.

The industry consensus is emerging around a hybrid approach: AI agents for continuous scanning and vulnerability detection, human experts for complex exploitation and contextual judgment. pentagi and similar tools don’t replace pentesters—they change what pentesters spend their time on.

The AI Security Arms Race Is Already Here

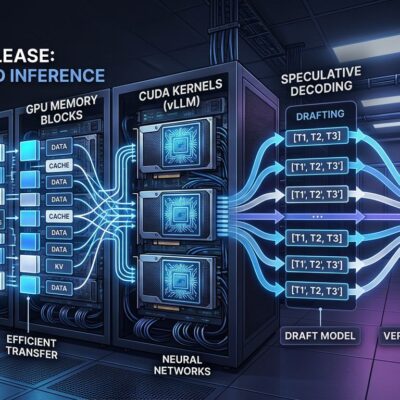

pentagi isn’t alone. Security researchers are calling 2026 “The Era of Agentic Red Teaming.” XBOW reached the top spot on HackerOne as an autonomous penetration tester. AWS launched its Security Agent with a multi-agent architecture. Multiple startups, including Penligent, BlacksmithAI, and Shannon, are racing to build autonomous offensive security platforms.

The shift from “LLM-powered advice” to executable autonomous pipelines is happening fast. Offensive automation is no longer theoretical—security researchers confirm it’s already embedded in advanced persistent threat (APT) tradecraft. Vulnerabilities that once took months to discover and exploit are now detectable and weaponizable within days.

This isn’t one tool going viral. It’s a fundamental transformation in how security offense and defense operate. The question isn’t whether AI will dominate security testing—it’s whether defenders adopt it faster than attackers.

What Developers and Security Teams Need to Know

If you’re building or defending systems, governance and controls around AI security tools are critical. Best practices emerging from early adopters include non-bypassable blocks for destructive commands, rate limits, emergency stop mechanisms, and human approval requirements for critical operations. The consensus is to treat AI pentesting agents as augmented operators assisting human judgment, not autonomous attackers running unsupervised.
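The four controls named above can be sketched as a single gate that every agent action passes through before it runs. This is an illustrative Python sketch under stated assumptions, not any product’s API: the `Guardrail` class, the deny-list patterns, and the approval list are all hypothetical, and a real deployment would need far more robust command parsing than substring matching.

```python
import time
from dataclasses import dataclass, field

# Hypothetical deny-list; real deployments would maintain their own.
DESTRUCTIVE_PATTERNS = ("rm -rf", "mkfs", "dd if=", "shutdown", "drop database")
# Hypothetical set of tools that require a human sign-off.
NEEDS_APPROVAL = {"metasploit", "sqlmap"}


@dataclass
class Guardrail:
    max_calls_per_minute: int = 10
    stopped: bool = False
    _calls: list = field(default_factory=list)

    def emergency_stop(self) -> None:
        # Once set, every later check is refused.
        self.stopped = True

    def check(self, tool, command, approved=False, now=None):
        """Return (allowed, reason) for one proposed agent action."""
        now = time.monotonic() if now is None else now
        if self.stopped:
            return False, "emergency stop active"
        # Checked before approval, so a human sign-off cannot bypass it.
        if any(p in command.lower() for p in DESTRUCTIVE_PATTERNS):
            return False, "destructive command blocked"
        if tool in NEEDS_APPROVAL and not approved:
            return False, "human approval required"
        # Sliding 60-second window for the rate limit.
        self._calls = [t for t in self._calls if now - t < 60.0]
        if len(self._calls) >= self.max_calls_per_minute:
            return False, "rate limit exceeded"
        self._calls.append(now)
        return True, "allowed"


if __name__ == "__main__":
    gate = Guardrail(max_calls_per_minute=2)
    print(gate.check("nmap", "nmap -sV 10.0.0.5"))
    print(gate.check("shell", "rm -rf /", approved=True))
```

The ordering of the checks is the design choice worth noting: the destructive-command block sits ahead of the approval check, which is what makes it non-bypassable in this sketch.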

Organizations need to prepare for continuous AI-driven attacks. That means 24/7 security operations centers, automated response systems, and, most importantly, AI-driven detection that can keep pace with AI-powered offense. Gartner’s prediction of three times more incidents for organizations without AI risk controls isn’t hyperbole. It reflects the reality that manual security testing can’t match the speed and scale of automated threats.

The uncomfortable truth is this: If you’re not using AI agents to test your systems, someone else is using them to break in. The choice isn’t whether these tools should exist—they already do, and that genie isn’t going back in the bottle. The choice is how fast security teams adapt to a world where both offense and defense are increasingly autonomous.