Google DeepMind released Gemini 3.1 Pro on February 19, 2026. The model scores 77.1% on the ARC-AGI-2 reasoning benchmark, more than doubling its predecessor’s 31.1%, while keeping the same pricing of $2 per million input tokens and $12 per million output tokens. It beat Claude Opus 4.6 (68.8%) and GPT-5.2 (52.9%) on a test designed to measure genuine reasoning rather than memorization. Yet despite these results, and despite being 7x cheaper than Claude Opus 4.6, Gemini 3.1 Pro is getting far less attention than its competitors.

ARC-AGI-2: Testing Real Reasoning, Not Memorization

Gemini 3.1 Pro’s 77.1% score is more than just another benchmark number. ARC-AGI-2, created by François Chollet, tests fluid intelligence: the ability to solve entirely new logic patterns from sparse examples. The benchmark uses grid-based visual puzzles that are trivial for humans but have stumped AI systems for years. Each task provides only a few example input-output pairs, forcing models to induce rules and apply them flexibly rather than rely on memorized patterns.
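To make the "induce a rule from a few examples" idea concrete, here is a toy sketch in Python. It is not the ARC-AGI-2 format or evaluation harness: the grids, the four candidate rules, and every function name are made up for illustration, and real tasks need a far richer space of hypotheses.

```python
from typing import Callable, Dict, List, Optional, Tuple

Grid = List[List[int]]


def identity(grid: Grid) -> Grid:
    """Return a copy of the grid unchanged."""
    return [list(row) for row in grid]


def flip_horizontal(grid: Grid) -> Grid:
    """Mirror each row left-to-right."""
    return [list(reversed(row)) for row in grid]


def flip_vertical(grid: Grid) -> Grid:
    """Reverse the order of the rows."""
    return [list(row) for row in reversed(grid)]


def transpose(grid: Grid) -> Grid:
    """Swap rows and columns."""
    return [list(col) for col in zip(*grid)]


# Hypothetical, deliberately tiny hypothesis space (an assumption of this sketch).
CANDIDATE_RULES: Dict[str, Callable[[Grid], Grid]] = {
    "identity": identity,
    "flip_horizontal": flip_horizontal,
    "flip_vertical": flip_vertical,
    "transpose": transpose,
}


def induce_rule(examples: List[Tuple[Grid, Grid]]) -> Optional[str]:
    """Return the first candidate rule consistent with every example pair."""
    for name, rule in CANDIDATE_RULES.items():
        if all(rule(inp) == out for inp, out in examples):
            return name
    return None


# Made-up demonstration pairs (not taken from ARC-AGI-2).
examples = [
    ([[1, 0], [0, 0]], [[0, 1], [0, 0]]),
    ([[2, 3], [0, 0]], [[3, 2], [0, 0]]),
    ([[0, 0], [4, 0]], [[0, 0], [0, 4]]),
]

rule_name = induce_rule(examples)
test_input = [[5, 0, 0], [0, 6, 0]]
prediction = CANDIDATE_RULES[rule_name](test_input) if rule_name else None
print(rule_name, prediction)  # flip_horizontal [[0, 0, 5], [0, 6, 0]]
```

The solver only succeeds because the correct rule happens to be in its hand-written list. The difficulty of the real benchmark is that each task calls for a rule the solver has not seen before.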

This “Easy for Humans, Hard for AI” design makes ARC-AGI-2 one of the few benchmarks that are hard to game through scale or training-data memorization. It measures whether models can genuinely reason through novel problems, a core component of intelligence that has eluded most AI systems. Gemini 3.1 Pro’s 77.1% represents a fundamental leap in reasoning capability, not just an incremental improvement.

The Competitive Picture: Beating Claude and GPT

Gemini 3.1 Pro beat Claude Opus 4.6 by 8.3 percentage points and GPT-5.2 by 24.2 points on ARC-AGI-2. This challenges the prevailing narrative that Claude owns AI reasoning. While human evaluators still prefer Claude’s outputs in expert-level tasks (an Elo rating of 1633 for Claude vs 1317 for Gemini), Gemini now leads on the benchmark specifically designed to test reasoning rather than writing quality.
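The margins quoted above follow directly from the reported scores. A quick check in Python, using only the ARC-AGI-2 figures given in this article (the dictionary and function names are illustrative):

```python
# ARC-AGI-2 scores as reported in this article, in percent.
ARC_AGI_2_SCORES = {
    "Gemini 3.1 Pro": 77.1,
    "Claude Opus 4.6": 68.8,
    "GPT-5.2": 52.9,
}


def margin_points(leader: str, other: str) -> float:
    """Difference between two scores in percentage points, rounded to 0.1."""
    return round(ARC_AGI_2_SCORES[leader] - ARC_AGI_2_SCORES[other], 1)


for rival in ("Claude Opus 4.6", "GPT-5.2"):
    print(f"Gemini 3.1 Pro vs {rival}: +{margin_points('Gemini 3.1 Pro', rival)} points")
```

These are differences in percentage points, not relative improvements.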

The community consensus has become “Gemini wins metrics, Claude wins mentality.” Benchmark charts favor Gemini, but developers report Claude “feels smarter” in actual use. This gap reveals that reasoning scores and subjective output quality measure different dimensions of capability. Gemini also scored 94.3% on GPQA Diamond, the highest on record, and 80.6% on SWE-bench Verified, which tests real-world software issue resolution.

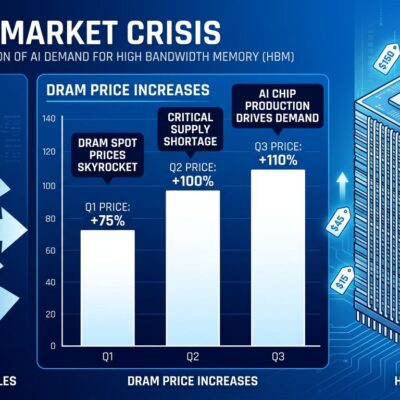

Performance Doubled, Price Stayed Flat

Gemini 3.1 Pro costs $2 per million input tokens and $12 per million output tokens. That is exactly the same as Gemini 3 Pro, even though ARC-AGI-2 performance more than doubled from 31.1% to 77.1%. It also makes the model 7x cheaper than Claude Opus 4.6 on a per-request basis. While Claude may win on subjective output quality, Gemini’s performance-per-dollar advantage makes frontier reasoning accessible for experimentation and high-volume production use.
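As a rough illustration of what these rates mean per request, the sketch below applies the quoted prices to a hypothetical request of 50,000 input tokens and 2,000 output tokens. The token counts and function name are assumptions chosen for the example, and the calculation ignores any pricing tiers or discounts the article does not mention.

```python
# Prices quoted in this article, in US dollars per million tokens.
INPUT_PRICE_PER_M = 2.00
OUTPUT_PRICE_PER_M = 12.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost in dollars of one request at the flat per-token rates above."""
    input_cost = input_tokens / 1_000_000 * INPUT_PRICE_PER_M
    output_cost = output_tokens / 1_000_000 * OUTPUT_PRICE_PER_M
    return input_cost + output_cost


# Hypothetical request size, chosen only for illustration.
cost = request_cost(input_tokens=50_000, output_tokens=2_000)
print(f"Cost per request: ${cost:.3f}")  # $0.124

# "More than doubling": ratio of the new ARC-AGI-2 score to the old one.
improvement = 77.1 / 31.1
print(f"ARC-AGI-2 improvement: {improvement:.2f}x")  # 2.48x
```

At these rates, output tokens cost six times as much as input tokens, so long generations drive the bill more than long prompts do.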

Context caching drops input costs further, to $0.50 per million tokens for repeated prompts. This is useful for applications that resend the same large context on every request, such as a codebase or a product manual. Free testing in Google AI Studio removes barriers to evaluation. The pricing strategy puts pressure on Claude and OpenAI to justify their premium tiers or match Google’s value proposition.
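To see how much caching can matter, the sketch below compares input costs with and without the cached rate for a hypothetical workload: 1,000 requests that each resend a 100,000-token shared prefix plus 1,000 new tokens. The workload and function name are assumptions. The calculation also ignores any cache storage or cache-creation charges, which this article does not cover.

```python
STANDARD_INPUT_PRICE_PER_M = 2.00  # dollars per million input tokens (from the article)
CACHED_INPUT_PRICE_PER_M = 0.50    # dollars per million cached input tokens (from the article)


def input_cost(requests: int, shared_tokens: int, fresh_tokens: int, cached: bool) -> float:
    """Total input cost in dollars across all requests.

    shared_tokens: prefix repeated on every request (billed at the cached rate if cached).
    fresh_tokens: tokens that differ per request (always billed at the standard rate).
    """
    shared_price = CACHED_INPUT_PRICE_PER_M if cached else STANDARD_INPUT_PRICE_PER_M
    shared_cost = requests * shared_tokens / 1_000_000 * shared_price
    fresh_cost = requests * fresh_tokens / 1_000_000 * STANDARD_INPUT_PRICE_PER_M
    return shared_cost + fresh_cost


# Hypothetical workload, chosen only for illustration.
without_cache = input_cost(1_000, 100_000, 1_000, cached=False)
with_cache = input_cost(1_000, 100_000, 1_000, cached=True)
print(f"Without caching: ${without_cache:.2f}")  # $202.00
print(f"With caching:    ${with_cache:.2f}")     # $52.00
```

The larger the shared prefix is relative to the per-request tokens, the closer the saving gets to the full 75% difference between the two rates.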

Real-World Capabilities Beyond Benchmarks

Gemini 3.1 Pro ships with practical improvements that address developer pain points. The 1-million-token context window (default, not beta) dwarfs Claude’s 200K default and enables analysis of massive codebases or long videos without chunking. Output capacity increased to 65K tokens from Gemini 3 Pro’s ~21K limit, resolving truncation issues during complex code generation.
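A back-of-the-envelope sketch shows why window size matters for chunking. It assumes a hypothetical 600,000-token codebase and a hypothetical 8,000 tokens reserved for instructions and the response. The function name is illustrative, and the window sizes are the nominal figures quoted above.

```python
import math


def chunks_needed(total_tokens: int, context_window: int, reserved_tokens: int = 0) -> int:
    """Number of separate prompts needed to cover total_tokens of input.

    reserved_tokens is the part of the window kept free for instructions
    and the response (a hypothetical allowance in this sketch).
    """
    budget = context_window - reserved_tokens
    if budget <= 0:
        raise ValueError("reserved_tokens must be smaller than context_window")
    return max(1, math.ceil(total_tokens / budget))


CODEBASE_TOKENS = 600_000  # hypothetical input size
RESERVED = 8_000           # hypothetical allowance per prompt

print(chunks_needed(CODEBASE_TOKENS, 1_000_000, RESERVED))  # 1
print(chunks_needed(CODEBASE_TOKENS, 200_000, RESERVED))    # 4
```

With the smaller window the input has to be split across four prompts, and any reasoning that spans chunk boundaries must be stitched together by the application.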

A new three-tier thinking system (Light/Medium/Strong) lets developers trade latency for reasoning depth: fast responses for simple queries, deeper compute for complex problems. Multimodal processing handles up to 900 images, 8.4 hours of audio, or 1 hour of video per prompt, enabling use cases like autonomous form filling, code refactoring, and design generation that were impractical with earlier models.

The Quiet Winner

Google used a “.1” version increment for the first time instead of the typical “.5” mid-cycle update, signaling a focused intelligence upgrade rather than broad feature expansion. Released one month ago, Gemini 3.1 Pro has generated far less buzz than Claude Opus 4.6 (released February 5), despite beating it on reasoning benchmarks and undercutting it on price.

Why the attention gap? The model’s preview status may be limiting awareness. Claude’s superior human preference scores (Elo ratings) may matter more than raw reasoning metrics for many expert tasks. Or perhaps Google’s marketing simply can’t match Anthropic’s ability to generate excitement around technical releases.

For developers choosing AI models, Gemini 3.1 Pro now offers a compelling alternative: frontier-class reasoning at a fraction of Claude’s cost, with practical advantages like massive context windows and flexible compute options. Whether that’s enough to overcome Claude’s subjective quality edge depends on your use case, but ignoring a model that scores 77.1% on genuine reasoning tests while costing 7x less seems unwise.