On March 14, independent developer Ferran Duarri released GreenBoost, an open-source Linux kernel module that extends Nvidia GPU VRAM by using system RAM and NVMe storage as additional memory tiers. Trending on Hacker News with 145 points today, GreenBoost addresses a critical pain point: running large AI models that exceed your GPU’s memory without spending $3,000-5,000 on an RTX 5090 or $25,000+ on an H100.

Limited VRAM is a leading bottleneck for AI developers. Consumer GPUs top out at 24-32GB, but many modern LLMs need 40-80GB or more. GreenBoost lets you run these models on existing hardware by leaning on cheaper system RAM (about $100 for 64GB) instead of an expensive GPU upgrade.

How GreenBoost Extends GPU VRAM: Three-Tier Memory System

GreenBoost extends GPU memory across three tiers. Tier 1 is your native VRAM at 300+ GB/s bandwidth. Tier 2 routes overflow to system RAM via PCIe 4.0, delivering roughly 32 GB/s. Tier 3 taps NVMe storage at approximately 1.8 GB/s. Notably, the system intercepts CUDA memory calls transparently—allocations under 256MB stay in native VRAM, while larger allocations like model weights and KV caches redirect to system RAM.
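The routing rule described above can be sketched as a small placement policy. This is an illustrative model only, not GreenBoost's actual code: the 256MB threshold comes from the article, while the `Tier` class, the `place` function, the capacities, and the overflow order (RAM first, then NVMe) are assumptions made for the example.

```python
from dataclasses import dataclass

MIB = 1024 ** 2
GIB = 1024 ** 3

# Threshold quoted in the article; everything else below is hypothetical.
SMALL_ALLOC_LIMIT = 256 * MIB


@dataclass
class Tier:
    name: str
    capacity: int  # bytes
    used: int = 0

    def has_room(self, size: int) -> bool:
        return self.used + size <= self.capacity


def place(size: int, vram: Tier, ram: Tier, nvme: Tier) -> str:
    """Pick a tier for one allocation and record the usage.

    Small allocations prefer VRAM; large ones (weights, KV cache) go to
    system RAM. Falling through to NVMe when RAM is full is an assumed order.
    """
    if size < SMALL_ALLOC_LIMIT and vram.has_room(size):
        chosen = vram
    elif ram.has_room(size):
        chosen = ram
    elif nvme.has_room(size):
        chosen = nvme
    else:
        raise MemoryError(f"no tier can hold {size} bytes")
    chosen.used += size
    return chosen.name


vram = Tier("vram", 12 * GIB)
ram = Tier("ram", 64 * GIB)
nvme = Tier("nvme", 256 * GIB)
print(place(64 * MIB, vram, ram, nvme))  # vram
print(place(2 * GIB, vram, ram, nvme))   # ram
```

The point of the split is that small, frequently touched buffers stay on the fast tier, while the bulk data that would not fit anyway pays the PCIe cost.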

Duarri ran a 31.8GB model (glm-4.7-flash:q8_0) on a GeForce RTX 5070 with just 12GB of VRAM, which serves as the project’s real-world proof of concept. Under the hood, GreenBoost uses DMA-BUF file descriptors to make system RAM appear as CUDA-accessible device memory. Applications need no code changes: an LD_PRELOAD shim intercepts their CUDA calls. You can monitor usage through the sysfs interface at /sys/class/greenboost/greenboost/pool_info.
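A minimal sketch of how a script might read the monitoring file and prepare an LD_PRELOAD environment for a child process. The sysfs path is the one quoted above; the format of `pool_info` is not documented here, so the function returns the raw text unparsed. The helper names and the shim path in the comment are hypothetical placeholders, not GreenBoost's real file names.

```python
import os
from pathlib import Path

# Path quoted in the article.
POOL_INFO = Path("/sys/class/greenboost/greenboost/pool_info")


def read_pool_info(path=POOL_INFO):
    """Return the raw contents of pool_info, or None if it cannot be read
    (for example when the kernel module is not loaded)."""
    try:
        return Path(path).read_text()
    except OSError:
        return None


def preload_env(shim_path, base_env=None):
    """Return a copy of the environment with shim_path first in LD_PRELOAD.

    shim_path is whatever shared library the project ships; its name is
    not specified here, so the caller must supply it.
    """
    env = dict(os.environ if base_env is None else base_env)
    existing = env.get("LD_PRELOAD")
    env["LD_PRELOAD"] = f"{shim_path}:{existing}" if existing else str(shim_path)
    return env


if __name__ == "__main__":
    info = read_pool_info()
    print(info if info is not None else "pool_info not found (module not loaded?)")
    # Hypothetical usage with a placeholder path:
    # subprocess.run(["my-inference-cmd"], env=preload_env("/path/to/shim.so"))
```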

This is the first open-source solution providing CUDA-coherent access to system RAM. Standard layer offloading lacks CUDA coherence, causing performance degradation. Furthermore, Nvidia’s Unified Memory exists but isn’t optimized for LLM workloads. GreenBoost fills a gap vendors haven’t addressed.

The Performance Trade-Off: 10-30x Slower Than Native VRAM

Here’s the hard truth: PCIe 4.0 bandwidth at roughly 32 GB/s is 10-30 times slower than native GPU VRAM running at 300+ GB/s to over 1 TB/s. Hacker News users report throughput drops to 1-5 tokens per second when accessing system RAM, compared to 50+ tokens per second on native VRAM.
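A back-of-envelope estimate shows why the numbers land where they do. The sketch below assumes a memory-bound decoder that reads every weight byte once per generated token, fills VRAM first, and streams the rest over PCIe. That is a deliberate simplification: it ignores compute time, caching, VRAM reserved for other buffers, and sparse architectures that touch only part of the weights per token. The function name and the 300 GB/s figure (the article's lower bound for VRAM) are choices made for illustration.

```python
def decode_tokens_per_second(model_gb, vram_gb, vram_gbps, pcie_gbps):
    """Rough upper bound on decode speed when weights are split across
    VRAM and system RAM and each byte is read once per token."""
    in_vram = min(model_gb, vram_gb)
    spilled = model_gb - in_vram
    seconds_per_token = in_vram / vram_gbps + spilled / pcie_gbps
    return 1.0 / seconds_per_token


# The article's example: 31.8GB model, 12GB card, 32 GB/s over PCIe 4.0.
split = decode_tokens_per_second(31.8, 12, vram_gbps=300, pcie_gbps=32)
# Same card with a model that fits entirely in VRAM.
native = decode_tokens_per_second(12, 12, vram_gbps=300, pcie_gbps=32)

print(f"split across tiers: {split:.1f} tokens/s")  # 1.5 tokens/s
print(f"all in VRAM:        {native:.1f} tokens/s")  # 25.0 tokens/s
```

Under these assumptions nearly all of the per-token time is spent moving the 19.8GB that did not fit in VRAM, which is consistent with the 1-5 tokens per second that users report.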

That performance hit kills real-time inference. Chatbots need sub-second response times. Production API endpoints require high throughput. Interactive applications demand low latency. GreenBoost can’t deliver any of those. However, the HN community nailed the use case: “1-5 tokens per second makes this impractical compared to cloud services. But for overnight tasks where speed doesn’t matter, it’s a clever workaround.”

Batch processing, overnight fine-tuning, and experimentation don’t depend on tokens per second. For those workloads, GreenBoost democratizes access to larger models without the GPU upgrade tax.

Use Cases: Where It Works and Where It Fails

GreenBoost excels at overnight batch workloads like data labeling, cleansing, and fine-tuning runs that take hours anyway. Development and experimentation benefit too—avoid cloud costs while testing models that exceed local VRAM. The community recommends combining quantization with KV cache offloading: keep model weights in VRAM, offload KV cache to system RAM.

Where it fails: chatbots and conversational AI requiring sub-second response times, production API endpoints where throughput matters, real-time inference serving, and anything graphics-related where latency destroys frame rates. Consequently, if you’re trying to build a production chatbot with GreenBoost, stop now. Use cloud GPUs or buy more VRAM.

The right tool for the right job. Overnight data processing? GreenBoost saves thousands in hardware costs. Production inference? Cloud GPUs win despite ongoing rental fees.

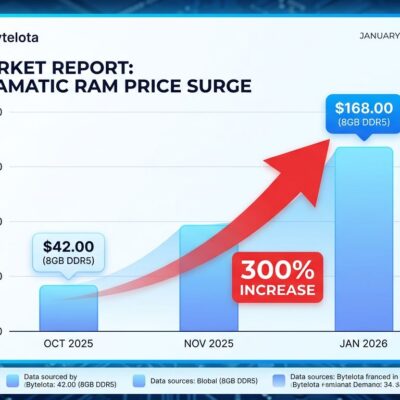

The Economics: $100 System RAM vs. $3,000+ GPU Upgrades

GPU VRAM is expensive. An RTX 5090 with 32GB sells for $3,000-5,000 at street prices against a $1,999 MSRP. The RTX 4090 with 24GB costs around $1,600. An H100 with 80GB costs $25,000+ to buy or $2-4 per hour to rent. In contrast, 64GB of DDR5 system RAM costs roughly $100-150, which makes it roughly 25 to 200 times cheaper per gigabyte at these prices.
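The per-gigabyte gap can be worked out directly from the prices quoted above. The sketch below uses only those figures (street prices for the RTX 5090, the $25,000 floor for the H100); the dictionary layout and function names are illustrative.

```python
# (low_usd, high_usd, capacity_gb), taken from the prices quoted in the article.
GPUS = {
    "RTX 4090 (24GB)": (1600, 1600, 24),
    "RTX 5090 (32GB)": (3000, 5000, 32),
    "H100 (80GB)": (25000, 25000, 80),
}
DDR5_64GB = (100, 150, 64)


def usd_per_gb(low, high, gb):
    """Return the (low, high) price per gigabyte."""
    return low / gb, high / gb


def ratio_range(gpu, ram=DDR5_64GB):
    """How many times more a GPU gigabyte costs than a DDR5 gigabyte.

    The smallest ratio pairs the cheapest GPU price with the most expensive
    RAM price; the largest ratio pairs the opposite ends.
    """
    gpu_low, gpu_high = usd_per_gb(*gpu)
    ram_low, ram_high = usd_per_gb(*ram)
    return gpu_low / ram_high, gpu_high / ram_low


for name, gpu in GPUS.items():
    low, high = ratio_range(gpu)
    print(f"{name}: {low:.0f}x to {high:.0f}x the DDR5 price per GB")
# RTX 4090 (24GB): 28x to 43x the DDR5 price per GB
# RTX 5090 (32GB): 40x to 100x the DDR5 price per GB
# H100 (80GB): 133x to 200x the DDR5 price per GB
```

The comparison covers capacity only. It says nothing about bandwidth, which is where the cheaper tier gives the savings back.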

For budget-conscious developers, indie makers, and researchers, GreenBoost democratizes access to large models. Instead of spending $5,000 on a GPU upgrade or $3,000 monthly on cloud rentals, you leverage existing system RAM for a fraction of the cost—accepting the performance trade-off. Furthermore, GPU buying guides confirm these price points across the market.

Cloud GPUs make sense for production workloads at scale. GreenBoost makes sense for experimentation, development, and batch processing where speed is secondary to cost. Know which problem you’re solving.

Linux-Only and Community Response

GreenBoost is Linux-only. Because it is built as a Linux kernel module, it excludes Windows and Mac users, and Windows support would require a complete driver rewrite. That limits the addressable audience, since many developers with consumer Nvidia GPUs run Windows.

Community sentiment from Hacker News is mixed: respect for the engineering achievement tempered by skepticism about practical utility. Developers appreciate the technical cleverness but question real-world application given performance constraints. Concerns include incomplete benchmarks comparing GreenBoost to llama.cpp layer offloading, memory management risks where the OS may trigger OOM killers, and the experimental status with only 34 commits as of March 19.

For Linux users in ML and AI research, this is a viable option for specific use cases. Additionally, the Phoronix technical breakdown provides implementation details. For production deployments or Windows-based workflows, alternatives like quantization, cloud GPUs, or layer offloading remain necessary.

Key Takeaways

- GreenBoost extends GPU VRAM with system RAM over PCIe 4.0, plus NVMe as a third tier, letting consumer GPUs run larger models. The overflow path has 10-30x less bandwidth than native VRAM.

- Perfect for overnight batch workloads, fine-tuning, and experimentation where speed doesn’t matter. Fails for real-time inference, chatbots, and production APIs.

- Economics favor GreenBoost for budget developers: $100 for 64GB system RAM vs. $3,000-5,000 for GPU upgrades or $3,000 monthly cloud costs.

- Linux-only limitation excludes Windows/Mac users. Community response is mixed: respect for the engineering, skepticism about production utility.

- Released March 14 by Ferran Duarri, trending on Hacker News today. Check the GitLab repository and NVIDIA Developer Forums for community discussion.