On March 11, 2026, at the Ask 2026 conference, Perplexity CTO Denis Yarats announced that his company is moving away from Anthropic’s Model Context Protocol (MCP) in favor of traditional APIs and command-line interfaces. The announcement marks a dramatic reversal barely three months after Anthropic donated MCP to the Linux Foundation in December 2025, positioning it as the “USB-C for AI,” a universal standard for connecting AI agents to tools. The core issue is stark: in one documented deployment, MCP tool definitions consumed 72% of the available context window before the agent processed a single user message.

This isn’t a minor efficiency problem. It’s a fundamental architectural flaw, and it raises the question of whether Anthropic understands production AI deployment at scale.

The Token Economics Crisis: 143,000 Tokens Before Any Work Begins

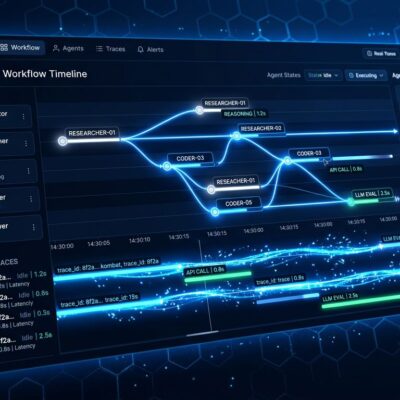

MCP’s tool definitions eat context windows for breakfast. Apideck documented one deployment where three MCP servers consumed 143,000 of 200,000 tokens (72% of the available context), leaving only 57,000 tokens for actual conversation, retrieved documents, and reasoning. Each MCP tool costs 550-1,400 tokens just for its name, description, and JSON parameter schema. Load 43 tool definitions (a typical production deployment), and at that rate you’ve spent roughly 24,000-60,000 tokens before processing any user request.
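The budget arithmetic is easy to check. Below is a minimal Python sketch using the figures quoted above; the function names are illustrative and not part of any MCP SDK, and the per-tool range is treated as a flat cost per definition.

```python
# Context-budget arithmetic using the figures quoted in the text.
# Helper names are illustrative, not from any real SDK.

CONTEXT_WINDOW = 200_000        # total tokens available
MCP_DEFINITIONS = 143_000       # tokens used by three MCP servers' tool definitions
PER_TOOL_TOKENS = (550, 1_400)  # reported cost range per tool definition


def budget_after_definitions(window: int, overhead: int) -> tuple[int, float]:
    """Return (tokens left for real work, fraction of the window consumed)."""
    remaining = window - overhead
    return remaining, overhead / window


def definition_overhead(num_tools: int, per_tool: tuple[int, int]) -> tuple[int, int]:
    """Low and high token cost of loading num_tools tool definitions."""
    low, high = per_tool
    return num_tools * low, num_tools * high


remaining, share = budget_after_definitions(CONTEXT_WINDOW, MCP_DEFINITIONS)
print(f"left for work: {remaining:,} tokens ({share:.1%} consumed)")

low, high = definition_overhead(43, PER_TOOL_TOKENS)
print(f"43 tools: {low:,}-{high:,} tokens of definitions")
```

Swapping in your own server count and per-tool sizes gives a quick estimate of how much of a window is left for actual work.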

The benchmarks are damning. Apideck’s CLI alternative uses an 80-token prompt compared to MCP’s 55,000+ tokens of overhead, a reduction of roughly 690×, or nearly three orders of magnitude. Scalekit’s testing found that the simplest task, checking a repository’s programming language, consumed 1,365 tokens via CLI versus 44,026 tokens via MCP: a 32× overhead for an identical operation. Cloudflare estimated that exposing its API through a traditional MCP approach would consume 1.17 million tokens.
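The ratios follow directly from the quoted token counts. This sketch computes each overhead as a plain ratio and in orders of magnitude (base-10 logarithm). Since Apideck’s MCP figure is given as “55,000+,” its ratio is a lower bound.

```python
import math

# (label, MCP tokens, alternative tokens) as quoted in the benchmarks above.
BENCHMARKS = [
    ("Apideck CLI prompt vs MCP schemas", 55_000, 80),
    ("Scalekit repo-language task", 44_026, 1_365),
]


def overhead(mcp_tokens: int, alt_tokens: int) -> tuple[float, float]:
    """Return (ratio, orders of magnitude) of MCP tokens over the alternative."""
    ratio = mcp_tokens / alt_tokens
    return ratio, math.log10(ratio)


for label, mcp, alt in BENCHMARKS:
    ratio, orders = overhead(mcp, alt)
    print(f"{label}: {ratio:.1f}x ({orders:.2f} orders of magnitude)")
```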

Context windows are finite and expensive. When 72% of your context is consumed by tool definitions, you have a business model problem. Production deployments serving thousands of users can’t afford this overhead—it directly impacts profit and loss. This isn’t fixable with minor optimizations; it’s baked into MCP’s design.
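To make the profit-and-loss point concrete, here is a rough daily-cost sketch. The price and traffic figures are hypothetical placeholders, not any provider’s actual rates, and the calculation ignores prompt caching, which some providers offer and which would lower the cost of re-sending an identical prefix.

```python
# Placeholder inputs (assumptions): substitute real pricing and traffic.
PRICE_PER_MILLION_INPUT_TOKENS = 3.00  # hypothetical USD rate, not a quoted price
REQUESTS_PER_DAY = 10_000              # hypothetical daily request volume


def daily_overhead_cost(overhead_tokens: int, requests: int, price_per_million: float) -> float:
    """USD per day spent re-sending a fixed prompt prefix with every request.

    Prices input tokens only and ignores prompt caching.
    """
    return overhead_tokens * requests * price_per_million / 1_000_000


mcp_cost = daily_overhead_cost(143_000, REQUESTS_PER_DAY, PRICE_PER_MILLION_INPUT_TOKENS)
cli_cost = daily_overhead_cost(80, REQUESTS_PER_DAY, PRICE_PER_MILLION_INPUT_TOKENS)
print(f"MCP definitions: ${mcp_cost:,.2f}/day; 80-token CLI prompt: ${cli_cost:,.2f}/day")
```

With these placeholder inputs, re-sending 143,000 tokens of definitions costs about $4,290 per day, versus $2.40 for an 80-token prompt.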

Industry Fragmentation: APIs, CLIs, and Code Mode Replace MCP

Major companies aren’t waiting for Anthropic to fix MCP. They’re building alternatives. Perplexity launched their Agent API in February 2026—a single endpoint that routes to models from OpenAI, Anthropic, Google, xAI, and NVIDIA with built-in tools, all accessible under one API key using OpenAI-compatible syntax. No schema bloat. No per-server authentication headaches. Just a traditional API that does the job.

Y Combinator CEO Garry Tan built a custom CLI instead of using MCP, citing “reliability and speed” requirements for production systems. Apideck created a CLI with an 80-token agent prompt that replaces tens of thousands of tokens of MCP schema through progressive disclosure: the agent loads command details only when it needs them. Scalekit’s benchmarks show CLIs winning on every efficiency metric they measured: 10 to 32× cheaper, with 100% reliability versus 72% for MCP.
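Progressive disclosure can be sketched in a few lines. In this hypothetical Python example, the `tool` CLI name, its commands, and their flags are invented for illustration and are not Apideck’s actual interface. Only a one-line base prompt is loaded up front; the command list and each command’s full usage enter the context only when the agent asks for them. Whitespace word counts stand in for a real tokenizer.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Command:
    name: str
    summary: str  # one line, shown in the command list
    usage: str    # full flag documentation, shown only on request


# Hypothetical commands for illustration; not Apideck's real CLI.
COMMANDS = {
    "contacts": Command(
        name="contacts",
        summary="List, read, and create CRM contacts",
        usage=(
            "contacts list [--limit N] [--cursor C]\n"
            "contacts get <id>\n"
            "contacts create --name NAME [--email EMAIL]"
        ),
    ),
    "invoices": Command(
        name="invoices",
        summary="List and read accounting invoices",
        usage="invoices list [--status open|paid]\ninvoices get <id>",
    ),
}

# Level 0: the only text placed in the agent's prompt up front.
BASE_PROMPT = "Use the `tool` CLI. Run `tool help` to list commands, `tool help <command>` for usage."


def help_text(command: Optional[str] = None) -> str:
    """Level 1 with no argument (one line per command); level 2 for a named command."""
    if command is None:
        return "\n".join(f"{c.name}: {c.summary}" for c in COMMANDS.values())
    cmd = COMMANDS.get(command)
    return cmd.usage if cmd else f"unknown command: {command}"


def rough_tokens(text: str) -> int:
    """Whitespace word count as a crude stand-in for a real tokenizer."""
    return len(text.split())


# An agent working only with contacts loads three small pieces of text,
# never the usage for every command.
loaded = [BASE_PROMPT, help_text(), help_text("contacts")]
print(sum(rough_tokens(t) for t in loaded), "words loaded on demand")
```

The design choice is that context grows with what the agent actually uses rather than with the size of the whole tool catalog.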

Meanwhile, Cloudflare developed “Code Mode,” a technique where agents write code against typed SDKs instead of calling individual tools. For Cloudflare’s API, Code Mode cuts what would have been 1.17 million MCP tokens to under 1,000, a reduction of more than 99.9%. When multiple independent companies (Perplexity, Apideck, YC, Cloudflare) arrive at the same conclusion, that MCP’s overhead is unacceptable, it signals an industry reckoning, not isolated complaints.
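The core idea of Code Mode can be sketched in Python. This is not Cloudflare’s implementation: `FakeSDK`, its methods, and the zone data are hypothetical. The model writes one script against a typed interface, the script runs outside the model, and only the final `result` comes back, so intermediate data such as the full zone list never enters the context window.

```python
from dataclasses import dataclass


@dataclass
class Zone:
    id: str
    name: str
    plan: str


class FakeSDK:
    """Stand-in for a typed SDK; methods and data are hypothetical."""

    def __init__(self) -> None:
        self._zones = [
            Zone("z1", "example.com", "free"),
            Zone("z2", "example.org", "pro"),
            Zone("z3", "example.net", "pro"),
        ]
        self._record_counts = {"z1": 12, "z2": 40, "z3": 5}

    def list_zones(self) -> list[Zone]:
        return list(self._zones)

    def dns_record_count(self, zone_id: str) -> int:
        return self._record_counts[zone_id]


# Code the model would write in a single turn, instead of one tool call per step.
AGENT_CODE = """
pro_zones = [z for z in sdk.list_zones() if z.plan == "pro"]
result = sum(sdk.dns_record_count(z.id) for z in pro_zones)
"""


def run_agent_code(code: str, sdk: FakeSDK) -> object:
    """Run agent-written code and return only its `result` variable.

    A single globals dict keeps `sdk` visible inside generator expressions.
    Trimming builtins is NOT a sandbox; real systems need an isolated runtime.
    """
    namespace = {"__builtins__": {"sum": sum}, "sdk": sdk}
    exec(code, namespace)
    return namespace["result"]


print(run_agent_code(AGENT_CODE, FakeSDK()))
```

Here the script returns 45 (the DNS records across the two "pro" zones), and only that single number would be sent back to the model.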

Three Months From Linux Foundation to Public Abandonment

The timeline exposes the gap between institutional legitimacy and production reality. December 9, 2025: Anthropic donates MCP to the Linux Foundation’s newly formed Agentic AI Foundation, co-founded with OpenAI and Block, supported by Google, Microsoft, AWS, Cloudflare, and Bloomberg. MCP had 97 million monthly SDK downloads and 200+ servers in its ecosystem. The protocol looked like an industry standard.

February 2026: Perplexity quietly launches its Agent API as an MCP alternative. March 11, 2026: Perplexity’s CTO publicly announces the move away from MCP at Ask 2026. Three months from institutional endorsement to public rejection by a major adopter.

The speed of this reversal shows how quickly hype collapses when it meets production scale. Linux Foundation involvement gave MCP credibility and implied industry consensus, but that credibility couldn’t overcome token economics. This is Anthropic’s “emperor has no clothes” moment: the protocol looked like a standard until major players actually tried to deploy it. Did Anthropic design MCP for its own needs (Claude Desktop) without understanding what production deployment requires? The evidence suggests yes.

Where MCP Works and Where It Fails

MCP isn’t universally bad—it’s just mispositioned. It works well for IDE integrations like VS Code, Cursor, and Claude Desktop, where developers need AI assistants with rich project context. A single user with comprehensive tool access benefits from dynamic discovery. Rich context has value when you’re not paying for it at production scale.

But MCP fails catastrophically for production agent APIs serving many users. Token efficiency directly impacts costs when you’re serving thousands of requests. The industry is converging on a pragmatic hybrid: MCP for desktop/IDE tools where context richness matters, traditional APIs and CLIs for production agents where token economics are paramount.

Perplexity still runs an MCP Server for developers who want it—backward compatibility matters. But their flagship Agent API uses traditional routing because that’s what production demands. This pattern will likely become standard: niche protocol for desktop tools, lightweight APIs for everything else. Anthropic’s mistake was believing “universal” meant “works for all use cases” instead of recognizing that different contexts demand different tools.

Key Takeaways

- MCP’s context overhead (40-72% consumption before any work) makes it impractical for production AI agents at scale—this isn’t a bug, it’s fundamental to the design

- Industry leaders are fragmenting rather than standardizing: Perplexity’s Agent API, Apideck’s CLI (80 tokens vs. 55,000+), Cloudflare’s Code Mode (99.9% reduction), and YC’s custom tooling show the shift away from MCP

- The three-month timeline from Linux Foundation donation to public abandonment reveals the gap between institutional legitimacy and production reality: hype collapsed when it met token economics

- MCP works for IDE/desktop integrations where rich context has value; it fails for production agent APIs where token efficiency determines profitability

- Token economics may make “universal” AI protocols inherently unviable—expect continued fragmentation, not convergence on a single standard

The software industry spent decades learning that one size doesn’t fit all for APIs. We’re relearning that lesson with AI agent protocols. MCP will likely survive as a niche standard for desktop assistants. But Anthropic’s vision of a universal protocol for all AI agent integrations? That died three months after the Linux Foundation made it official.