Meta announced a $60 billion AI chip deal with AMD on February 24, 2026, the largest single hardware procurement in “Magnificent Seven” history. The five-year agreement will deploy 6 gigawatts of custom AMD MI450 GPUs starting in H2 2026, with performance-based warrants allowing Meta to acquire up to 160 million AMD shares. The announcement came just days after Meta committed to millions more Nvidia Blackwell and Rubin GPUs. Why is Meta, Nvidia's second-largest customer, betting $60 billion on AMD when CUDA lock-in makes switching painful and ROCm still trails the leader? Because Nvidia's supply constraints and near-monopoly pricing power have turned vendor diversification from a nice-to-have into a strategic necessity.

The Deal: Custom Silicon at Gigawatt Scale

AMD's custom MI450 GPU, built on the 2nm CDNA 5 architecture, packs 432 GB of HBM4 memory and delivers 20 PFLOPS at FP8 precision. Each rack in Meta's AMD Helios architecture houses 72 MI450 GPUs producing roughly 1.4 exaFLOPS at FP8 (72 × 20 PFLOPS), enough compute for training and inference at Meta's Llama 4 scale. The first gigawatt of deployments ships in the second half of 2026, paired with 6th Gen AMD EPYC “Venice” CPUs and ROCm software.
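The rack-level figure follows directly from the per-GPU numbers quoted above. As a quick sanity check, here is a minimal sketch that multiplies them out. It uses only the article's stated specs (peak FP8 throughput, not sustained), and the function names are illustrative.

```python
# Per-GPU and per-rack figures as quoted in the article (peak, not measured).
GPUS_PER_RACK = 72
PFLOPS_PER_GPU_FP8 = 20   # peak FP8 throughput per MI450
HBM_GB_PER_GPU = 432      # HBM4 capacity per MI450


def rack_exaflops(gpus: int = GPUS_PER_RACK,
                  pflops_per_gpu: float = PFLOPS_PER_GPU_FP8) -> float:
    """Peak rack throughput in exaFLOPS (1 exaFLOPS = 1,000 PFLOPS)."""
    return gpus * pflops_per_gpu / 1000


def rack_hbm_terabytes(gpus: int = GPUS_PER_RACK,
                       gb_per_gpu: float = HBM_GB_PER_GPU) -> float:
    """Total HBM per rack in terabytes (decimal, 1 TB = 1,000 GB)."""
    return gpus * gb_per_gpu / 1000


print(f"{rack_exaflops():.2f} exaFLOPS FP8 per rack")
print(f"{rack_hbm_terabytes():.1f} TB of HBM4 per rack")
```

The product comes to 1.44 exaFLOPS, which the headline figure rounds down to 1.4, alongside about 31 TB of pooled HBM4 per rack.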

The equity component is where things get interesting. Meta receives warrants for up to 160 million AMD shares, roughly 10% of the company, that vest as shipment milestones are met. This structure, nearly identical to AMD's OpenAI deal, pushes the potential total value past $100 billion and locks in mutual strategic interest. If Meta succeeds with AMD, both companies win big.

Why Meta Cannot Afford Nvidia Dependency

Nvidia reported $68.1 billion in quarterly revenue in January 2026, $51 billion of it from data center alone, yet still described itself as “supply-constrained at record levels.” Blackwell GPUs are backlogged for over a year. Hyperscalers like Meta, Microsoft, and Google dominate allocation, but even they cannot get enough chips. Memory prices surged 30% in Q4 2025, with another 20% increase expected in early 2026. Alibaba's CEO warned the undersupply could last two to three years.
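The two memory price increases compound rather than add. A small sketch of the arithmetic, assuming the expected 20% rise applies on top of the already-raised Q4 2025 price (the article does not say which baseline it uses):

```python
def compound_increase(*pct_steps: float) -> float:
    """Cumulative percentage increase after applying each step in sequence."""
    factor = 1.0
    for pct in pct_steps:
        factor *= 1 + pct / 100
    return (factor - 1) * 100


# 1.30 * 1.20 = 1.56, so the combined rise is larger than 30 + 20.
print(f"{compound_increase(30, 20):.0f}% cumulative increase")
```

Under that assumption, memory would cost about 56% more than it did before the Q4 2025 surge.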

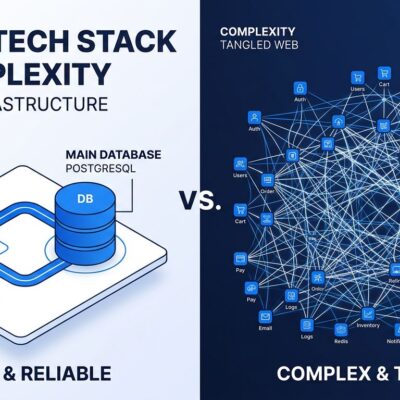

For Meta, this creates three unacceptable risks. First, supply constraints threaten Llama 4 scaling plans: you cannot train multimodal models if you cannot secure GPUs. Second, Nvidia's pricing power is unchecked when customers have no alternatives. Third, CUDA lock-in creates long-term strategic vulnerability. Proprietary ecosystems are fine when the vendor delivers, but catastrophic when it cannot or will not.

The $60 billion AMD commitment is not vendor replacement; it's insurance. Meta still needs Nvidia for maximum-performance workloads where CUDA's 18-year ecosystem lead matters. But for Llama-scale training and inference, AMD offers a credible second source that keeps Nvidia honest on both supply and pricing.

The ROCm Reality: Production-Ready With Caveats

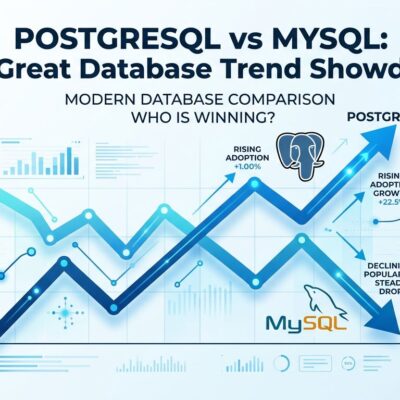

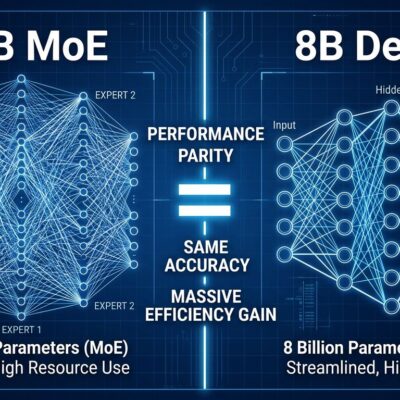

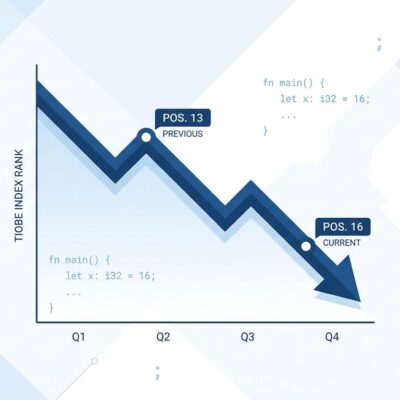

CUDA remains 10-30% faster than ROCm for most workloads, backed by unmatched libraries (cuDNN, cuBLAS, TensorRT) and a deep pool of developer talent. Migrating off CUDA means porting kernels, retraining teams, and giving up years of accumulated optimization. AMD's HIP and HIPIFY tools automate parts of the translation, but complex code still needs manual tuning.
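A speed gap of that size translates into a price gap the slower platform has to cover. The sketch below works out the break-even discount, reading “10-30% faster” as a throughput ratio of 1.1x to 1.3x. It considers hardware cost per unit of work only and ignores porting and operational costs, so treat it as a lower bound rather than a full cost model.

```python
def breakeven_discount(speed_advantage_pct: float) -> float:
    """Discount (%) the slower platform needs to match price-performance.

    If platform A is `speed_advantage_pct` percent faster than platform B,
    B matches A's cost per unit of work when B costs 1 / (1 + advantage)
    of what A costs.
    """
    cost_ratio = 1 / (1 + speed_advantage_pct / 100)
    return (1 - cost_ratio) * 100


for advantage in (10, 30):
    discount = breakeven_discount(advantage)
    print(f"{advantage}% faster -> {discount:.1f}% discount to break even")
```

On this reading, an AMD system would need to cost roughly 9% to 23% less than its Nvidia counterpart to deliver the same work per dollar.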

Yet ROCm has closed the gap dramatically in 2025-2026. PyTorch now officially supports ROCm on Linux as a first-class platform rather than an experimental one. Benchmarks from August 2025 showed AMD's MI325X with ROCm achieving near-parity with Nvidia's H100 in large language model training. For Llama 3 405B and DeepSeek-V3 670B specifically, AMD's MI300X beat Nvidia's H100 on both absolute performance and price-performance.
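In practice, ROCm builds of PyTorch reuse the `torch.cuda` namespace, so most device-selection code runs unchanged on AMD hardware. The sketch below shows one way to tell which backend a given build targets by reading `torch.version.hip` and `torch.version.cuda`. The helper name `describe_backend` is illustrative, and the script degrades gracefully if PyTorch is not installed.

```python
from types import SimpleNamespace


def describe_backend(torch_module) -> str:
    """Report whether a PyTorch build targets ROCm, CUDA, or neither.

    ROCm builds set `torch.version.hip` to a version string and leave
    `torch.version.cuda` as None; CUDA builds do the opposite.
    """
    version = getattr(torch_module, "version", None)
    hip = getattr(version, "hip", None)
    cuda = getattr(version, "cuda", None)
    if hip:
        return f"rocm {hip}"
    if cuda:
        return f"cuda {cuda}"
    return "cpu-only"


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed")
    else:
        # torch.cuda.is_available() also reports AMD GPUs on ROCm builds.
        print(describe_backend(torch), "| GPU visible:", torch.cuda.is_available())
```

Passing the module in as an argument keeps the check easy to exercise without a GPU, for example with a `SimpleNamespace` stand-in for `torch`.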

The honest assessment: ROCm is production-ready for specific workloads, notably Meta's Llama-scale models. It's not CUDA-equivalent across the board, and installation remains more complex. But it's good enough to reduce vendor risk, especially when AMD custom-designs silicon for your exact use case. Meta's scale justifies the ROCm investment. Smaller organizations might not have this luxury.

The End of “CUDA or Nothing”

Meta is not alone in pursuing multi-vendor GPU strategies. Google runs TPUs, AWS built Trainium, and Microsoft developed Maia. The industry consensus is clear: single-vendor dependency is unacceptable for AI infrastructure at scale. Nvidia's CUDA moat remains the strongest in tech, but supply constraints hand competitors an opening they are aggressively exploiting.

For developers, this shift matters even at smaller scale. Meta's $60 billion commitment legitimizes AMD in AI infrastructure. Framework developers now have stronger reason to optimize for ROCm alongside CUDA, and more tooling, libraries, and community support should follow. Learning ROCm becomes more valuable as dual CUDA/ROCm expertise widens career options.

The broader point: vendor diversity in AI infrastructure benefits the entire ecosystem. Price competition improves. Supply expands. Strategic options multiply. If Meta can run Llama 4 on AMD, others can too—from enterprises to startups building on open models.

Will Meta Actually Deploy 6 Gigawatts?

Announcements are not deployments. The real test comes when Meta attempts to migrate production Llama workloads to ROCm at scale. Challenges include operational complexity of dual-vendor infrastructure, ROCm ecosystem gaps versus CUDA maturity, and unproven MI450 performance (benchmarks do not exist yet for silicon shipping in H2 2026).

Success factors favor Meta. Its scale justifies custom silicon investment. The MI450 is designed specifically for its workloads, not generic AI. The $60 billion at stake gives AMD every incentive to give Meta priority support. And the MI300X has already proved competitive for Llama models, so the MI450 on 2nm should improve further.

Realistic outcome: AMD likely handles 20-40% of Meta's GPU needs by 2027, with Nvidia remaining primary for maximum-performance workloads. That's enough to prove multi-vendor AI infrastructure works at hyperscale, giving other enterprises a blueprint to follow.

Bottom Line

Meta's $60 billion AMD bet signals the end of Nvidia's unchallenged GPU monopoly in AI. This is not anti-Nvidia ideology; it's risk management. Supply constraints, pricing power, and CUDA lock-in made diversification strategically necessary. ROCm is production-ready for Llama-scale workloads even if it's not CUDA-equivalent everywhere. And vendor competition benefits developers across the entire AI ecosystem, from hyperscalers to solo engineers.

The question is not whether ROCm is perfect. It's not. The question is whether it's good enough to reduce Meta's vendor risk. At $60 billion over five years with custom silicon, the answer appears to be yes.