Superpowers, a new agentic skills framework from Perl veteran Jesse Vincent, gained 1,528 GitHub stars in 24 hours on February 26, 2026, rocketing to #2 on GitHub’s global trending list. The framework’s tagline isn’t subtle: “a framework & software development methodology that works.” The implicit criticism is clear: existing agent frameworks don’t work, or don’t help developers actually ship code. The explosive adoption suggests Vincent hit a nerve.

The agent framework landscape is crowded. LangGraph, CrewAI, AutoGen, and dozens of others compete for developer mindshare. Yet pilots fail, doom loops persist, and teams still burn $500,000 or more in engineering salaries on custom connectors for projects that end up shelved. The question isn’t whether developers want agent frameworks. It’s whether any framework actually helps them ship.

Why 1,528 Stars in 24 Hours Matters

Developer fatigue with overhyped agent frameworks is real. In 2026, AI agent pilots fail primarily due to integration issues, not LLM failures. According to research on why agent pilots fail, three causes dominate: bad memory management (dumb RAG), broken input/output (brittle connectors), and lack of event-driven architecture (polling tax). Consequently, senior engineers spend months building custom connectors for pilots that get shelved. That’s $500,000+ in salary for plumbing instead of product.

Superpowers’ explosive adoption signals an unmet need. Developers aren’t searching for more features or complexity. They want frameworks that help them ship code, not just demo capabilities. The “that works” positioning resonates because competitors overpromise and under-deliver. Simon Willison, a respected voice in the developer community, called Vincent’s approach “wildly more ambitious than most.” That’s not hype. That’s recognition.

Mandatory Workflows, Not Optional Suggestions

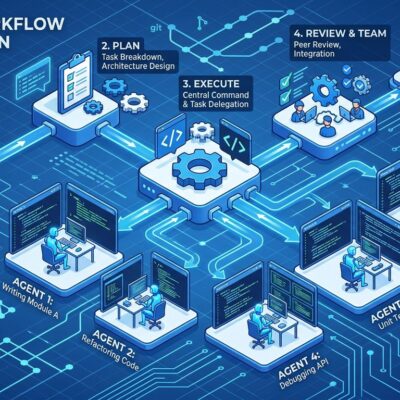

Superpowers differentiates through enforcement, not options. The framework implements four mandatory principles: test-driven development (TDD), systematic methodology over guessing, complexity reduction through simplicity, and evidence-based verification before completion. None of this is a suggestion. It’s the workflow.

The process is deliberate. Rather than coding immediately, the agent first asks clarifying questions. It then presents the design in digestible chunks for validation and breaks the implementation plan into tasks of 2-5 minutes each. Subagent-driven development enables extended autonomous work with a two-stage review: specification compliance first, then code quality. The goal is quality, not speed.
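The plan-then-review flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Superpowers’ actual implementation: `Task`, `oversized_tasks`, and `two_stage_review` are hypothetical names, and the two check functions stand in for whatever reviewers a real setup would use.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Task:
    """One planned unit of work (hypothetical structure, not from Superpowers)."""
    name: str
    estimate_minutes: int


def oversized_tasks(plan: List[Task], low: int = 2, high: int = 5) -> List[str]:
    """Return the names of tasks whose estimate falls outside the 2-5 minute window."""
    return [t.name for t in plan if not low <= t.estimate_minutes <= high]


def two_stage_review(
    change: str,
    meets_spec: Callable[[str], bool],
    is_clean: Callable[[str], bool],
) -> List[Tuple[str, bool]]:
    """Check specification compliance first; check code quality only if that passes."""
    results = [("spec_compliance", meets_spec(change))]
    if results[0][1]:
        results.append(("code_quality", is_clean(change)))
    return results


plan = [Task("write failing test for parser", 3), Task("rewrite the whole module", 45)]
print(oversized_tasks(plan))  # lists the 45-minute task, which should be split further

# Toy checks (assumptions): the "spec" wants a function named parse,
# and "quality" simply forbids TODO markers.
review = two_stage_review(
    "def parse(text): return text.split()",
    meets_spec=lambda src: "def parse" in src,
    is_clean=lambda src: "TODO" not in src,
)
print(review)
```

Ordering the stages this way means a change that misses the specification never reaches the quality check, so reviewer effort is not spent polishing the wrong thing.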

This contrasts sharply with competitors. LangGraph, CrewAI, and AutoGen offer optional patterns. Developers can follow best practices or skip them. Superpowers removes that choice. TDD is mandatory. Code review is mandatory. Design-first is mandatory. The trade-off: less flexibility, more quality. For developers tired of agents that generate code without tests or ignore architectural constraints, mandatory enforcement is a feature, not a bug.
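To make “mandatory TDD” concrete, one possible gate is a check that refuses to treat work as complete unless a test was observed failing before it passed. The sketch below is an assumption about how such a rule could be expressed, not code from Superpowers; `RunRecord` and `red_green_verified` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RunRecord:
    """One recorded test execution (hypothetical structure)."""
    test_name: str
    passed: bool


def red_green_verified(history: List[RunRecord], test_name: str) -> bool:
    """Return True only if the test was seen failing and its most recent run passed."""
    runs = [r for r in history if r.test_name == test_name]
    if not runs or not runs[-1].passed:
        return False  # no evidence at all, or the latest evidence is a failure
    # At least one failing ("red") run must precede the final passing ("green") run.
    return any(not r.passed for r in runs[:-1])


history = [RunRecord("test_parse", False), RunRecord("test_parse", True)]
print(red_green_verified(history, "test_parse"))  # failed first, then passed

only_green = [RunRecord("test_parse", True)]
print(red_green_verified(only_green, "test_parse"))  # never observed failing
```

A test that has only ever passed is rejected here because it gives no evidence that the test can detect the missing behavior, which is the point of writing it first.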

The Competitive Landscape Reveals Gaps

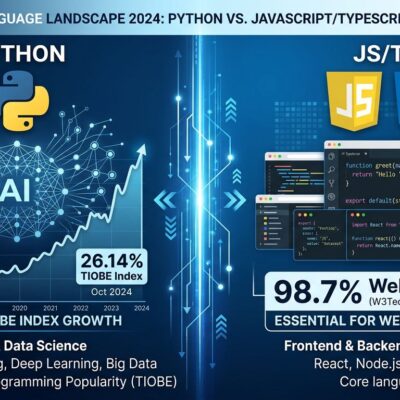

LangGraph is built for complex, stateful workflows. It offers precise control over execution order, branching, and error recovery. The cost is a steep learning curve and verbose boilerplate, and the framework is overkill for simple use cases. It requires a deep understanding of state machines and asynchronous programming. According to framework comparisons, you can set up a CrewAI project in an afternoon, while a robust LangGraph implementation requires significant investment. It’s for critical infrastructure where failure costs millions.

CrewAI champions time-to-production for standard business workflows. Its role-based collaboration model lets teams deploy multi-agent systems 40% faster than with LangGraph. The cost is token efficiency. CrewAI uses nearly 4x more tokens than Microsoft Agent Framework, because its role-playing architecture inflates every run with verbose system prompts and inter-agent communication. For budget-conscious teams, that matters.
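A rough back-of-the-envelope calculation shows why a 4x token multiplier matters for budgets. Every number below is an illustrative assumption (tokens per run, run volume, and price are not taken from any benchmark), and the multiplier is rounded up from “nearly 4x.”

```python
def monthly_token_cost(
    tokens_per_run: int, runs_per_month: int, usd_per_million_tokens: float
) -> float:
    """Monthly spend in USD for a given per-run token count, volume, and price."""
    return tokens_per_run * runs_per_month * usd_per_million_tokens / 1_000_000


# All values are assumptions chosen only to illustrate the arithmetic.
BASELINE_TOKENS_PER_RUN = 10_000
TOKEN_MULTIPLIER = 4            # upper bound for "nearly 4x"
RUNS_PER_MONTH = 50_000
USD_PER_MILLION_TOKENS = 3.0

baseline = monthly_token_cost(
    BASELINE_TOKENS_PER_RUN, RUNS_PER_MONTH, USD_PER_MILLION_TOKENS
)
inflated = monthly_token_cost(
    BASELINE_TOKENS_PER_RUN * TOKEN_MULTIPLIER, RUNS_PER_MONTH, USD_PER_MILLION_TOKENS
)

print(f"baseline:   ${baseline:,.2f}/month")
print(f"4x tokens:  ${inflated:,.2f}/month")
print(f"difference: ${inflated - baseline:,.2f}/month")
```

Because cost scales linearly with tokens, the multiplier carries straight through to the bill regardless of which price or volume is plugged in.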

AutoGen focuses on conversational multi-agent systems with group decision-making. It’s the slowest due to chat-heavy consensus-building overhead. Agents can loop endlessly, requiring timeouts, turn limits, and referee logic. The non-deterministic nature means agents might debate productively or get stuck arguing forever. For production use, that’s risky.
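The guards mentioned above (timeouts, turn limits, and referee logic) can be illustrated with a framework-agnostic loop. This sketch deliberately does not use AutoGen’s API; `run_with_guards`, the toy agents, and the referee rule are all hypothetical stand-ins.

```python
import time
from typing import Callable, List, Sequence, Tuple

Agent = Callable[[List[str]], str]  # an agent maps the transcript so far to a reply


def run_with_guards(
    agents: Sequence[Agent],
    is_resolved: Callable[[List[str]], bool],
    max_turns: int = 8,
    timeout_s: float = 30.0,
) -> Tuple[List[str], str]:
    """Alternate between agents until the referee is satisfied or a limit is hit."""
    transcript: List[str] = []
    deadline = time.monotonic() + timeout_s
    for turn in range(max_turns):
        if time.monotonic() > deadline:
            return transcript, "timeout"
        speaker = agents[turn % len(agents)]
        transcript.append(speaker(transcript))
        if is_resolved(transcript):
            return transcript, "resolved"
    return transcript, "turn_limit"


# Two toy agents that never agree with each other.
def optimist(_transcript: List[str]) -> str:
    return "ship it"


def pessimist(_transcript: List[str]) -> str:
    return "not yet"


def referee(transcript: List[str]) -> bool:
    """Toy rule: the debate is resolved once two consecutive messages match."""
    return len(transcript) >= 2 and transcript[-1] == transcript[-2]


transcript, status = run_with_guards([optimist, pessimist], referee, max_turns=6)
print(status, len(transcript))  # the stubborn pair stops at the turn limit
```

Note that the timeout here is only checked between turns, so a single slow agent call can still overrun it; a production setup would also need a per-call timeout.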

All three frameworks share common traits: they are Python-based, feature-heavy, and treat best practices as optional. Superpowers targets the gap they leave: Shell-based (76% Shell versus 100% Python), mandatory workflows, simplicity-first. It’s a different bet on what developers need.

Obra’s Credibility: Pragmatic Engineer, Not VC Hype

Jesse Vincent, known online as obra, brings a track record that matters. He served as Perl 6 project manager from 2005 to 2008 and later led the Perl 5.12 and 5.14 releases. He created Request Tracker, a widely used ticket-tracking system, and founded Best Practical Solutions. He built K-9 Mail, the Android email app now owned by Mozilla and rebranded as Thunderbird for Android. In 2014, he co-founded Keyboardio, an ergonomic keyboard company that ships physical hardware.

This background differs from that of the typical AI framework founder. Vincent isn’t a VC-funded startup pitching the next big thing. He’s an independent developer with 20+ years of shipping software that people use. Perl culture values getting things done over language purity, and the hardware experience at Keyboardio demonstrates an understanding of real constraints, not just abstract code. His reputation is for practical tools that solve real problems, not feature demos that don’t ship.

Contrast this with competitors. LangGraph comes from LangChain, a VC-backed company. CrewAI is a venture-funded startup. AutoGen comes out of Microsoft Research. There’s nothing wrong with institutional backing, but it shapes priorities. Vincent’s independent position allows him to focus on developers shipping code, not investor metrics or enterprise sales.

Skepticism and Open Questions

Hacker News discussion reveals healthy skepticism. One commenter stated: “Seems cute, but ultimately not very valuable without benchmarks.” Another compared the agent framework hype to “NFT/Crypto bros of the AI world.” The concerns are valid. Superpowers launched today. There are no benchmarks. Rigorous evaluation is difficult with non-deterministic LLMs. A/B testing isn’t straightforward. Day 1 hype doesn’t guarantee sustained value.

Token economics remain unclear. Do sub-agents justify their consumption? Simon Willison noted that AI tools “make development harder work” despite productivity gains, creating cognitive overhead and mental exhaustion. One developer reported that a 2.2K-line CLAUDE.md file worked better than elaborate systems. Another said they now write more code manually to maintain control. Using coding agents heavily resembles being promoted to technical lead: it requires design decisions, documentation, and rigorous code review.

The “trivial” debate persists. Critics argue examples are too simple to prove value. Defenders counter that 21-file changes demonstrate real-world applicability, and the value lies in eliminating noise, not replacing core logic. Both sides have points.

Open questions remain. Is Superpowers genuinely different, or just better marketing? What does “works” mean when LangGraph and CrewAI have millions of users? Will mandatory workflows backfire by reducing flexibility? Is 1,528 stars in 24 hours sustainable adoption or Day 1 hype?

Fair assessment: Mandatory TDD is genuinely different from optional suggestions. Shell-based implementation is genuinely different from Python complexity. The focus on simplicity over features is a clear philosophical divergence. However, no benchmarks exist yet. Rigorous evaluation hasn’t happened. It’s early days.

Key Takeaways

- Superpowers gained 1,528 GitHub stars in 24 hours, trending #2 globally, signaling unmet developer need for agent frameworks that help ship code rather than demo features.

- The framework differentiates through mandatory workflows (TDD, code review, design-first) versus competitors’ optional patterns, trading flexibility for enforced quality.

- The Shell-based (76% Shell), simplicity-first approach contrasts sharply with Python-heavy, complex competitors like LangGraph (steep learning curve), CrewAI (4x token overhead), and AutoGen (slow consensus loops).

- Jesse Vincent’s credibility as Perl project lead, Request Tracker creator, and Keyboardio founder positions this as pragmatic engineering versus VC-funded hype, with 20+ years shipping software that people actually use.

- Skepticism is warranted given Day 1 launch, no benchmarks, and cognitive overhead concerns, but mandatory quality enforcement represents a genuinely different bet on what developers need from agent frameworks.