AI agents are hitting the enterprise reality wall in 2026. While vendors market them as “autonomous digital workers,” Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027, not because the models fail, but because organizations can’t operationalize them. The reason is simple: AI agents aren’t autonomous workers. They’re unreliable tools that require constant human supervision, and most enterprises are learning this the hard way.

The Numbers Don’t Lie: Massive Failure Rates

Recent data reveals catastrophic failure rates that vendor marketing conveniently ignores. Gartner’s June 2025 prediction isn’t alone: a Medium analysis of 847 AI agent deployments in 2026 found that 76% failed in production. RAND Corporation reports that 80% of AI projects never even reach production, a rate nearly double that of typical IT projects.

The root cause? Organizations treat agents as autonomous workers when they’re actually supervised tools. Gartner’s key insight is that projects fail due to “escalating costs, unclear business value or inadequate risk controls,” not technical incompetence. On top of that, only 34% of enterprises have AI-specific security controls in place, which exposes massive governance gaps.

The Performance Gap: 38-72% Isn’t Autonomous

Benchmarks tell the truth marketing materials hide. AI agents score 38-72% on standard tasks, compared with 72-95% for humans. OpenAI’s CUA achieves 38% on OSWorld system tasks and 58% on WebArena web tasks, against human baselines of 72% and 78%: gaps of 34 and 20 percentage points respectively. OpAgent reached 71.6% on WebArena in January 2026, the current state of the art, which is still below the human baseline.
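The gap figures follow directly from the quoted scores. A minimal sketch of the arithmetic, using only the CUA and human-baseline numbers from the paragraph above (the variable and function names are illustrative, not from any benchmark toolkit):

```python
# Scores in percent, as quoted in the paragraph above.
AGENT_SCORES = {"OSWorld": 38.0, "WebArena": 58.0}  # OpenAI CUA
HUMAN_BASELINES = {"OSWorld": 72.0, "WebArena": 78.0}


def gap_in_points(agent: dict[str, float], human: dict[str, float]) -> dict[str, float]:
    """Return human score minus agent score, per benchmark, in percentage points."""
    return {name: human[name] - agent[name] for name in agent}


gaps = gap_in_points(AGENT_SCORES, HUMAN_BASELINES)
for name, gap in gaps.items():
    print(f"{name}: {gap:.0f} percentage points behind the human baseline")
```

Note that these are percentage-point differences, not relative differences: on OSWorld the agent completes roughly half as many tasks as the human baseline.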

The gap widens with complexity. While simple WebVoyager tasks hit 87% success, system-level OSWorld tasks plummet to 38-42%. Agents also take 1.4× more steps than required, so efficiency suffers even when they succeed. Technical progress such as FDM-1’s 50x more efficient video encoding doesn’t solve the reliability problem. Impressive engineering? Absolutely. Ready for autonomous deployment? Not even close.

Developers Become Janitors, Not Builders

Here’s what vendor demos don’t show: developers spending more time on AI work, not less. A Harness survey found that 67% of developers spend more time debugging AI-generated code, and 68% spend more time fixing AI-related security issues. The supervision burden often exceeds the effort saved.

Harvard Business Review captured it perfectly in February 2026: “AI doesn’t reduce work, it intensifies it.” In other words, developers shift from building to debugging, reviewing, and cleaning up AI output. The fear is real: becoming “janitors for AI-generated code” rather than creators. AWS research shows that teams with excessive context switching (exacerbated by rapid AI task generation) deliver 40% less work and double their defect rate. As one business leader put it, AI agents require MORE supervision than the employees they replaced.

The Total Cost No One Mentions

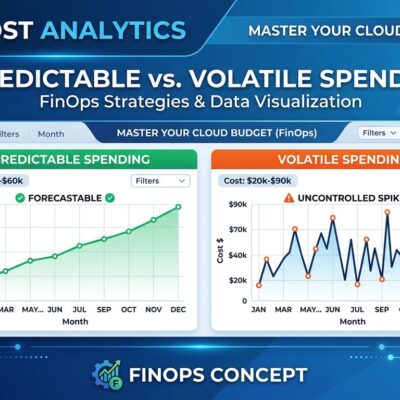

Vendor pricing looks attractive until you add the operational overhead. Running AI agents in production at enterprise scale requires observability infrastructure: logging ($300-$800/month), error tracking ($100-$500/month), analytics dashboards ($500-$2,000+ setup), and monitoring integrations ($300-$1,000/month). That’s $700-$2,300 in recurring monthly costs plus $500-$2,000+ in one-time dashboard setup, or $1,200-$4,300+ in the first month, before compute costs or developer supervision time.
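Summing the line items shows where the $1,200-$4,300 range comes from: it includes the dashboard setup fee alongside the recurring items. A minimal sketch that keeps the two apart, using only the figures quoted above (the `CostItem` type and `summarize` helper are illustrative names, and the open-ended "+" on the upper bounds is ignored):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostItem:
    name: str
    low: int         # lower bound in USD
    high: int        # upper bound in USD
    recurring: bool  # True = billed monthly, False = one-time setup


ITEMS = [
    CostItem("logging", 300, 800, recurring=True),
    CostItem("error tracking", 100, 500, recurring=True),
    CostItem("analytics dashboards", 500, 2000, recurring=False),
    CostItem("monitoring integrations", 300, 1000, recurring=True),
]


def summarize(items: list[CostItem]) -> dict[str, tuple[int, int]]:
    """Return (low, high) totals for monthly, one-time, and first-month costs."""
    monthly = (
        sum(i.low for i in items if i.recurring),
        sum(i.high for i in items if i.recurring),
    )
    setup = (
        sum(i.low for i in items if not i.recurring),
        sum(i.high for i in items if not i.recurring),
    )
    first_month = (monthly[0] + setup[0], monthly[1] + setup[1])
    return {"monthly": monthly, "setup": setup, "first_month": first_month}


totals = summarize(ITEMS)
for label, (low, high) in totals.items():
    print(f"{label}: ${low:,} to ${high:,}")
```

Compute costs and developer supervision time are not in these totals, because the text gives no figures for them.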

Observability tools aren’t optional; they’re mandatory. Tools like LangSmith, Langfuse, and AgentOps add 12-15% performance overhead just for monitoring, and developer supervision hours and error correction costs come on top of that. IBM notes that “AI shifts the cost curve from pure coding toward orchestration, governance, infrastructure, and oversight layers.” In other words, the total cost of AI agents often exceeds the return they deliver, especially when 40-76% of deployments fail.

From Hype to Reality: What Actually Works

This isn’t an anti-AI stance; it’s a reality check backed by data. Despite widespread failures, Gartner predicts that by 2028, 15% of routine work decisions will be handled by agentic AI (up from virtually zero today). The critical caveat: those agents will still be supervised and still require oversight. They will not be autonomous.

The successful path forward replaces the “autonomous worker” framing with “supervised tool requiring human oversight.” What works: low-stakes repetitive tasks like web scraping and data entry, with human verification. What doesn’t: high-stakes decisions, complex multi-step workflows, and any expectation of autonomy. Gartner’s recommendation cuts through the noise: “Use AI agents when decisions are needed, automation for routine workflows, assistants for simple retrieval,” not as worker replacements. The pendulum is swinging from hype to pragmatism in 2026. The 40-76% failure rates aren’t inevitable for teams that set realistic expectations and invest in proper governance.
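The works/doesn’t-work split above can be written down as an explicit routing rule, so that the decision about when to involve an agent is reviewable instead of ad hoc. The sketch below is one hypothetical way to do it: `Task`, `Route`, and the `MAX_AGENT_STEPS` threshold are assumptions made for illustration, not part of any vendor’s or Gartner’s framework.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AGENT_WITH_REVIEW = "agent runs the task, a human verifies the output"
    HUMAN = "a human handles the task"


@dataclass(frozen=True)
class Task:
    name: str
    high_stakes: bool
    steps: int  # rough count of dependent steps in the workflow


# Hypothetical cutoff for "complex multi-step workflow"; tune per team.
MAX_AGENT_STEPS = 5


def route(task: Task) -> Route:
    """Send only low-stakes, short tasks to an agent, always with human review."""
    if task.high_stakes or task.steps > MAX_AGENT_STEPS:
        return Route.HUMAN
    return Route.AGENT_WITH_REVIEW


examples = [
    Task("scrape product prices", high_stakes=False, steps=3),
    Task("approve a customer refund", high_stakes=True, steps=2),
    Task("migrate billing pipeline", high_stakes=False, steps=12),
]
for task in examples:
    print(f"{task.name}: {route(task).value}")
```

There is deliberately no route that lets an agent act without review, which matches the “supervised tool” framing.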