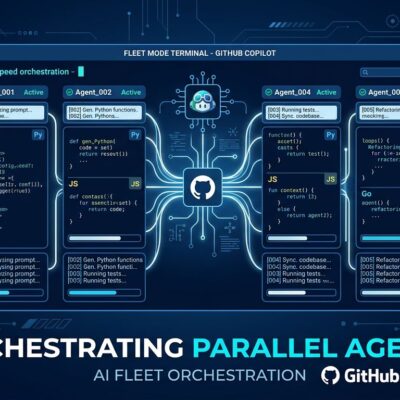

GitHub dropped two major updates to Copilot CLI in November 2025, bringing the AI model wars to developers’ terminals. On November 13, OpenAI’s GPT-5.1 series (GPT-5.1, GPT-5.1-Codex, and GPT-5.1-Codex-Mini) entered public preview. Five days later, on November 18, Google’s Gemini 3 Pro joined the lineup alongside significant CLI enhancements: bundled ripgrep for faster code search, drag-and-drop image support, and improved error handling.

For the first time, developers can choose between competing frontier models from OpenAI, Google, and Anthropic directly in their command-line workflow. This transforms GitHub Copilot CLI from a single-model assistant into a multi-model platform where you pick the best tool for each task.

Performance Claims and Reality Checks

Google claims Gemini 3 Pro delivers 35% higher accuracy than Gemini 2.5 Pro in resolving software engineering challenges, tested in VS Code. Meanwhile, OpenAI’s GPT-5.1 scored 76.3% on SWE-bench Verified, up from GPT-5’s 72.8%, with GPT-5.1-Codex-Max hitting 77.9% on the same benchmark. On LiveCodeBench Pro for algorithmic coding, Gemini 3 Pro leads with 2,439 Elo—roughly 200 points ahead of GPT-5.1.

Benchmark skepticism is healthy, though. These numbers come from lab conditions, not from messy real-world codebases with legacy code, unclear requirements, and tight deadlines. Performance improvements matter, since a 35% accuracy boost means less time debugging AI-generated code, but don’t expect miracles on day one.
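One reason to read these figures carefully is that they are not all on the same scale. The SWE-bench Verified scores are absolute percentages, while Google’s “35% higher” reads like a relative improvement over Gemini 2.5 Pro (an assumption; the article does not give the underlying scores). A minimal sketch of the difference, using only the numbers quoted above and two hypothetical helper functions:

```python
# Scores as quoted in the article; they are not independently verified here.
SWE_BENCH_VERIFIED = {
    "GPT-5": 72.8,
    "GPT-5.1": 76.3,
    "GPT-5.1-Codex-Max": 77.9,
}


def point_gain(old: float, new: float) -> float:
    """Absolute gain in percentage points (hypothetical helper)."""
    return round(new - old, 1)


def relative_gain(old: float, new: float) -> float:
    """Gain expressed as a percentage of the old score (hypothetical helper)."""
    return round((new - old) / old * 100, 1)


if __name__ == "__main__":
    baseline = SWE_BENCH_VERIFIED["GPT-5"]
    for name, score in SWE_BENCH_VERIFIED.items():
        if name == "GPT-5":
            continue
        print(
            f"{name}: +{point_gain(baseline, score)} points, "
            f"+{relative_gain(baseline, score)}% relative to GPT-5"
        )
```

For GPT-5.1 this works out to a gain of 3.5 percentage points, or about 4.8% relative to GPT-5. A “35%” headline and a “3.5 point” headline can therefore describe very different kinds of change, so it helps to check which one a vendor is quoting.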

Mario Rodriguez from GitHub tweeted: “Seeing a 35% boost in internal benchmarks. Try it in VS Code for agentic tasks and tell us how it changes your dev rhythm.” The invitation to test is telling: GitHub knows there is a gap between benchmarks and reality.

Model Specialization: Which One for What?

Each model excels at different tasks, and that’s the real story here. GPT-5.1-Codex is optimized for long-running agentic coding such as multi-file refactors and production code generation. It can work for 30 minutes on a complex task while following every detail of the instructions, whereas Claude tends to take shortcuts. A tech lead at Cisco Meraki reported: “We could assign GPT-5.1-Codex a refactoring of another team’s code and Codex delivered high-quality, fully tested code that integrated back smoothly.”

In contrast, Gemini 3 Pro leads in algorithm design and from-scratch code generation. Its multimodal capabilities shine when you drag and drop error screenshots for visual debugging. Claude Sonnet 4.5, the default model, is the fastest at quick queries: explanations, simple fixes, and rapid iteration.

GitHub’s Auto Mode learns your workflow and routes each request to the model it judges best. Users hit peak productivity by day 3 with Auto Mode, compared to 6-7 days when selecting models manually. That’s roughly twice as fast, just from letting AI handle the meta-task of picking the right AI.

Developer choice isn’t just marketing here. Different models genuinely excel at different tasks, so match the model to your workflow: Gemini for prototypes, GPT-5.1-Codex for production refactors, Claude for speed. This is the first time CLI developers get this level of flexibility without switching tools.
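The rule of thumb above can be written down as a small lookup table. This is only an illustrative sketch: the `Task` categories and the `pick_model` function are hypothetical, the strings are the display names used in this article rather than verified Copilot CLI model identifiers, and nothing here reflects how Auto Mode actually routes requests.

```python
from enum import Enum
from typing import Optional


class Task(Enum):
    """Hypothetical task categories taken from the article's rule of thumb."""
    PROTOTYPE = "prototype"
    PRODUCTION_REFACTOR = "production_refactor"
    QUICK_QUERY = "quick_query"


# Display names as used in the article, not verified CLI model identifiers.
RECOMMENDED_MODEL = {
    Task.PROTOTYPE: "Gemini 3 Pro",
    Task.PRODUCTION_REFACTOR: "GPT-5.1-Codex",
    Task.QUICK_QUERY: "Claude Sonnet 4.5",
}

# The article describes Claude Sonnet 4.5 as the default model.
DEFAULT_MODEL = "Claude Sonnet 4.5"


def pick_model(task: Optional[Task]) -> str:
    """Return the article's suggested model, or the default for unknown tasks."""
    if task is None:
        return DEFAULT_MODEL
    return RECOMMENDED_MODEL.get(task, DEFAULT_MODEL)


if __name__ == "__main__":
    for task in Task:
        print(f"{task.value}: {pick_model(task)}")
```

A table like this is easy to adjust as your own experience with each model accumulates, which matters more than any vendor benchmark.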

CLI Enhancements Beyond the Headlines

The November 18 update bundled ripgrep into Copilot CLI and added grep and glob tools for faster codebase search. This isn’t flashy, but it changes the workflow. ripgrep can be 10-100x faster than grep on large codebases, which lets Copilot gather context before it generates code. Better context means better suggestions.
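To make “search first, then generate” concrete, here is a minimal sketch of collecting matching lines with ripgrep from a script. It is not how Copilot CLI invokes ripgrep internally, which the article does not describe; `build_rg_command` and `search` are hypothetical helpers, and the sketch assumes an `rg` binary on your `PATH` (it returns an empty list otherwise).

```python
import shutil
import subprocess
from typing import List, Optional


def build_rg_command(pattern: str, root: str = ".", glob: Optional[str] = None) -> List[str]:
    """Build a ripgrep command line (hypothetical helper)."""
    # --line-number and --no-heading give one "path:line:text" entry per match.
    cmd = ["rg", "--line-number", "--no-heading"]
    if glob:
        # --glob restricts the search to files matching the pattern, e.g. "*.py".
        cmd += ["--glob", glob]
    # -e marks the next argument as the pattern, even if it starts with a dash.
    cmd += ["-e", pattern, root]
    return cmd


def search(pattern: str, root: str = ".", glob: Optional[str] = None) -> List[str]:
    """Run ripgrep and return matching lines, or [] if rg is not installed."""
    if shutil.which("rg") is None:
        return []
    result = subprocess.run(
        build_rg_command(pattern, root, glob),
        capture_output=True,
        text=True,
        check=False,  # rg exits with status 1 when nothing matches
    )
    return result.stdout.splitlines()


if __name__ == "__main__":
    for line in search("TODO", root=".", glob="*.py")[:10]:
        print(line)
```

The matching lines could then be passed to a model as context. Limiting the search with a glob keeps that context small and relevant.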

Other enhancements include the /share command for saving sessions as Markdown or GitHub gists, improved PowerShell support, and fixes for memory leaks and keyboard input issues on Windows Terminal. These aren’t the features that get TechCrunch headlines, but they’re the ones that make the tool genuinely useful rather than a novelty.

Developer Reaction: Choice Versus Complexity

Developer sentiment is mixed. Excitement about model choice pairs with confusion over selection UI. The CLI doesn’t clearly show which model is active, and switching requires the /model command. GitHub issue #34 requests dynamic model switching during runtime, highlighting a key UX pain point.

One Hacker News user noted: “The GitHub Copilot CLI interface doesn’t clearly show which model you’re using or how to switch between them.” Another common question on GitHub Community discussions: “Why can’t I switch GitHub Copilot models?” The UX gap suggests this feature was shipped before the interface was ready.

Despite this, Amanda Silver, Microsoft VP of Product for Developer Tools, emphasized the strategic bet: “Developer choice so you can do your best work.” That sounds like marketing, but Auto Mode backs it up—the AI handling model selection proves the concept works even if the manual UX needs polish.

This reveals the paradox of choice in developer tools. On one hand, power users love flexibility and will tolerate rough edges to get control. On the other hand, beginners feel overwhelmed by options and want smart defaults. GitHub’s trying to serve both with Auto Mode, but the CLI interface needs work to make manual selection less painful.

The Bigger Picture: Terminal Versus IDE Wars

GitHub Copilot CLI competes with Cursor AI, Aider, and Cline, each with its own trade-offs. Cursor is a full AI-first IDE at $20/month with deeper integration, but it requires switching editors. Aider is an open-source CLI that supports any LLM; it is free apart from API costs and gives you full model control, but its UX is worse. Copilot CLI balances ease and choice within the GitHub ecosystem at $10/month for Pro.

The CLI versus IDE debate reflects different developer workflows. If you live in the terminal—DevOps, backend, infrastructure work—Copilot CLI is the obvious choice. However, if you’re building from scratch and want AI-first UX, Cursor might win. This isn’t a winner-take-all market. Different tools for different needs.

The real insight is that the terminal, not just the editor, is becoming an AI-first interface. Model choice at the command line signals that AI coding assistance is maturing beyond autocomplete into genuine multi-model platforms. Whether GitHub executes the vision or gets outmaneuvered by Cursor and Aider depends on how fast it fixes the UX gaps.