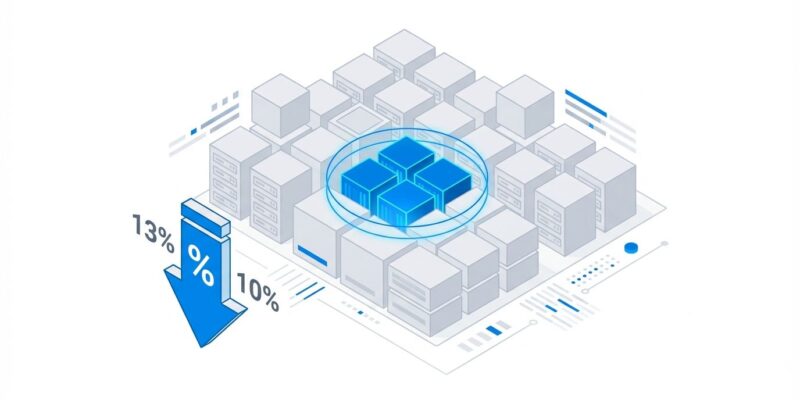

Cast AI’s 2025 Kubernetes Cost Benchmark Report exposes a paradox that is draining cloud budgets: average CPU utilization across Kubernetes clusters has declined to 10%, down from 13% the year before. Memory utilization sits at 23%. The over-provisioning gap stands at 40% for CPU and 57% for memory. Despite billions invested in FinOps tools and automation, Kubernetes efficiency is worsening, not improving. The $44.5B annual cloud waste crisis stems from a brutal truth: experienced DevOps engineers aren’t being hired fast enough to manage exploding cluster growth, forcing teams into manual infrastructure management that burns money at scale.

The Efficiency Paradox: More Tools, Worse Results

The Kubernetes waste crisis follows a counterintuitive pattern. FinOps awareness has never been higher. Companies deploy cost monitoring tools, implement tagging strategies, and hire consultants. Yet CPU utilization fell from 13% to 10% between 2023 and 2024. Memory utilization improved modestly from 20% to 23%, but the core problem intensified.

Cast AI analyzed 2,100+ organizations across AWS, GCP, and Azure from January through December 2024. The data reveals a troubling disconnect between provisioned infrastructure and actual usage. Organizations request resources from Kubernetes based on guesswork rather than measurement. Developers set CPU requests at easy round numbers—500 millicores, 1GB of memory—without profiling actual consumption.

The result: 40% of requested CPU capacity and 57% of requested memory go unused. Multiply this across hundreds of pods and dozens of clusters, and you get utilization rates that would embarrass a datacenter from 2005.

Why Manual Management is Failing at Scale

The root cause isn’t technology—it’s talent and process. Ninety-four percent of companies struggle to find qualified DevOps talent. Seventy-six percent of DevOps positions require Kubernetes expertise. Sixty percent demand senior-level experience, yet only 5% of open roles target junior engineers.

Cast AI’s report states this bluntly: “Experienced DevOps engineers are not hired fast enough and the lack of talented DevOps engineers results in a decline in efficiency. Many DevOps teams still manually manage infrastructure for Kubernetes applications, leading to significant overprovisioning and cloud waste.”

Manual infrastructure management worked when you had three clusters and five engineers. It collapses when you scale to thirty clusters managed by the same five engineers. Teams fall back on conservative provisioning, over-allocating CPU and memory “just in case” to avoid performance issues during traffic spikes. That safety buffer can double or triple costs.

The CNCF microsurvey on cloud-native FinOps confirms this pattern. Seventy percent of respondents cite over-provisioning as the top reason for rising Kubernetes costs. Forty-five percent point to “lack of ownership and accountability”—nobody actively monitors spend, so costs spiral until finance intervenes.

The Real Cost: $10M Waste and a 78% Reduction Case Study

The waste isn’t theoretical. Companies with 1,000+ nodes could reduce their wasted resources by $10 million per year by addressing over-provisioning. One organization discovered this the hard way: they ran 41 nodes averaging 3% CPU utilization and 4% memory utilization.

After analysis and optimization, they reduced their node count from 41 to 17. Monthly infrastructure costs dropped from $61,500 to $13,500—a 78% cost cut delivering $576,000 in annual savings.
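The arithmetic behind those figures is easy to check. The snippet below is a minimal sketch: the function name `savings_summary` is illustrative, and the inputs are simply the node counts and monthly costs quoted above.

```python
def savings_summary(monthly_before: float, monthly_after: float,
                    nodes_before: int, nodes_after: int) -> dict:
    """Summarize a before/after cost comparison for a cluster."""
    monthly_saved = monthly_before - monthly_after
    return {
        "monthly_saved": monthly_saved,
        "annual_saved": monthly_saved * 12,
        # Share of the original monthly bill that was eliminated.
        "percent_cost_cut": round(100 * monthly_saved / monthly_before, 1),
        # Share of the original node count that was removed.
        "percent_nodes_cut": round(100 * (nodes_before - nodes_after) / nodes_before, 1),
    }


summary = savings_summary(61_500, 13_500, nodes_before=41, nodes_after=17)
print(summary)
```

Note that the cost cut (about 78%) is larger than the node reduction (about 59%), which suggests the remaining nodes were also cheaper per node, for example through smaller instance types or different pricing.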

Adidas followed a similar path with automated Vertical Pod Autoscaler (VPA) enforcement. By rightsizing workloads based on actual usage rather than developer estimates, they reduced CPU and memory consumption by 30% and cut dev/staging cluster costs by up to 50%.
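Rightsizing from actual usage can be illustrated with a simple percentile rule. This is a hedged sketch, not the algorithm the Vertical Pod Autoscaler or Adidas actually uses: the function `recommend_request_m`, the 95th-percentile choice, and the 15% headroom are all assumptions made for illustration.

```python
def recommend_request_m(usage_samples_m: list[int],
                        percentile: int = 95,
                        headroom_pct: int = 15) -> int:
    """Suggest a CPU request (millicores) from observed usage samples.

    Takes the nearest-rank percentile of the samples and adds headroom.
    Integer arithmetic is used so results are exact.
    """
    if not usage_samples_m:
        raise ValueError("need at least one usage sample")
    ordered = sorted(usage_samples_m)
    # Nearest-rank percentile: ceil(percentile/100 * n), converted to a 0-based index.
    rank = max((percentile * len(ordered) + 99) // 100 - 1, 0)
    base = ordered[rank]
    # Add headroom and round up to a whole millicore.
    return (base * (100 + headroom_pct) + 99) // 100


# Hypothetical usage samples for a pod that currently requests 500m.
samples = [40, 45, 50, 55, 60, 48, 52, 47, 120, 50]
recommended = recommend_request_m(samples)
print(f"current request: 500m, recommended: {recommended}m")
```

With these made-up samples, the recommendation is driven by the single 120m spike rather than the round-number 500m request. A lower percentile would trim further at the cost of less burst headroom.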

The over-provisioning gap between requested resources and infrastructure used stood at 37% in 2022, widened to 43% in 2023, then improved slightly to 40% in 2024. Progress, but not enough: the gap remains massive, and actual CPU usage averages just 10% of provisioned capacity.

This is a Cross-Cloud Problem

Switching cloud providers won’t save you. AWS clusters average 11% CPU utilization. Azure clusters also average 11%. GCP clusters fall in the same 10-13% range. The problem stems from Kubernetes resource management practices, not cloud provider infrastructure.

Developers request resources without measuring actual consumption. Kubernetes schedules pods based on requests, not actual usage. If you request 500 millicores but consume 50, you’ve wasted 450 millicores of schedulable capacity. The cluster provisions enough nodes to satisfy requests, leaving most infrastructure idle.
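The request-versus-usage gap described above can be computed directly. The sketch below uses hypothetical pod names and numbers; only the 500m request with 50m of usage comes from the example in the text.

```python
from dataclasses import dataclass


@dataclass
class PodSample:
    name: str
    cpu_request_m: int  # CPU request in millicores (what the scheduler reserves)
    cpu_used_m: int     # observed CPU usage in millicores


def unused_millicores(pod: PodSample) -> int:
    """Schedulable capacity reserved by the request but not consumed."""
    return max(pod.cpu_request_m - pod.cpu_used_m, 0)


def usage_to_request_ratio(pods: list[PodSample]) -> float:
    """Total observed usage divided by total requested CPU."""
    requested = sum(p.cpu_request_m for p in pods)
    used = sum(p.cpu_used_m for p in pods)
    return used / requested if requested else 0.0


# Hypothetical workloads; the first mirrors the 500m/50m example.
pods = [
    PodSample("api", 500, 50),
    PodSample("worker", 1000, 400),
    PodSample("cron", 250, 25),
]

total_unused = sum(unused_millicores(p) for p in pods)
print(f"unused: {total_unused}m of {sum(p.cpu_request_m for p in pods)}m requested")
print(f"usage/request ratio: {usage_to_request_ratio(pods):.0%}")
```

This ratio compares usage to requests, which is a different measure from utilization of provisioned node capacity. Nodes usually carry unrequested slack as well, so node-level utilization tends to be lower still.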

This pattern holds across all major clouds because the underlying behavior—conservative provisioning driven by fear of performance issues—transcends infrastructure providers.

Solutions That Actually Work

The good news: the problem is fixable. Organizations running clusters with a mix of On-Demand and Spot Instances achieve 59% average cost savings. Clusters running exclusively on Spot Instances hit 77% average cost reduction.

Spot instances provide access to unused cloud capacity at steep discounts—typically 70-90% cheaper than On-Demand pricing. The tradeoff is potential interruption when the cloud provider needs capacity back. For fault-tolerant workloads like batch processing, CI/CD pipelines, and dev/test environments, this tradeoff delivers massive savings.
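To see how a Spot discount translates into a blended bill, consider the following sketch. The node count, the $0.10 hourly price, and the 70% discount are hypothetical inputs, and the model ignores interruption overhead, so it is not meant to reproduce the report's 59% and 77% averages.

```python
def blended_hourly_cost(node_count: int, on_demand_price: float,
                        spot_discount: float, spot_fraction: float) -> float:
    """Hourly cost of a node pool where `spot_fraction` of nodes run on Spot."""
    if not 0.0 <= spot_fraction <= 1.0:
        raise ValueError("spot_fraction must be between 0 and 1")
    if not 0.0 <= spot_discount <= 1.0:
        raise ValueError("spot_discount must be between 0 and 1")
    spot_nodes = node_count * spot_fraction
    on_demand_nodes = node_count - spot_nodes
    spot_price = on_demand_price * (1.0 - spot_discount)
    return on_demand_nodes * on_demand_price + spot_nodes * spot_price


def savings_percent(baseline: float, optimized: float) -> float:
    """Percentage saved relative to the baseline cost."""
    return 100.0 * (baseline - optimized) / baseline


# Hypothetical pool: 20 nodes at $0.10/hour On-Demand, Spot 70% cheaper.
baseline = blended_hourly_cost(20, 0.10, 0.70, spot_fraction=0.0)
mixed = blended_hourly_cost(20, 0.10, 0.70, spot_fraction=0.5)
all_spot = blended_hourly_cost(20, 0.10, 0.70, spot_fraction=1.0)

print(f"50% Spot:  {savings_percent(baseline, mixed):.1f}% saved")
print(f"100% Spot: {savings_percent(baseline, all_spot):.1f}% saved")
```

Under these assumptions, savings scale linearly with the share of nodes moved to Spot, so the practical limit is how much of the workload can tolerate interruption.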

Automation eliminates the manual bottleneck. Vertical Pod Autoscaler (VPA) continuously monitors actual resource consumption and adjusts CPU and memory requests automatically. Horizontal Pod Autoscaler (HPA) scales replica counts based on load. Cluster Autoscaler adds or removes nodes as capacity needs change.
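The scaling decision the Horizontal Pod Autoscaler makes follows a documented ratio rule: desired replicas equal the current replica count multiplied by the ratio of the current metric to the target metric, rounded up. The sketch below is a simplified illustration of that rule with the default 10% tolerance. It leaves out real HPA behavior such as min/max replica bounds, stabilization windows, and handling of pods that are not ready, and the function name is illustrative.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, tolerance: float = 0.1) -> int:
    """Simplified HPA rule: ceil(current_replicas * current / target)."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Skip scaling when the metric is close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# 4 replicas averaging 90% CPU against a 60% target: scale up.
print(desired_replicas(4, current_metric=90, target_metric=60))
# 4 replicas averaging 20% CPU against a 60% target: scale down.
print(desired_replicas(4, current_metric=20, target_metric=60))
# 63% against a 60% target is within tolerance: no change.
print(desired_replicas(4, current_metric=63, target_metric=60))
```

Because CPU utilization in this rule is measured relative to the pod's CPU request, inflated requests make pods look idle and distort scaling decisions. This is one reason rightsizing and autoscaling work best together.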

Cost-optimization platforms like Kubecost, OpenCost, and Cast AI take this further. They analyze usage patterns across workloads and either surface rightsizing recommendations or apply them automatically, with documented savings of up to 50%.

Six essential Kubernetes cost control strategies for 2025 include rightsizing workloads, implementing autoscaling, leveraging spot instances, establishing cost visibility, enforcing resource quotas, and cleaning up unused resources. CloudZero identifies nine ways to lower Kubernetes costs, emphasizing automation and continuous monitoring over manual adjustments.

The common thread: manual management can’t scale. Automation isn’t optional—it’s mandatory for efficient Kubernetes operations beyond small teams.

The Path Forward

Kubernetes efficiency is declining because DevOps teams can’t hire fast enough to manage cluster growth manually. The talent shortage isn’t temporary—94% of companies struggle to find qualified engineers, and only 5% of open positions target juniors who could build future talent pipelines.

Organizations have two choices: automate resource management or continue wasting 40-80% of Kubernetes spend. The tools exist. The strategies work: 78% cost reductions aren’t outliers; they’re achievable with proper implementation.

The $44.5 billion annual cloud waste crisis will worsen unless teams abandon manual provisioning. CPU utilization fell from 13% to 10% despite increased awareness and tool adoption because manual processes create the waste rather than fix it.

The irony: Kubernetes was built to automate infrastructure. Yet most teams manage it manually, defeating the purpose while burning budgets. The answer isn’t leaving Kubernetes—it’s using Kubernetes the way it was designed, with automation handling what humans can’t scale.