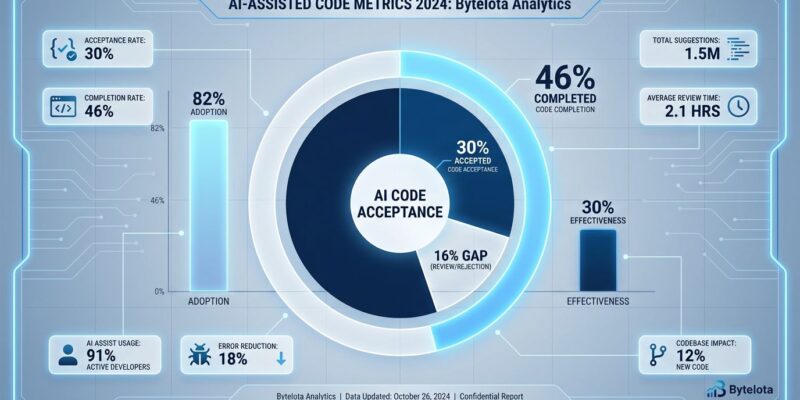

GitHub Copilot offers code completions at a 46% rate, but developers only accept approximately 30% of those suggestions. This 16-percentage-point acceptance gap exposes a fundamental tension in the AI coding revolution: adoption has exploded to 82% of developers using AI tools weekly, yet one-third of AI-generated code gets rejected before it ever reaches production. The gap between what AI offers and what developers trust reveals the difference between marketing hype and ground truth in 2025’s productivity landscape.

The Acceptance Gap: Quality Control, Not Waste

The 46% completion rate vs 30% acceptance rate isn’t a bug in GitHub Copilot—it’s developers exercising quality control. This 16-point gap represents human judgment filtering AI suggestions in real-time. In fact, 75% of developers manually review every AI-generated code snippet before merging, according to recent research from NetCorp Software. This behavior makes sense when you consider that 48% of AI-generated code contains security vulnerabilities, and GitClear’s analysis of 153 million code lines found 4x more code cloning with AI-assisted development compared to traditional approaches.
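To make the arithmetic behind the gap explicit, here is a minimal sketch. It takes the two headline figures exactly as this article frames them (46% offered, 30% accepted) and computes the gap both in percentage points and as a share of what was offered. The function name and the returned keys are illustrative, not part of any Copilot API.

```python
def acceptance_gap(offered_pct: float, accepted_pct: float) -> dict:
    """Compare an offer rate and an acceptance rate, both given in percent."""
    # Absolute difference, measured in percentage points.
    point_gap = offered_pct - accepted_pct
    # The same difference expressed as a share of what was offered.
    relative_drop_pct = point_gap / offered_pct * 100
    return {
        "point_gap": point_gap,
        "relative_drop_pct": round(relative_drop_pct, 1),
    }


# Figures as framed in this article: 46% offered, 30% accepted.
gap = acceptance_gap(46.0, 30.0)
print(gap)
```

The 16 is a difference in percentage points. Relative to the 46% offered, it works out to roughly 35%, which is where the "one-third rejected" figure comes from.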

The acceptance gap answers the critical question haunting every development team: does AI help or create busywork? If developers accepted 90% of suggestions, AI would be revolutionary. At 30%, AI functions more like a junior developer requiring constant supervision. The 16-point gap is the tax developers pay for AI assistance: time spent evaluating and rejecting low-quality suggestions.

However, this tax might be worth paying. Among developers who have adopted AI tools, 81% report noticeable productivity improvements in coding and testing. The key is understanding that the gap isn’t waste; it’s insurance against shipping vulnerable or unmaintainable code.

82% Adoption Doesn’t Equal 30% Effectiveness

Adoption statistics tell one story; acceptance rates tell another. Currently, 82% of developers use AI coding tools daily or weekly, up from 76% in 2024. Meanwhile, 59% run three or more tools simultaneously, and 41% of all code written in 2025 is AI-generated. JetBrains’ survey of 24,534 developers across 194 countries found 85% use AI tools regularly for coding tasks.

Nevertheless, this adoption surge masks a productivity paradox documented by Michael Hospedales’ research: teams complete 126% more feature requests while individual features take 19% longer to develop. Moreover, initial development speeds up by 67%, but code maintenance slows by 23%. Bug introduction rates jump 89%, and code review time increases 31%.

The tool stacking problem makes this worse. Teams using four or more AI tools show 34% lower productivity than those using one or two tools effectively. More tools don’t equal better results—they create decision paralysis and context-switching overhead that erodes the productivity gains AI promises.

This explains why companies celebrating 82% adoption might be measuring the wrong metric entirely. Adoption is not the same as effectiveness, and the 30% acceptance rate is the better indicator of actual value delivered.

The Technical Debt Time Bomb

Features with over 60% AI assistance take 3.4x longer to modify later, creating what researchers call a “technical debt time bomb.” While AI speeds initial development by 67%, the long-term costs mount quietly. Additionally, code maintenance becomes 23% slower. Debugging AI-generated code takes 2.1x longer than expected despite initially “working.” Code reviews transform into what developers call “archaeology sessions”—trying to understand code nobody on the team actually wrote.

The numbers reveal the hidden costs AI adoption creates. Short-term code churn increases while maintainable design principles decline. GitClear’s analysis found AI-assisted development produces 4x more code cloning, suggesting developers are accepting similar-looking solutions without understanding underlying patterns.

This is precisely why the acceptance gap matters. The 30% acceptance rate represents developers trying to avoid this technical debt bomb. Rejecting 70% of AI suggestions isn’t inefficiency; it’s quality control to prevent code that “works” now but creates 3.4x modification costs later. The 16-point gap between offering and accepting code isn’t waste; it’s insurance against future pain.

When AI Works and When It Doesn’t

Google reports 25% of its code is AI-assisted, delivering a 10% engineering velocity gain. Microsoft’s Q1 2025 study shows an average 3.5x ROI on AI investments, with 5% of companies seeing 8x returns. JetBrains found 9 in 10 developers save at least one hour weekly using AI, with one in five saving eight or more hours, equivalent to a full workday.

These success stories share a pattern: careful human oversight. One Hacker News user “fixed 109 years of open issues with 5 hours of guiding GitHub Copilot,” demonstrating what’s possible with skilled direction. GitHub’s internal usage data shows 400 employees used Copilot in over 300 repositories, merging almost 1,000 pull requests.

In contrast, workplace observations note “fairly obvious drops in code quality” for Copilot users without strong code review processes. A rigorous METR study published in July 2025 found experienced developers actually took 19% longer to complete tasks while believing they were 20% faster. Security concerns emerged with CVE-2025-53773, a remote code execution vulnerability via prompt injection, raising questions about acceptable risk levels for AI coding tools.

The difference between Google’s 10% velocity gain and the 19% slowdown METR measured among experienced developers? Implementation quality. Elite implementations treat AI as a junior developer requiring supervision. Average implementations trust AI suggestions without adequate review, accumulating technical debt that compounds over time.

Setting Realistic Expectations for Your Team

The 46% to 30% acceptance gap isn’t disappearing—it’s a feature, not a bug. AI tools will improve (Cursor’s multi-file reasoning shows 35-40% better performance on context-heavy tasks than Copilot), but developers will always filter suggestions. The critical question for teams: is the 30% acceptance rate worth the cost of evaluating the other 70%?
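One way to reason about that question is a simple break-even model. This is a rough sketch: every suggestion costs some review time, and only accepted suggestions pay anything back. The function names and the sample numbers (seconds saved, seconds to review) are hypothetical placeholders, not measurements from any study cited here.

```python
def net_seconds_saved(
    suggestions: int,
    acceptance_rate: float,
    seconds_saved_per_accept: float,
    seconds_to_review: float,
) -> float:
    """Time gained from accepted suggestions minus time spent reviewing all of them."""
    gained = suggestions * acceptance_rate * seconds_saved_per_accept
    spent = suggestions * seconds_to_review
    return gained - spent


def break_even_review_seconds(
    acceptance_rate: float, seconds_saved_per_accept: float
) -> float:
    """Largest per-suggestion review cost at which the tool still breaks even."""
    return acceptance_rate * seconds_saved_per_accept


# Illustrative assumptions only: 100 suggestions, 30% accepted,
# 20 seconds saved per accepted suggestion, 4 seconds to review each one.
net = net_seconds_saved(100, 0.30, 20.0, 4.0)
limit = break_even_review_seconds(0.30, 20.0)
print(f"net seconds saved: {net:.0f}, break-even review cost: {limit:.1f}s")
```

Under these assumed inputs the tool comes out ahead, and it stops paying for itself once the average review takes longer than the break-even value. Substituting a team's own measurements for the placeholders is the point of the exercise.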

The 2025 tool landscape offers options at every price point. Cursor ($20/month) excels at complex refactoring with multi-file reasoning. GitHub Copilot ($10/month) provides reliable inline suggestions that “finish your thoughts as you type.” Codeium offers a genuinely free tier with unlimited autocomplete. Google Gemini Code Assist ($75/developer/month) targets enterprises with 2.5x higher success rates compared to developers using no AI tools.

Developer attitudes reveal the practical middle ground. JetBrains’ survey found developers comfortable delegating boilerplate code generation, documentation, and repetitive tasks to AI. However, they prefer human control for debugging, architecture decisions, security implementation, and system integration. Interestingly, 52% still code recreationally after work hours, suggesting AI hasn’t killed the love of the craft.

Understanding the acceptance gap helps teams measure what matters. AI is a junior developer requiring supervision, not a senior replacement. The 30% acceptance rate is actually good if it saves time on boilerplate while preserving quality on complex logic. Teams need to track acceptance rates and code quality metrics, not just adoption percentages.
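As a starting point for that kind of tracking, the sketch below computes acceptance rates per task category from a log of suggestion events. The `SuggestionEvent` record, its `category` labels, and the sample data are all hypothetical; a real team would populate them from whatever telemetry its editor or AI tool exposes.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SuggestionEvent:
    category: str   # hypothetical label, e.g. "boilerplate" or "complex_logic"
    accepted: bool  # whether the developer kept the suggestion


def acceptance_by_category(events: list[SuggestionEvent]) -> dict[str, float]:
    """Return the fraction of suggestions accepted within each category."""
    shown: dict[str, int] = defaultdict(int)
    accepted: dict[str, int] = defaultdict(int)
    for event in events:
        shown[event.category] += 1
        if event.accepted:
            accepted[event.category] += 1
    # Every category in `shown` has at least one event, so no division by zero.
    return {category: accepted[category] / shown[category] for category in shown}


# Made-up sample log, for illustration only.
sample_events = [
    SuggestionEvent("boilerplate", True),
    SuggestionEvent("boilerplate", True),
    SuggestionEvent("boilerplate", False),
    SuggestionEvent("boilerplate", True),
    SuggestionEvent("complex_logic", False),
    SuggestionEvent("complex_logic", True),
    SuggestionEvent("complex_logic", False),
    SuggestionEvent("complex_logic", False),
]
rates = acceptance_by_category(sample_events)
for category, rate in rates.items():
    print(f"{category}: {rate:.0%} accepted")
```

Splitting the rate by category shows whether acceptance is concentrated in boilerplate, where the article argues AI helps most, or spread across complex logic, where closer review is warranted.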

The acceptance gap will shrink as AI improves, but it won’t disappear. Nor should it. That 16-point gap represents human judgment: the difference between code that ships and code that creates problems six months later.