A rigorous randomized controlled trial published in July 2025 found something shocking: experienced developers using AI coding tools took 19% longer to complete tasks than when they worked without AI assistance. The twist? Those same developers believed they were 20% faster. This roughly 40-percentage-point perception gap, documented by METR researchers across 246 coding tasks, reveals a productivity crisis built not on facts, but on feelings.

The study involved 16 experienced open-source developers who averaged five years of work on their specific repositories. Using frontier AI tools like Cursor Pro with Claude 3.5 Sonnet, developers worked on real issues in mature codebases averaging over 22,000 GitHub stars and one million lines of code. Before the study, participants predicted AI would make them 24% faster. After experiencing the 19% slowdown, they still estimated they’d gained a 20% speedup. If developers can’t accurately judge whether they’re getting faster or slower, how can teams make sound decisions about tools, workflows, or investments?
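To make the size of the gap concrete, here is a minimal sketch of the arithmetic. It assumes that "X% faster" can be read as an X% reduction in completion time, which is a simplification introduced here for illustration, and the function name is hypothetical.

```python
# Signed change in task completion time, in percentage points.
# Positive means slower with AI, negative means faster with AI.
MEASURED = 19     # tasks took 19% longer with AI (measured)
FORECAST = -24    # developers' prediction before the study
PERCEIVED = -20   # developers' estimate after the study


def belief_gap(measured: int, believed: int) -> int:
    """Distance in percentage points between the measurement and a belief."""
    return measured - believed


forecast_gap = belief_gap(MEASURED, FORECAST)
perception_gap = belief_gap(MEASURED, PERCEIVED)

print(f"Forecast vs. measured:  {forecast_gap} points")
print(f"Perceived vs. measured: {perception_gap} points")
```

Under that reading, the after-the-fact estimate misses the measurement by 39 points, or roughly 40, and the up-front forecast misses by 43.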

The Review Bottleneck Explains the Paradox

The psychological trick is simple: AI generates code quickly, creating an immediate sense of progress and accomplishment. Developers feel productive watching their screens fill with generated text. What they don’t notice is the extra time spent in review, debugging, and fixing what Stack Overflow’s 2025 survey identified as the number-one AI frustration: solutions that are “almost right, but not quite.” Forty-five percent of developers cite this as their biggest problem, while 66% report spending more time fixing nearly-correct AI code than they save on generation.

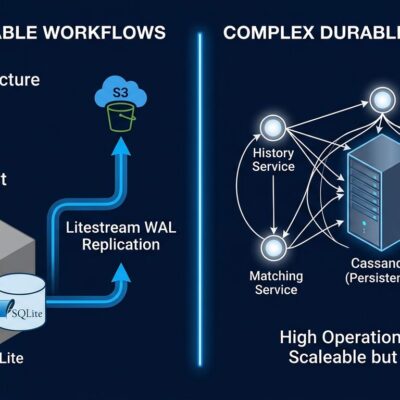

Research from Faros AI quantifies the bottleneck precisely. Teams with high AI adoption complete 21% more tasks and merge 98% more pull requests—impressive numbers at first glance. However, pull request review time increases 91%, creating a critical chokepoint that neutralizes the gains. The time breakdown reveals the shift: less time actively coding, reading documentation, and thinking; more time prompting AI, waiting for responses, reviewing outputs, and sitting idle while validating suggestions. The minutes saved on boilerplate get wiped out by the hours spent verifying, testing, and often rewriting AI-generated code.
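A back-of-the-envelope sketch shows why a review slowdown can cancel out coding gains. The 91% figure comes from the Faros AI numbers above; the baseline hours per pull request are invented for illustration, and the model deliberately ignores queueing, rework, and the higher volume of pull requests.

```python
REVIEW_TIME_INCREASE = 0.91  # review time rises 91% (Faros AI figure cited above)


def break_even_coding_reduction(
    coding_hours: float,
    review_hours: float,
    review_increase: float = REVIEW_TIME_INCREASE,
) -> float:
    """Fraction of coding time that must be saved just to offset extra review time."""
    if coding_hours <= 0:
        raise ValueError("coding_hours must be positive")
    extra_review_hours = review_hours * review_increase
    return extra_review_hours / coding_hours


# Hypothetical baseline: 6 hours of coding and 2 hours of review per pull request.
required = break_even_coding_reduction(coding_hours=6.0, review_hours=2.0)
print(f"Coding time must fall by {required:.1%} to break even")
```

With this assumed baseline, coding time has to drop by about 30% before the pull request gets any faster end to end. Teams with a heavier review share would need an even larger saving.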

Technical debt compounds the problem. Seventy-six percent of developers say AI-generated code demands refactoring, according to industry surveys. Meanwhile, the 2024 DORA report estimated that a 25% increase in AI adoption was associated with a 7.2% decrease in delivery stability and a 1.5% decrease in delivery throughput. AI commits get merged into production four times faster than regular commits, which sounds efficient until you consider the security implications: insecure code is bypassing normal review cycles.

The Organizational Reality Check

Individual developers feel faster. Teams show higher activity metrics. Yet these gains evaporate at the organizational level, where the correlation between AI adoption and business outcomes disappears entirely. Why? Because unchanged processes can’t accommodate the new patterns. Code reviews haven’t adapted to AI-generated volume. Testing pipelines weren’t designed for rapid-fire commits. Release processes still move at pre-AI speed. Consequently, any individual or team-level productivity boost gets absorbed by downstream bottlenecks.

This pattern mirrors another 2025 finding: Harness’s FinOps report projecting $44.5 billion in cloud waste, representing 21% of enterprise infrastructure spend. The cause? Fifty-two percent of engineering leaders cite disconnects between teams and limited visibility into actual resource usage. Fifty-five percent admit purchasing commitments are based on “guesswork.” The parallel is striking: companies are making multimillion-dollar investments in AI coding tools for every developer, justified by self-reported productivity gains that don’t translate to measurable business value. It’s spending based on perception rather than data, potentially creating a similar waste pattern.

Trust Is Declining While Adoption Soars

Stack Overflow’s 2025 Developer Survey reveals a telling contradiction. Eighty-four percent of developers now use AI tools, with 51% using them daily. JetBrains reports 85% regular usage across its 24,534-developer survey. Yet trust in AI accuracy has plummeted from 40% to just 29%, and only 3% of developers “highly trust” AI outputs. Forty-six percent actively distrust the tools they’re using daily. Furthermore, positive favorability dropped from 72% to 60% year-over-year.

This isn’t a fringe opinion from skeptics who refuse to adopt AI. This is the majority of developers who’ve integrated AI into their daily workflows discovering that the tools don’t deliver on the productivity promises. They’re not abandoning AI—adoption keeps rising—but they’re growing increasingly frustrated with the gap between marketing claims and reality.

The Measurement Problem We’re Not Talking About

The AI productivity narrative rests on vendor reports claiming 20-40% productivity increases, JetBrains surveys in which 90% of respondents self-report time savings, and developer testimonials about feeling faster. Meanwhile, the METR randomized controlled trial, a gold-standard research design, shows a 19% slowdown for experienced developers in familiar codebases.

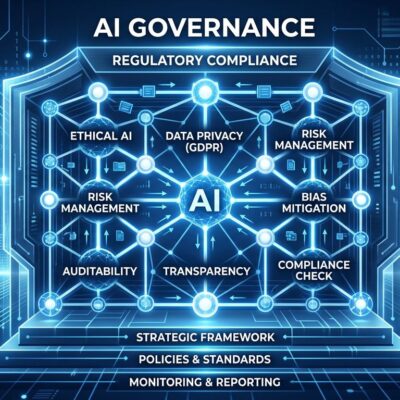

Most companies track the wrong metrics: lines of code written, code generated by AI, self-reported time savings. These are vanity metrics that create false confidence. What actually matters is end-to-end delivery time including review and testing, code quality and technical debt accumulation, security vulnerabilities introduced, team-level throughput rather than individual velocity, and ultimately, business value delivered.
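As one way to measure end-to-end delivery time rather than typing speed, the sketch below splits each change into coding, review, and release stages. The `ChangeRecord` schema and the sample timestamps are hypothetical; in practice the fields would come from whatever issue tracker and CI system a team already uses.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class ChangeRecord:
    """Hypothetical per-change timestamps pulled from a tracker and CI system."""
    started: datetime    # work began on the task
    pr_opened: datetime  # pull request opened
    merged: datetime     # pull request merged
    deployed: datetime   # change reached production


def stage_hours(record: ChangeRecord) -> dict[str, float]:
    """Hours spent in each stage, plus the end-to-end total."""
    def hours(start: datetime, end: datetime) -> float:
        return (end - start).total_seconds() / 3600

    return {
        "coding": hours(record.started, record.pr_opened),
        "review": hours(record.pr_opened, record.merged),
        "release": hours(record.merged, record.deployed),
        "end_to_end": hours(record.started, record.deployed),
    }


def median_hours(records: list[ChangeRecord], stage: str) -> float:
    """Median hours for one stage across all records."""
    return median(stage_hours(r)[stage] for r in records)


# Invented sample data for illustration only.
records = [
    ChangeRecord(datetime(2025, 1, 6, 9), datetime(2025, 1, 6, 13),
                 datetime(2025, 1, 7, 13), datetime(2025, 1, 7, 15)),
    ChangeRecord(datetime(2025, 1, 8, 9), datetime(2025, 1, 8, 11),
                 datetime(2025, 1, 10, 11), datetime(2025, 1, 10, 12)),
    ChangeRecord(datetime(2025, 1, 13, 9), datetime(2025, 1, 13, 15),
                 datetime(2025, 1, 13, 21), datetime(2025, 1, 14, 9)),
]

for stage in ("coding", "review", "release", "end_to_end"):
    print(f"{stage:>10}: median {median_hours(records, stage):.1f} h")
```

In this made-up sample the median change spends 4 hours in coding and 24 hours in review, so a dashboard that only tracks the coding stage would miss most of the delivery time.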

Research from DX found that the most effective AI use case isn’t code generation at all—it’s stack trace interpretation and explanation. When autonomous agents connect to richer project and architectural information, developer throughput improves up to 270% and code accuracy rises up to 60%. Context matters more than raw generation speed. However, that’s not what gets marketed, because “understand your errors better” doesn’t sell subscriptions like “code 10x faster” does.

Where We Go From Here

The METR researchers explicitly noted their study’s limitations: it focused on experienced developers working in familiar codebases, may not generalize to all scenarios, and represents a snapshot of early-2025 AI capabilities. Future AI may improve. Junior developers might benefit differently from experienced ones. Specific tasks might see genuine speedups.

But here’s what the evidence so far supports: the current narrative is oversold. The productivity gains companies are banking on don’t show up in rigorous measurement. The perception gap is real and significant. Moreover, we’re making strategic decisions about team structures, tool investments, and workflow changes based on feelings rather than facts.

Use AI coding tools if they work for your specific context. But demand objective measurement. Track review time, not just generation time. Compare end-to-end delivery metrics, not velocity during the typing phase. Run your own controlled experiments instead of trusting vendor claims. And above all, trust data over feelings—because the biggest productivity gain might come from learning to measure productivity accurately in the first place.
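For teams that want to run their own controlled experiment, a minimal sketch might randomly assign each task to an "AI allowed" or "AI disallowed" condition and then compare completion times with a permutation test. This is a generic statistical technique chosen for simplicity, not the analysis the METR researchers used, and the function names and sample hours below are invented for illustration.

```python
import random
from statistics import mean


def assign_conditions(task_ids: list[str], seed: int) -> dict[str, str]:
    """Randomly assign each task to the 'ai' or 'no_ai' condition."""
    rng = random.Random(seed)
    return {task: rng.choice(["ai", "no_ai"]) for task in task_ids}


def permutation_p_value(
    ai_times: list[float],
    no_ai_times: list[float],
    n_permutations: int = 10_000,
    seed: int = 0,
) -> tuple[float, float]:
    """Return (observed mean difference, two-sided permutation p-value)."""
    rng = random.Random(seed)
    observed = mean(ai_times) - mean(no_ai_times)
    pooled = list(ai_times) + list(no_ai_times)
    n_ai = len(ai_times)
    extreme = 0
    for _ in range(n_permutations):
        # Shuffle labels: under the null hypothesis the condition does not matter.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_ai]) - mean(pooled[n_ai:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimated p-value strictly above zero.
    return observed, (extreme + 1) / (n_permutations + 1)


# Invented completion times in hours, for illustration only.
ai_hours = [3.1, 4.0, 2.7, 5.2, 3.8]
no_ai_hours = [2.6, 3.3, 2.9, 4.1, 3.0]

difference, p_value = permutation_p_value(ai_hours, no_ai_hours)
print(f"Mean difference (AI minus no AI): {difference:+.2f} h, p = {p_value:.3f}")
```

Deciding the assignment before work starts is the important part: it keeps developers from choosing AI only for the tasks where they expect it to help, which is exactly the kind of perception bias the measured data is meant to bypass.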