On November 25, 2025, Nvidia, a $4 trillion company with roughly 90% of the AI chip market, did something it almost never does: it publicly defended itself. After reports emerged that Meta is considering switching to Google’s TPUs for its AI infrastructure, Nvidia posted on X that it is “a generation ahead of the industry” and “the only platform that runs every AI model and does it everywhere computing is done.” The stock dropped 7% before paring the loss to 4.3%. For a company that usually lets its dominance speak for itself, this defensive posture reveals something critical: Nvidia is genuinely spooked. And it should be.

The Tweet That Revealed Everything

Nvidia’s rare public defense came in response to reports that Meta is negotiating a multibillion-dollar deal to deploy Google’s TPUs in its data centers by 2027. The immediate trigger was a report from The Information detailing Meta’s plan to rent Google Cloud TPUs in 2026 before purchasing them outright the following year. For context, Meta is a customer worth more than $10 billion a year in AI chip spending, exactly the kind of revenue Nvidia can’t afford to lose.

Fortune called Nvidia’s X post “something rare,” and they’re right. When a company with 90% market share feels compelled to publicly defend its position, the competitive landscape has shifted. The stock market agreed: while Nvidia fell as much as 7%, Alphabet climbed 4.2% on the same day. This isn’t a confident giant swatting away a fly. This is a company sensing a genuine threat.

Inference Economics: Where Google Wins

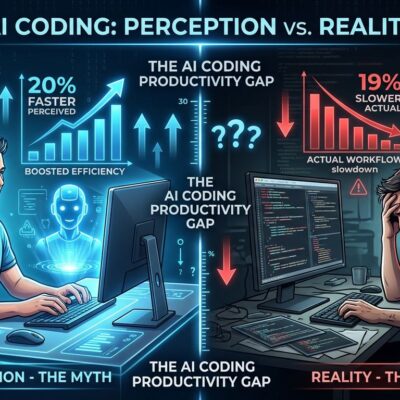

Google’s TPUs deliver 4x better performance per dollar for AI inference, with 60-65% lower power consumption than Nvidia’s GPUs. This matters because inference (running trained AI models billions of times) is projected to account for 70% of all AI compute demand by 2030. Demand for training, where Nvidia currently dominates, is flattening: each model is trained only once, while the inference workloads that serve it double every 12-18 months.
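To put the doubling claim in perspective, here is a minimal sketch of the compounding arithmetic. The five-year horizon is a hypothetical planning window chosen for illustration, not a figure from any of the reports.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Multiple by which a workload grows if it doubles every `doubling_months`."""
    return 2 ** (years * 12 / doubling_months)


if __name__ == "__main__":
    horizon_years = 5  # hypothetical planning horizon, not from the reports
    for months in (12, 18):
        factor = growth_factor(horizon_years, months)
        print(f"doubling every {months} months -> {factor:.1f}x in {horizon_years} years")
```

Under these assumptions, inference demand would grow somewhere between roughly 10x and 32x over five years, which is why per-call efficiency matters more than one-time training cost.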

The economics are brutal, though the reported numbers vary. Industry insiders cite a more conservative figure of up to 1.4x better performance per dollar, with some customers seeing 80% cost reductions when their deployment timelines are flexible. For companies running billions of inference calls daily, small efficiency advantages compound into massive savings. As one former Google Cloud executive put it: “For companies like Meta or Google, a 10% efficiency gain in inference translates to hundreds of millions of dollars in saved electricity and hardware.”
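A rough way to read the performance-per-dollar figures quoted in this section is to convert them into the share of spend saved for the same amount of work. The sketch below assumes cost scales inversely with performance per dollar and uses a hypothetical $5 billion annual inference budget; neither assumption comes from the reports.

```python
def cost_reduction(perf_per_dollar_ratio: float) -> float:
    """Fraction of spend saved when the same work runs at `ratio`x performance per dollar."""
    if perf_per_dollar_ratio <= 0:
        raise ValueError("ratio must be positive")
    return 1 - 1 / perf_per_dollar_ratio


def annual_savings(annual_spend_usd: float, perf_per_dollar_ratio: float) -> float:
    """Dollars saved per year at the given performance-per-dollar advantage."""
    return annual_spend_usd * cost_reduction(perf_per_dollar_ratio)


if __name__ == "__main__":
    # 1.1x models the "10% efficiency gain" in the quote; 1.4x and 4x are the cited figures.
    for ratio in (1.1, 1.4, 4.0):
        print(f"{ratio}x perf/$ -> {cost_reduction(ratio):.1%} lower cost")

    hypothetical_spend = 5e9  # assumed annual inference budget, for illustration only
    saved = annual_savings(hypothetical_spend, 1.1)
    print(f"${saved / 1e6:,.0f}M saved per year")
```

On these assumptions, a 1.4x advantage means about 29% lower cost and a 4x advantage means 75% lower cost, while a 10% gain on the hypothetical budget is worth about $455 million a year.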

Here’s the vulnerability: Nvidia’s CUDA moat is strongest for training, where 6 million developers are locked into the ecosystem. But inference doesn’t need CUDA’s flexibility. It needs cost efficiency and power savings. TPUs are purpose-built for exactly this workload: their systolic array architecture passes data directly between neighboring compute units, which drastically reduces memory bottlenecks. If inference becomes 70% of the market, Nvidia’s 90% share faces a real challenger.
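For readers who want intuition for why a systolic array eases memory pressure, here is a toy simulation of a matrix multiply. It models one textbook variant (output-stationary) and is not a description of Google’s actual TPU design; the function names are invented for this sketch. Operands are read from memory only at the array’s edges and then handed from one processing element to the next.

```python
def systolic_matmul(a, b):
    """Toy output-stationary systolic array computing a @ b.

    Returns (result, memory_reads). Operands enter at the left and top
    edges and are then passed between neighboring processing elements.
    """
    n, k, m = len(a), len(b), len(b[0])
    acc = [[0] * m for _ in range(n)]    # one accumulator per processing element
    a_reg = [[0] * m for _ in range(n)]  # A values flowing left to right
    b_reg = [[0] * m for _ in range(n)]  # B values flowing top to bottom
    memory_reads = 0

    for t in range(n + m + k - 2):
        for i in range(n):
            # Pass A values one step to the right, then feed the left edge.
            for j in range(m - 1, 0, -1):
                a_reg[i][j] = a_reg[i][j - 1]
            s = t - i  # row i is delayed by i steps
            if 0 <= s < k:
                a_reg[i][0] = a[i][s]
                memory_reads += 1
            else:
                a_reg[i][0] = 0
        for j in range(m):
            # Pass B values one step down, then feed the top edge.
            for i in range(n - 1, 0, -1):
                b_reg[i][j] = b_reg[i - 1][j]
            s = t - j  # column j is delayed by j steps
            if 0 <= s < k:
                b_reg[0][j] = b[s][j]
                memory_reads += 1
            else:
                b_reg[0][j] = 0
        # Every processing element multiplies its two inputs and accumulates.
        for i in range(n):
            for j in range(m):
                acc[i][j] += a_reg[i][j] * b_reg[i][j]

    return acc, memory_reads


def naive_operand_reads(n, k, m):
    """Operand reads if every multiply-accumulate fetched both inputs from memory."""
    return 2 * n * k * m


if __name__ == "__main__":
    result, reads = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
    print(result, reads, naive_operand_reads(2, 2, 2))
```

In this model each input element is fetched from memory once (n·k + k·m reads) instead of once per multiply (2·n·k·m reads), and the gap widens as the matrices grow.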

Google Proved You Don’t Need Nvidia

Google trained its flagship Gemini 3 model—competitive with GPT-4 and Claude—entirely on TPUs without touching a single Nvidia GPU. This destroys the narrative that “you need Nvidia for cutting-edge AI.” Multiple sources confirm Gemini 3 was trained exclusively on TPU v4 and v5 Pods, with industry analysis calling it “a first at this level” for proving Google’s custom silicon can compete at the frontier.

The TPU ecosystem is expanding beyond Google’s internal use. Anthropic, maker of Claude, just ordered 1 million TPUs in a deal worth tens of billions of dollars, covering both training and inference. When a leading AI company commits to that scale on non-Nvidia hardware, the “you need GPUs” argument starts to crack.

Nvidia’s defense relies on CUDA ecosystem lock-in: “the only platform that runs every AI model.” But frameworks like PyTorch and TensorFlow increasingly abstract hardware dependencies. Most developers no longer write CUDA code directly; they write framework-level code that can run on multiple backends. The moat isn’t as wide as Nvidia claims.

What Developers Should Know

The future isn’t GPU monopoly or TPU dominance—it’s multi-chip strategies. Smart companies are hedging: train on GPUs where CUDA still dominates, run inference on TPUs where economics matter more, and keep options open with AWS Trainium or AMD as backups.

Anthropic’s approach is instructive: an $8 billion investment from Amazon tied to Trainium-based training, a $3 billion investment from Google alongside the 1 million TPU order, plus Nvidia GPUs for specific workloads. This multi-cloud strategy kept Claude online during a recent AWS outage while competitors went dark. Meta appears to be following a similar path, considering TPU rental in 2026 and deployment in 2027 while maintaining its Nvidia relationship.

For infrastructure teams, the lesson is clear: evaluate TPUs for inference workloads where massive cost savings compound over billions of calls. Keep GPUs for training flexibility where CUDA’s ecosystem still delivers value. Don’t lock yourself into single-vendor dependencies. The days of “just use Nvidia for everything” are ending.

Key Takeaways

- Nvidia’s defensive X post signals genuine vulnerability – When a $4 trillion market leader publicly defends itself on X, the competitive dynamics have shifted. The stock’s 7% drop confirms investors see a real threat.

- Inference is projected to be 70% of AI compute by 2030, and TPUs win on economics – With a cited 4x better performance per dollar and 60-65% power savings, TPUs are purpose-built for the workload that matters most. Inference grows faster than training, and efficiency advantages compound over billions of calls.

- Google proved TPUs can train frontier models – Gemini 3 trained entirely on TPUs destroys the “you need Nvidia” narrative. Anthropic’s 1 million TPU order signals ecosystem expansion beyond Google’s walls.

- Smart strategy: Multi-chip infrastructure – Train on GPUs for CUDA flexibility, run inference on TPUs for cost efficiency, maintain vendor independence. The GPU monopoly era is ending—don’t assume Nvidia dominates forever.