On April 29, Mistral released a 128B open-weight model that scores 77.6% on SWE-Bench Verified, and paired it with a CLI coding agent that queues tasks, runs them in the cloud, and files pull requests on GitHub while you work on something else. An open-weight model at that score, shipped with an asynchronous agent, is a combination worth paying attention to.

Mistral Medium 3.5 consolidates three previous products — Devstral 2 (code), Magistral (reasoning), and its prior instruction model — into one dense 128B deployment. The model is open-weight, available on HuggingFace under a modified MIT license, and self-hostable on as few as four H100s with FP8 quantization.
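The four-GPU figure can be sanity-checked with a rough memory budget. The sketch below is only back-of-the-envelope arithmetic: the parameter count and GPU count come from the article, while the 80 GB per-GPU figure is an assumption about which H100 variant is used, and the function names are illustrative.

```python
# Rough memory budget for serving a dense 128B model on four GPUs.
# Assumption (not from the article): each H100 has 80 GB of memory.

PARAMS = 128e9             # dense parameter count quoted in the article
BYTES_PER_PARAM_FP8 = 1    # FP8 stores one byte per weight
BYTES_PER_PARAM_BF16 = 2   # 16-bit weights, shown for comparison
GPU_COUNT = 4              # minimum cluster size quoted in the article
GPU_MEMORY_GB = 80         # assumed H100 80 GB variant


def weight_footprint_gb(params: float, bytes_per_param: float) -> float:
    """Memory needed for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


def headroom_gb(params: float, bytes_per_param: float,
                gpu_count: int = GPU_COUNT,
                gpu_memory_gb: float = GPU_MEMORY_GB) -> float:
    """Memory left over for KV cache, activations, and runtime overhead."""
    total = gpu_count * gpu_memory_gb
    return total - weight_footprint_gb(params, bytes_per_param)


if __name__ == "__main__":
    for label, width in (("FP8", BYTES_PER_PARAM_FP8),
                         ("BF16", BYTES_PER_PARAM_BF16)):
        print(label,
              "weights:", weight_footprint_gb(PARAMS, width), "GB,",
              "headroom:", headroom_gb(PARAMS, width), "GB")
```

Under these assumptions the FP8 weights take about 128 GB of the 320 GB available, which leaves most of the cluster for the KV cache. The same model in 16-bit weights would leave far less room, which is one reason FP8 is what makes a four-GPU deployment plausible.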

What Changed and Why It Matters

Medium 3.5 is a dense transformer, not a mixture-of-experts architecture. Every parameter activates on every forward pass, which makes inference behavior predictable and self-hosted throughput easier to reason about. The 256,000-token context window handles large codebases without chunking gymnastics, and reasoning effort is configurable per API request — the same model handles a quick chat reply or a multi-hour agentic run without you switching endpoints.
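To make "configurable per API request" concrete, here is a minimal sketch of how a client might switch effort levels without switching endpoints. It only builds request bodies and sends nothing. The model id, the `reasoning_effort` field name, and the allowed values are all assumptions borrowed from OpenAI-style APIs, not confirmed details of Mistral's API.

```python
# Build per-request payloads that differ only in reasoning effort.
# Everything marked "hypothetical" is an assumption for illustration.

HYPOTHETICAL_MODEL_ID = "mistral-medium-3.5"        # hypothetical model id
HYPOTHETICAL_EFFORT_LEVELS = {"low", "medium", "high"}  # hypothetical values


def build_chat_payload(prompt: str, effort: str) -> dict:
    """Return an OpenAI-style chat payload with a per-request effort level."""
    if effort not in HYPOTHETICAL_EFFORT_LEVELS:
        raise ValueError(f"unknown effort level: {effort!r}")
    return {
        "model": HYPOTHETICAL_MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,  # hypothetical field name
    }


if __name__ == "__main__":
    # Same model and endpoint for both; only the effort setting changes.
    quick = build_chat_payload("Rename this variable.", "low")
    deep = build_chat_payload("Refactor the auth module.", "high")
    print(quick["reasoning_effort"], deep["reasoning_effort"])
```

Check the provider's API reference for the real parameter name before relying on this shape.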

The consolidation story is the most underrated part of this release. If you were routing traffic between Devstral 2 and Magistral depending on task type, you now use one model for both. That simplifies deployment, reduces latency variance, and cuts the maintenance surface. One model, one endpoint, one billing line.

The SWE-Bench Number, Honestly

77.6% on SWE-Bench Verified puts Mistral Medium 3.5 below Claude Sonnet 4.6 (79.6%) and well below Claude Code (87.6%), but ahead of most open-weight alternatives. That benchmark tests whether a model can resolve real GitHub issues from production repositories — not contrived puzzles — so the number carries real weight.

The fair counterpoint, which the Hacker News community raised immediately: Qwen 3.6 at 27B parameters scores 72.4% on the same benchmark and costs roughly one-eighth as much per million output tokens via API. Mistral didn’t publish MMLU, GPQA, or HumanEval results alongside the launch, which didn’t help the reception.

Where Mistral Medium 3.5 earns its premium is the combination of score, model size, and the self-hosting option. At 77.6%, it’s the strongest open-weight dense model for coding available right now. You can run it on your own infrastructure, which no closed-source competitor offers. That’s a real tradeoff, not a marketing claim.

Vibe: Fire-and-Forget Coding Agent

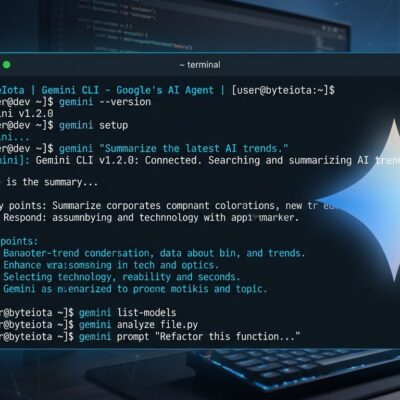

The more interesting part of this release is Vibe remote agents. Vibe is Mistral’s open-source CLI coding agent (Apache 2.0), and the new remote mode runs tasks in isolated cloud sandboxes that operate independently of your machine.

The interaction model is different from Claude Code or Cursor. You’re not pair-programming in a live session — you’re delegating work asynchronously. You queue a task, the agent runs, and you get a pull request when it’s done:

    # Install (Python 3.12+ required)
    pip install mistral-vibe

    # Configure your API key
    vibe --setup

    # Queue a remote task and walk away
    vibe --remote "Fix the authentication timeout bug in auth/middleware.py"

You can run multiple remote agents simultaneously on independent tasks. Vibe connects to GitHub for code and PR creation, Linear and Jira for issue context, and Sentry for incident details when you’re debugging a production bug. Session teleportation lets you hand off a local session to the cloud mid-task if you need to close your laptop.
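Queuing several independent tasks can be scripted. The sketch below wraps the `vibe --remote` invocation shown above and uses no other flags. It assumes the command returns once the task is queued rather than blocking until the agent finishes, which the article does not confirm. The helper names are illustrative.

```python
import subprocess


def remote_command(task: str) -> list[str]:
    """Build the argv for one remote task, using only the flag shown above."""
    return ["vibe", "--remote", task]


def queue_remote_tasks(tasks, runner=subprocess.run):
    """Submit each task in order and collect the CLI's exit codes.

    Assumption: `vibe --remote` exits after queuing the task. If it blocks
    until the agent is done, the tasks would run one after another instead.
    `runner` is injectable so the function can be exercised without the CLI.
    """
    exit_codes = []
    for task in tasks:
        completed = runner(remote_command(task), check=False)
        exit_codes.append(completed.returncode)
    return exit_codes


# Example usage (the second task description is made up for illustration):
#   queue_remote_tasks([
#       "Fix the authentication timeout bug in auth/middleware.py",
#       "Add unit tests for the retry helper",
#   ])
```

Passing each task as a separate list element, rather than building a shell string, avoids quoting problems when a task description contains quotes or special characters.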

This is the pattern that Cursor 3, GitHub Copilot App, and now Mistral are all converging on: async, PR-based delivery rather than inline autocomplete. The agent runs the work; you review a diff. Whether that workflow fits your team depends on how much you trust the output, which brings us back to the benchmark number.

Self-Host or API: The Honest Math

API pricing is $1.50 per million input tokens and $7.50 per million output tokens. The OpenAI-compatible endpoint means existing agent frameworks work with minimal changes.
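For a feel of what those rates mean per task, here is a small cost helper. The prices are the ones quoted above; the token counts in the example are made up for illustration.

```python
# API cost at the quoted rates: $1.50 per million input tokens,
# $7.50 per million output tokens.

INPUT_USD_PER_MILLION = 1.50
OUTPUT_USD_PER_MILLION = 7.50


def api_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the API cost in US dollars for one request or one batch."""
    return (input_tokens * INPUT_USD_PER_MILLION
            + output_tokens * OUTPUT_USD_PER_MILLION) / 1_000_000


if __name__ == "__main__":
    # Illustrative agent run: 200,000 tokens read, 20,000 tokens written.
    print(round(api_cost_usd(200_000, 20_000), 2))
```

Because output tokens cost five times as much as input tokens, runs that generate a lot of code or reasoning text are priced very differently from runs that mostly read a large codebase.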

The $7.50 output price drew criticism — “closed-source rates for open-source performance” was the most common complaint. The self-hosting path answers that directly. At FP8 precision, a four-GPU H100 cluster handles production inference at the full 256K context window. Breakeven against the API falls around 50–100 million tokens per day, or roughly 1.5–3 billion tokens per month. Below that threshold, the API is cheaper when you factor in GPU costs and operations. Above it, self-hosting saves 50–80%.
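The breakeven range can be roughly reproduced with a simple model. In the sketch below, the API prices and the four-GPU cluster come from the article. The GPU hourly rate and the 80/20 input-to-output token mix are assumptions chosen for illustration, and operations overhead is ignored entirely, so treat the result as a lower bound.

```python
# Daily token volume at which a rented GPU cluster costs the same as the API.
# Assumptions (not from the article): GPU hourly price, input/output mix.

INPUT_USD_PER_MILLION = 1.50    # from the article
OUTPUT_USD_PER_MILLION = 7.50   # from the article


def blended_usd_per_million(input_share: float) -> float:
    """Average API price per million tokens for a given input share (0..1)."""
    return (input_share * INPUT_USD_PER_MILLION
            + (1 - input_share) * OUTPUT_USD_PER_MILLION)


def breakeven_tokens_per_day(gpu_hourly_usd: float,
                             gpu_count: int = 4,
                             input_share: float = 0.8) -> float:
    """Tokens per day at which API spend equals raw GPU rental cost."""
    daily_cluster_cost = gpu_hourly_usd * gpu_count * 24
    return daily_cluster_cost / blended_usd_per_million(input_share) * 1_000_000


if __name__ == "__main__":
    # Hypothetical rental price of $2 per GPU-hour.
    tokens = breakeven_tokens_per_day(gpu_hourly_usd=2.0)
    print(f"{tokens / 1e6:.0f}M tokens/day")
```

With these illustrative inputs the breakeven lands at roughly 71 million tokens per day, inside the 50 to 100 million range quoted above. A higher GPU price or a more input-heavy mix pushes the threshold up, and the cluster also has to be able to serve that volume.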

Vibe works against the self-hosted endpoint too, so the remote agent infrastructure isn’t locked to Mistral’s cloud.

Bottom Line

Mistral Medium 3.5 isn’t the best model on any single benchmark. It’s positioned for teams that need strong open-weight coding performance and genuinely want the option to self-host, whether for cost at scale, data privacy requirements, or both. The Vibe remote agent is the practical differentiator: async coding delegation with GitHub integration is a workflow that has been hard to find in the open-source toolchain.

If you’re running Devstral 2 or Magistral today, the migration path is straightforward. If you’re evaluating open-weight coding models for the first time, compare it honestly against Qwen 3.6 on your actual usage pattern before committing to the API pricing. The weights are available — you can benchmark both.