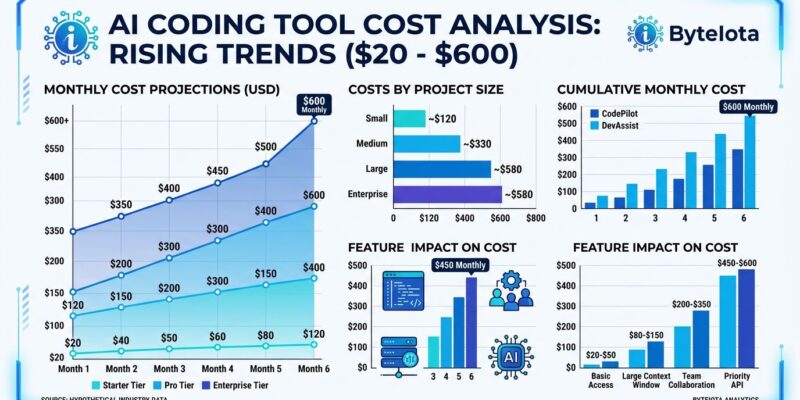

AI coding tools advertise $10-20 per month per developer, but fresh enterprise data from May 2026 tells a different story: the real cost is $200-600 per month when you factor in the complete picture. Here’s why the math doesn’t work the way vendors claim. Inline completion tools like GitHub Copilot Pro ($10/month) and Cursor Pro ($20/month) are just the base layer. Then you add agentic tools with usage-based token costs ranging from $200-2,000 per month depending on how heavily your team uses autonomous code generation. Most developers need both because no single tool handles all use cases, and suddenly that “$20/month” line item balloons to $400-500 per month in actual spend.

The gap between pricing pages and reality hit critical mass in April 2026 when GitHub paused new Copilot signups. Their explanation: “Agentic workflows have fundamentally changed Copilot’s compute demands” and “it’s now common for a handful of requests to incur costs that exceed the plan price.” The flat-rate era is over. In June 2026, GitHub transitions to AI Credits, a usage-based billing model that aligns pricing with actual token consumption. This isn’t temporary pricing pressure. This is the new normal.

The Two-Tier Cost Structure Nobody Mentions

AI coding tools operate on two distinct pricing tiers, and the industry doesn’t make this clear until you’re already committed.

Tier one is inline completion: autocomplete suggestions as you type. A single inline completion consumes roughly 500 tokens. Tools like GitHub Copilot Pro ($10/month) and Cursor Pro ($20/month with a $20 credit pool) live here. Pricing is predictable. This tier works and delivers value—20-40% faster on boilerplate tasks with minimal risk.

Tier two is agentic tools: autonomous multi-step code generation. A 10-step agentic loop consumes 5,000-10,000 tokens, 10 to 20 times as many as a single 500-token inline completion. Cursor Ultra runs $200 per month for 20x the usage of Pro. Claude Code Max costs $100 per month for heavy users. Devin dropped from $500/month to $20/month in January 2026, but now charges $2.25 per Agent Compute Unit (roughly 15 minutes of active work). Heavy Devin usage still hits $500-1,500 per month.
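The arithmetic behind these figures can be sketched in a few lines. This is a rough illustration only: the constants are the numbers quoted above, the function names are made up for this example, and it assumes Devin bills linearly per Agent Compute Unit on top of the base price with no included allowance.

```python
# Figures quoted in the article; function names are illustrative only.
INLINE_TOKENS = 500        # tokens per inline completion
DEVIN_BASE_USD = 20.0      # monthly base price
DEVIN_ACU_USD = 2.25       # price per Agent Compute Unit (ACU)
ACU_MINUTES = 15           # roughly 15 minutes of active work per ACU


def token_multiplier(agentic_tokens: int, inline_tokens: int = INLINE_TOKENS) -> float:
    """How many inline completions one agentic loop is worth in tokens."""
    return agentic_tokens / inline_tokens


def devin_monthly_cost(active_hours: float) -> float:
    """Monthly bill for a given number of active agent hours (assumed linear)."""
    acus = active_hours * 60 / ACU_MINUTES
    return DEVIN_BASE_USD + acus * DEVIN_ACU_USD


def hours_for_budget(budget_usd: float) -> float:
    """Active agent hours that a monthly budget buys."""
    acus = (budget_usd - DEVIN_BASE_USD) / DEVIN_ACU_USD
    return acus * ACU_MINUTES / 60


if __name__ == "__main__":
    print(token_multiplier(5_000), token_multiplier(10_000))  # 10.0 20.0
    print(devin_monthly_cost(40))                              # 380.0
    print(round(hours_for_budget(500), 1))                     # 53.3
```

Under these assumptions, a $500 monthly bill corresponds to roughly 53 hours of active agent work, a little over two hours per working day.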

The problem: most teams need both tiers. Industry best practice in 2026 is stacking a primary IDE assistant for daily completions with Claude Code for targeted agentic tasks. Total cost: $110-120 per month for heavy users ($10-20 for the assistant plus $100 for Claude Code Max), assuming disciplined use. However, “disciplined use” is harder than it sounds when developers get used to letting AI handle complex refactoring.

Hidden TCO: Licensing Is Only 60-70% of the Bill

Enterprise total cost of ownership analysis from May 2026 shows licensing fees represent only 60-70% of true first-year costs. The hidden 30-40% comes from integration labor, training overhead, and usage overages nobody budgeted for.

Take a 50-developer team. GitHub Copilot Business licensing runs $22,800 over two years. Sounds reasonable. But first-year TCO hits $89,000-273,000 depending on integration complexity and usage patterns. According to GetDX’s TCO analysis, mid-market teams report $50,000-150,000 in unexpected costs connecting AI coding tools to CI/CD pipelines, security scanning workflows, and code review processes. Nobody budgets for this until integration starts.

A 100-developer team example breaks down like this: GitHub Copilot Business costs $22,800 annually, OpenAI API usage adds roughly $12,000, and code transformation tools contribute another $6,000. Total direct licensing: roughly $40,800 per year. Add integration labor ($50k-150k one-time) and the total annual cost exceeds $66,000, and that excludes ongoing support and overage charges when teams blow past included credits mid-month.
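To make the 100-developer breakdown easy to rerun with your own numbers, here is a minimal sketch. The line items are the figures quoted above. The `TeamCosts` class and its method names are hypothetical, and the first-year range assumes the one-time integration labor lands entirely in year one, which the article does not state.

```python
from dataclasses import dataclass


@dataclass
class TeamCosts:
    """Annual line items in USD for the 100-developer example."""
    copilot_business: int = 22_800
    api_usage: int = 12_000
    transformation_tools: int = 6_000
    integration_low: int = 50_000    # one-time, low estimate
    integration_high: int = 150_000  # one-time, high estimate

    def annual_licensing(self) -> int:
        """Sum of recurring direct licensing and usage costs."""
        return self.copilot_business + self.api_usage + self.transformation_tools

    def first_year_range(self) -> tuple[int, int]:
        """Licensing plus integration labor, assuming it all falls in year one."""
        base = self.annual_licensing()
        return base + self.integration_low, base + self.integration_high


if __name__ == "__main__":
    costs = TeamCosts()
    print(costs.annual_licensing())   # 40800
    print(costs.first_year_range())   # (90800, 190800)
```

Ongoing support and overage charges are left out here, as they are in the example above, so treat the output as a floor.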

The integration surprise is brutal because it’s invisible until you’re committed. Connecting tools, training developers on prompt engineering, and updating code review processes to account for AI-generated code all require engineering time that wasn’t in the original ROI calculation.

ROI Claims Don’t Hold Up Under Scrutiny

Official ROI claims cite 2.5-3.5x average returns and 4-6x for top-quartile organizations. The fine print adds a caveat: those figures hold “only when actual token and usage-based costs are included.” Most teams underestimate real costs and overestimate productivity gains.

A McKinsey study published in February 2026 surveyed 4,500 developers across 150 enterprises. The headline finding: AI coding tools reduce time spent on routine coding tasks by an average of 46%. Impressive—until you read further. Code review time increased by 12%, and projects with unreviewed AI-generated code showed higher bug density. CodeRabbit’s December 2025 analysis found 1.7 times more issues in AI-coauthored pull requests. Veracode reported AI-generated code has 2.74 times more vulnerabilities than human-written code. CVE data backs this up: 35 new AI-caused CVEs in March 2026, up from 6 in January.

The productivity paradox: developers feel faster but deliver slower. One analysis put it bluntly: “AI-made code is now almost free to produce, but it did nothing to reduce the cost of reviewing it.” A developer generates a 500-line pull request in 90 seconds. A maintainer still needs 2 hours to review it properly. Consequently, the time savings evaporate in code review.
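The review bottleneck is easy to quantify with the example above. The sketch below uses only the two figures from that example (90 seconds to generate, 2 hours to review). The function name and the tenfold-faster scenario are illustrative assumptions.

```python
def pr_turnaround(generate_seconds: float, review_seconds: float) -> dict:
    """Total turnaround for one pull request and the share spent in review."""
    total = generate_seconds + review_seconds
    return {
        "total_minutes": total / 60,
        "review_share": review_seconds / total,
    }


if __name__ == "__main__":
    today = pr_turnaround(generate_seconds=90, review_seconds=2 * 60 * 60)
    # Hypothetical: generation becomes ten times faster, review is unchanged.
    faster = pr_turnaround(generate_seconds=9, review_seconds=2 * 60 * 60)

    print(f"review share today: {today['review_share']:.1%}")
    saved = today["total_minutes"] - faster["total_minutes"]
    print(f"minutes saved by 10x faster generation: {saved:.2f}")
```

With these inputs, review accounts for nearly 99% of the turnaround, so speeding up generation further saves barely more than a minute per pull request.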

Microsoft Research found productivity gains materialize after 11 weeks, with break-even at 12-18 months and positive ROI by year two—when full TCO is properly accounted for. That timeline assumes disciplined use and thorough code review. A counterpoint: METR’s 2025 randomized controlled trial with experienced open-source contributors found AI tools resulted in a 19% net slowdown compared to unassisted work. Initial enthusiasm is waning as developers encounter the technology’s limitations.

When AI Coding Tools Are Actually Worth It

AI coding tools work, but only with realistic budgeting and strict use-case discipline. Here’s the decision framework that makes sense in 2026.

For inline completion: yes, it’s worth $10-20 per month. GitHub Copilot Pro at $10/month or Cursor Pro at $20/month deliver measurable value on boilerplate generation, autocomplete, and low-stakes automation. Productivity gains are real, risk is minimal, and costs are predictable. This tier is a no-brainer for most developers.

For agentic tools: selective use only. Budget $100-200 per month per heavy user, but define “heavy user” narrowly. Use agentic tools for complex refactoring tasks, large-scale code migrations, and exploratory prototyping—not for daily coding. Treat pull requests from AI agents as drafts requiring extensive review, not production-ready code. Don’t commit AI-generated code without manual verification. The 2.74x higher vulnerability rate is real.

Avoid AI coding tools entirely if your team is small (fewer than 10 developers) with tight budgets, if you lack code review discipline, or if you can’t absorb $50k-150k in integration costs upfront. The ROI math doesn’t work for small teams that can’t amortize integration labor across dozens of developers.
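The amortization argument can be checked with a quick calculation. This is a sketch under stated assumptions: the function name is hypothetical, the integration cost is treated as a fixed amount split evenly across the team, and the per-developer subscription figure is the $100-200 monthly budget used elsewhere in this article.

```python
def first_year_cost_per_dev(team_size: int,
                            monthly_per_dev_usd: float,
                            integration_usd: float) -> float:
    """Subscriptions for 12 months plus an even share of one-time integration cost."""
    return monthly_per_dev_usd * 12 + integration_usd / team_size


if __name__ == "__main__":
    for team_size in (10, 50, 100):
        low = first_year_cost_per_dev(team_size, 100, 50_000)
        high = first_year_cost_per_dev(team_size, 200, 150_000)
        print(f"{team_size:>3} developers: ${low:,.0f} to ${high:,.0f} per developer")
```

For a 10-person team, the low-end integration share alone ($5,000 per developer) is several times the $1,200 spent on a year of subscriptions. At 100 developers that share drops to $500.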

Budget for Reality, Not Vendor Promises

The $20/month era is over. Vendors tried flat-rate pricing and lost money on heavy users. A single agentic loop consumes 10-20x more tokens than an inline completion, and providers can’t sustain old pricing models. Usage-based billing is the future, and costs will continue rising as providers align with true compute economics.

If you’re budgeting for AI coding tools in 2026, assume $100-200 per month per developer for realistic usage. Factor in $50k-150k integration costs for mid-market teams. Plan for 12-18 months to break even, and measure ROI carefully against full TCO, not just subscription fees. Don’t expect 46% productivity gains without accounting for the 12% code review slowdown and quality issues that come with AI-generated code.

AI coding tools deliver real value, but the pricing is in flux and the ROI claims need scrutiny. Budget accordingly.