Anthropic launched Claude Managed Agents on April 8, offering hosted infrastructure that gets developers from prototype to production AI agents in days instead of months. The pitch is simple: you define what your agent does, Anthropic runs it on their cloud. No building agent loops, no sandboxing headaches, no tool execution layers. Early adopters like Notion, Rakuten, and Asana report going live in under a week.

But at $0.08 per session-hour plus standard API token costs, the real question isn’t whether managed infrastructure works—it’s whether Claude-only lock-in is worth the speed.

What You Actually Get

Claude Managed Agents provides a pre-built agent harness running in secure cloud containers. The infrastructure includes sandboxed code execution, built-in tools (Bash, file operations, web search, MCP server connections), and performance optimizations like prompt caching and compaction. Sessions are stateful with persistent file systems, and you can deploy via Claude Console, Claude Code, or Anthropic’s new CLI.

It’s currently in public beta, and every API request must carry the managed-agents-2026-04-01 beta flag in a request header. The SDK sets this automatically, but beta status means behavior may change between releases.
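If you call the API without the SDK, you have to attach the flag yourself. The following is a minimal sketch, not official client code. It assumes the flag travels in the `anthropic-beta` header alongside the usual `x-api-key` and `anthropic-version` headers, as with other Anthropic API betas. The helper name `build_headers` is made up for illustration, and no Managed Agents endpoint is shown because the article does not name one.

```python
# Beta identifier quoted in the article.
MANAGED_AGENTS_BETA = "managed-agents-2026-04-01"


def build_headers(api_key: str, extra_betas: tuple[str, ...] = ()) -> dict[str, str]:
    """Build HTTP headers for a raw (non-SDK) request.

    Assumption: the beta flag is sent in the `anthropic-beta` header,
    with multiple flags joined by commas.
    """
    betas = (MANAGED_AGENTS_BETA, *extra_betas)
    return {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "anthropic-beta": ",".join(betas),
        "content-type": "application/json",
    }


headers = build_headers("YOUR_API_KEY")  # placeholder key
print(headers["anthropic-beta"])  # managed-agents-2026-04-01
```

Keeping the flag in one constant makes it easy to update if the beta identifier changes between releases.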

Notion used the built-in prompt caching to achieve a 90% cost reduction and an 85% latency improvement. Inside Notion, engineers ship code and knowledge workers generate presentations without leaving the platform, running dozens of parallel agent tasks while teams collaborate on the outputs simultaneously.

The Economics: When $0.08 Per Hour Adds Up

Pricing is straightforward: standard Claude API token rates plus $0.08 per session-hour. Runtime charges accrue only while the session status is “running”—not when waiting for user input or sitting idle. A two-hour active session costs $0.16 in runtime fees, but token costs typically dominate at $20-50 for the same workload.
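To see how the two charges combine, here is a rough estimator. It is a sketch under the pricing described above: only "running" time is billed at $0.08 per session-hour, and token spend is added on top. The `SessionUsage` type and the $35 token figure are hypothetical, chosen from within the $20-50 range quoted above.

```python
from dataclasses import dataclass

SESSION_HOUR_RATE_USD = 0.08  # per session-hour, as quoted above


@dataclass
class SessionUsage:
    running_hours: float   # time spent in "running" status (billed)
    idle_hours: float      # waiting for input or idle (not billed)
    token_cost_usd: float  # API token spend, estimated separately


def runtime_fee(usage: SessionUsage) -> float:
    """Session-hour fee: only running time counts, idle time is free."""
    return usage.running_hours * SESSION_HOUR_RATE_USD


def total_cost(usage: SessionUsage) -> float:
    """Runtime fee plus token spend."""
    return runtime_fee(usage) + usage.token_cost_usd


# Hypothetical session: 2 h running, 3 h idle, $35 of tokens.
example = SessionUsage(running_hours=2.0, idle_hours=3.0, token_cost_usd=35.0)
print(f"runtime fee: ${runtime_fee(example):.2f}")  # runtime fee: $0.16
print(f"total:       ${total_cost(example):.2f}")   # total:       $35.16
```

In this example the runtime fee is well under 1% of the bill, which is why token usage, not session-hours, is the number to watch for a single agent.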

For most teams, managed infrastructure is cheaper than self-built through the first year. Setup takes hours instead of days, and ongoing maintenance is essentially zero. But the economics flip at scale: 500 concurrent agents generate $40 per hour in session fees alone (500 × $0.08), before factoring in inference.
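The fleet-level arithmetic is easy to project forward. The sketch below assumes every agent stays in "running" status for the whole period, which is a worst case, since idle time is not billed. The function name `fleet_session_fees` and the 30-day month are illustrative choices.

```python
SESSION_HOUR_RATE_USD = 0.08  # per session-hour, as quoted above


def fleet_session_fees(concurrent_agents: int, hours: float = 1.0) -> float:
    """Session fees for a fleet, assuming every agent runs the full period."""
    return concurrent_agents * hours * SESSION_HOUR_RATE_USD


hourly = fleet_session_fees(500)
monthly = fleet_session_fees(500, hours=24 * 30)  # 30-day month, always running
print(f"per hour:  ${hourly:,.2f}")   # per hour:  $40.00
print(f"per month: ${monthly:,.2f}")  # per month: $28,800.00
```

Token costs come on top of these figures, so treat the result as a floor for an always-on fleet rather than a full budget.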

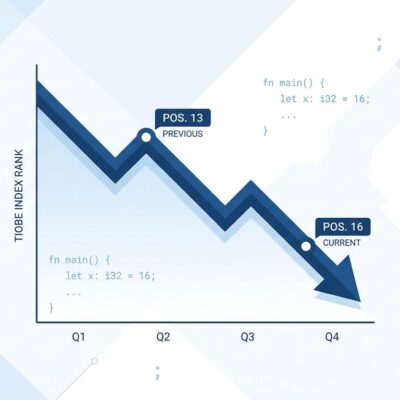

Independent analysis shows self-hosted solutions become 60-70% more cost-effective beyond three persistent agents. The crossover point isn’t about company size; it’s about how many agents you run continuously. If you’re prototyping or deploying a handful of agents, managed wins on speed and simplicity. If you’re scaling past that to dozens of always-on agents, self-hosted wins on cost and flexibility.

Real-World Validation

Rakuten deployed agents across five business functions in under a week, each plugged into Slack and Teams to accept task assignments and return structured deliverables. Their release cycle compressed from quarterly to every two weeks, with a 97% reduction in critical errors.

Asana built what they call “AI Teammates”—agents embedded in project management workflows that pick up assigned tasks, draft deliverables, and hand outputs back for human review. Claude Managed Agents dramatically accelerated their development compared to building custom infrastructure.

The pattern is consistent: teams go from zero to production agents in days or weeks, not months. The infrastructure complexity that usually derails AI projects simply isn’t a factor.

The Trade-Off: Speed vs. Flexibility

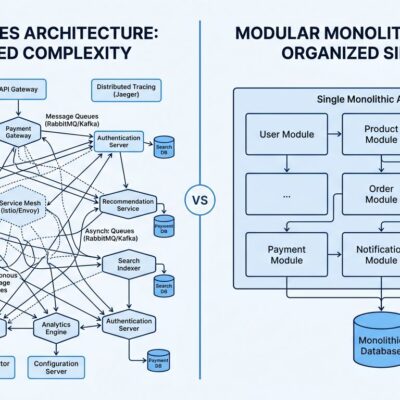

Here’s what most launch coverage won’t tell you: managed infrastructure means vendor lock-in. This is Claude-specific, cloud-only infrastructure. You can’t easily migrate to GPT or other models. You can’t access local filesystems or git history—sessions run in sandboxed containers. Multi-agent coordination and self-evaluation (outcomes) are still in research preview, requiring separate access approval.

Rate limits sit at 300 requests per minute for create endpoints and 600 for read operations. For high-volume scenarios, that could become a bottleneck. And because it’s beta software, Anthropic reserves the right to change how the harness works between releases.
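One way to stay under those ceilings is to throttle on the client side before a request ever leaves your process. The following is a minimal sliding-window limiter, offered as a sketch. The class is hypothetical and not part of any Anthropic SDK, and it assumes the limits apply per 60-second window; the article does not say whether they are enforced per key or per organization.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `max_calls` within any `period_s`-second window."""

    def __init__(self, max_calls: int, period_s: float = 60.0, clock=time.monotonic):
        self.max_calls = max_calls
        self.period_s = period_s
        self.clock = clock      # injectable so the limiter can be tested
        self._stamps = deque()  # timestamps of recent accepted calls

    def _evict(self, now: float) -> None:
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self.period_s:
            self._stamps.popleft()

    def try_acquire(self) -> bool:
        """Record a call and return True if a slot is free, else False."""
        now = self.clock()
        self._evict(now)
        if len(self._stamps) < self.max_calls:
            self._stamps.append(now)
            return True
        return False

    def seconds_until_free(self) -> float:
        """How long to wait before the next call would be accepted."""
        now = self.clock()
        self._evict(now)
        if len(self._stamps) < self.max_calls:
            return 0.0
        return self.period_s - (now - self._stamps[0])


# Limits quoted above: 300/min for create endpoints, 600/min for reads.
create_limiter = SlidingWindowLimiter(max_calls=300)
read_limiter = SlidingWindowLimiter(max_calls=600)
```

A caller would check `try_acquire()` before each request and sleep for `seconds_until_free()` when it returns False. Server-side limits still apply, so this complements retry handling and does not replace it.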

Managed makes sense when you have small teams without dedicated DevOps, need production agents fast, and value zero-maintenance over cost optimization. Self-hosted makes sense when you’re running high-volume workloads, need multi-vendor orchestration, or when 60-70% cost savings matter more than development speed.

Don’t let Anthropic’s marketing make that decision for you. If you’re building one or two agents for internal workflows, managed infrastructure is the pragmatic choice. If you’re architecting a platform where agents are the product, betting your infrastructure on beta software with vendor lock-in is a strategic risk.

What This Signals

The AI agent market is projected to grow from $7.84 billion in 2025 to $52.62 billion by 2030, and 85% of organizations already use agents in at least one workflow. Framework adoption has doubled year-over-year, and small teams are saving 40+ hours monthly with basic automation.

Claude Managed Agents is part of a broader shift: agent infrastructure is becoming a “solved problem,” commoditized into managed services. Developers can now focus on what agents do instead of how they run. The barrier to deploying production AI agents just dropped dramatically—but so did the excuse for not thinking critically about vendor lock-in and long-term flexibility.

If you’re choosing between managed and self-hosted on cost alone, the math is simple: under three persistent agents, managed wins; beyond that, build your own. If convenience matters more to you than flexibility and cost savings, managed infrastructure may still be exactly what you need at larger scale. Just know what you’re giving up.