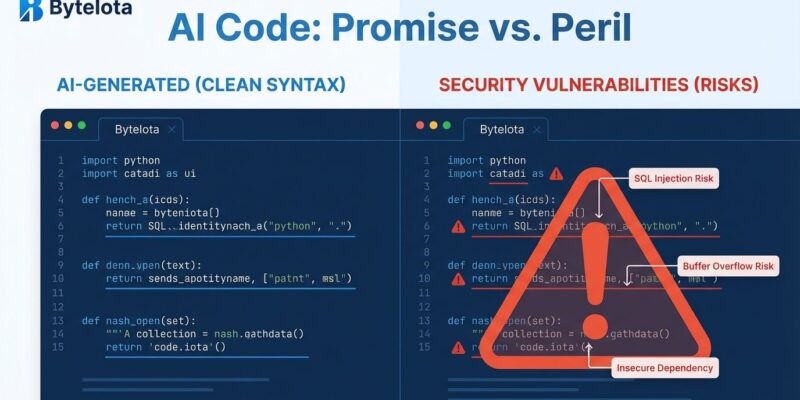

Ninety-six percent of developers don’t trust that AI-generated code is functionally correct. Yet only 48% actually verify it before committing. That’s not healthy skepticism; it’s irresponsibility passed off as productivity. This gap between what developers claim and what they do has created what Amazon CTO Werner Vogels calls “verification debt”: a crisis in which software advances toward production faster than humans can understand it.

Sonar’s State of Code survey of more than 1,100 developers found that 72% use AI tools daily and that AI-generated code accounts for 42% of all commits, a share projected to reach 65% by 2027. Yet 96% don’t trust the output. Why skip verification? Thirty-eight percent say it takes too long. Sixty-one percent admit AI code “looks correct but isn’t reliable.”

In other words, you’re knowingly committing code you don’t trust because checking it is inconvenient. That’s negligence dressed up as productivity.

The Consequences Are Already Here

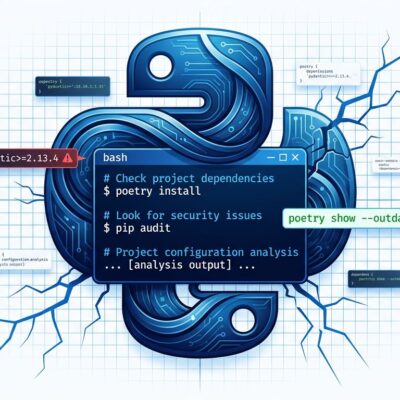

This isn’t theoretical. Privilege escalation paths in production code have surged 322% since AI coding tools gained widespread adoption. In March 2026 alone, 35 new CVEs were disclosed that traced directly to AI-generated code, up from 6 in January and 15 in February. Traditional static analysis tools catch only 20% of AI-generated vulnerabilities because they rely on pattern matching for known-bad code, while AI tends to fail at logic-level checks: authentication, authorization, and proper scoping.
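To make the pattern-matching gap concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `INVOICES` data, the toy `pattern_scan` function (a stand-in for signature-based scanning, not any real tool), and both lookup functions. It only illustrates why a missing authorization check has no "bad pattern" to match.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    total_cents: int


# Hypothetical in-memory data, for illustration only.
INVOICES = {
    1: Invoice(1, owner_id=100, total_cents=5_000),
    2: Invoice(2, owner_id=200, total_cents=9_900),
}

# Toy signature list: flags constructs that are "known bad" on sight.
KNOWN_BAD = [
    re.compile(r"\beval\("),
    re.compile(r"\bexec\("),
    re.compile(r"os\.system\("),
]


def pattern_scan(source: str) -> list[str]:
    """Return the known-bad patterns found in the source text."""
    return [p.pattern for p in KNOWN_BAD if p.search(source)]


# Source text of the flawed function, as a scanner would see it.
UNSAFE_SOURCE = '''
def get_invoice_unsafe(requester_id, invoice_id):
    return INVOICES.get(invoice_id)
'''


def get_invoice_unsafe(requester_id: int, invoice_id: int):
    # Syntactically fine and runs without errors,
    # but never checks who is asking.
    return INVOICES.get(invoice_id)


def get_invoice_checked(requester_id: int, invoice_id: int):
    # The logic-level check: the requester must own the invoice.
    invoice = INVOICES.get(invoice_id)
    if invoice is None or invoice.owner_id != requester_id:
        return None
    return invoice


print(pattern_scan(UNSAFE_SOURCE))              # nothing to flag
print(get_invoice_unsafe(100, 2) is not None)   # user 100 reads user 200's invoice
print(get_invoice_checked(100, 2) is None)      # the checked version refuses
```

The scanner has nothing to report because the flaw is an absence, not a pattern. Only a behavioral check ("can user 100 read user 200's invoice?") exposes it, which is the kind of verification that takes a human, or a test written by one, to specify.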

In one 2024 incident, an attacker used a regex pattern to trick an agent into exfiltrating 45,000 customer records. The code was syntactically correct and ran without errors. It just did the wrong thing.

Meanwhile, production incidents are up 23.5%. Deployment failure rates are up 30%. The code ships faster, but it breaks more.

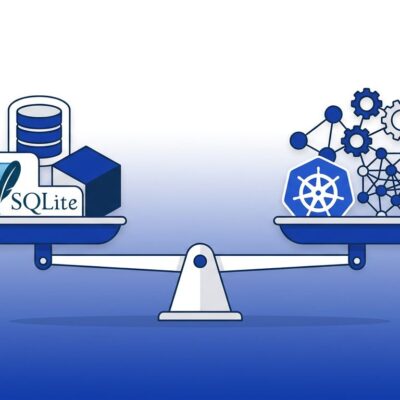

The Productivity Gains Are an Illusion

Individual developers report completing 21% more tasks when using AI coding tools. Teams merge 98% more pull requests. Here’s the catch: PR review time increased 91%. The bottleneck shifted from writing code to understanding it. As a result, organizational DORA metrics, the measures that actually matter for delivery speed, are flat. No improvement.

Werner Vogels summarized the problem at AWS re:Invent 2025: “You will write less code, because generation is so fast. You will review more code because understanding it takes time.” Writing code was never the bottleneck. Verification is. And developers are skipping it because the queue is too long.

The system created its own problem. AI accelerates generation, review capacity stays fixed, backlogs explode. Ultimately, the “productivity” being measured is throughput without quality gates. It’s not speed—it’s recklessness.
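The backlog arithmetic is easy to sketch. The Python below is a back-of-the-envelope model, not data: the baseline of 50 PRs per week and the fixed review capacity of 50 are assumptions chosen for illustration. Only the 98% increase in PR volume comes from the figures above, and the model ignores the 91% rise in review time, which would make the picture worse.

```python
def review_backlog(opened_per_week: int, reviewed_per_week: int, weeks: int) -> list[int]:
    """Track the unreviewed-PR backlog at the end of each week."""
    backlog = 0
    history = []
    for _ in range(weeks):
        # Backlog grows by what was opened, shrinks by what was reviewed.
        backlog = max(0, backlog + opened_per_week - reviewed_per_week)
        history.append(backlog)
    return history


BASELINE_PRS = 50      # assumed PRs opened per week before AI tooling
REVIEW_CAPACITY = 50   # assumed PRs the team can review per week (fixed)

# 98% more PRs, computed with integers to avoid float rounding.
AI_ERA_PRS = BASELINE_PRS * 198 // 100

before = review_backlog(BASELINE_PRS, REVIEW_CAPACITY, weeks=8)
after = review_backlog(AI_ERA_PRS, REVIEW_CAPACITY, weeks=8)

print(before)  # [0, 0, 0, 0, 0, 0, 0, 0]
print(after)   # [49, 98, 147, 196, 245, 294, 343, 392]
```

Under these assumptions, a team that used to clear its queue every week is 392 unreviewed PRs behind after two months. Something has to give, and what gives is the review.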

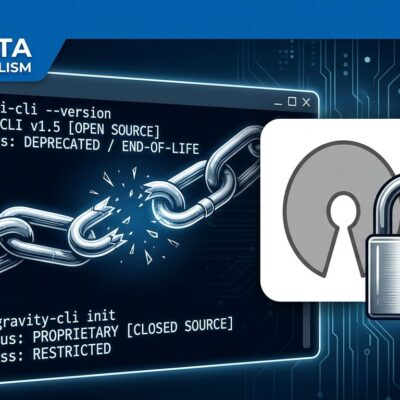

You’re Using Tools That Work Differently Than You Think

Developers expect deterministic systems (same input, same output). AI code generation is probabilistic: the same prompt can produce different logic on each run. Even at temperature zero, LLM APIs aren’t guaranteed to be deterministic.

This creates the “looks correct but isn’t reliable” phenomenon. The code compiles and runs, but contains logic errors that surface only under specific conditions. Forty-five percent of developers cite “almost right, but not quite” as their top frustration. Sixty-six percent spend more time debugging AI code than they would writing it by hand.
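Here is what “almost right” can look like, as a hypothetical Python sketch. The function names and the pagination scenario are invented for illustration; the point is that a plausible implementation passes the obvious test and fails only at a boundary, and that a small exhaustive check over input sizes finds the problem quickly.

```python
def paginate_plausible(items: list, page_size: int) -> list[list]:
    # Reads fine and passes the obvious test,
    # but integer division drops the final partial page.
    page_count = len(items) // page_size
    return [items[i * page_size:(i + 1) * page_size] for i in range(page_count)]


def paginate_fixed(items: list, page_size: int) -> list[list]:
    if page_size <= 0:
        raise ValueError("page_size must be positive")
    # Stepping by page_size keeps the trailing partial page.
    return [items[i:i + page_size] for i in range(0, len(items), page_size)]


def first_length_that_loses_items(paginate, max_len: int = 25, page_size: int = 5):
    """Return the smallest input length where pages don't add up to the input."""
    for n in range(max_len + 1):
        items = list(range(n))
        flattened = [x for page in paginate(items, page_size) for x in page]
        if flattened != items:
            return n
    return None


# The happy path a quick glance (or a single demo) would exercise:
print(paginate_plausible(list(range(10)), 5))  # two full pages, looks correct

# The boundary check that verification would add:
print(first_length_that_loses_items(paginate_plausible))  # 1
print(first_length_that_loses_items(paginate_fixed))      # None
```

The check is a dozen lines. Skipping it saves a few minutes and ships a function that silently loses data whenever the input isn’t a multiple of the page size.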

Your workflow is built for one paradigm. Your tools operate in another. Instead of adapting the workflow, you’re skipping the verification step because it doesn’t fit neatly into the old process.

Pick a Lane

You can’t claim you don’t trust something while simultaneously using it without verification. Either you trust AI-generated code and you commit it with confidence, or you don’t trust it and you verify every line. What you’re doing now—saying you don’t trust it, then committing it anyway because checking takes too long—is hypocrisy.

It’s also organizationally reckless. Atlassian cut 1,600 jobs (900+ in R&D). Block eliminated 4,000 (40% of staff), citing “intelligence tools.” These companies fire developers while depending on AI code that their remaining staff admit they don’t trust and often don’t verify.

Goldman Sachs finds “no meaningful relationship between productivity and AI adoption at the economy-wide level.” Even so, companies justify layoffs by claiming AI delivers equivalent output. They’re not measuring verification debt or the 322% surge in privilege escalation. They’re counting commits and calling it productivity.

This Isn’t a Tool Problem

The industry is racing toward a cliff, convinced it’s taking a shortcut. The trust gap—96% distrust, 48% verify—isn’t a technology failure. It’s a discipline failure. Developers know better. They just aren’t doing better. Similarly, organizations know better. They’re prioritizing cost reduction over code quality, then acting surprised when incidents spike.

You want the speed of AI code generation without the cost of verification. You can’t have both. Pick one.