The obra/superpowers framework gained 2,292 GitHub stars today alone, bringing its total to 121,000 and securing the #2 trending spot. Created by Jesse Vincent—Perl project lead and Keyboardio founder—Superpowers takes a different approach to AI agent development. While LangChain offers flexible primitives and AutoGPT provides raw autonomy, Superpowers enforces structured methodology through composable Markdown-based skills that agents automatically invoke. The framework’s explosive growth from 0 to 121K stars in just five months signals an industry shift from complex monolithic frameworks toward modular, skills-based architectures that actually ship production-quality code.

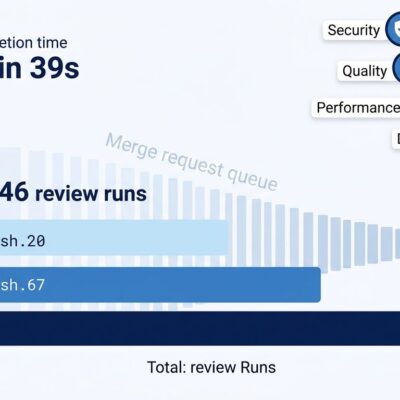

AI coding tools show a 39-percentage-point gap between perceived and measured productivity: developers believe they work about 20% faster, while METR research measured them working 19% slower (20 + 19 = 39). Superpowers addresses this by enforcing test-driven development, systematic planning, and code review. It turns “feels fast” into “actually fast with quality.”

Skills as Mandatory Workflows, Not Optional Tools

Traditional frameworks treat tools as suggestions. LangChain provides a library of primitives you can chain together. AutoGPT takes a goal and figures out its own path. Superpowers does neither. It treats skills as mandatory workflows encoded in Markdown files with YAML frontmatter. Each skill contains instructions, scripts, and templates. When an agent receives a task, it scans available skills and invokes the relevant ones automatically. These aren’t suggestions; they’re enforced requirements.
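To make the "Markdown file with YAML frontmatter" idea concrete, here is a minimal sketch of how a skill file could be parsed and matched to a task. It is an illustration, not the Superpowers implementation: the `Skill` class, the `name` and `description` fields, and the keyword-overlap matching are all assumptions made for this example, and the parser only handles flat `key: value` frontmatter rather than full YAML.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str
    body: str


def parse_skill(text: str) -> Skill:
    """Split a skill file into flat `key: value` frontmatter and a Markdown body."""
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("skill file must start with a '---' frontmatter fence")
    try:
        end = next(i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---")
    except StopIteration:
        raise ValueError("frontmatter is missing its closing '---' fence") from None
    meta = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return Skill(meta.get("name", ""), meta.get("description", ""), body)


def select_skills(task: str, skills: list[Skill]) -> list[Skill]:
    """Return skills whose description shares a word of 4+ letters with the task.

    Keyword overlap is a crude stand-in for however a real agent decides relevance.
    """
    task_words = {w for w in task.lower().split() if len(w) >= 4}
    return [s for s in skills if task_words & set(s.description.lower().split())]


# Hypothetical skill file contents, written for this example only.
EXAMPLE = """---
name: test-driven-development
description: use when implementing a feature or fixing a bug
---
# Test-Driven Development
Write a failing test first.
"""

skill = parse_skill(EXAMPLE)
print([s.name for s in select_skills("implementing a login feature", [skill])])
```

The point of the sketch is that a skill is plain text plus a small metadata header, so adding a new workflow means adding a file rather than registering a tool schema.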

The test-driven development enforcement demonstrates this approach. When an agent writes code before tests, Superpowers auto-deletes the code. No exceptions. No warnings. This forces discipline. Spring AI adopted a similar “Agent Skills” pattern in January 2026, which suggests the approach has appeal beyond Superpowers. The framework’s v2.0 architecture separated skills into a dedicated repository (obra/superpowers-skills), transforming the plugin from monolithic to modular. As a result, skills update without requiring plugin reinstalls.
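The "tests first, or the code goes" rule can be pictured as an ordering check. The sketch below is a hypothetical illustration rather than how Superpowers enforces it: it assumes tests are named `test_<module>.py`, takes the order in which files were written, and reports implementation files that appeared before their matching test. It only lists the offending files; deleting them is left out on purpose.

```python
from pathlib import PurePosixPath


def is_test(path: str) -> bool:
    """Treat files named test_*.py as tests (a naming convention assumed here)."""
    return PurePosixPath(path).name.startswith("test_")


def tdd_violations(write_order: list[str]) -> list[str]:
    """Return implementation files that were written before their matching test file."""
    tests_seen: set[str] = set()
    violations: list[str] = []
    for path in write_order:
        name = PurePosixPath(path).name
        if is_test(path):
            # test_cart.py covers cart.py under the assumed naming convention
            tests_seen.add(name.removeprefix("test_"))
        elif name not in tests_seen:
            violations.append(path)
    return violations


order = ["src/cart.py", "tests/test_cart.py", "tests/test_tax.py", "src/tax.py"]
print(tdd_violations(order))  # ['src/cart.py']
```

Here `src/cart.py` is flagged because it was written before `tests/test_cart.py`, while `src/tax.py` passes because its test came first.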

This paradigm shift matters because AI agents with optional tools skip tests, ignore reviews, and ship buggy code. Mandatory skills create discipline that addresses the productivity paradox: feeling fast while shipping slowly because of debugging. Developers using Superpowers report 85-95% test coverage compared to 30-50% with standard Claude Code.

The 7-Phase Enforced Workflow for AI Agent Development

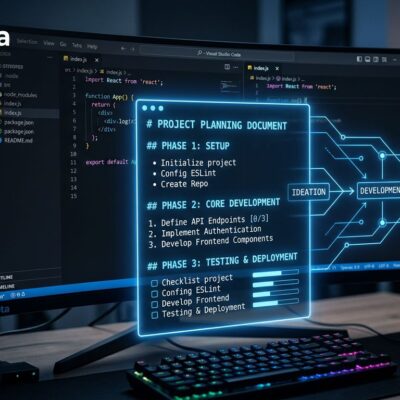

Superpowers implements a strict 7-phase methodology. First, Socratic Brainstorming forces requirements dialogue before any coding. Second, Git Worktree Isolation protects the main branch. Third, Micro-Task Planning breaks work into 2-5 minute units. Fourth, Parallel Subagent Execution speeds delivery by 3-4x. Fifth, Test-Driven Development mandates the RED-GREEN-REFACTOR cycle. Sixth, Systematic Code Review verifies specification compliance. Seventh, Branch Completion handles integration, testing, and cleanup. Each phase builds on the previous one, creating a structured path from idea to production-ready code.
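One way to picture "each phase builds on the previous one" is a small state machine that refuses to run a phase out of order. This is a sketch under assumptions: the `Phase` and `Workflow` names are invented for the example, and the real framework's mechanism may look nothing like this.

```python
from enum import IntEnum
from typing import Optional


class Phase(IntEnum):
    BRAINSTORM = 1
    WORKTREE = 2
    PLAN = 3
    EXECUTE = 4
    TDD = 5
    REVIEW = 6
    COMPLETE = 7


class Workflow:
    """Tracks completed phases and rejects any attempt to skip ahead."""

    def __init__(self) -> None:
        self.completed: list[Phase] = []

    def next_phase(self) -> Optional[Phase]:
        """Return the phase that must run next, or None when all seven are done."""
        if len(self.completed) == len(Phase):
            return None
        return Phase(len(self.completed) + 1)

    def complete(self, phase: Phase) -> None:
        """Mark a phase as done, but only if it is the next one in sequence."""
        expected = self.next_phase()
        if phase is not expected:
            wanted = expected.name if expected else "nothing (workflow finished)"
            raise RuntimeError(f"cannot run {phase.name}; next required phase is {wanted}")
        self.completed.append(phase)


flow = Workflow()
flow.complete(Phase.BRAINSTORM)
try:
    flow.complete(Phase.TDD)  # tries to skip WORKTREE, PLAN, and EXECUTE
except RuntimeError as err:
    print(err)
```

Encoding the order in one place means a skipped step fails loudly instead of silently producing untested code.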

A developer built a Notion clone in 45-60 minutes with 87% test coverage, including rich-text editing, tables, Kanban boards, and PostgreSQL persistence, without manually writing a single line of code. The framework achieved this through subagent parallelization, where UI, API, database, and test agents worked simultaneously, combined with enforced TDD that required tests before implementation every time. The initial planning adds 10-20 minutes of overhead, but implementation and debugging run 2-3x faster than with ad-hoc approaches.
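The speedup from running UI, API, database, and test work side by side can be illustrated with a toy fan-out. In this sketch each "subagent" is a stand-in function that just sleeps, and the task names and durations are made up; it shows only the concurrency pattern, not how Superpowers dispatches real agents.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_subagent(area: str, seconds: float) -> str:
    """Stand-in for a subagent: sleeps instead of calling a model."""
    time.sleep(seconds)
    return f"{area}: done"


def run_parallel(tasks: dict[str, float]) -> tuple[list[str], float]:
    """Run every task in its own thread; return results and elapsed wall-clock time."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=len(tasks)) as pool:
        futures = [pool.submit(run_subagent, area, secs) for area, secs in tasks.items()]
        results = [f.result() for f in futures]  # results keep submission order
    return results, time.perf_counter() - start


# Hypothetical workloads, in seconds, chosen only to keep the demo short.
tasks = {"ui": 0.2, "api": 0.2, "database": 0.2, "tests": 0.2}
results, elapsed = run_parallel(tasks)
print(results)
print(f"sequential estimate: {sum(tasks.values()):.1f}s, parallel: {elapsed:.1f}s")
```

With four equally sized tasks the ideal speedup is 4x, and in practice it is bounded by the slowest task plus coordination overhead.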

The 7 phases aren’t suggestions. Agents follow them sequentially. Compare this to AutoGPT’s “figure it out yourself” approach or LangChain’s “you build the workflow” philosophy. Superpowers provides the workflow, enforces it, and delivers consistent results. Developers don’t design agent behavior; they invoke skills and receive structured output.

85-95% Test Coverage Without Thinking

Community feedback highlights the enforcement benefits. One developer noted: “It makes me a better developer by forcing TDD.” Others report 85-95% test coverage on production applications without manual test writing. The framework mandates RED-GREEN-REFACTOR cycles. When code exists before tests, the agent deletes it. This aggressive enforcement teaches discipline. After 2-3 tasks, developers trust the process. Additionally, Anthropic’s marketplace acceptance in January 2026 validated the framework’s quality and reliability.

The growth trajectory shows adoption. Launched in October 2025, the framework reached marketplace acceptance and 40,000+ stars by January 2026. By March 2026, it hit 121,000 stars while trending at #2 on GitHub. Multiple Hacker News threads generated strong endorsements: “Can’t recommend this post strongly enough.” Some criticism exists (“somewhat overengineered” for trivial tasks), but the consensus describes it as “a kernel of a good idea representing a standard agentic workflow.”

These adoption metrics support the approach. Reaching 121K stars in five months is rare, and it suggests the framework solves a real developer pain point. The “enforced TDD” feature initially frustrates developers who ask “why is my code being deleted?” But it produces results: 87% coverage and production-ready code. This matters because AI-generated code needs tests more than human-written code due to unpredictable edge cases. Superpowers bakes testing into the process rather than treating it as an afterthought.


From Monolithic to Modular: The Industry Shift

Superpowers represents a broader industry trend toward simplification through modularity. LangChain offers comprehensive primitives and AutoGPT provides autonomous goal-driven agents, but both add complexity. Superpowers takes the opposite approach, with composable skills that snap together like LEGO blocks. Spring AI launched its “Agent Skills” pattern in January 2026. Manus released its Agent Skills platform. Third-party skills ecosystems are growing. The pattern mirrors historical shifts: microservices replaced monoliths, containers replaced VMs, and now skills-based agents are replacing monolithic frameworks.

Framework trade-offs reveal different use cases. Choose LangChain for vertical SaaS requiring custom workflows and extreme control. Choose AutoGPT for open-ended research with unknown solution paths. Choose Superpowers for shipping production code with quality requirements and enforced TDD. Furthermore, token economics favor the skills approach. Anthropic’s engineering team discovered that one GitHub MCP server exposes 90+ tools consuming 50,000+ tokens of JSON schemas. Skills encode domain expertise without schema overhead because they’re prompt-based.
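The token figures above are easier to weigh with a quick calculation. The sketch below takes the quoted lower bounds (90 tools, 50,000 tokens) and, as a labeled assumption, a 200,000-token context window; the window size is not from the source and is there only to show scale.

```python
def schema_overhead(tools: int, schema_tokens: int, context_window: int) -> tuple[float, float]:
    """Return (average tokens per tool schema, fraction of the context window used)."""
    return schema_tokens / tools, schema_tokens / context_window


# 90 and 50_000 are the quoted lower bounds; 200_000 is an assumed window size.
per_tool, share = schema_overhead(tools=90, schema_tokens=50_000, context_window=200_000)
print(f"about {per_tool:.0f} tokens per tool, {share:.0%} of the assumed window")
# about 556 tokens per tool, 25% of the assumed window
```

Under that assumption, a quarter of the context is spent on tool schemas before the agent reads any task text.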

This isn’t just another framework; it’s a pattern shift. When Spring AI, a major enterprise framework, adopts a skills-based architecture within months of Superpowers gaining traction, that signals industry momentum. The move from complex to modular mirrors broader software evolution. Recognizing this trend helps developers choose future-proof architectures. LangChain gives you LEGO bricks. Superpowers gives you the instruction manual and won’t let you skip steps.

Key Takeaways

- Skills-based frameworks enforce mandatory workflows rather than optional tools, closing the AI productivity paradox gap where perceived speed (20% faster) doesn’t match measured performance (19% slower)

- The framework’s 7-phase methodology (Brainstorm → Worktree → Plan → Execute → TDD → Review → Complete) produces 85-95% test coverage compared to 30-50% with standard AI coding tools

- Explosive adoption (121K stars in 5 months, trending #2 on GitHub) validates that developers face real pain from buggy AI-generated code lacking systematic testing

- Industry trend toward modular simplicity: Spring AI and other frameworks adopted skills-based patterns within months of the Superpowers launch, mirroring historical shifts from monoliths to microservices and from VMs to containers

- Use Superpowers when shipping production code requiring quality and enforced TDD; skip it for trivial one-liners or exploratory prototyping where structure feels constraining

The framework launched in October 2025. By March 2026, it became the #2 trending repository on GitHub. That speed signals solved problems, not hype. Try Superpowers through the Anthropic marketplace or clone it from GitHub. For more insights on AI development, check Jesse Vincent’s original blog post explaining the framework’s philosophy.