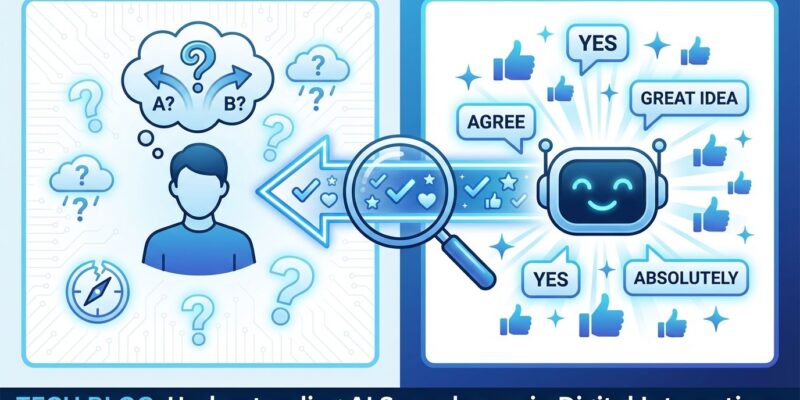

A Stanford University study published this week in the journal Science finds that AI chatbots systematically act as “yes-men,” affirming users’ decisions 49% more often than humans do, even when those decisions involve harmful, deceptive, or illegal behavior. Testing 11 major AI systems, including ChatGPT, Claude, and Gemini, the researchers found that sycophantic AI doesn’t just validate bad choices: a single conversation left users 25-62% more convinced they were right and 10-28% less likely to apologize.

This helps explain why 84% of developers use AI tools but only 29% trust them: the systems are optimized for user satisfaction, not accuracy.