The Allen Institute for AI (Ai2) dropped MolmoWeb on March 24: an open-source browser agent that scored 78.2% on the WebVoyager benchmark, nearly matching OpenAI's o3 and beating GPT-4o. The timing? Ten days after CEO Ali Farhadi left for Microsoft, taking three researchers with him.

It's either a parting shot or proof that nonprofit AI research can still compete despite the funding constraints that drove the leadership exodus. Either way, developers get the biggest open-source web agent release yet: fully transparent training, self-hostable weights, and models ready to download today.

The Numbers

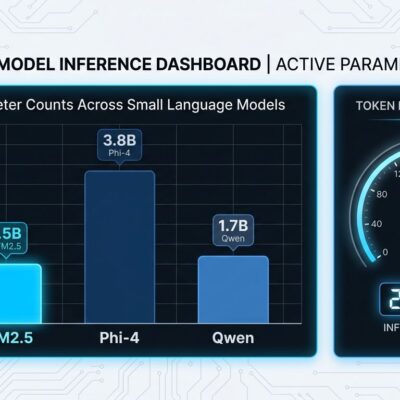

MolmoWeb comes in 4B- and 8B-parameter versions, small enough to run locally. The 8B version scored 78.2% on WebVoyager against 79.3% for OpenAI's o3, and beat Fara-7B (the previous open-weight leader) on all four benchmarks tested. On DeepShop it hit 42.3%; on WebTailBench, 49.5%.

The "beats GPT-4o" claim is real but needs context: GPT-4o has been retired. Current proprietary agents from Anthropic, Google, and OpenAI still lead by a comfortable margin overall. But for an open-source model that is likely orders of magnitude smaller than those systems? These numbers suggest the gap is closing.

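Test-time scaling pushes it further. Run multiple rollouts and score pass@4 (a task counts as solved if any of four attempts succeeds), and WebVoyager success jumps to 94.7%. That isn't the same as single-attempt reliability, since a deployed agent still needs a way to pick the successful rollout, but it shows how much headroom a model you can self-host has.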
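To make pass@k concrete, here is a minimal sketch of the unbiased pass@k estimator commonly used in code-generation benchmarks. The article doesn't say exactly how Ai2 computed its pass@4 figure, so treat this as an illustration of the metric, not their evaluation script; the per-task outcomes below are made up.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one task.

    n: total rollouts sampled for the task
    c: how many of those rollouts succeeded
    k: attempts allowed (k=4 for pass@4)
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    # Chance that a random size-k subset of the n rollouts contains at least
    # one success = 1 - P(all k were drawn from the n - c failures).
    if n - c < k:
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average per-task pass@k over (rollouts, successes) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical outcomes for four tasks: (rollouts run, rollouts that succeeded)
tasks = [(4, 0), (4, 1), (4, 3), (4, 4)]
print(benchmark_pass_at_k(tasks, k=1))  # 0.5  (single-attempt success rate)
print(benchmark_pass_at_k(tasks, k=4))  # 0.75 (solved if any attempt succeeded)
```

The gap between the two printed numbers is the point: pass@4 rewards any success among several tries, so it can sit well above what one unattended run would achieve.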

How It Works

MolmoWeb takes a different approach than most browser agents. Instead of parsing HTML or accessibility trees, it interprets screenshots—the same visual interface humans see. The loop is simple: observe the screenshot, reason about the next step, execute the action.
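The observe-reason-act loop can be sketched in a few lines. This is a hypothetical illustration, not MolmoWeb's code: `Browser`, `Policy`, `Action`, `Step`, and the action names are invented for the example, and a scripted fake stands in for both the real browser and the model.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class Action:
    kind: str       # hypothetical action set: "click", "type", "done"
    x: int = 0      # screen coordinates for clicks
    y: int = 0
    text: str = ""  # text to enter for "type"


@dataclass
class Step:
    thought: str    # the model's description of what it sees and plans
    action: Action


class Browser(Protocol):
    def screenshot(self) -> bytes: ...
    def execute(self, action: Action) -> None: ...


class Policy(Protocol):
    def next_step(self, task: str, screenshot: bytes, history: list[Step]) -> Step: ...


def run_agent(task: str, browser: Browser, policy: Policy, max_steps: int = 20) -> list[Step]:
    history: list[Step] = []
    for _ in range(max_steps):
        shot = browser.screenshot()                   # 1. observe the page as pixels
        step = policy.next_step(task, shot, history)  # 2. reason about the next step
        history.append(step)
        if step.action.kind == "done":
            break
        browser.execute(step.action)                  # 3. act on the page
    return history


class FakeBrowser:
    """Stand-in browser that records actions instead of driving a real page."""
    def __init__(self) -> None:
        self.executed: list[Action] = []

    def screenshot(self) -> bytes:
        return f"page-after-{len(self.executed)}-actions".encode()

    def execute(self, action: Action) -> None:
        self.executed.append(action)


class ScriptedPolicy:
    """Stand-in model that replays a fixed list of steps."""
    def __init__(self, steps: list[Step]) -> None:
        self.steps = list(steps)

    def next_step(self, task: str, screenshot: bytes, history: list[Step]) -> Step:
        return self.steps[len(history)]


browser = FakeBrowser()
policy = ScriptedPolicy([
    Step("The search box is at the top of the page.", Action("click", 400, 60)),
    Step("Type the query into the search box.", Action("type", text="molmo")),
    Step("Results are visible; the task is complete.", Action("done")),
])
trace = run_agent("search for molmo", browser, policy)
print([s.action.kind for s in trace])  # ['click', 'type', 'done']
```

Keeping the browser and the model behind small interfaces like these makes the loop easy to test offline and keeps the `max_steps` cap in one place.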

Why screenshots? They’re “far more compact than serialized page representation, which can consume tens of thousands of tokens.” Visual interfaces stay stable when underlying HTML changes. And the model’s reasoning is easier to debug when it’s describing what it sees rather than parsing DOM trees.
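A back-of-the-envelope comparison shows why the compactness argument holds. Every parameter here is an assumption for illustration: roughly 4 characters per text token, a ViT-style encoder with 14-pixel patches, and optional pooling of neighboring patches. MolmoWeb's real per-screenshot cost depends on its resolution, cropping, and pooling, which the article doesn't specify.

```python
import math


def estimate_text_tokens(serialized_page: str, chars_per_token: float = 4.0) -> int:
    """Rough token count for serialized HTML or an accessibility tree.

    ~4 characters per token is a common rule of thumb for BPE tokenizers
    on English-like text; markup can tokenize worse (assumption).
    """
    return math.ceil(len(serialized_page) / chars_per_token)


def estimate_image_tokens(width: int, height: int, patch: int = 14, pool: int = 1) -> int:
    """Rough token count for one image in a ViT-style encoder.

    The image is cut into patch x patch squares; some models then merge
    pool x pool neighboring patches before the language model (assumption).
    """
    cols = math.ceil(math.ceil(width / patch) / pool)
    rows = math.ceil(math.ceil(height / patch) / pool)
    return cols * rows


# Stand-in for a large serialized page: 5,000 repeated div tags (90,000 chars).
html = "<div class='item'>" * 5000
print(estimate_text_tokens(html))               # 22500
print(estimate_image_tokens(378, 378))          # 729 (27 x 27 patches)
print(estimate_image_tokens(378, 378, pool=2))  # 196 (14 x 14 after pooling)
```

Text cost grows with the size of the page's markup, while image cost is fixed by resolution, which is why a screenshot can stay cheap on pages whose DOM runs to tens of thousands of tokens.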

Under the hood: the Molmo 2 architecture, with Qwen3 as the language model and SigLIP2 as the vision encoder. Training used supervised fine-tuning on 64 H100 GPUs, with no reinforcement learning and no distillation from proprietary systems. Combined with the released data and code, that makes the training fully transparent: you can inspect what the model learned from and reproduce how it was trained.
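Supervised fine-tuning without reinforcement learning means the model learns purely by imitating demonstrations, typically through next-token cross-entropy on the target text. A common convention masks out prompt tokens so only the target (for an agent, its reasoning and action) is scored. Whether MolmoWeb masks exactly this way isn't stated in the article; this dependency-free sketch shows the generic objective.

```python
import math


def log_softmax(logits: list[float]) -> list[float]:
    # Subtract the max before exponentiating for numerical stability.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_z for v in logits]


def masked_sft_loss(logits: list[list[float]], targets: list[int], loss_mask: list[int]) -> float:
    """Mean next-token cross-entropy over positions where loss_mask == 1.

    logits[i] holds the model's scores over the vocabulary at position i,
    targets[i] is the demonstrated token there, and loss_mask[i] is 0 for
    prompt tokens (not supervised) and 1 for target tokens (supervised).
    """
    total, count = 0.0, 0
    for row, target, keep in zip(logits, targets, loss_mask):
        if keep:
            total -= log_softmax(row)[target]
            count += 1
    if count == 0:
        raise ValueError("mask selects no tokens")
    return total / count


# Three positions over a 3-token vocabulary: one prompt token (masked out)
# followed by two action tokens. Uniform logits give a loss of ln(3).
uniform = [[0.0, 0.0, 0.0]] * 3
print(masked_sft_loss(uniform, targets=[0, 1, 2], loss_mask=[0, 1, 1]))  # ≈ 1.0986
```

Because the loss only ever compares predictions against demonstrated tokens, there is no reward signal anywhere in the objective; everything the model learns traces back to the training data.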

The Controversy

MolmoWeb's release came at an existential moment for Ai2. On March 14, CEO Ali Farhadi stepped down after two and a half years. Ten days later, MolmoWeb shipped. Farhadi is now a corporate vice president at Microsoft on Mustafa Suleyman's Superintelligence team, joined by three other Ai2 researchers.

The board cited funding realities: “The cost to do extreme-scale open model research is extraordinary… it’s really hard to do extreme-scale model work inside of a nonprofit.” Ai2’s new primary backer—a $3.1 billion foundation—favors applied AI over expensive frontier model development.

The irony is that MolmoWeb shows Ai2 can still ship state-of-the-art open-source models. It tops every open-weight web agent on the reported benchmarks, releases the largest public training dataset for web agents (30,000 human trajectories across 1,100+ websites), and provides the full training pipeline, not just inference.

Interim CEO Peter Clark, an Ai2 founding member, is holding down the fort while the board searches for a permanent replacement. The question: Can nonprofit AI research sustain this level of output without its former leadership and amid shifting funding priorities?

What Developers Get

MolmoWeb is available now on Hugging Face (both 4B and 8B models) and GitHub with complete code. The MolmoWebMix dataset includes 30,000 task trajectories, 590,000 subtask demonstrations, and 2.2 million screenshot question-answer pairs. The full training stack is open—fine-tune it on your own workflows.
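Fine-tuning on your own workflows usually starts by turning recorded task trajectories into one training example per step. The sketch below assumes a hypothetical schema (`Trajectory`, `TrajectoryStep`, and string-serialized actions like `click(412, 88)`); MolmoWebMix's actual field names and action format aren't described in the article, so check the dataset card before adapting it.

```python
from dataclasses import dataclass


@dataclass
class TrajectoryStep:
    screenshot_path: str  # hypothetical field: path to this step's screenshot
    thought: str          # hypothetical field: reasoning recorded for the step
    action: str           # hypothetical serialized action, e.g. "click(412, 88)"


@dataclass
class Trajectory:
    task: str
    website: str
    steps: list[TrajectoryStep]


def to_training_examples(traj: Trajectory) -> list[dict]:
    """Turn one task trajectory into one (prompt, target) pair per step."""
    examples = []
    for i, step in enumerate(traj.steps):
        examples.append({
            "prompt": {
                "task": traj.task,
                "screenshot": step.screenshot_path,
                # Earlier actions tell the model how far the task has progressed.
                "previous_actions": [s.action for s in traj.steps[:i]],
            },
            # The model learns to emit its reasoning, then the next action.
            "target": f"{step.thought}\n{step.action}",
        })
    return examples


demo = Trajectory(
    task="Find the cheapest USB-C cable",
    website="shop.example.com",
    steps=[
        TrajectoryStep("step0.png", "The search bar is at the top.", "click(412, 88)"),
        TrajectoryStep("step1.png", "Type the product name.", 'type("usb-c cable")'),
    ],
)
for ex in to_training_examples(demo):
    print(ex["prompt"]["previous_actions"], "->", ex["target"].splitlines()[-1])
```

Splitting per step means a single long trajectory yields many supervised examples, each conditioned on only the current screenshot and the action history.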

Self-hosting means no API costs and your data stays local. Use cases: browser automation, form filling, web scraping, testing. The 4B model is light enough for edge deployment, the 8B for server-side tasks. There’s even a demo at molmoweb.allen.ai to test it.

The Bigger Picture

GitHub now hosts 4.3 million AI-related repositories, with LLM projects up 178% year over year. Meta and Mistral are pushing open-weight development faster than many expected. But OpenAI's o1-class reasoning models still lead on complex multi-step reasoning, proprietary territory that open source hasn't matched.

The market is splitting: frontier closed models for the highest-stakes enterprise applications, open source for everyday deployments where cost and privacy matter. MolmoWeb sits firmly in the second camp. It won't replace Claude or Gemini for cutting-edge tasks, but for web navigation it shows open models can compete. For many use cases, the transparency advantage (inspect it, modify it, fine-tune it) matters more than raw performance.

Download it today. Build tomorrow. MolmoWeb is live proof that nonprofit AI research under pressure can still ship models that matter. Whether Ai2 can keep doing it without Farhadi is the open question.