The agent orchestration space exploded in Q1 2026. OpenAI shipped its production-ready Agents SDK in March, replacing the experimental Swarm framework. Meanwhile, Ruflo hit 1,173 GitHub stars today, DeerFlow 2.0 claimed the #1 trending spot with 3,787 stars, and Gartner predicts 40% of enterprise apps will deploy multi-agent swarms by year-end. Developers now face a critical architectural choice: handoff-based orchestration (explicit control transfer) versus swarm-based coordination (decentralized autonomous agents). Choose wrong and you’re looking at months of technical debt.

Multi-agent systems delivered a 100% actionable recommendation rate versus 1.7% for single agents in incident response trials, and the same study reports an 80x improvement in action specificity. However, frameworks vary widely in complexity, cost (5x-20x token consumption), and production readiness. Teams need guidance now, not in six months when they’re already locked into the wrong architecture.

Handoffs vs Swarms: The Architecture Choice That Matters

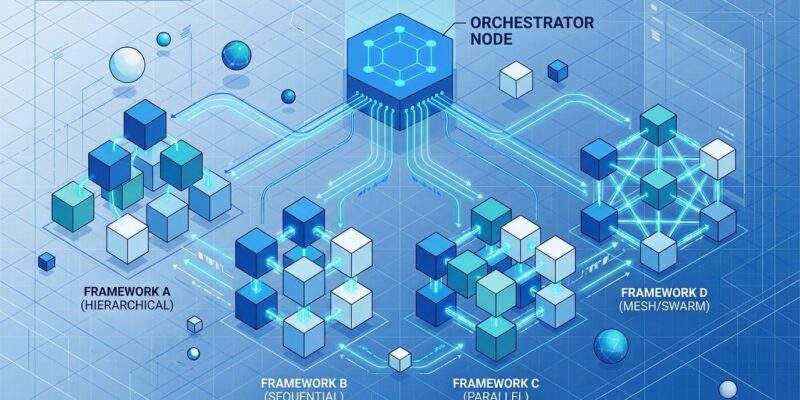

The fundamental decision isn’t which framework to use; it’s which architecture pattern fits your use case. Handoffs use explicit control transfer. OpenAI’s Agents SDK implements this with agents that pass control to specialized peers, carrying conversation context through transitions. A triage agent receives a customer request, classifies the intent, and hands off to the billing agent or technical support agent. The downstream agent can be given a concise recap rather than the full conversation history.
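To make the triage example concrete, here is a minimal, library-free sketch of explicit control transfer. It does not use the Agents SDK; the handler functions, the `AGENTS` registry, and the `run` loop are hypothetical names chosen for illustration, and keyword matching stands in for an LLM intent classifier.

```python
from typing import Callable, Dict, List, Optional, Tuple

# A handler reads the conversation so far and returns (reply, handoff_target).
# handoff_target is None when the agent finishes the request itself.
Handler = Callable[[List[str]], Tuple[str, Optional[str]]]


def triage(history: List[str]) -> Tuple[str, Optional[str]]:
    # Assumption: keyword matching stands in for a real intent classifier.
    request = history[0].lower()
    if "invoice" in request or "refund" in request:
        return "Routing you to billing.", "billing"
    return "Routing you to technical support.", "tech_support"


def billing(history: List[str]) -> Tuple[str, Optional[str]]:
    return "Billing here: I can help with that charge.", None


def tech_support(history: List[str]) -> Tuple[str, Optional[str]]:
    return "Support here: let's debug the issue.", None


AGENTS: Dict[str, Handler] = {
    "triage": triage,
    "billing": billing,
    "tech_support": tech_support,
}


def run(user_message: str, start: str = "triage", max_hops: int = 5):
    """Follow explicit handoffs until an agent answers without delegating."""
    history = [user_message]
    trail: List[str] = []
    current: Optional[str] = start
    for _ in range(max_hops):
        trail.append(current)
        reply, current = AGENTS[current](history)
        history.append(reply)
        if current is None:
            return trail, history
    raise RuntimeError("handoff limit exceeded")


trail, history = run("I was charged twice on my invoice")
print(trail)
```

The `max_hops` guard is a design choice worth keeping in any handoff system: because control transfer is explicit, a misrouted request can otherwise bounce between agents indefinitely.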

Swarms take the opposite approach: decentralized coordination. Ruflo and Swarms use Q-Learning routers, message queues, and consensus algorithms (Byzantine, Raft, Gossip) to let agents operate as autonomous peers. They share state via blackboards and make local decisions about when to hand off work. Ruflo deploys 60+ specialized agents—coders, security auditors, testers—each knowing only its domain and when to delegate.

The difference matters because it determines your system’s complexity, failure modes, and scalability ceiling. Handoffs suit simple coordination tasks: customer support routing, approval workflows, sequential pipelines. Swarms suit complex, parallel, fault-critical tasks: incident response, multi-domain research, distributed decision-making. Most failures happen at the handoffs between agents, not inside the agents themselves. Centralized orchestrators create a single point of failure, while swarms distribute that risk.

100% vs 1.7%: Multi-Agent Performance Isn’t Incremental

An arXiv preprint (2511.15755) quantifies what developers intuitively know: specialized agents working together dramatically outperform generalist single agents. The study ran 348 controlled trials comparing single-agent copilots with multi-agent systems on identical incident response scenarios. Single agents produced actionable recommendations just 1.7% of the time. Multi-agent orchestration hit 100%, with zero variance.

The study also reports an 80x improvement in action specificity and a 140x improvement in solution correctness. Multi-agent systems delivered deterministic outputs, while single-agent approaches failed inconsistently, making them unusable in production. The difference between “works 100% of the time” and “fails 98% of the time” isn’t incremental. It’s the difference between production-ready and experimental.

This explains why enterprises are rushing to adopt agent orchestration frameworks despite the operational complexity. When the performance gap is this wide, the question isn’t whether to use multi-agent systems, but which framework to choose and how to manage the costs.

The Contenders: OpenAI, Ruflo, Swarms, DeerFlow

Four frameworks dominate the March 2026 landscape, each optimized for different use cases.

OpenAI Agents SDK replaced the experimental Swarm framework in March with a production-grade handoff architecture. It’s the best choice for prototyping and for cases where you need tight control. Built-in guardrails validate inputs and outputs, preventing harmful responses. Tracing dashboards visualize agent execution for debugging. Persistent memory supports long-running sessions. The trade-off is vendor lock-in: it works best with OpenAI models and integrates tightly with their ecosystem. Choose this when you need enterprise support and safety features, and don’t mind the OpenAI dependency.

Ruflo hit 1,173 GitHub stars today on the strength of its answer to the cost problem. It optimizes for Claude Code but supports GPT, Gemini, Cohere, and local models. The killer feature is Agent Booster (WASM): simple code transformations (formatting, imports, basic refactors) run 352x faster without LLM calls. Combined with tiered model routing, this delivers an 85% API cost reduction. Ruflo shines for software development workflows where you need 60+ specialized agents (coder, reviewer, architect, security auditor) working together. The self-learning Q-Learning router remembers successful patterns and automatically routes similar tasks to the best-performing agents.
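A router that "remembers successful patterns" can be approximated with a small value table. The sketch below is not Ruflo's implementation; it is a single-step (bandit-style) simplification of the Q-learning update, Q ← Q + α(r − Q), with no next-state term, and the `QRouter` class, task types, and reward values are all hypothetical.

```python
import random
from collections import defaultdict
from typing import Dict, List, Tuple


class QRouter:
    """Epsilon-greedy router that learns which agent handles each task type best."""

    def __init__(self, agents: List[str], alpha: float = 0.5,
                 epsilon: float = 0.1, seed: int = 0) -> None:
        self.agents = list(agents)
        self.alpha = alpha      # learning rate
        self.epsilon = epsilon  # probability of exploring a random agent
        self.rng = random.Random(seed)
        self.q: Dict[Tuple[str, str], float] = defaultdict(float)

    def route(self, task_type: str) -> str:
        # Explore occasionally; otherwise pick the highest-valued agent.
        # Ties resolve to the agent listed first.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.agents)
        return max(self.agents, key=lambda a: self.q[(task_type, a)])

    def update(self, task_type: str, agent: str, reward: float) -> None:
        # Move the stored value a fraction alpha toward the observed reward.
        key = (task_type, agent)
        self.q[key] += self.alpha * (reward - self.q[key])


router = QRouter(["coder", "reviewer"], epsilon=0.0)
router.update("refactor", "reviewer", reward=1.0)  # reviewer did well on a refactor
print(router.route("refactor"))
```

In practice the reward signal is the hard part: it has to come from something measurable, such as tests passing or a human accepting the change.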

Swarms (6.1k stars) positions itself as the universal orchestrator with 10+ swarm patterns, including SequentialWorkflow, ConcurrentWorkflow, HierarchicalSwarm, MixtureOfAgents, and GraphWorkflow. The SwarmRouter lets you switch strategies dynamically: change one parameter to go from sequential to parallel to hierarchical without restructuring code. AutoSwarmBuilder generates specialized agents from task descriptions automatically. Choose Swarms when you need pattern flexibility and want to experiment with different agent orchestration strategies before committing to one.
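The "change one parameter" idea is a plain strategy pattern. The sketch below illustrates it without using the Swarms library: `dispatch`, `STRATEGIES`, and the two toy agents are hypothetical names, and only sequential and concurrent strategies are shown.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List

AgentFn = Callable[[str], str]


def run_sequential(agents: List[AgentFn], task: str) -> List[str]:
    # Each agent receives the previous agent's output (a pipeline).
    out = task
    for agent in agents:
        out = agent(out)
    return [out]


def run_concurrent(agents: List[AgentFn], task: str) -> List[str]:
    # Every agent receives the original task; results keep agent order.
    with ThreadPoolExecutor() as pool:
        return list(pool.map(lambda agent: agent(task), agents))


STRATEGIES: Dict[str, Callable[[List[AgentFn], str], List[str]]] = {
    "sequential": run_sequential,
    "concurrent": run_concurrent,
}


def dispatch(agents: List[AgentFn], task: str, strategy: str = "sequential") -> List[str]:
    """Run the same agents under a different coordination strategy."""
    if strategy not in STRATEGIES:
        raise ValueError(f"unknown strategy: {strategy!r}")
    return STRATEGIES[strategy](agents, task)


def shout(text: str) -> str:
    return text.upper()


def exclaim(text: str) -> str:
    return text + "!"


print(dispatch([shout, exclaim], "ship it", strategy="sequential"))
print(dispatch([shout, exclaim], "ship it", strategy="concurrent"))
```

The agent list never changes between the two calls; only the `strategy` argument does, which is what makes it cheap to compare patterns before committing to one.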

DeerFlow 2.0 claimed #1 on GitHub trending (3,787 stars) following ByteDance’s February rewrite. We covered DeerFlow’s launch here. It’s purpose-built for deep research, content creation, and asset generation. Lead agents spawn sub-agents in sandboxed Docker containers with scoped contexts and termination conditions. Persistent memory stored as JSON tracks user preferences, writing styles, and project history across sessions. The framework handles web scraping, slide deck creation, and UI component generation out of the box. Its model-agnostic design works with any OpenAI-compatible API. Choose DeerFlow for research and creative workflows where isolation and memory matter more than raw speed.
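JSON-backed memory of this kind is straightforward to picture. The sketch below is an assumption-laden illustration, not DeerFlow's actual schema or API: the file layout (`preferences` and `history` keys) and the `load_memory`, `save_memory`, and `remember` helpers are hypothetical.

```python
import json
from pathlib import Path
from typing import Any, Dict


def load_memory(path: str) -> Dict[str, Any]:
    """Read the memory file, or start empty if this is the first session."""
    file = Path(path)
    if not file.exists():
        return {"preferences": {}, "history": []}
    return json.loads(file.read_text(encoding="utf-8"))


def save_memory(path: str, memory: Dict[str, Any]) -> None:
    Path(path).write_text(json.dumps(memory, indent=2), encoding="utf-8")


def remember(path: str, key: str, value: str, event: str) -> Dict[str, Any]:
    """Record one preference and one history event, then persist both."""
    memory = load_memory(path)
    memory["preferences"][key] = value
    memory["history"].append(event)
    save_memory(path, memory)
    return memory
```

A later session calls `load_memory` with the same path and gets the accumulated preferences back, which is what lets an agent keep a user's writing style or project context between runs.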

The 5x-20x Token Problem (And How Ruflo Solves It)

Agentic workflows consume 5x-20x more tokens than standard completions. Multiple agents discussing, delegating, and coordinating generate massive conversation histories. Without optimization, costs spiral quickly. A task that costs $0.10 with a single agent jumps to $1-2 with poor orchestration.
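The arithmetic behind those figures is worth spelling out. The sketch below assumes cost scales linearly with token count, which ignores differences between input and output token pricing; the function name is hypothetical.

```python
from typing import Tuple


def agentic_cost_range(baseline_usd: float,
                       low_multiplier: float = 5.0,
                       high_multiplier: float = 20.0) -> Tuple[float, float]:
    """Scale a single-agent cost by the 5x-20x token multiplier range."""
    return baseline_usd * low_multiplier, baseline_usd * high_multiplier


low, high = agentic_cost_range(0.10)
print(f"${low:.2f} to ${high:.2f} per task")
```

For a $0.10 baseline the full 5x-20x range works out to $0.50 to $2.00, so the $1-2 figure corresponds to the upper half of the range (10x-20x).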

Ruflo demonstrates the solution with tiered routing. Simple tasks (formatting fixes, import updates) hit the Agent Booster WASM layer—sub-millisecond execution, zero LLM cost. Medium complexity work (standard code generation, basic tests) routes to cheap models like GPT-3.5 or Claude Haiku. Only complex tasks (security logic, edge case analysis) escalate to premium models like Claude Opus or GPT-4.
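The three tiers map naturally onto a small lookup. This is a sketch of the general tiering idea, not Ruflo's routing logic: the `Tier` enum, the task-kind labels, and `pick_tier` are hypothetical, and a real router would classify tasks from their content rather than from a label.

```python
from enum import Enum


class Tier(Enum):
    LOCAL = "local transform, no LLM call"
    CHEAP = "low-cost model"
    PREMIUM = "premium model"


# Assumption: tasks arrive already labeled with a kind.
SIMPLE_KINDS = {"format", "fix_imports"}
MEDIUM_KINDS = {"generate_code", "write_basic_tests"}


def pick_tier(task_kind: str) -> Tier:
    """Send each task to the cheapest tier that can plausibly handle it."""
    if task_kind in SIMPLE_KINDS:
        return Tier.LOCAL
    if task_kind in MEDIUM_KINDS:
        return Tier.CHEAP
    # Anything unrecognized escalates, trading cost for safety.
    return Tier.PREMIUM


for kind in ("format", "generate_code", "security_review"):
    print(kind, "->", pick_tier(kind).value)
```

Defaulting unknown work to the premium tier is a deliberate choice in this sketch: misrouting a hard task to a cheap model costs quality, while misrouting an easy task to a premium model only costs money.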

The result: 85% API cost reduction and 250% greater effective usage. For a team spending $10,000/month on agentic AI, that’s $8,500 saved and higher quality outputs. Cost optimization isn’t optional—it’s the difference between sustainable agentic AI and budget-killing experiments.

Industry reports show teams revisit pricing quarterly because vendor costs shift constantly. Agentic usage is the primary cost variable, consuming tokens at rates that make traditional pricing models unsustainable. Expect more frameworks to adopt Ruflo-style tiering as the economic reality becomes clear.

Do You Actually Need Swarms? Most Teams Don’t (Yet)

Gartner’s prediction of 40% enterprise adoption by year-end 2026 may be overly optimistic. Framework vendors benefit from complexity hype, so developers should be skeptical. Single agents with good prompts often outperform poorly orchestrated swarms, and over-engineering creates operational burden without ROI.

Industry guidance is clear: start centralized, decentralize only when hitting concrete performance bottlenecks. Most production systems use orchestrator-worker patterns (handoffs) initially. Only after encountering scalability limits do they adopt swarm architectures. Hybrid patterns dominate real deployments—hierarchical coordination at the top with mesh patterns at the leaf level.

The contrarian take: you probably don’t need multi-agent orchestration yet. Try a single agent with chain-of-thought prompting first. Add handoffs when specialization clearly helps. Upgrade to swarms when actual performance data, not vendor marketing, proves it necessary. The framework wars have only just begun, so expect consolidation. Too many options exist (OpenAI SDK, Ruflo, Swarms, DeerFlow, LangGraph, CrewAI, AutoGen), and the market will likely converge on 2-3 winners. Choose frameworks with strong community support and clear migration paths.

Key Takeaways

- Architecture first, framework second: Decide handoffs vs swarms based on use case, then pick the framework that implements that pattern best.

- Performance gains are real: 100% vs 1.7% actionable rates show that multi-agent systems work when implemented correctly.

- Cost optimization is mandatory: 5x-20x token consumption requires tiered routing (WASM for simple tasks, cheap models for medium, premium for complex).

- Start simple: A single agent with good prompts often outperforms a poorly orchestrated swarm. Add complexity only when proven necessary.

- Framework selection matters long-term: OpenAI SDK for enterprise support, Ruflo for cost optimization, Swarms for pattern flexibility, DeerFlow for research workflows.

- Expect consolidation: There are too many frameworks today, and the market will likely consolidate to 2-3 winners over the next 12 months.