OpenClaw became the fastest-growing open-source project in GitHub history in early 2026, surging from 0 to 250,000 stars in just 84 days, a milestone that took Linux years to achieve. But the AI personal assistant’s viral success triggered an equally record-breaking security crisis. By February 2026, researchers had discovered 40,214 exposed instances with critical vulnerabilities, 63% of them exploitable via remote code execution. Then the creator joined OpenAI and the project moved to an independent foundation, leaving open questions about independence, security, and the future of AI agents with system-level access.

Fastest to 250K Stars, Fastest to Security Crisis

OpenClaw exploded to 250,000 GitHub stars in 84 days (January to March 2026), a count that took React about 10 years to reach. The self-hosted AI personal assistant gained 60,000 stars in its first 72 hours after Elon Musk retweeted it.

But viral adoption outpaced security hardening. Within weeks, SecurityScorecard discovered 40,214 exposed OpenClaw instances, 63% of them vulnerable to remote code execution. The culprit: developers deployed OpenClaw with default settings that bind to 0.0.0.0:3000, which listens on every network interface and so is reachable from the public internet on any host without a firewall, and that require no authentication. CVE-2026-25253 (CVSS 8.8) allowed attackers to compromise a system through a single malicious webpage; 12,812 instances were exploitable via this one-click takeover vulnerability.
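To make the bind-address problem concrete, here is a minimal Python sketch that classifies a `host:port` string as loopback-only or not. The function name `listens_beyond_loopback` is made up for illustration and is not part of OpenClaw; the check uses only the standard-library `ipaddress` module. Note that "beyond loopback" is not the same as "on the public internet": a firewall or NAT may still block access, so treat a `True` result as a warning, not a verdict.

```python
import ipaddress


def listens_beyond_loopback(bind: str) -> bool:
    """Return True if a 'host:port' bind string listens on more than loopback.

    Hypothetical helper for illustration; not an OpenClaw API.
    """
    host, _, _port = bind.rpartition(":")
    # A bare ":3000" or "3000" has no host part, which servers commonly
    # treat as "all interfaces", so it is mapped to 0.0.0.0 here.
    host = host.strip("[]") or "0.0.0.0"
    if host == "localhost":
        return False
    return not ipaddress.ip_address(host).is_loopback


if __name__ == "__main__":
    for candidate in ("0.0.0.0:3000", "127.0.0.1:3000", "[::1]:3000"):
        print(candidate, listens_beyond_loopback(candidate))
```

Any address outside the loopback range, including a private LAN address, is reported as reaching beyond the local machine.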

What Makes OpenClaw Different (And Dangerous)

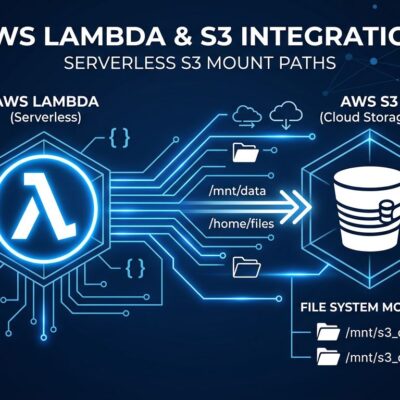

OpenClaw is a self-hosted AI personal assistant that acts as a message router, connecting chat platforms like WhatsApp, Slack, and Discord to LLMs (Claude, GPT, DeepSeek, or local models). It then grants these AI models autonomous access to local file systems, shell commands, and web browsers. The appeal: an “AI that actually does things” instead of just answering questions.
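The message-router idea can be sketched in a few lines of Python. This is an illustration of the pattern only, not OpenClaw’s actual code: `Message`, `Router`, and `echo_backend` are hypothetical names, and the backend is a stand-in where a real deployment would call an LLM API.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Message:
    platform: str  # e.g. "slack" or "discord"
    sender: str
    text: str


class Router:
    """Forwards each chat message to the backend registered for its platform."""

    def __init__(self) -> None:
        self._backends: Dict[str, Callable[[str], str]] = {}

    def register(self, platform: str, backend: Callable[[str], str]) -> None:
        self._backends[platform] = backend

    def dispatch(self, msg: Message) -> str:
        backend = self._backends.get(msg.platform)
        if backend is None:
            raise LookupError(f"no backend registered for {msg.platform!r}")
        return backend(msg.text)


def echo_backend(prompt: str) -> str:
    # Stand-in for a real model call (assumption for illustration).
    return f"model reply to: {prompt}"


if __name__ == "__main__":
    router = Router()
    router.register("slack", echo_backend)
    print(router.dispatch(Message("slack", "alice", "summarize my inbox")))
```

The risk described in this article begins one step later, when the model’s reply is allowed to trigger file, shell, or browser actions instead of simply being sent back to the chat.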

The project’s “configuration-first” philosophy uses a SOUL.md markdown file to define agent behavior—no coding required. This accessibility made OpenClaw viral but also made it trivially easy to deploy insecurely. The dangerous defaults: no authentication, public network exposure, and optional sandbox mode that many users disabled to unlock “full capabilities.” Developers wanted powerful AI assistants but didn’t grasp the security implications of granting system-level access.
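What “start in sandbox mode and expand gradually” could look like in code is a deny-by-default permission gate. The sketch below is an assumption about the general pattern, not OpenClaw’s real configuration model; `AgentPolicy`, `may_use`, and the tool names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import FrozenSet


@dataclass(frozen=True)
class AgentPolicy:
    sandbox: bool = True  # safe default: sandbox on
    allowed_tools: FrozenSet[str] = field(default_factory=frozenset)


def may_use(policy: AgentPolicy, tool: str) -> bool:
    """Deny by default: in sandbox mode only allowlisted tools may run."""
    if not policy.sandbox:
        # Equivalent to disabling the sandbox for "full capabilities":
        # every tool, including shell access, is permitted.
        return True
    return tool in policy.allowed_tools


if __name__ == "__main__":
    cautious = AgentPolicy(allowed_tools=frozenset({"read_file"}))
    print(may_use(cautious, "read_file"))  # allowlisted
    print(may_use(cautious, "shell"))      # not allowlisted
```

The design choice worth noting is that the unsafe state requires an explicit opt-out, whereas the defaults described above made the unsafe state the starting point.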

The Anthropic Trademark Backfire

OpenClaw started as “Clawdbot” in November 2025, named after Anthropic’s Claude. In January 2026, Anthropic issued a trademark complaint, forcing a rename to “Moltbot.” Three days later, creator Peter Steinberger renamed it again to “OpenClaw” because “Moltbot never quite rolled off the tongue.”

The irony is spectacular. OpenClaw was driving significant API traffic to Anthropic’s Claude service, since users primarily ran it with Claude as the backend LLM. By enforcing its trademark against a project that sent it so much business, Anthropic may have inadvertently pushed Steinberger toward its biggest competitor, which would soon hire him and sponsor the project.

Creator Joins OpenAI, Project Goes Independent

On February 14, 2026, Peter Steinberger announced he was joining OpenAI to “drive the next generation of personal agents.” Sam Altman called him “a genius with amazing ideas about agents interacting with each other.”

OpenClaw moved to an independent open-source foundation with OpenAI as a corporate sponsor. The project remains MIT-licensed and community-governed. Steinberger stated: “What I want is to change the world, not build a large company.” But questions persist: Can a foundation remain truly independent when sponsored by a company that competes in the same space?

Security Lessons: The Checklist 40K Users Ignored

The 40,214 exposed instances reveal catastrophic misconfigurations. Security researchers found 549 instances correlated with prior breaches and 1,493 with known vulnerabilities. Here’s the hardening checklist that most deployments ignored:

```yaml
# docker-compose.yml - Secure configuration
version: '3.8'
services:
  openclaw:
    image: openclaw/openclaw:latest
    volumes:
      - ~/.openclaw:/root/.openclaw:ro
      - ~/openclaw/workspace:/workspace
    environment:
      - CLAUDE_API_KEY=${CLAUDE_API_KEY}
    ports:
      - "127.0.0.1:3000:3000" # ← CRITICAL: Localhost only!
    restart: unless-stopped
```

Essential steps: Bind to localhost (127.0.0.1) instead of 0.0.0.0. Enable authentication immediately, since OpenClaw ships with none. Start with sandbox mode and expand permissions gradually. Update to version 2026.2.25+ to patch CVE-2026-25253. Audit third-party skills before installing: 12% of ClawHub marketplace skills were infected with malware in February 2026. Set API spending limits. Never mount your Docker socket or home directory into the container.
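Parts of this checklist can be checked mechanically. The following Python sketch audits a single Compose service, passed in as a plain dictionary so no YAML library is required, for non-localhost port bindings and risky mounts, and compares a version string against the patched release named above. The helpers `audit_service` and `is_patched` are hypothetical, cover only the Compose short syntax with an IPv4 loopback address, and assume OpenClaw versions are dot-separated integers.

```python
from typing import Dict, List, Tuple


def audit_service(service: Dict) -> List[str]:
    """Return human-readable findings for one Compose service definition."""
    findings: List[str] = []
    for port in service.get("ports", []):
        # Short syntax: "[host_ip:]host_port:container_port".
        parts = str(port).split(":")
        if len(parts) < 3 or parts[0] not in ("127.0.0.1", "localhost"):
            findings.append(f"port {port!r} is not bound to localhost")
    for volume in service.get("volumes", []):
        source = str(volume).split(":")[0]
        if source == "/var/run/docker.sock":
            findings.append("Docker socket is mounted into the container")
        elif source.rstrip("/") in ("~", "$HOME", "${HOME}"):
            findings.append("entire home directory is mounted into the container")
    return findings


def _as_tuple(version: str) -> Tuple[int, ...]:
    return tuple(int(part) for part in version.split("."))


def is_patched(version: str, minimum: str = "2026.2.25") -> bool:
    """Compare versions numerically, so 2026.2.9 sorts before 2026.2.25."""
    return _as_tuple(version) >= _as_tuple(minimum)


if __name__ == "__main__":
    risky = {
        "ports": ["3000:3000"],
        "volumes": ["/var/run/docker.sock:/var/run/docker.sock", "~:/host"],
    }
    for finding in audit_service(risky):
        print("WARNING:", finding)
```

A check like this cannot confirm that authentication is enabled or that installed skills are clean; those steps still need manual review.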

What’s Next for AI Agent Security

The OpenClaw Foundation is establishing governance structures—a maintainer council, security review process, and predictable release cadence—to stabilize after Steinberger’s departure. But the story foreshadows challenges the entire AI agent ecosystem will face.

The market is exploding. Gartner projects that 40% of enterprise applications will include task-specific AI agents by the end of 2026. Some 72% of Global 2000 companies already operate AI agent systems beyond pilots. But analysts warn that more than 40% of agentic AI projects risk cancellation by 2027 if governance, observability, and ROI clarity aren’t established. As one Hacker News commenter put it: “OpenClaw is a security nightmare dressed up as a daydream.”

Foundation governance matters. Open-source projects with clear contributor processes and organizational backing outlast single-maintainer projects. OpenClaw’s security crisis provides a blueprint for what NOT to do when deploying AI agents—lessons that apply to AutoGPT, CrewAI, and any custom agent with system access. Security can’t be an afterthought when you’re giving AI the keys to your file system.