What if AI agents worked the way your team does: on a shared canvas where everyone tackles different parts simultaneously, not in a linear chat thread? That’s the bet behind Spine Swarm (YC S23), which just topped Google DeepMind’s DeepSearchQA benchmark at 87.6%, beating OpenAI, Anthropic, and even Google’s own Gemini Deep Research, the last by 21.5 percentage points. Launched on Hacker News today (March 13, 2026), just days after the benchmark announcement on March 9, Spine offers a visual workspace where multi-agent swarms collaborate in parallel on complex projects: competitive analysis, financial modeling, SEO audits, pitch decks. The shift from chat to canvas reflects a broader 2026 trend in AI: moving from “asking” to “doing.”

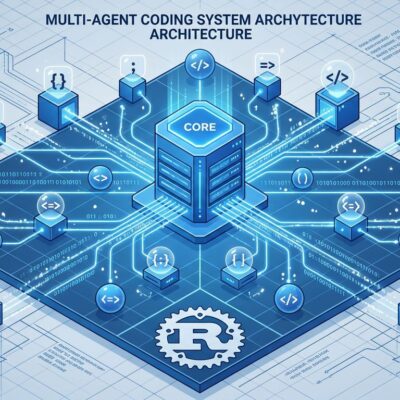

How Spine Swarm Works: Three-Tier Orchestration on Canvas

Spine uses a three-tier architecture that differs fundamentally from chat interfaces. An orchestrating agent decomposes your prompt into subtasks. Persona agents, each specialized for research, analysis, synthesis, or writing, execute in parallel. Cross-validation reviewers verify outputs before downstream handoffs. The “infinite canvas” is built from intelligent, agentic blocks: discrete units that browse the web, generate slides, or produce images, connected parent-to-child to form parallel workflows.
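
One way to picture the parent-to-child block structure is as a small directed graph. The sketch below is purely illustrative: the block names, the `CANVAS` mapping, and the `blocks_downstream_of` helper are hypothetical and not part of Spine’s product or API. It shows how, given such a graph, you could find which blocks depend on a particular block.

```python
from collections import deque

# Hypothetical canvas: each block name maps to the child blocks it feeds.
CANVAS = {
    "research_asana": ["analysis"],
    "research_monday": ["analysis"],
    "research_clickup": ["analysis"],
    "research_linear": ["analysis"],
    "analysis": ["synthesis"],
    "synthesis": ["slide_deck"],
    "slide_deck": [],
}


def blocks_downstream_of(canvas: dict[str, list[str]], start: str) -> list[str]:
    """Return `start` plus every block reachable from it, in breadth-first order."""
    order: list[str] = []
    seen = {start}
    queue = deque([start])
    while queue:
        block = queue.popleft()
        order.append(block)
        for child in canvas.get(block, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return order


if __name__ == "__main__":
    print(blocks_downstream_of(CANVAS, "research_clickup"))
```

In this toy graph, a change to `research_clickup` touches only that block and the three blocks downstream of it; the other three research blocks are not in the returned list.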

Consider a real example: “Analyze competitor pricing for SaaS products.” The orchestrator breaks this down: research pricing pages for four competitors, extract data, structure a comparison framework, synthesize insights, build a slide deck. Unlike chat, where you’d iterate back and forth, four research agents hit Asana, Monday, ClickUp, and Linear simultaneously. While they work, you can watch on the canvas or work on something else. The analysis agent structures findings once research completes. The synthesis agent identifies positioning differences. The artifact agent builds your slide deck. Total credit cost: approximately 7,000 for this demo, across 10+ browser blocks and multiple deliverables.
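
The flow in this example can be sketched with plain `asyncio`. This is a minimal illustration of the orchestrate, fan out, review pattern, not Spine’s implementation: `decompose`, `persona_agent`, `review`, and `run_swarm` are hypothetical names, and the “agent” merely sleeps instead of browsing a pricing page.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Result:
    subtask: str
    output: str
    approved: bool = False


def decompose(prompt: str, competitors: list[str]) -> list[str]:
    """Tier 1 (orchestrator): split one prompt into independent research subtasks."""
    return [f"Research pricing page for {name}" for name in competitors]


async def persona_agent(subtask: str) -> Result:
    """Tier 2 (persona agent): placeholder that sleeps instead of doing real research."""
    await asyncio.sleep(0.01)
    return Result(subtask=subtask, output=f"findings for: {subtask}")


def review(result: Result) -> Result:
    """Tier 3 (reviewer): approve only non-empty outputs before the downstream handoff."""
    result.approved = bool(result.output.strip())
    return result


async def run_swarm(prompt: str, competitors: list[str]) -> list[Result]:
    subtasks = decompose(prompt, competitors)
    # gather() runs all research subtasks concurrently and preserves input order.
    results = await asyncio.gather(*(persona_agent(s) for s in subtasks))
    return [review(r) for r in results]


if __name__ == "__main__":
    swarm = asyncio.run(
        run_swarm(
            "Analyze competitor pricing for SaaS products",
            ["Asana", "Monday", "ClickUp", "Linear"],
        )
    )
    for r in swarm:
        print(r.subtask, "->", "approved" if r.approved else "rejected")
```

Because the four research calls are awaited together, total wall-clock time is roughly that of the slowest subtask rather than the sum of all four, which is the time saving the canvas model relies on.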

The architecture clarifies when canvas beats chat. Parallel execution saves time for multi-source research. Structured handoffs prevent the context fragility that plagues long chat threads. Visual blocks enable surgical re-runs when one agent fails, without redoing the entire workflow. The system dynamically selects from 300+ AI models and uses ensemble approaches for high-stakes reasoning: running multiple frontier models on the same step and consolidating their outputs.
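
The article does not say how Spine consolidates ensemble outputs. As one simple possibility, the sketch below uses a majority vote over normalized answers; the `ensemble_answer` function and the stub “models” are assumptions made for illustration only.

```python
from collections import Counter
from typing import Callable


def ensemble_answer(
    question: str, models: list[Callable[[str], str]]
) -> tuple[str, float]:
    """Ask every model the same question and return (majority answer, agreement ratio)."""
    if not models:
        raise ValueError("at least one model is required")
    # Normalize whitespace and case so trivially different answers count as equal.
    answers = [model(question).strip().lower() for model in models]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / len(answers)


if __name__ == "__main__":
    # Stub callables standing in for real model calls (hypothetical).
    stubs = [lambda q: "42", lambda q: " 42 ", lambda q: "41"]
    answer, agreement = ensemble_answer("What is 6 * 7?", stubs)
    print(answer, round(agreement, 2))
```

A low agreement ratio is a useful signal in its own right: it marks a step where a reviewer, human or agent, may want to look before the result is handed downstream.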

Benchmark Achievement Validates Multi-Agent Approach

Spine Swarm achieved state-of-the-art performance on Google DeepMind’s DeepSearchQA with 87.6% accuracy, announced this week on March 9. Perplexity Deep Research scored 79.5%. GPT-5.2 Thinking High hit 71.3%. Gemini Deep Research managed 66.1%. These aren’t marginal wins—Spine beat Google’s own tool by 21.5 percentage points. Furthermore, on GAIA Level 3, another rigorous benchmark, Spine hit 76.0% (corrected for mislabeled questions), beating competitors like Genspark (58.8%), Manus (57.7%), and OpenAI Deep Research (47.6%).
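
The margins are differences in percentage points, not relative percentages. A short check, using only the DeepSearchQA scores quoted above (the `point_gaps` helper is just for illustration):

```python
# DeepSearchQA accuracy figures as quoted in this article.
SCORES = {
    "Spine Swarm": 87.6,
    "Perplexity Deep Research": 79.5,
    "GPT-5.2 Thinking High": 71.3,
    "Gemini Deep Research": 66.1,
}


def point_gaps(scores: dict[str, float], leader: str) -> dict[str, float]:
    """Percentage-point lead of `leader` over every other entry, rounded to 0.1."""
    top = scores[leader]
    return {
        name: round(top - score, 1)
        for name, score in scores.items()
        if name != leader
    }


if __name__ == "__main__":
    for name, gap in point_gaps(SCORES, "Spine Swarm").items():
        print(f"{name}: +{gap} points")
```

This gives leads of 8.1, 16.3, and 21.5 points over Perplexity, GPT-5.2 Thinking High, and Gemini Deep Research respectively.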

The transparent canvas revealed dataset quality issues that competitors missed, a meta-validation of the visual approach’s debugging advantages. Benchmarks provide the credibility that new paradigms need. This isn’t just a different UI: on these benchmarks, it is measurably better at complex multi-step reasoning tasks.

Chat vs Canvas: Choose Based on Your Project, Not Hype

The industry pivoted in 2026 from linear chat to visual canvas for complex AI work, but that doesn’t make chat obsolete—it makes choice critical. Use Spine’s canvas approach for complex multi-deliverable projects requiring research plus synthesis plus slides. Projects with three or more parallel subtasks benefit from simultaneous execution. Additionally, work requiring audit trails for compliance or team visibility needs the transparent canvas. Non-linear exploration during ideation fits the canvas model.

Stick with Claude or ChatGPT chat for quick questions, linear coding tasks, mobile usage, simple research, and established workflows that already work. The Hacker News debate captured the tension. One skeptic argued: “I can run the same thing on Claude in research mode and get a report with cited sources in a more digestable format on my phone.” Another commenter countered: “The persistence layer is probably more valuable than the visual canvas itself” for maintaining state across long multi-agent workflows.

The founders acknowledge that agents will get faster and that parallel execution may become “not manageable for a human to follow in real time.” In practice, the canvas adds value when you need to debug agent reasoning, surgically re-run specific blocks, or collaborate with teams, not when you just want instant answers on your phone. Don’t force-fit the tool.

Getting Started: Practical Tutorial for Your First Swarm

Sign up at getspine.ai; no setup is needed, and the free-forever plan lets you experiment. Create your first swarm with a single detailed prompt, which is the critical difference from iterating in chat. Specify the deliverable format, data sources, and depth level upfront. Example prompt: “Analyze competitor pricing strategies for project management SaaS products. Compare Asana, Monday, ClickUp, Linear. Deliver as slide deck with comparison tables and strategic insights.”

Watch the orchestrator decompose your task. Persona agents execute in parallel, and you’ll see blocks appear on the canvas as they work. Inspect each artifact as it’s created. Edit anything in place if needed. Export your deliverable when complete. Agents can run autonomously for 80+ minutes on large projects, using dynamic model selection from a pool of 300+ models. This autonomous execution distinguishes Spine from chat tools that require constant interaction.

Pricing Reality Check: Credit Costs and Transparency Concerns

Spine uses credit-based pricing starting at $12/month, alongside a free-forever plan. Credit costs scale with three factors: model selection (GPT-5 and Claude Opus consume more credits than economical models), block complexity (advanced blocks like Deep Research cost more due to multi-model operations behind the scenes), and a connected-blocks multiplier that rises by 1× for every three connected blocks (zero to three blocks use a 1× multiplier, four to six blocks a 2× multiplier).
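
The connected-blocks rule can be written as a small function. Treat this as a sketch under stated assumptions: the article gives only the 0 to 3 and 4 to 6 tiers, so continuing the pattern beyond six blocks is an extrapolation, and `estimate_credits` (base cost times multiplier) is a hypothetical simplification rather than Spine’s published formula.

```python
import math


def connection_multiplier(connected_blocks: int) -> int:
    """1x for 0-3 connected blocks, 2x for 4-6; higher tiers are an extrapolation."""
    if connected_blocks < 0:
        raise ValueError("connected_blocks must be non-negative")
    return max(1, math.ceil(connected_blocks / 3))


def estimate_credits(base_credits: int, connected_blocks: int) -> int:
    """Hypothetical estimate: a block's base cost scaled by the connection multiplier."""
    return base_credits * connection_multiplier(connected_blocks)


if __name__ == "__main__":
    for n in (0, 3, 4, 6):
        print(n, "connected blocks ->", f"{connection_multiplier(n)}x")
```

Even this rough model shows why deeply connected canvases get expensive: under these assumptions, the same block costs twice as much once it has a fourth connection.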

Hacker News users flagged pricing transparency as their top concern. One asked if daily refresh limits single-run usage, calling it “an abysmal pricing model” if true. Another reported “running out of trial credits before seeing complete results”—problematic for evaluation. Unlike flat-rate ChatGPT or Claude, usage-based pricing creates uncertainty. Developers need to estimate costs for production use. Meanwhile, the 7,000-credit demo example suggests complex projects could quickly exceed free tiers. Lack of upfront cost clarity slows enterprise adoption—a common AI tool adoption barrier in 2026.

Key Takeaways

- Spine Swarm achieved state-of-the-art 87.6% on DeepSearchQA benchmark (announced March 9), beating OpenAI, Anthropic, and Google by significant margins—validating the multi-agent canvas approach as production-worthy

- Use canvas for complex multi-deliverable projects with parallel subtasks (competitive analysis, pitch decks, financial models); stick with chat for quick questions and linear tasks, and don’t force-fit based on hype

- Three-tier orchestration (orchestrator, persona agents, cross-validation) with structured handoffs prevents context fragility that plagues long chat threads, while visual blocks enable surgical re-runs without redoing entire workflows

- Pricing transparency needs improvement: the demo task consumed approximately 7,000 credits, the free tier may be insufficient for thorough evaluation, and enterprise adoption is slowed by unclear upfront cost estimates

- The 2026 paradigm shift from chat “asking” to canvas “doing” extends beyond Spine: OpenAI Agent Builder and other visual agent platforms are gaining traction as the industry standardizes on orchestrated multi-agent workflows

Experiment with Spine for complex non-linear projects, but evaluate based on your actual workflows and credit costs—not just the impressive benchmarks. The canvas approach solves real problems for multi-source research and multi-format deliverables. Whether the visualization adds value or overhead depends entirely on your project complexity.