On March 11, 2026, Nvidia invested $2 billion in Amsterdam-based Nebius Group, acquiring an 8.3% stake at $94.94 per share. It is another major “neocloud” investment for Nvidia in early 2026, following a $2 billion bet on CoreWeave in January, and it signals a strategic shift from chip vendor to infrastructure player. The partnership aims to deploy 5 gigawatts of AI data center capacity by 2030, enough to power roughly 1 million Nvidia H100 GPUs.

This isn’t just about selling more GPUs. Nvidia is building a “shadow cloud” of specialized AI infrastructure providers to compete with hyperscalers—AWS, Azure, and Google Cloud—who are developing their own AI chips to reduce dependence on Nvidia silicon. By backing neoclouds, Nvidia ensures its hardware remains dominant even as traditional cloud providers push Amazon Trainium and Google TPU alternatives.

What “Neocloud” Actually Means

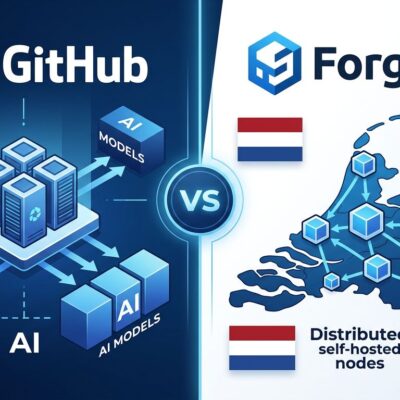

Neoclouds are AI-specialized cloud providers that focus exclusively on high-performance GPU infrastructure for model training and inference. Unlike traditional hyperscalers that offer hundreds of general-purpose services—databases, identity management, compliance tools—neoclouds do one thing: deliver bare-metal GPU clusters optimized for AI workloads.

The promise is compelling. An Nvidia DGX H100 instance costs $98 per hour on AWS or Azure. On a neocloud like Nebius or CoreWeave, the same instance runs $34 per hour—a documented 66% cost savings according to Uptime Institute analysis. Additionally, neoclouds offer faster GPU allocation (days instead of weeks) and priority access to Nvidia’s latest chip architectures. For companies where 80% of infrastructure spending goes to AI training, that’s real money.
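As a quick check on the pricing comparison, the sketch below computes the discount implied by the two hourly rates quoted above. The 30-day run length is an assumption added purely for illustration, and the function and constant names are hypothetical. At these rates the discount comes out at about 65%, close to the 66% figure cited.

```python
def savings_percent(baseline_rate: float, alternative_rate: float) -> float:
    """Percentage saved by paying alternative_rate instead of baseline_rate."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (baseline_rate - alternative_rate) / baseline_rate * 100.0


# Hourly rates quoted in the article (USD per DGX H100 instance-hour).
HYPERSCALER_RATE = 98.0
NEOCLOUD_RATE = 34.0

# Assumption for illustration only: one instance running around the clock for 30 days.
HOURS = 30 * 24

saving = savings_percent(HYPERSCALER_RATE, NEOCLOUD_RATE)
monthly_difference = (HYPERSCALER_RATE - NEOCLOUD_RATE) * HOURS

print(f"Savings at quoted rates: {saving:.1f}%")
print(f"Difference over {HOURS} hours: ${monthly_difference:,.0f}")
```

Real bills depend on reserved-capacity discounts, storage, and networking charges, none of which this sketch models.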

The market reflects that demand. Synergy Research Group puts neocloud revenue at $23 billion in 2025 and projects $180 billion by 2030. That is nearly an eightfold increase in five years, or roughly 50% compound annual growth. Players like CoreWeave, Nebius, and Lambda Labs are carving out territory that traditional clouds struggle to serve efficiently.
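A short sanity check on the growth figures: the sketch below applies the standard compound annual growth rate formula to the $23 billion (2025) and $180 billion (2030) endpoints. For comparison it also shows where revenue would land if it tripled every year, which is what 200% annual growth would mean. The function name is hypothetical.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values, as a fraction."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted in the article, in billions of USD.
REVENUE_2025 = 23.0
REVENUE_2030 = 180.0
YEARS = 5

implied_rate = cagr(REVENUE_2025, REVENUE_2030, YEARS)
print(f"Implied compound annual growth: {implied_rate:.1%}")

# Comparison case: 200% annual growth means revenue triples each year.
tripled_each_year = REVENUE_2025 * (1 + 2.0) ** YEARS
print(f"2030 revenue at 200% annual growth: ${tripled_each_year:,.0f} billion")
```

The endpoints imply growth of about 51% per year. Tripling annually would put 2030 revenue in the trillions, far above the $180 billion projection.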

Nvidia’s Shadow Cloud Strategy

Nvidia’s investment pattern reveals a calculated vertical integration strategy. In Q1 2026 alone, the company deployed $2 billion into CoreWeave (January), $2 billion into Nebius (March), and is reportedly targeting Lambda Labs at a $40-50 billion valuation. This isn’t passive portfolio diversification—it’s building ownership across the AI infrastructure stack.

Historically, Nvidia sold chips to AWS, Azure, and Google Cloud, which then rented those GPUs to customers. Now, Nvidia takes 5-10% equity stakes in neoclouds, provides priority access to upcoming chip architectures (Rubin, Vera CPUs, BlueField storage), and integrates its full product stack directly into these platforms. As industry analysts note, “By propping up CoreWeave and Nebius, Nvidia is building a shadow cloud that allows it to bypass the hyperscalers whenever they push their own internal AI chips.”

This resembles early 20th-century vertical integration—steel barons who owned mines, factories, and railroads. Nvidia now controls the silicon, the software (CUDA ecosystem), and significant pieces of the infrastructure. The strategy is defensive: hyperscalers are developing custom chips precisely to escape Nvidia’s GPU monopoly. Amazon’s Trainium and Google’s TPU represent existential threats to Nvidia’s dominance. By investing in neoclouds, Nvidia ensures customers have alternatives that still run on Nvidia hardware.

Innovation or Expensive Rebrand?

Industry experts are divided on whether neoclouds represent genuine innovation or sophisticated marketing for “GPU rental services.” Bulls cite explosive growth and real cost savings. Synergy Research Group projects neocloud revenue will reach $180 billion by 2030, up from $23 billion in 2025. The 66% cost advantage over hyperscalers is documented and repeatable. For AI-first companies, neoclouds deliver immediate, measurable value.

Skeptics warn of hype and fragile economics. McKinsey’s analysis flags “a lot of hype and exaggerated claims about the massive buildout of gigawatt campuses.” Computer Weekly notes that neoclouds are “well-capitalized but have limited experience running cloud services at scale.” The core concern: bare-metal-as-a-service economics depend on sustained GPU shortages and high hyperscaler pricing. If GPU supply normalizes or AWS competes aggressively on price, can neoclouds survive?

Moreover, neoclouds lack the breadth of hyperscaler ecosystems. You get GPUs and networking, but no managed databases, identity services, or compliance frameworks. For enterprises with complex workloads, this means stitching together multiple vendors—operational complexity that hyperscalers solve by owning the full stack. As one CIO noted, “As AI matures and becomes more efficient, hyperscalers may reclaim outsourced workloads.”

What Nebius Is

Nebius Group emerged from Yandex’s $5.4 billion divestment in mid-2024, when the Russian tech giant sold its domestic operations and rebranded its international assets as an independent AI infrastructure company. The Amsterdam-based company operates a state-of-the-art data center in Finland and had already secured major contracts before Nvidia’s investment: $17 billion with Microsoft and $3 billion with Meta.

This isn’t a startup scraping for customers. Nebius brings operational experience from Yandex’s hyperscale infrastructure and engineering talent capable of managing gigawatt-scale deployments. The market responded enthusiastically: Nebius stock jumped 16% on the Nvidia announcement, a sign that investors welcomed the partnership.

When to Choose Neocloud vs Hyperscaler

Decision criteria are crystallizing. Choose neoclouds if roughly 80% or more of your infrastructure spending goes to AI training or inference, cost is a primary constraint, and you need GPUs immediately. Bare-metal performance and priority access to Nvidia’s latest chips (Blackwell, Rubin) deliver competitive advantages for AI-first companies.

Stick with hyperscalers if you need compliance certifications (SOC 2, HIPAA, FedRAMP), require integrated services (databases, security, monitoring), or run hybrid workloads where AI is a minority of infrastructure. Enterprise contracts with AWS, Azure, or Google Cloud often include volume discounts that offset neocloud savings. Additionally, hyperscaler stability—financial backing, acquisition resistance, long-term roadmaps—matters for risk-averse organizations.
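The criteria in the two paragraphs above can be written down as a simple rule of thumb. The sketch below is one possible encoding, not an established framework: the `Workload` fields, the 50% cutoff for "AI is a minority of infrastructure," and the choice to return "mixed" when signals conflict are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    ai_spend_share: float            # fraction of infrastructure spend on AI, 0.0 to 1.0
    cost_constrained: bool           # cost is a primary constraint
    needs_gpus_immediately: bool     # cannot wait weeks for GPU allocation
    needs_compliance_certs: bool     # e.g. SOC 2, HIPAA, FedRAMP
    needs_integrated_services: bool  # managed databases, security, monitoring


def recommend(w: Workload) -> str:
    """Return 'neocloud', 'hyperscaler', or 'mixed' for conflicting or weak signals."""
    # Neocloud case: all three conditions from the article must hold.
    neocloud_fit = (
        w.ai_spend_share >= 0.80
        and w.cost_constrained
        and w.needs_gpus_immediately
    )
    # Hyperscaler case: any one condition from the article is enough.
    # The 0.50 cutoff for "AI is a minority" is an assumption.
    hyperscaler_fit = (
        w.needs_compliance_certs
        or w.needs_integrated_services
        or w.ai_spend_share < 0.50
    )
    if neocloud_fit and not hyperscaler_fit:
        return "neocloud"
    if hyperscaler_fit and not neocloud_fit:
        return "hyperscaler"
    return "mixed"


ai_startup = Workload(0.85, True, True, False, False)
regulated_enterprise = Workload(0.20, False, False, True, True)
print(recommend(ai_startup))
print(recommend(regulated_enterprise))
```

A real decision would also weigh negotiated enterprise discounts and vendor stability, which the article mentions but which do not reduce to a boolean.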

The smart money isn’t choosing neocloud OR hyperscaler. It’s hybrid architectures: train models on neoclouds where cost and performance matter most, deploy inference and applications on hyperscalers where integration and services add value. This approach captures 66% cost savings on the most expensive workloads while maintaining ecosystem benefits for everything else.
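To see what the hybrid approach is worth on the total bill, note that the discount applies only to the share of spending that moves to a neocloud. The sketch below works through that arithmetic. It assumes the 66% discount cited above and, purely for illustration, that 80% of spending is training that can be moved; the function name is hypothetical.

```python
def blended_savings(neocloud_share: float, neocloud_discount: float) -> float:
    """Overall savings fraction when only part of the spend gets the discount."""
    if not 0.0 <= neocloud_share <= 1.0:
        raise ValueError("neocloud_share must be between 0 and 1")
    if not 0.0 <= neocloud_discount <= 1.0:
        raise ValueError("neocloud_discount must be between 0 and 1")
    return neocloud_share * neocloud_discount


# Assumptions for illustration: 80% of spend is movable training work,
# and the neocloud discount on that work is the 66% cited in the article.
TRAINING_SHARE = 0.80
NEOCLOUD_DISCOUNT = 0.66

overall = blended_savings(TRAINING_SHARE, NEOCLOUD_DISCOUNT)
print(f"Overall savings with {TRAINING_SHARE:.0%} on neocloud: {overall:.1%}")

# The benefit shrinks quickly as the AI share of spending falls.
for share in (0.20, 0.50, 0.80):
    print(f"  share {share:.0%} -> overall savings {blended_savings(share, NEOCLOUD_DISCOUNT):.1%}")
```

Under these assumptions the overall bill falls by a little over half. A company with only 20% of its spend in AI would save about 13%, which may not justify managing a second vendor.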

Nvidia’s $2 billion Nebius investment isn’t just funding—it’s a statement. The company is betting that AI infrastructure will fragment into specialized tiers, and neoclouds will own the high-performance computing layer. Whether this becomes reality or a temporary phenomenon crushed by hyperscaler price wars remains uncertain. For now, developers have options, and Nvidia is ensuring those options still run on Nvidia chips.