English Wikipedia banned AI-generated articles on March 20, 2026, closing a Request for Comment (RfC) with a decisive 44-2 vote. The policy prohibits using large language models (LLMs) such as ChatGPT or Claude to generate or rewrite article content, on the grounds that LLM output often violates Wikipedia’s “core content policies.” This makes Wikipedia the first major knowledge platform to institutionally reject AI-generated content, even as the rest of the web drowns in AI slop.

The enforcement paradox is obvious: Wikipedia admits AI detection tools are “currently unreliable,” so volunteer moderators must identify violations based on content quality, not technical signatures. That leaves a simple question: how do you ban something you can’t detect?

The Compounding Risk: Why This Matters Beyond Wikipedia

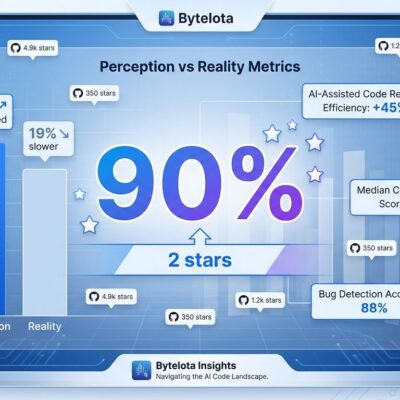

Wikipedia identified a critical feedback loop threatening the entire AI ecosystem: inaccurate AI content enters Wikipedia, AI companies scrape Wikipedia for training data, the bad data re-enters model training, and future models produce worse content. Repeat. Researchers call the result of this loop model collapse, and the damage compounds with every generation of models.

Research published in Nature shows that training models on text generated by their predecessors causes a “consistent decrease in lexical, syntactic, and semantic diversity.” Early collapse erases minority data and reduces output diversity. Late collapse is worse: the model “converges to a distribution that carries little resemblance to the original.” Individual errors combine and compound from one generation to the next.
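The “erases minority data” step can be seen in a toy simulation. This is a hypothetical sketch, not the Nature study’s setup: the “model” is just a table of token frequencies, and each generation is refit on a finite sample of text drawn from the previous generation. The function name `simulate_collapse`, the vocabulary, and the sample sizes are illustrative assumptions.

```python
import random
from collections import Counter


def simulate_collapse(probs, samples_per_gen, generations, seed=0):
    """Toy model-collapse loop (hypothetical illustration only).

    Each generation "trains" a new model by taking the empirical token
    frequencies of a finite corpus sampled from the previous model.
    Returns the number of tokens with nonzero probability at the start
    and after each generation.
    """
    rng = random.Random(seed)
    model = dict(probs)
    support_sizes = [sum(1 for p in model.values() if p > 0)]
    for _ in range(generations):
        # Only tokens the current model can still produce are sampled.
        tokens = [t for t, p in model.items() if p > 0]
        weights = [model[t] for t in tokens]
        # Generate a finite "corpus" from the current model.
        corpus = rng.choices(tokens, weights=weights, k=samples_per_gen)
        counts = Counter(corpus)
        # Refit: the next model only knows what appeared in the corpus.
        model = {t: counts[t] / samples_per_gen for t in probs}
        support_sizes.append(sum(1 for p in model.values() if p > 0))
    return support_sizes


if __name__ == "__main__":
    # A long-tailed distribution: a few common tokens and many rare ones.
    vocab = {f"common_{i}": 0.15 for i in range(5)}
    vocab.update({f"rare_{i}": 0.25 / 50 for i in range(50)})
    sizes = simulate_collapse(vocab, samples_per_gen=200, generations=30)
    print("tokens still representable per generation:", sizes)
```

Because a token that draws zero samples in one generation gets zero probability in the next, it can never come back, so the number of representable tokens can only shrink. The rare tail disappears first, which mirrors the early-collapse pattern the research describes.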

Here’s the irony: AI companies need Wikipedia to stay clean for their training datasets. Wikipedia is the 5th most visited site globally and appears in nearly every major training corpus, yet those same companies’ LLMs are the source of the pollution. If Wikipedia gets contaminated, every future AI model trained on web data suffers. This isn’t just about protecting Wikipedia’s reputation; it’s about preventing a downward spiral for the entire AI industry.

The Enforcement Paradox: Banning What You Can’t Detect

Wikipedia is refreshingly candid that AI detection tools don’t work reliably. Enforcement depends entirely on human volunteer moderators checking for policy violations: unsourced claims, hallucinated facts, breaches of neutral point of view. Stylistic similarity to AI writing alone won’t fly. Moderators must demonstrate actual content policy violations, not just argue that “this looks like ChatGPT.”

The Slashdot community nailed it: “What remains is the trivial matter of enforcement.” Another commenter noted a “large contingent of alleged humans who present AI output as original thought.” Wikipedia created WikiProject AI Cleanup to coordinate identification and removal efforts, but pages with smaller editor communities remain vulnerable. LLM output carries no reliable, universal watermark, so detection is entirely manual.

This raises the obvious sustainability question: can volunteer moderators keep up with enforcement as AI improves and becomes indistinguishable from human writing? The answer determines whether this ban is a principled stand or a temporary speed bump. For other platforms watching closely, the lesson is that policy enforcement does not require technical detection, but the volunteer burden is real.

What’s Banned, What’s Allowed

The policy prohibits generating or rewriting article content with LLMs, but allows two narrow exceptions. First, editors can use LLMs for basic copyediting of their own writing—grammar, punctuation, clarity. Second, LLMs can provide first-pass translation assistance. Both require human verification.

The policy warns that “LLMs can go beyond what you ask of them and change the meaning of text such that it is not supported by the sources cited,” even for simple copyediting tasks. The RfC discussion emphasized the asymmetry: generating AI content takes seconds, while verifying and cleaning it up takes hours. The 44-2 vote reflects overwhelming community consensus that AI authorship creates unsustainable work for volunteers.

The distinction matters. Wikipedia isn’t categorically anti-AI; it opposes AI authorship. Tools that assist human editors are acceptable. Tools that replace human expertise are not. This nuanced approach beats both blanket bans and blind acceptance, and other platforms debating AI policies should take note.

What Happens Next

The March 2026 TomWikiAssist incident, in which an autonomous agent authored multiple Wikipedia articles, showed that AI content creates real problems, not theoretical ones. Administrator Chaotic Enby successfully framed the RfC after earlier attempts stalled over the specifics of their wording. The community’s earlier rejection of the Wikimedia Foundation’s “Simple Article Summaries” AI tool (called a “ghastly idea” that would “erode trust”) showed that strong anti-AI sentiment predated the official ban.

This sets a precedent, and other platforms are watching: ICML 2026, the machine learning conference, has LLM policies for its review process; Gentoo Linux adopted a ban on AI-generated contributions in 2024; and Stack Overflow has restricted AI-generated answers. The question for community-driven platforms is no longer “Should we accept AI content?” but “Can we enforce quality standards against it?”

Wikipedia shows that major platforms can institutionally reject AI content. That challenges the narrative that AI-generated content is inevitable and platforms must accept it. Whether this approach holds long-term or evolves as AI technology improves remains to be seen. For now, Wikipedia has drawn a line: human expertise and verifiability trump automation and scale.