Reddit announced on March 25 that accounts flagged for suspicious behavior will have to verify they’re human with passkeys or biometrics, and that legitimate bots will get a new label. The platform already removes 100,000 automated accounts daily, and AI bot spam shut down competitor Digg just 11 days before the announcement. Starting March 31, bot developers can register for [App] labels, while suspicious accounts face targeted verification or restrictions.

This affects bot developers, community moderators using automation tools, and anyone building on Reddit’s API. It’s Reddit’s attempt to balance useful automation with spam control while preserving the pseudonymity that makes Reddit unique.

The Digg Warning: Why Reddit Acted Now

Digg shut down on March 14, 2026, just two months after relaunching, because AI bot spam overwhelmed the platform faster than its team could respond. Reddit’s announcement came 11 days later, which suggests it was watching closely and acting preemptively.

“We didn’t appreciate the scale, sophistication, or speed at which the bots found us,” Digg admitted in its post-mortem. The team concluded that “the internet is now populated in meaningful part by sophisticated AI agents.” For a vote-based platform like Digg, untrustworthy votes meant a broken model. Founder Kevin Rose is returning full-time in April for a “hard reset,” assuming the company can rebuild at all.

This isn’t theoretical anymore: bots can kill platforms. Reddit learned from a competitor’s failure and moved fast, and the 11-day gap suggests platform companies are watching each other scramble to solve the 2026 bot crisis before it’s too late.

How Verification Actually Works

Reddit’s verification is NOT mandatory for all users; it applies only to accounts flagged for suspicious behavior. The system analyzes account-level signals such as posting speed, activity patterns, and technical markers. Flagged users verify with on-device methods: passkeys stored with Apple or Google, hardware security keys such as YubiKey, or biometrics like Face ID. Accounts that fail verification may be restricted.
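Reddit has not published its detection model, so the sketch below only illustrates the flow described above: check a few account-level signals, ask flagged accounts for one on-device check, and restrict those that fail. The thresholds, the `AccountSignals` fields, and the `verify` callback (a stand-in for a passkey or Face ID prompt) are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable

# Hypothetical thresholds; Reddit's real signals and limits are not public.
MAX_POSTS_PER_HOUR = 30
MAX_ACTIVE_HOURS_PER_DAY = 20.0


@dataclass
class AccountSignals:
    posts_last_hour: int         # posting speed
    active_hours_per_day: float  # activity pattern: humans usually sleep
    automation_markers: int      # technical markers, e.g. scripted-client fingerprints


class Outcome(Enum):
    ALLOWED = "allowed"        # never flagged, so no verification requested
    VERIFIED = "verified"      # flagged, then passed the on-device check
    RESTRICTED = "restricted"  # flagged, then failed the check


def is_flagged(s: AccountSignals) -> bool:
    """Flag the account if any single signal looks automated."""
    return (
        s.posts_last_hour > MAX_POSTS_PER_HOUR
        or s.active_hours_per_day > MAX_ACTIVE_HOURS_PER_DAY
        or s.automation_markers > 0
    )


def review(s: AccountSignals, verify: Callable[[], bool]) -> Outcome:
    """Only flagged accounts are prompted; verify() stands in for a passkey or Face ID check."""
    if not is_flagged(s):
        return Outcome.ALLOWED
    # Simplification: the article says failing accounts *may* be restricted; here they always are.
    return Outcome.VERIFIED if verify() else Outcome.RESTRICTED


if __name__ == "__main__":
    human = AccountSignals(posts_last_hour=3, active_hours_per_day=8.0, automation_markers=0)
    spammy = AccountSignals(posts_last_hour=120, active_hours_per_day=23.5, automation_markers=2)
    print(review(human, verify=lambda: False))   # Outcome.ALLOWED (never prompted)
    print(review(spammy, verify=lambda: False))  # Outcome.RESTRICTED
```

Note that the unflagged account is never prompted at all, which is the sense in which verification is not mandatory for everyone.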

CEO Steve Huffman emphasized privacy: “Our aim is to confirm there is a person behind the account, not who that person is. Consequently, the goal is to increase transparency while preserving the anonymity that makes Reddit unique. You shouldn’t have to sacrifice one for the other.”

Reddit is threading the needle: stop bots without forcing real-name verification. Developers with legitimate bots shouldn’t be caught in the crossfire if they follow the rules. However, the detection algorithm isn’t public (to keep it from being gamed), so some uncertainty remains. If you’re building automation tools, expect to prove you’re human at least once.

Good Bots Get Labels, Bad Bots Get Banned

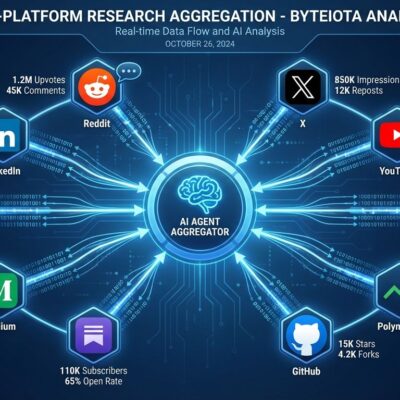

Starting March 31, automated accounts can display [App] labels on their profiles (not on individual posts). There are two types: “Developer Platform App” for bots built on Reddit’s official developer tools, and “App” for other compliant automation. Think AutoModerator, RemindMe bot, or other moderation tools: useful automation gets recognized instead of banned.
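To make the two label types concrete, here is a minimal model of how a label could attach to an account. The label names mirror the article; the function names, the compliance flag, and the bracketed display format are assumptions for illustration, not Reddit’s implementation. Because the label lives on the profile, nothing in the sketch touches individual posts.

```python
from enum import Enum
from typing import Optional


class Label(Enum):
    DEV_PLATFORM_APP = "Developer Platform App"  # built on Reddit's developer tools
    APP = "App"                                  # other compliant automation


def profile_label(is_automated: bool, on_dev_platform: bool, compliant: bool) -> Optional[Label]:
    """Pick the profile label for an account (hypothetical rules)."""
    if not is_automated:
        return None  # human accounts carry no label
    if not compliant:
        return None  # non-compliant bots get no label; they face removal instead
    return Label.DEV_PLATFORM_APP if on_dev_platform else Label.APP


def render_profile_header(username: str, label: Optional[Label]) -> str:
    """The label appears on the profile header only, not on each post."""
    return f"u/{username} [{label.value}]" if label else f"u/{username}"


if __name__ == "__main__":
    print(render_profile_header("example_bot", profile_label(True, False, True)))
    # u/example_bot [App]
```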

Reddit removes 100,000 accounts daily for spam and malicious activity, but many bots serve their communities by moderating spam, posting reminders, aggregating content, or translating discussions. The labeling system lets users tell helpful automation apart from manipulation. Developers who register bots before June may qualify for a “porting bounty,” an incentive to comply quickly.

This is enforcement with nuance, not a blanket ban. Moderation tools depend on bots, and Reddit’s free API access for moderators continues. Bot developers have a clear path: register, label, and follow the Responsible Builder Policy. Don’t manipulate votes, don’t spam, and be transparent about automation.

Privacy Trade-offs: Passkeys vs World ID

Reddit is prioritizing privacy-first verification: on-device passkeys and biometrics that confirm personhood without revealing identity. It is also “considering” Sam Altman’s World ID, which verifies people by scanning their irises with an orb, but that option is not preferred because of privacy concerns.

World ID has been banned or investigated in 10+ countries over privacy issues. Critics warn: “Once you link an unchangeable biometric like your eye to a global ID system, you can’t take it back. It’s the ultimate honeypot for surveillance.” Passkeys work differently: a key held on your device (something you have) is unlocked locally by a biometric (something you are) or a PIN, and the biometric match never leaves the device, so no centralized biometric database is created. With 87% of enterprises deploying passkeys in 2026, they are becoming an industry-standard approach.
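The privacy argument rests on how passkeys authenticate: the device keeps a private key, the server stores only the matching public key, and each login is a signature over a fresh server challenge. The toy sketch below shows that challenge-response idea with a Schnorr signature over a deliberately tiny group. Real passkeys (WebAuthn) use standardized algorithms such as ECDSA on the P-256 curve, and the local Face ID or PIN step that unlocks the key is only noted in a comment. The parameters and function names are illustrative assumptions, and the code is not secure.

```python
import hashlib
import secrets
from typing import Tuple

# Toy group: P = 2*Q + 1 with Q prime, and G = 4 generates the subgroup of order Q.
# Far too small to be secure; real passkeys use curves such as P-256.
P = 2039
Q = 1019
G = 4


def enroll() -> Tuple[int, int]:
    """On the device: create a key pair. Only the public key is sent to the server."""
    secret_key = secrets.randbelow(Q - 1) + 1  # in [1, Q-1]
    public_key = pow(G, secret_key, P)
    return secret_key, public_key


def _hash(r: int, challenge: bytes) -> int:
    """Fiat-Shamir hash of the commitment and the server's challenge, reduced mod Q."""
    digest = hashlib.sha256(r.to_bytes(2, "big") + challenge).digest()
    return int.from_bytes(digest, "big") % Q


def sign(secret_key: int, challenge: bytes) -> Tuple[int, int]:
    """On the device. A real authenticator would first require Face ID or a PIN locally."""
    k = secrets.randbelow(Q - 1) + 1  # fresh random nonce per signature
    r = pow(G, k, P)
    e = _hash(r, challenge)
    s = (k + secret_key * e) % Q
    return r, s


def verify(public_key: int, challenge: bytes, signature: Tuple[int, int]) -> bool:
    """On the server: check G^s == r * y^e (mod P) using only the public key."""
    r, s = signature
    e = _hash(r, challenge)
    return pow(G, s, P) == (r * pow(public_key, e, P)) % P


if __name__ == "__main__":
    secret_key, public_key = enroll()    # device keeps secret_key; server stores public_key
    challenge = secrets.token_bytes(32)  # server sends a fresh random challenge
    signature = sign(secret_key, challenge)
    print(verify(public_key, challenge, signature))  # True
```

Note what the server never receives: the secret key or any biometric data. That is the property the article contrasts with a centralized iris database.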

Reddit is making a deliberate choice: effective bot detection without destroying pseudonymity. Developers and users who value privacy should prefer passkeys over iris scans. Considering World ID suggests Reddit is keeping options open for different threat levels, but its stated preference matters: passkeys win on privacy.

What Developers Need to Do

If you run a Reddit bot, register it on Reddit’s Developer Platform before June to qualify for the [App] label and a potential porting bounty. Follow the Responsible Builder Policy: no vote manipulation, no spam, and no circumventing safety mechanisms. If your personal account gets flagged, verify once with a passkey or Face ID and keep using Reddit anonymously.

Reddit’s policy is explicit: “Apps must not manipulate Reddit’s features (e.g., voting, karma) or circumvent safety mechanisms (e.g., user blocking, account bans).” Using AI to write posts isn’t banned, but bots must disclose their automation through labels. Community moderators continue to get free API access for non-commercial moderation tools. The crackdown targets malicious automation, not helpful community tools.
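For developers, the quoted policy maps naturally onto a pre-flight check a bot can run before each action. The sketch below is hypothetical: the action names, `BotAction`, and `PolicyGate` are not part of any Reddit API, and a real bot would load its block list and registration status from the platform rather than hard-coding them.

```python
from dataclasses import dataclass, field
from typing import Set, Tuple

# Hypothetical action kinds that would manipulate voting or karma.
FORBIDDEN_ACTIONS = {"vote", "award_karma"}


@dataclass
class BotAction:
    kind: str         # e.g. "reply", "remove_spam", "vote"
    target_user: str  # account the action is aimed at


@dataclass
class PolicyGate:
    labeled: bool                                      # registered and showing its label?
    blocked_by: Set[str] = field(default_factory=set)  # users who have blocked this bot

    def check(self, action: BotAction) -> Tuple[bool, str]:
        """Return (allowed, reason) for a proposed action."""
        if not self.labeled:
            return False, "register the bot and display its label first"
        if action.kind in FORBIDDEN_ACTIONS:
            return False, "manipulates voting or karma"
        if action.target_user in self.blocked_by:
            return False, "would circumvent a user block"
        return True, "ok"


if __name__ == "__main__":
    gate = PolicyGate(labeled=True, blocked_by={"alice"})
    print(gate.check(BotAction("reply", "bob")))    # (True, 'ok')
    print(gate.check(BotAction("vote", "bob")))     # (False, 'manipulates voting or karma')
    print(gate.check(BotAction("reply", "alice")))  # (False, 'would circumvent a user block')
```

Returning a reason alongside the decision keeps the bot’s logs explicit about why an action was skipped, which also helps if Reddit ever asks.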

Action items: register bots, get labels, and don’t manipulate. Most developers building legitimate tools won’t be affected. Still, the June registration deadline creates urgency, so don’t wait until enforcement tightens.
