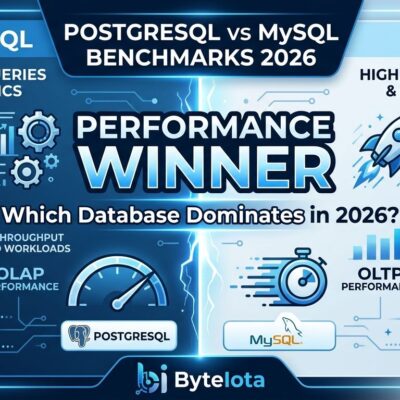

Recent 2026 benchmark studies reveal PostgreSQL is 3.72x faster than MySQL for complex queries and concurrent operations, but MySQL retains a 17% advantage for simple SELECT queries. The performance gap isn’t about which database is “better”—it’s about workload complexity. PostgreSQL’s execution time for 1 million record operations ranges from 0.6-0.8ms versus MySQL’s 9-12ms, a 13x difference. Under concurrent load, PostgreSQL maintains stable 0.7-0.9ms query times while MySQL degrades to 7-13ms. The 2026 decision framework is clear: complex queries and high-concurrency writes favor PostgreSQL, while simple primary key lookups and read-heavy OLTP still favor MySQL.

The Complexity Factor: Where Performance Diverges

The database wars narrative oversimplifies reality. PostgreSQL demonstrates a 3.72x throughput advantage for complex queries—23,441 queries per second versus MySQL’s 6,300 QPS. Yet MySQL wins simple SELECT queries by 17%, delivering 28,500 QPS compared to PostgreSQL’s 24,200 QPS. This isn’t a margin of error. It’s a fundamental architectural trade-off that determines which database fits your workload.
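As a quick sanity check, the headline ratios can be recomputed from the throughput figures quoted above. This is only arithmetic on the published numbers; the variable names are illustrative and nothing here re-runs a benchmark.

```python
# Throughput figures quoted above (queries per second).
complex_qps = {"postgresql": 23_441, "mysql": 6_300}
simple_qps = {"postgresql": 24_200, "mysql": 28_500}

# PostgreSQL's advantage on complex queries, expressed as a ratio.
complex_ratio = complex_qps["postgresql"] / complex_qps["mysql"]

# MySQL's advantage on simple SELECTs, relative to PostgreSQL's throughput.
simple_gap_qps = simple_qps["mysql"] - simple_qps["postgresql"]
simple_advantage_pct = 100 * simple_gap_qps / simple_qps["postgresql"]

print(f"complex-query ratio: {complex_ratio:.2f}x")
print(f"simple-SELECT gap: {simple_gap_qps} QPS ({simple_advantage_pct:.1f}%)")
```

The complex-query ratio comes out at 3.72x. The simple-SELECT gap is 4,300 QPS, just under 18% of PostgreSQL's throughput, which the article rounds to 17%.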

The gap widens dramatically under complexity. MDPI’s academic benchmark study shows PostgreSQL executing 1 million record operations in 0.6-0.8 milliseconds while MySQL takes 9-12 milliseconds—a 13x performance difference. The advantage isn’t subtle for analytical workloads, business intelligence queries, or applications with complex multi-table joins. PostgreSQL’s query optimizer makes better decisions on join strategies, parallelizes execution across CPU cores, and handles aggregations more efficiently.

However, MySQL’s advantage for simple operations matters for specific use cases. Content management systems like WordPress, e-commerce platforms with straightforward product lookups, and read-heavy applications dominated by primary key access benefit from MySQL’s optimized thread model. In the benchmark figures above, the 17% throughput advantage is roughly 4,300 additional simple queries per second, or more than 15 million per hour, which is meaningful for high-traffic web applications with uncomplicated data access patterns.

Concurrency Architecture: MVCC vs Locking Explained

PostgreSQL uses Multi-Version Concurrency Control in which ordinary readers and writers do not block each other. MySQL’s InnoDB combines MVCC with row locks and gap/next-key locks, a hybrid approach that can introduce unexpected blocking in production. Plain SELECT statements remain non-locking consistent reads, but under the default REPEATABLE READ isolation level, locking reads (SELECT ... FOR UPDATE, SELECT ... FOR SHARE) and range UPDATE or DELETE statements lock the gaps between index records as well as the records themselves. Another transaction that tries to insert into a locked range has to wait. Developers unaware of this behavior encounter bottlenecks when concurrent users modify data simultaneously.

The architectural difference is fundamental. PostgreSQL’s MVCC uses full-tuple versioning with xmin/xmax transaction IDs—each row version exists independently, allowing genuine non-blocking concurrency. MySQL’s InnoDB maintains a single base row and applies undo segments to reconstruct old versions. This works for read-mostly workloads but degrades under concurrent write pressure. In real-world concurrent operation tests, PostgreSQL maintains stable 0.7-0.9ms query performance while MySQL degrades to 7-13ms.
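A toy model may make the xmin/xmax idea more concrete. The sketch below is not PostgreSQL's actual visibility code: the `TupleVersion` and `Snapshot` classes and the `is_visible` rule are simplified assumptions that ignore in-progress transactions, a transaction's own writes, and aborted transactions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TupleVersion:
    value: str
    xmin: int                   # ID of the transaction that created this version
    xmax: Optional[int] = None  # ID of the transaction that superseded it, if any


@dataclass(frozen=True)
class Snapshot:
    committed: frozenset  # transaction IDs committed when the snapshot was taken


def is_visible(version, snapshot):
    """A version is visible if its creator committed and its remover did not."""
    created = version.xmin in snapshot.committed
    removed = version.xmax is not None and version.xmax in snapshot.committed
    return created and not removed


def read(versions, snapshot):
    """Return the value of the single version this snapshot can see, if any."""
    for version in versions:
        if is_visible(version, snapshot):
            return version.value
    return None


# Row history: transaction 100 inserted "draft", transaction 105 updated it.
versions = [
    TupleVersion("draft", xmin=100, xmax=105),
    TupleVersion("published", xmin=105),
]
old_snapshot = Snapshot(frozenset({100}))       # taken before 105 committed
new_snapshot = Snapshot(frozenset({100, 105}))  # taken after 105 committed

old_value = read(versions, old_snapshot)
new_value = read(versions, new_snapshot)
print(old_value, new_value)
```

Both versions stay on disk side by side, so the older snapshot keeps reading "draft" without waiting for the writer. That is the non-blocking property described above, and the leftover "draft" version is the kind of dead tuple that VACUUM later removes.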

The practical impact hits SaaS platforms, collaborative tools, and any application where multiple users modify data simultaneously. PostgreSQL’s write throughput surpasses MySQL’s as concurrency increases. MySQL often needs its isolation level lowered to READ COMMITTED for better concurrency, a configuration change that isn’t obvious to developers expecting MySQL’s “just works” reputation. PostgreSQL’s MVCC does require VACUUM for dead tuple cleanup, but that operational overhead is a worthwhile trade for non-blocking concurrency.
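To illustrate why the isolation level matters, here is a deliberately simplified model of InnoDB gap locking. The `GapLock` class and `insert_must_wait` function are hypothetical names for this sketch. It treats a gap lock as an open interval between two index keys and ignores record locks, lock modes, and the foreign-key and duplicate-key checks that still use gap locks under READ COMMITTED.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GapLock:
    owner: int  # hypothetical ID of the transaction holding the lock
    low: int    # index key just below the locked gap
    high: int   # index key just above the locked gap


def insert_must_wait(key, txn, locks, isolation):
    """Return True if inserting `key` would wait on another transaction's gap lock."""
    if isolation == "READ COMMITTED":
        # Ordinary searches and index scans take no gap locks at this level,
        # so the range read below would not have locked the gap at all.
        return False
    return any(lock.owner != txn and lock.low < key < lock.high for lock in locks)


# Transaction 1 ran a locking range read over index keys between 10 and 20.
locks = [GapLock(owner=1, low=10, high=20)]

blocked = insert_must_wait(15, txn=2, locks=locks, isolation="REPEATABLE READ")
outside = insert_must_wait(25, txn=2, locks=locks, isolation="REPEATABLE READ")
relaxed = insert_must_wait(15, txn=2, locks=locks, isolation="READ COMMITTED")
print(blocked, outside, relaxed)
```

In this model only the insert into the locked gap under REPEATABLE READ has to wait. The trade-off of READ COMMITTED is that phantom rows can appear between two reads in the same transaction.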

Query Optimizer Differences: Why PostgreSQL Wins Complex Workloads

PostgreSQL has a superior query optimizer that makes better decisions on large multi-table joins, considers more join strategies, and handles complex queries more efficiently. PostgreSQL 18 adds async I/O, with up to a 2-3x improvement in sequential scans, a significant enhancement for cloud environments with high-latency storage. MySQL’s optimizer has improved in MySQL 8.0, but it still requires more manual tuning for complex queries. Developers frequently resort to denormalization or application-layer query optimization to achieve acceptable MySQL performance for analytical workloads.

Indexing capabilities widen the gap further. PostgreSQL supports B-tree, Hash, GiST, SP-GiST, GIN, and BRIN index types, plus partial indexes and expression indexes, and its planner can combine several indexes in a single bitmap scan. MySQL relies primarily on B-tree indexes, with FULLTEXT and spatial indexes as more limited alternatives. Need to index JSON fields? PostgreSQL’s GIN indexes handle it natively. Geospatial queries? PostgreSQL’s GiST indexes excel. Filtered indexes for common WHERE clauses? PostgreSQL’s partial indexes deliver targeted performance improvements, and MySQL has no direct equivalent.
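The partial-index idea can be tried without a database server. The sketch below uses Python's built-in `sqlite3` module as a stand-in, because SQLite accepts the same `CREATE INDEX ... WHERE` form as PostgreSQL. It is not a PostgreSQL example: the `orders` table and index name are made up for illustration, and SQLite's planner output differs from PostgreSQL's `EXPLAIN`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT);
    -- Partial index: only rows with status = 'pending' are indexed.
    CREATE INDEX idx_pending_by_customer ON orders (customer_id)
        WHERE status = 'pending';
""")


def plan(sql):
    """Return the planner's description of how it would run `sql`."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(row[3] for row in rows)


# The first query repeats the index predicate; the second does not.
pending_plan = plan(
    "SELECT id FROM orders WHERE status = 'pending' AND customer_id = 42"
)
shipped_plan = plan(
    "SELECT id FROM orders WHERE status = 'shipped' AND customer_id = 42"
)
print(pending_plan)
print(shipped_plan)
```

The planner should pick the partial index only for the query whose WHERE clause implies `status = 'pending'`. The index stays small because it skips every row in any other status, which is where the targeted performance gain comes from.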

Real-world PostgreSQL 18 async I/O benchmarks demonstrate the performance leap. COUNT query execution dropped from 15,071ms with synchronous I/O to 10,051ms with the worker method and to 5,723ms with io_uring, speedups of about 1.5x and 2.6x respectively. The improvement applies to sequential scans, bitmap heap scans, and vacuum operations, which are critical for data warehousing and analytics workloads. InnoDB has long issued asynchronous I/O of its own (innodb_use_native_aio on Linux), so this change removes a PostgreSQL weakness rather than adding something MySQL lacks outright. For mixed analytical and transactional workloads, PostgreSQL still pulls ahead decisively because of better planning, parallel query execution, and mature vacuuming mechanisms.
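The speedup per I/O method follows directly from the three timings quoted above. The snippet below is a small sketch of that arithmetic, with illustrative variable names; it does not reproduce the benchmark itself.

```python
# COUNT query timings quoted above, in milliseconds, keyed by io_method.
count_query_ms = {"sync": 15_071, "worker": 10_051, "io_uring": 5_723}

baseline = count_query_ms["sync"]
speedups = {method: baseline / ms for method, ms in count_query_ms.items()}

for method, factor in speedups.items():
    print(f"{method:>8}: {factor:.2f}x")
```

On these numbers the worker method is about 1.5x faster than synchronous I/O and io_uring about 2.6x, so the 2-3x range applies to io_uring in this particular measurement.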

```ini
# postgresql.conf - Enable async I/O for 2-3x read performance
io_method = 'worker'    # Options: sync, worker, io_uring

# worker: Dedicated I/O worker processes
# io_uring: Kernel ring buffer (lower overhead)
```

Real-World Decision Framework: When to Choose Each Database

Choose PostgreSQL for new projects without legacy constraints, complex queries with multiple joins, high-concurrency writes, advanced data types like JSON and geospatial, analytics and data warehousing, and applications requiring the strictest ACID compliance. Industry consensus is clear: “If you are starting a new project today and you do not have a specific reason to choose MySQL, choose PostgreSQL.” The SQL feature set is richer, the extension ecosystem is unmatched, the query planner is smarter, and the community moves faster.

MySQL remains the right choice for specific scenarios. Existing MySQL ecosystems like WordPress installations benefit from staying with MySQL’s proven compatibility. Read-heavy applications with simple queries like content management systems, e-commerce product catalogs, and applications dominated by primary key lookups still favor MySQL’s optimized thread model. Teams with deep MySQL expertise and no PostgreSQL experience may find MySQL’s operational simplicity and shorter learning curve worth the performance trade-offs for complex queries.

Production use cases illustrate the divide. Instagram scales PostgreSQL for massive user data. Apple uses PostgreSQL for analytics. Conversely, Facebook relies on MySQL for its social graph. YouTube uses MySQL for video metadata. Netflix runs MySQL for content catalogs. Uber is a reminder that the choice is workload-specific: its engineering team publicly described moving core storage from PostgreSQL to a MySQL-based system in 2016. The pattern is clear: complex, evolving schemas and analytical workloads favor PostgreSQL; simple, read-heavy access patterns favor MySQL.

The wrong choice costs time and money. Developers building analytics platforms on MySQL encounter query optimizer limitations requiring denormalization workarounds. Teams deploying high-concurrency SaaS applications on MySQL discover blocking behavior under load. Conversely, simple WordPress blogs on PostgreSQL introduce unnecessary operational complexity. Analyze your workload: Are most queries simple or complex? Read-heavy or write-heavy? Does your schema evolve frequently? Do you need advanced data types? Answer these questions before choosing.
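The questions above can be turned into a rough checklist. The sketch below is one hypothetical encoding of the article's guidance, not an established scoring method. The `Workload` fields and the equal weighting of each signal are assumptions, and ties fall to PostgreSQL to mirror the "no specific reason to choose MySQL" rule quoted earlier.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    complex_queries: bool = False
    high_concurrency_writes: bool = False
    advanced_data_types: bool = False
    evolving_schema: bool = False
    existing_mysql_ecosystem: bool = False
    mostly_primary_key_reads: bool = False


def recommend(w):
    """Count the signals for each database; ties go to PostgreSQL."""
    postgres_signals = sum([
        w.complex_queries,
        w.high_concurrency_writes,
        w.advanced_data_types,
        w.evolving_schema,
    ])
    mysql_signals = sum([w.existing_mysql_ecosystem, w.mostly_primary_key_reads])
    return "MySQL" if mysql_signals > postgres_signals else "PostgreSQL"


wordpress_blog = Workload(existing_mysql_ecosystem=True, mostly_primary_key_reads=True)
analytics_saas = Workload(complex_queries=True, high_concurrency_writes=True,
                          advanced_data_types=True)

print(recommend(wordpress_blog))
print(recommend(analytics_saas))
print(recommend(Workload()))
```

A real decision would also weigh team expertise, hosting options, and migration cost, which a boolean checklist cannot capture.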

Key Takeaways

- PostgreSQL is 3.72x faster for complex queries (23,441 QPS vs 6,300 QPS) but MySQL wins simple SELECTs by 17% (28,500 QPS vs 24,200 QPS)—workload complexity determines the winner

- PostgreSQL’s MVCC provides non-blocking concurrency where ordinary readers and writers do not block each other, while InnoDB’s gap and next-key locks can cause unexpected blocking for locking reads and range writes under the default REPEATABLE READ isolation

- PostgreSQL 18’s async I/O delivers 2-3x performance improvement for sequential scans, widening the gap for analytical workloads and data warehousing

- Choose PostgreSQL for new projects, complex queries, high-concurrency writes, and advanced data types; choose MySQL for existing ecosystems, simple read-heavy workloads, and teams with deep MySQL expertise

- The “PostgreSQL is always better” narrative oversimplifies reality—analyze your specific workload characteristics before making architecture decisions