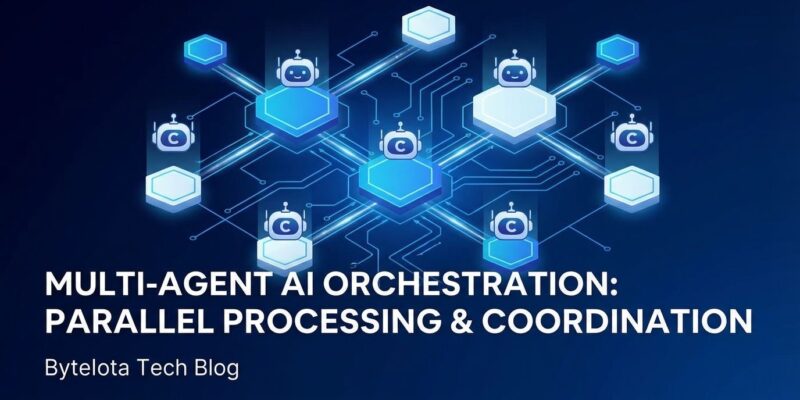

Claude Code revolutionized AI-assisted coding in 2025, but it has one glaring weakness: it’s single-threaded. Refactor 50 files? Migrate your database schema across 40 components? You’re stuck watching Claude work through each file sequentially, burning hours on tasks that should be parallelizable. oh-my-claudecode just changed that. This zero-config orchestration layer—currently trending #1 on GitHub with 858 stars gained in 24 hours—turns Claude Code into a multi-agent orchestra, delivering 3-5x speedup on large projects while cutting token costs by 30-50%.

The Performance Breakthrough Is Real

The numbers aren’t hype—they’re backed by real-world case studies. Take this React migration example: converting 40 class-based React components to functional components with Hooks. Manual approach? Two to three days of careful refactoring. With oh-my-claudecode’s Ultrapilot mode running 5 concurrent Claude Code workers? Two hours. Near-zero regression rate.

Or consider this overnight refactor story: a full architectural migration touching 47 files, database schema changes, and API endpoint updates. Estimated manual work: three weeks. Claude Code with orchestration: eight hours, production-ready, tests passing.

The secret? Ultrapilot mode runs up to 5 Claude Code instances in parallel, each working in isolated Git worktrees with a shared task list. A database migration that takes 4 hours single-threaded? 50 minutes with Ultrapilot. The speedup is real because the work is genuinely parallelizable—five agents refactoring separate modules simultaneously beats one agent working sequentially.
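To make the arithmetic behind that claim concrete, here is a minimal sketch that computes the speedup and per-worker efficiency from the figures quoted above (4 hours single-threaded, 50 minutes with 5 workers). The function names are illustrative and not part of oh-my-claudecode.

```python
def speedup(serial_minutes: float, parallel_minutes: float) -> float:
    """How many times faster the parallel run finished."""
    return serial_minutes / parallel_minutes


def parallel_efficiency(serial_minutes: float, parallel_minutes: float, workers: int) -> float:
    """Fraction of the ideal (linear) speedup actually achieved."""
    return speedup(serial_minutes, parallel_minutes) / workers


# Figures from the article: 4 hours (240 min) vs 50 minutes with 5 workers.
s = speedup(240, 50)
e = parallel_efficiency(240, 50, 5)
print(f"speedup: {s:.1f}x, efficiency: {e:.0%}")  # speedup: 4.8x, efficiency: 96%
```

A 4.8x speedup on 5 workers is close to linear, which is only plausible when the modules share very little state. Work with heavy cross-file dependencies would land nearer the low end of the 3-5x range.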

And the cost savings matter. oh-my-claudecode automatically routes simple tasks (file operations, boilerplate generation) to Claude Haiku and complex reasoning (architectural decisions, debugging) to Claude Opus. Result: 30-50% token savings without sacrificing quality. Smart routing means you pay Opus prices only when you need Opus intelligence.
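The routing idea can be sketched in a few lines. This is not oh-my-claudecode's actual router: the task kinds, the `Tier` enum, and the fallback rule are assumptions made for illustration, using only the categories named in the paragraph above.

```python
from enum import Enum


class Tier(Enum):
    HAIKU = "haiku"  # cheaper model for simple work
    OPUS = "opus"    # stronger model for complex reasoning


# Categories taken from the article; the string labels are hypothetical.
SIMPLE_KINDS = {"file_operation", "boilerplate"}
COMPLEX_KINDS = {"architecture", "debugging"}


def route(kind: str) -> Tier:
    """Pick a model tier for a task kind."""
    if kind in SIMPLE_KINDS:
        return Tier.HAIKU
    # Known complex kinds and anything unrecognized go to the stronger
    # model. Falling back to Opus is an assumption, chosen to favor quality.
    return Tier.OPUS


for kind in ("boilerplate", "debugging", "unknown"):
    print(kind, "->", route(kind).value)
```

The savings come from how much of a workload lands in the simple bucket, so the 30-50% figure depends on the task mix.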

Zero-Config Is the Killer Feature

Here’s what kills adoption of most orchestration tools: complexity. YAML configuration files. Custom DSLs. Memorizing magic keywords. oh-my-claudecode’s genius is avoiding all of it.

Installation from the Claude Code marketplace takes two steps: add the plugin, then run /omc-setup. No configuration files. No complex setup. From there, you just describe what you want in natural language:

autopilot: build a REST API for managing tasks

That’s it. The system’s 32 specialized agents (architects, debuggers, designers, QA testers, researchers) automatically delegate the work. Ask to “refactor the database”? The architect agent handles it. “Fix the UI colors”? The designer agent takes over. You don’t manage agents; the system does.

This zero-config approach is why oh-my-claudecode is trending #1 on GitHub today while more complex alternatives languish. Developers want power without overhead. oh-my-claudecode delivers.

Knowing Which Mode to Use

oh-my-claudecode offers five execution modes. Understanding when to use each of the four covered here is the key to effective orchestration.

Autopilot is single-threaded, autonomous execution—traditional Claude Code behavior. Use it for simple features, single-file edits, or quick prototypes. It’s your default when parallelization doesn’t matter.

Ultrapilot is maximum parallelism: up to 5 concurrent workers delivering 3-5x speedup. This is your go-to for large refactors (20+ files), framework migrations, multi-module updates, or test generation across suites. When time matters and the work is parallelizable, Ultrapilot is the answer.

Team mode uses a staged pipeline—plan, PRD, execute, verify, fix—ideal for complex projects requiring coordination. Think multi-component builds where agents need to share context and verify consistency. Quality gates matter here.

Ralph mode is persistent execution with verify-fix loops. It won’t stop until an architect verifies completion. Use it for critical migrations, production work, or anytime “good enough” isn’t acceptable.
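The verify-fix loop described above can be pictured roughly like this. It is a sketch of the control flow only, not Ralph mode's implementation: `execute`, `verify`, and `fix` are hypothetical callbacks, and the `max_rounds` cap is an added safety assumption that the article does not mention.

```python
from typing import Callable, List


def run_until_verified(
    execute: Callable[[], None],
    verify: Callable[[], List[str]],
    fix: Callable[[List[str]], None],
    max_rounds: int = 10,
) -> int:
    """Run the task, then alternate verify and fix until verify reports no problems.

    Returns the round on which verification passed.
    """
    execute()
    for round_number in range(1, max_rounds + 1):
        problems = verify()  # stands in for the architect's review
        if not problems:
            return round_number
        fix(problems)
    raise RuntimeError(f"still failing after {max_rounds} rounds")


# Toy usage: two outstanding problems, one resolved per fix pass.
pending = ["failing test", "missing index"]
rounds = run_until_verified(
    execute=lambda: None,
    verify=lambda: list(pending),
    fix=lambda problems: pending.pop(),
)
print(rounds)  # 3
```

The trade-off is time and tokens: every extra round costs another verification pass, which is why this mode suits critical work rather than quick prototypes.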

The pattern for designing agent teams mirrors microservices architecture. For a 50-file migration, split by module: 5 agents handling 10 files each, parallel processing, one agent managing schema changes, architect verifying consistency. For testing, assign one agent per test suite with parallel execution and shared coverage reports. For documentation, parallel file processing with a technical writer agent ensuring consistency.
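As a rough illustration of the 50-file split, the sketch below divides a file list into one contiguous batch per worker. The file paths are made up, and `split_into_batches` is a hypothetical helper, not an oh-my-claudecode API. Sorting first keeps files from the same directory together, which approximates splitting by module.

```python
from typing import List


def split_into_batches(files: List[str], workers: int) -> List[List[str]]:
    """Split files into `workers` contiguous batches of near-equal size."""
    if workers < 1:
        raise ValueError("workers must be at least 1")
    ordered = sorted(files)  # keeps each directory's files adjacent
    base, extra = divmod(len(ordered), workers)
    batches = []
    start = 0
    for i in range(workers):
        size = base + (1 if i < extra else 0)  # spread any remainder
        batches.append(ordered[start:start + size])
        start += size
    return batches


# Hypothetical project: 5 modules with 10 files each.
files = [f"src/module_{i // 10}/file_{i:02d}.py" for i in range(50)]
batches = split_into_batches(files, 5)
print([len(b) for b in batches])  # [10, 10, 10, 10, 10]
```

Cross-cutting work such as the schema changes still needs a single owner, as the paragraph above suggests, because batches that touch the same migration files would conflict.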

The 2026 Orchestration Shift

oh-my-claudecode isn’t just a tool—it’s part of 2026’s broader paradigm shift. As Addy Osmani notes, we’re moving from “AI that responds” to “AI that acts.” Claude Code now authors 4% of all public GitHub commits—roughly 135,000 per day—and projections put that at 20%+ by year’s end.

Agent orchestration tools are proliferating: Claude Colony, Vibe-Claude, ClaudeSwarm, and now oh-my-claudecode leading the pack. The prediction? By end of 2026, parallel multi-agent coding will be standard practice. Single-threaded AI assistance will feel as slow as single-core CPUs felt in 2010.

But here’s the honest tradeoff: 5x speed comes with 5x coordination decisions. You’re not just running five agents faster—you’re managing five agents making interdependent choices. Orchestration complexity becomes the bottleneck, not agent capability. The skills developers need to learn mirror distributed systems: how to design agent teams, when to parallelize versus serialize, managing coordination costs.

The debate over whether we need orchestration layers or just better individual agents misses the point. Specialization wins. Always has. A 32-agent system where each agent is optimized for one job—architect, debugger, designer, QA—outperforms a single super-agent trying to do everything. Division of labor works for humans. It works for AI agents. oh-my-claudecode’s architecture proves it.

Getting Started

If you’re already using Claude Code, adding oh-my-claudecode takes minutes. Install from the marketplace, run /omc-setup, and start with Autopilot mode for familiar single-threaded behavior. Once comfortable, scale to Ultrapilot for your next large refactor.

The zero-config promise is real. No YAML to write, no commands to memorize, no complex mental models. Describe your task. Let the agents orchestrate themselves. Collect your 3-5x speedup.