NVIDIA just admitted what everyone suspected but nobody expected them to say: GPUs alone can’t win the AI inference war. The company announced a $20 billion licensing deal with Groq in December 2025, integrating the startup’s Language Processing Unit technology into a new AI processor set to debut at GTC 2026 on March 16 in San Jose. OpenAI signed on as the first customer, committing $30 billion in dedicated inference capacity. For a company that built a $5 trillion empire on GPU dominance, this marks an unprecedented admission: their flagship product isn’t enough anymore.

The Training vs Inference Split

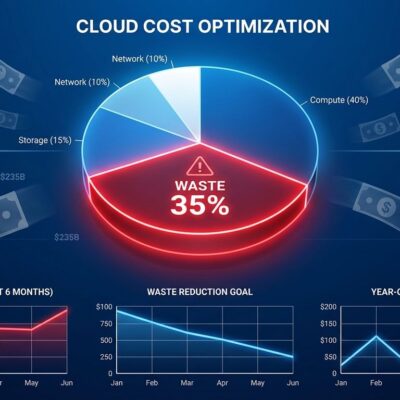

The AI chip market is fracturing into two distinct worlds. Training models happens once and demands raw parallel power. Inference—actually running those models billions of times daily—prioritizes speed, latency, and cost efficiency. Deloitte projects inference will account for two-thirds of all AI compute in 2026, up from just one-third in 2023. By 2030, analysts expect the inference market to dwarf training by 10x. The economics are brutal: training is one-time capital expenditure; inference is continuous operational cost at scale.
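To make the one-time versus continuous distinction concrete, here is a minimal sketch of the break-even arithmetic. The function name and the dollar figures are hypothetical placeholders, not numbers from Deloitte or any vendor. It only shows how quickly a recurring inference bill overtakes a fixed training bill.

```python
def months_until_inference_exceeds_training(training_cost: int,
                                            monthly_inference_cost: int) -> int:
    """First whole month in which cumulative inference spend exceeds
    the one-time training spend. Both inputs are whole dollars."""
    if training_cost < 0 or monthly_inference_cost <= 0:
        raise ValueError("training_cost must be >= 0 and monthly cost > 0")
    # After n months the cumulative spend is n * monthly_inference_cost.
    # The smallest n with n * monthly > training is floor(training / monthly) + 1.
    return training_cost // monthly_inference_cost + 1


# Hypothetical figures for illustration only.
TRAINING_COST = 100_000_000          # one-time spend, assumed
MONTHLY_INFERENCE_COST = 15_000_000  # recurring spend, assumed

print(months_until_inference_exceeds_training(TRAINING_COST,
                                              MONTHLY_INFERENCE_COST))
```

With these assumed inputs the recurring bill passes the training bill in month 7, and it keeps growing for as long as the model stays in service.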

The numbers back this up. Custom AI accelerators (ASICs) designed for inference are growing at 44.6% annually in 2026, nearly triple the 16.1% growth rate for GPUs. The specialized processor market is expanding at 22% versus GPUs at 19%. NVIDIA still commands 90% of AI accelerator spending, but that dominance is being challenged by a fundamental architectural reality: GPUs were built for training, not inference.

Why GPUs Fall Short at Inference

Here’s the problem NVIDIA won’t say out loud: GPUs excel at massive parallel workloads like training, but inference is inherently sequential. Generating text token by token creates memory bottlenecks that leave GPUs waiting instead of computing. Groq’s LPU architecture solves this with SRAM-based memory that’s 100 times faster than the HBM in GPUs. The result: 300 to 500 inference tokens per second with nearly 100% compute utilization, versus GPUs running at 30 to 40% efficiency during inference workloads.
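A common back-of-the-envelope model shows why memory speed, not raw compute, caps token-by-token generation. At batch size 1, each new token requires streaming roughly all of the model's weights from memory once, so bandwidth divided by weight size gives an upper bound on tokens per second. The sketch below assumes that simplified model. It ignores KV-cache traffic, batching, and multi-chip sharding, and the model size and bandwidth values are illustrative assumptions, not measured specs for any GPU or LPU.

```python
def max_decode_tokens_per_second(param_count: int,
                                 bytes_per_param: int,
                                 memory_bandwidth_bytes_per_s: int) -> float:
    """Upper bound on single-stream decode speed when every token
    requires reading all weights from memory once (simplified model)."""
    if param_count <= 0 or bytes_per_param <= 0:
        raise ValueError("model size must be positive")
    weight_bytes = param_count * bytes_per_param
    return memory_bandwidth_bytes_per_s / weight_bytes


# Illustrative assumptions, not vendor figures.
PARAMS = 70_000_000_000                 # a 70B-parameter model
BYTES_PER_PARAM = 2                     # 16-bit weights
BASE_BANDWIDTH = 2_800_000_000_000      # 2.8 TB/s, assumed off-chip memory

slow = max_decode_tokens_per_second(PARAMS, BYTES_PER_PARAM, BASE_BANDWIDTH)
fast = max_decode_tokens_per_second(PARAMS, BYTES_PER_PARAM, BASE_BANDWIDTH * 100)
print(f"bandwidth-bound ceiling: {slow:.0f} tokens/s")
print(f"with 100x faster memory: {fast:.0f} tokens/s")
```

In this model the ceiling scales linearly with memory bandwidth, which is why the argument centers on memory technology. Real systems raise throughput through batching and sharding, so treat these numbers as a way to reason about the bottleneck, not as a benchmark.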

If you’re running AI inference at scale, you’re paying for that inefficiency. OpenAI alone spends $1.8 billion annually on inference with an estimated 50% margin. Every millisecond of latency degrades user experience. Every wasted compute cycle increases costs. Specialized chips aren’t a luxury; they’re an economic necessity at billion-request scale.

Why NVIDIA Paid $20 Billion

NVIDIA could have built competing technology in-house. Instead, they licensed Groq’s architecture and hired its founder. Jonathan Ross created Google’s Tensor Processing Unit before founding Groq in 2016 to build something fundamentally different: a deterministic, inference-optimized processor. NVIDIA’s deal brings Ross on board as Chief Software Architect along with Groq’s engineering team, while Groq remains independent and GroqCloud continues operating.

This isn’t weakness—it’s NVIDIA reading the market correctly. The GTC reveal on March 16 will show whether they can integrate Groq’s technology without cannibalizing their GPU business. OpenAI’s $30 billion commitment suggests the answer matters less than the execution. When the biggest AI consumer in the world needs specialized inference chips, everyone else will follow.

The Industry Follows Suit

As VentureBeat noted, NVIDIA just admitted the general-purpose GPU era is ending. AMD acquired Untether AI’s engineering team and is launching its MI400 series in 2026. Intel is pursuing SambaNova at a reported $1.6 billion valuation while shipping its 18A chips and Xeon 6 processors with built-in AI acceleration. Hyperscalers like AWS, Google, and Microsoft are designing custom inference ASICs. The Hacker News community is divided: is NVIDIA stifling innovation by neutralizing a competitor, or smartly adapting to market reality? Probably both.

What This Means for Developers

The era of one-size-fits-all GPUs is over. Training workloads will keep using GPUs for their parallel processing muscle. Inference workloads are moving to specialized chips optimized for sequential operations, low latency, and cost efficiency. If you’re building AI applications, your infrastructure decisions just got more complex. Choosing between GPUs and custom accelerators is now workload-specific: fast-changing multi-model deployments favor GPU flexibility; stable, high-volume inference favors specialized silicon.
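That rule of thumb can be written down as a small decision helper. This is a sketch only: the `Workload` fields, the thresholds, and the two labels are hypothetical choices made for illustration, and a real decision would also weigh pricing, availability, and software support.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them to your own traffic and release cadence.
HIGH_VOLUME_REQUESTS_PER_DAY = 10_000_000
MAX_STABLE_UPDATES_PER_MONTH = 1


@dataclass
class Workload:
    is_training: bool
    distinct_models: int          # how many different models are served
    model_updates_per_month: int  # how often the served models change
    requests_per_day: int


def suggest_accelerator(w: Workload) -> str:
    """Return "gpu" or "specialized" following the article's heuristic."""
    if w.is_training:
        return "gpu"  # training keeps using GPUs for parallel throughput
    stable = (w.distinct_models == 1
              and w.model_updates_per_month <= MAX_STABLE_UPDATES_PER_MONTH)
    high_volume = w.requests_per_day >= HIGH_VOLUME_REQUESTS_PER_DAY
    # Only stable, high-volume inference justifies specialized silicon here;
    # everything else defaults to the more flexible option.
    return "specialized" if stable and high_volume else "gpu"


print(suggest_accelerator(Workload(False, 1, 0, 50_000_000)))
print(suggest_accelerator(Workload(False, 12, 8, 50_000_000)))
```

The helper defaults to GPUs and requires both stability and volume before recommending specialized chips, reflecting the idea that flexibility is the safer choice when the workload is still changing.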

NVIDIA’s $20 billion bet validates what the market already knew. AI infrastructure is disaggregating into training and inference, each demanding different chips. The company that owns 90% of the AI accelerator market just acknowledged their flagship product can’t do everything. That admission, more than the dollar figure, signals where the industry is headed. GTC 2026 on March 16 will reveal whether NVIDIA can execute this pivot—or whether they just paid $20 billion to prove their critics right.