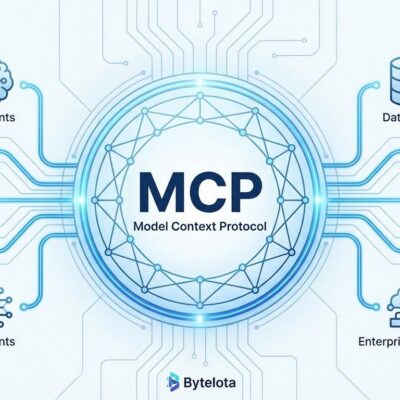

One year after Anthropic launched the Model Context Protocol, it’s become the industry standard for AI agent integrations. The November 25, 2025 spec release shows why: a Tasks API for long-running operations, simplified OAuth for enterprise security, and full backward compatibility. In twelve months, MCP went from an experimental protocol to powering OpenAI’s ChatGPT, Google’s Gemini, and a registry of nearly 2,000 servers.

Tasks API: Real Agent Workflows, Not Just Request/Response

The Tasks API solves MCP’s long-running operation problem. Before it, agents could only handle quick request/response calls, and anything longer required a custom workaround. Developers had to split each operation into three separate tools: one to start the job, one to poll its status, and one to retrieve the results. Every integration reinvented the same pattern.
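For contrast, here is a minimal sketch of that pre-Tasks workaround, assuming a single in-process server. The class and the tool names `start_job`, `job_status`, and `job_result` are hypothetical, not part of MCP or any SDK; each `job_status` call simulates one unit of progress so the polling loop terminates.

```python
import itertools
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Job:
    """Ad-hoc job record; before Tasks, each server invented its own shape."""
    job_id: str
    steps_left: int
    result: Optional[str] = None


class ThreeToolServer:
    """Long-running work split into three hypothetical tools: start, status, result."""

    def __init__(self) -> None:
        self._jobs: Dict[str, Job] = {}
        self._ids = itertools.count(1)

    def start_job(self, steps: int) -> str:
        # Tool 1: kick off the work and return an ID the agent must keep track of.
        if steps < 1:
            raise ValueError("steps must be >= 1")
        job_id = f"job-{next(self._ids)}"
        self._jobs[job_id] = Job(job_id, steps)
        return job_id

    def job_status(self, job_id: str) -> str:
        # Tool 2: the agent polls this; each call simulates one unit of progress.
        job = self._jobs[job_id]
        if job.steps_left > 0:
            job.steps_left -= 1
            if job.steps_left == 0:
                job.result = f"{job_id} done"
        return "done" if job.steps_left == 0 else "running"

    def job_result(self, job_id: str) -> str:
        # Tool 3: only valid once polling has reported "done".
        result = self._jobs[job_id].result
        if result is None:
            raise RuntimeError("job still running")
        return result


server = ThreeToolServer()
job_id = server.start_job(steps=3)
while server.job_status(job_id) == "running":
    pass  # a real agent would sleep or do other work between polls
print(server.job_result(job_id))  # job-1 done
```

An agent using this had to carry `job-1` across turns and call `job_status` in a loop, and the next server it talked to would name and shape all three tools differently.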

Tasks introduces a clean “call-now, fetch-later” model. An agent starts a task, does other work, and checks back when the result is ready. The server tracks each task through a fixed set of states: working, input_required, completed, failed, or cancelled. No per-integration job-ID schemes. No custom polling tools.
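The lifecycle can be modeled as a small state machine. The sketch below is illustrative only: `TaskStatus`, `TaskStore`, and the transition table are hypothetical names, and the allowed transitions (the two active states may switch between each other or move to any terminal state, and terminal states are final) are an assumption modeled on the state list above, so check the spec before relying on them.

```python
import uuid
from enum import Enum
from typing import Any, Dict


class TaskStatus(str, Enum):
    WORKING = "working"
    INPUT_REQUIRED = "input_required"
    COMPLETED = "completed"
    FAILED = "failed"
    CANCELLED = "cancelled"


TERMINAL = {TaskStatus.COMPLETED, TaskStatus.FAILED, TaskStatus.CANCELLED}

# Assumed transition table: active states can switch between each other or end;
# terminal states have no entry, so nothing can leave them.
ALLOWED = {
    TaskStatus.WORKING: {TaskStatus.INPUT_REQUIRED} | TERMINAL,
    TaskStatus.INPUT_REQUIRED: {TaskStatus.WORKING} | TERMINAL,
}


class TaskStore:
    """Hypothetical server-side store: the server, not the agent, owns task state."""

    def __init__(self) -> None:
        self._status: Dict[str, TaskStatus] = {}
        self._results: Dict[str, Any] = {}

    def create(self) -> str:
        # "Call now": the caller gets an ID back immediately; work continues elsewhere.
        task_id = str(uuid.uuid4())
        self._status[task_id] = TaskStatus.WORKING
        return task_id

    def transition(self, task_id: str, new: TaskStatus, result: Any = None) -> None:
        current = self._status[task_id]
        if new not in ALLOWED.get(current, set()):
            raise ValueError(f"illegal transition {current.value} -> {new.value}")
        self._status[task_id] = new
        if new is TaskStatus.COMPLETED:
            self._results[task_id] = result

    def status(self, task_id: str) -> TaskStatus:
        return self._status[task_id]

    def result(self, task_id: str) -> Any:
        # "Fetch later": a result exists only once the task has completed.
        if self._status[task_id] is not TaskStatus.COMPLETED:
            raise RuntimeError(f"task is {self._status[task_id].value}, no result yet")
        return self._results[task_id]


store = TaskStore()
task_id = store.create()
store.transition(task_id, TaskStatus.COMPLETED, result="report.pdf")
print(store.status(task_id).value, store.result(task_id))  # completed report.pdf
```

Because the server owns the store, a client that disconnects and comes back only needs the task ID to fetch the current status or the final result.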

This unlocks production use cases that take hours, not seconds. Healthcare AI can run a 45-minute molecular structure analysis for drug discovery. Enterprise teams are using it for SDLC automation, and Amazon is running complex business processes across its organization. Research agents can conduct deep investigations across multiple sources without blocking. DevOps teams can build CI/CD pipelines with approval gates and status updates.

The real power: tasks can pause mid-execution to request user input, send real-time progress updates, and handle cancellation gracefully. A research agent might analyze data for hours, pause to ask the user which direction to explore deeper, then continue. Temporal already built a production implementation showing this isn’t theory—it’s shipping.
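The pause, progress, and cancel pattern can be sketched in-process with a Python generator: the task yields progress events, yields a question when it needs the user, and receives the answer through `send()`. This is an analogy for the behavior described above, not MCP’s wire format; `research_task`, `run`, `Progress`, and `NeedInput` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Generator, List, Optional, Tuple, Union


@dataclass
class Progress:
    done: int
    total: int


@dataclass
class NeedInput:
    question: str


Event = Union[Progress, NeedInput]


def research_task(sources: List[str]) -> Generator[Event, Optional[str], str]:
    """Hypothetical long-running task: reports progress, then pauses for a user choice."""
    for i, _source in enumerate(sources, start=1):
        # (the actual analysis of each source is elided)
        yield Progress(i, len(sources))
    direction = yield NeedInput("Which finding should I dig into?")
    return f"deep dive on {direction}"


def run(
    task: Generator[Event, Optional[str], str],
    answer: Callable[[str], str],
    cancel_after: Optional[int] = None,
) -> Tuple[str, Optional[str], List[str]]:
    """Drive a task, relaying progress, answering questions, and honoring cancellation."""
    progress_log: List[str] = []
    reply: Optional[str] = None
    steps = 0
    try:
        while True:
            event = task.send(reply)
            reply = None
            steps += 1
            if cancel_after is not None and steps > cancel_after:
                # close() raises GeneratorExit at the paused yield, so the task
                # could release resources in a finally block before stopping.
                task.close()
                return "cancelled", None, progress_log
            if isinstance(event, Progress):
                progress_log.append(f"{event.done}/{event.total}")
            else:
                reply = answer(event.question)  # the task stays paused until this returns
    except StopIteration as stop:
        return "completed", stop.value, progress_log


print(run(research_task(["a", "b"]), lambda q: "pricing"))
# ('completed', 'deep dive on pricing', ['1/2', '2/2'])
print(run(research_task(["a", "b"]), lambda q: "pricing", cancel_after=1))
# ('cancelled', None, ['1/2'])
```

The driver plays the role a client would: it surfaces progress to the user, blocks the task only while a question is open, and can stop it at any yield point.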

When OpenAI Adopts Your Competitor’s Protocol

OpenAI integrating MCP into ChatGPT and its Agents SDK in March 2025 was the validation moment. A company doesn’t adopt a rival’s open standard unless it’s genuinely better than building its own. Sam Altman said it directly: “People love MCP and we are excited to add support across our products.”

Google DeepMind followed in April 2025, confirming MCP support for Gemini models. GitHub built an MCP server to automate engineering processes. Hugging Face created one for model management and dataset search. Postman, Notion, Stripe—they’re all building MCP servers now.

The MCP Registry launched in September with an initial batch of servers. By November, it had close to 2,000 entries. That’s 407% growth in two months. The community includes 2,900+ Discord contributors, with 100+ new developers joining weekly. Seventeen specification enhancement proposals shipped in a single quarter.

GitHub’s CPO called it an evolution “from experiment to widely adopted industry standard.” Hugging Face’s CTO described MCP as “the natural language for AI integration.” An AWS VP noted that “MCP solves the fragmentation that held agents back.” When your competitors are publicly endorsing your protocol, you’ve won.

OAuth and Backward Compatibility Seal the Deal

Authorization was MCP’s remaining friction point. The new spec simplifies OAuth with URL-based client registration, so clients no longer have to go through Dynamic Client Registration, while maintaining security. Machine-to-machine flows get the OAuth client credentials grant. Cross App Access enables enterprise single sign-on across all authorized MCP servers.
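To make URL-based client registration concrete: the client’s ID is itself an HTTPS URL pointing at a JSON metadata document the client publishes, and the authorization server fetches that document instead of accepting a registration call. The sketch below shows plausible server-side checks under that assumption. `validate_url_client` is hypothetical, the `fetch` callable stands in for an HTTPS GET so the example runs offline, and the field names (`client_id`, `client_name`, `redirect_uris`) follow standard OAuth client metadata.

```python
import json
from typing import Any, Callable, Dict
from urllib.parse import urlparse


def validate_url_client(
    client_id: str, redirect_uri: str, fetch: Callable[[str], str]
) -> Dict[str, Any]:
    """Hypothetical authorization-server check for a URL-based client ID."""
    parsed = urlparse(client_id)
    # The client ID is itself an HTTPS URL; there is no registration endpoint call.
    if parsed.scheme != "https" or not parsed.netloc:
        raise ValueError("client_id must be an https URL")
    metadata = json.loads(fetch(client_id))
    # The document must claim exactly the URL it was fetched from.
    if metadata.get("client_id") != client_id:
        raise ValueError("metadata client_id does not match its URL")
    # Redirects are limited to URIs the client itself published.
    if redirect_uri not in metadata.get("redirect_uris", []):
        raise ValueError("redirect_uri is not listed in the client metadata")
    return metadata


# Offline stand-in for the client's published metadata document.
DOC = {
    "client_id": "https://app.example.com/oauth/client.json",
    "client_name": "Example Agent",
    "redirect_uris": ["http://127.0.0.1:8765/callback"],
}
meta = validate_url_client(
    DOC["client_id"], "http://127.0.0.1:8765/callback", lambda url: json.dumps(DOC)
)
print(meta["client_name"])  # Example Agent
```

A production server would also cache fetched documents, bound their size, and guard against server-side request forgery when fetching arbitrary URLs; those checks are omitted here.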

URL Mode Elicitation is the clever part—secure credential collection through browser-based flows without exposing credentials to the MCP client. This enables PCI-compliant payment processing and external OAuth integrations. Stripe’s MCP server is now viable for production.
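Here is a rough sketch of the message flow, assuming a JSON-RPC `elicitation/create` request whose params carry `mode: "url"`, a `url`, a `message`, and an `elicitationId`. Treat those field names as assumptions based on the spec’s URL-mode elicitation, and both helper functions as hypothetical. The point the sketch makes is structural: the client’s reply carries only the user’s accept or decline decision, because the credentials are typed into the server’s own page.

```python
import itertools
import uuid
from typing import Any, Callable, Dict
from urllib.parse import urlparse

_request_ids = itertools.count(1)


def url_elicitation_request(url: str, message: str) -> Dict[str, Any]:
    """Server -> client request asking the user to open a URL (field names assumed)."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "elicitation/create",
        "params": {
            "mode": "url",
            "elicitationId": str(uuid.uuid4()),
            "url": url,
            "message": message,
        },
    }


def handle_elicitation(
    request: Dict[str, Any], open_in_browser: Callable[[str], bool]
) -> Dict[str, Any]:
    """Client side: show the URL and report consent; never touches the credentials."""
    params = request["params"]
    if params.get("mode") != "url":
        raise ValueError("this sketch only handles URL-mode elicitation")
    if urlparse(params["url"]).scheme != "https":
        raise ValueError("refusing to open a non-HTTPS URL")
    # The user types card numbers or passwords on the server's own page, so
    # nothing sensitive ever flows back through this function.
    opened = open_in_browser(params["url"])
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"action": "accept" if opened else "decline"},
    }


req = url_elicitation_request("https://pay.example.com/checkout/123", "Complete payment in your browser")
print(handle_elicitation(req, lambda url: True)["result"])  # {'action': 'accept'}
```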

Everything is backward compatible. Existing implementations keep working, and all new features are optional. The release candidate had a 14-day validation window, from November 11 to 25, giving client implementors time to test before the anniversary release. Developers can add Tasks support when they’re ready, not because the protocol forced a migration.
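Backward compatibility rests on negotiation at connection time. A minimal sketch, assuming the `initialize` rule that the server echoes the client’s requested protocol version if it supports it and otherwise offers its own newest version, and assuming Tasks are gated by a `tasks` capability that both sides must advertise. The function names are hypothetical.

```python
from typing import Any, Dict, Sequence, Tuple

# Versions this hypothetical server speaks, newest first.
SERVER_VERSIONS: Tuple[str, ...] = ("2025-11-25", "2025-06-18", "2025-03-26", "2024-11-05")


def negotiate_version(client_version: str, server_versions: Sequence[str] = SERVER_VERSIONS) -> str:
    """Server side: echo the client's version if supported, else offer our newest one."""
    if client_version in server_versions:
        return client_version
    return server_versions[0]


def client_accepts(server_version: str, client_versions: Sequence[str]) -> bool:
    """Client side: if the server's answer isn't a version we speak, we disconnect."""
    return server_version in client_versions


def tasks_enabled(client_caps: Dict[str, Any], server_caps: Dict[str, Any]) -> bool:
    """Optional features stay off unless both sides advertise them (capability name assumed)."""
    return "tasks" in client_caps and "tasks" in server_caps


print(negotiate_version("2025-06-18"))  # 2025-06-18
print(negotiate_version("2099-01-01"))  # 2025-11-25
```

An older client that asks for `2025-06-18` keeps getting `2025-06-18` and simply never sees Tasks; nothing changes for it until both sides opt in.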

The HTTP of AI Agents

MCP is becoming what HTTP became for the web: the standard everyone builds on because fragmentation is more expensive than convergence. If you’re building AI agents, this is the protocol to use. The ecosystem is mature: check the nearly 2,000 servers in the registry before building a custom integration.

The speed matters. Most standards take years to gain adoption. MCP became the industry standard in under twelve months because it solved real problems developers faced daily. Tasks API handles long-running operations. OAuth handles enterprise security. Backward compatibility handles migration risk. When a protocol removes friction instead of adding it, adoption follows.

The November 25 spec release isn’t just an anniversary milestone. It’s MCP transitioning from “promising new standard” to “production infrastructure.” The companies building on it—OpenAI, Google, GitHub, AWS—aren’t experimenting. They’re shipping.