Harmonic AI, co-founded by Robinhood CEO Vlad Tenev, raised $120 million in Series C funding at a $1.45 billion valuation in November 2025. The company’s pitch: eliminate AI hallucinations entirely through formal mathematical verification. While OpenAI, Google, and Anthropic chase sub-1% hallucination rates with bigger models, Harmonic claims it has achieved 0%, provably, by requiring AI to output machine-checkable mathematical proofs instead of probabilistic text. Its Aristotle model won gold at the 2025 International Mathematical Olympiad with formally verified solutions, and investors have now valued the company at $1.45 billion on the premise that “proof beats probability” for high-risk AI applications.

The Fundamental Problem OpenAI Can’t Solve

OpenAI’s best models boast 0.7-0.9% hallucination rates. Google’s Gemini-2.0-Flash hits similar numbers. Impressive engineering, sure. But for aerospace engineers certifying flight software or banks verifying fraud detection algorithms, a “0.9% error rate” isn’t impressive; it’s disqualifying. Harmonic’s Aristotle delivers 0% hallucination rates on verified outputs, not through better prompting or bigger models, but through mathematical proof.

The gap matters. A bank using AI to detect fraud cannot rely on a model that merely “thinks” it is right; it needs a model that can mathematically prove its assertions comply with regulatory code. Boeing doesn’t ship flight-critical software with “probably correct” verification; it certifies that software under DO-178C, whose DO-333 supplement covers formal methods. Harmonic applies the same rigor to AI.

The numbers justify the approach. 47% of enterprise AI users made at least one major business decision based on hallucinated content in 2024. Knowledge workers now spend 4.3 hours per week fact-checking AI outputs. AI coding assistants introduce false APIs, insecure settings, and fake dependencies that trigger compliance failures and cyberattacks. The hallucination crisis isn’t theoretical—it’s measurable and expensive.

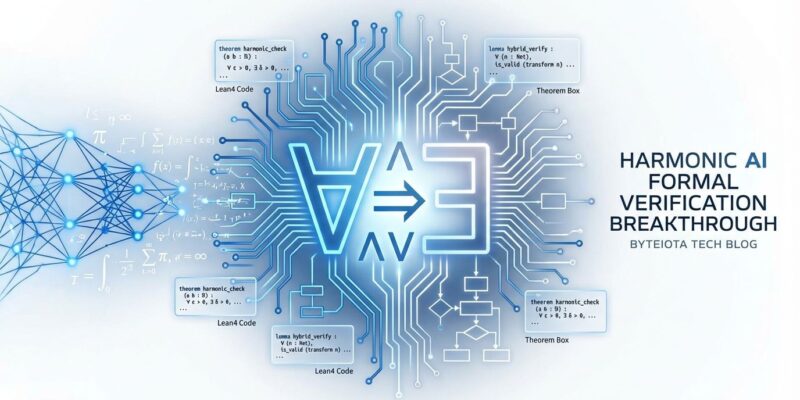

Harmonic’s approach leverages Lean4, an open-source theorem prover that won the 2025 ACM SIGPLAN Programming Languages Software Award for its impact on mathematics, hardware verification, and AI. Mathlib, Lean4’s community mathematics library, contains 210,000+ formalized theorems and 100,000+ definitions. Amazon uses Lean4 to verify cloud infrastructure. Blockchain developers use it to verify consensus protocols. Mathematician Terence Tao uses it with AI assistance to formalize cutting-edge math. This isn’t experimental tech. It’s mature, proven, and production-ready.

How Harmonic’s Hybrid Approach Actually Works

Harmonic isn’t anti-neural-network. It’s hybrid: neural networks for intent translation, formal solvers for validation. The workflow:

- User asks a question in natural language

- Neural network translates the problem to Lean4 code (mathematical representation)

- AI generates a solution as a Lean4 proof

- Lean4 proof checker algorithmically verifies correctness—no AI involved in verification

- Only mathematically proven solutions return to the user
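To make the workflow concrete, here is a minimal Lean 4 sketch of what steps 2 through 4 could look like for the question “is the sum of two even numbers always even?” This is an illustration only, not Harmonic’s actual output: the theorem name and the way evenness is encoded are assumptions, and it assumes a recent Lean 4 toolchain where the `omega` tactic is available.

```lean
-- Step 2 (assumed translation): the natural-language question restated
-- as a precise Lean 4 theorem. The name is hypothetical.
theorem sum_of_evens_is_even (a b : Nat)
    (ha : a % 2 = 0) (hb : b % 2 = 0) : (a + b) % 2 = 0 := by
  -- Step 3: the candidate proof. `omega` is a decision procedure for
  -- linear arithmetic over Nat and Int, including `%` by a literal.
  omega

-- Step 4: if this file compiles, Lean's kernel has accepted the proof.
-- `#print axioms` lists the axioms the proof ultimately depends on.
#print axioms sum_of_evens_is_even
```

If the generated proof were wrong or incomplete, the file would simply fail to compile, which is the mechanism behind returning only proven answers in step 5.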

Harmonic explains it simply: “The elimination of hallucinations comes directly from the fact that we require our system to output reasoning as code instead of reasoning as English.” Natural language reasoning is probabilistic. Mathematical proofs are not.

Compare this to OpenAI’s o1 approach. OpenAI’s reasoning models use chain-of-thought processing to improve accuracy, achieving 74% accuracy on mathematical reasoning benchmarks. But they stay entirely within the neural network paradigm, so their outputs still require human verification. OpenAI’s o3 hallucinated 33% of the time on the PersonQA benchmark, worse than o1’s 16%. Bigger models don’t remove the fundamental limitation: LLMs generate statistically likely text, not truth.

Harmonic’s Aristotle won gold at the 2025 IMO by solving 5 of 6 problems with formally verified solutions. OpenAI and Google DeepMind also won gold, but their solutions were natural language reasoning requiring human verification. Harmonic’s solutions were machine-verified mathematical proofs. That distinction separates “impressive demo” from “deploy in aerospace systems.”

Formal verification proves correctness for all possible cases, not just tested cases. Traditional testing checks specific inputs: “Does 2 + 2 = 4? Does 3 + 3 = 6?” Formal verification proves mathematical properties: “Addition is commutative for all integers.” DO-178C certification for flight software is built around requirements-based testing with structural coverage, and its DO-333 supplement lets formal proofs stand in for some of that testing by showing that code satisfies a safety property under all conditions rather than only under the tested ones. Harmonic brings that rigor to AI.
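The contrast between testing and proving can be written directly in Lean 4. The sketch below uses only core Lean; the theorem name `add_comm_all` is a hypothetical label chosen for this example.

```lean
-- Testing-style checks: each line covers exactly one input.
example : 2 + 2 = 4 := rfl
example : 3 + 3 = 6 := rfl

-- Proof-style guarantee: one statement covers every pair of integers.
-- `Int.add_comm` is the core library lemma for commutativity of addition.
theorem add_comm_all (a b : Int) : a + b = b + a :=
  Int.add_comm a b
```

The first two lines could pass while a bug hides at some untested input. The theorem leaves no untested inputs, because it quantifies over all of them.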

The $1.45B Bet on High-Risk Sectors

Harmonic targets sectors where “probably correct” isn’t good enough:

- Aerospace: Flight-critical software is certified under DO-178C, which recognizes formal verification through its DO-333 supplement. Commercial aerospace uses tools like SCADE and Astrée with near-zero false positives.

- Finance: Fraud detection, trade verification, regulatory compliance. Financial systems need mathematical proof of assertions, not probabilistic confidence.

- Healthcare: Medical device software, diagnostic AI. Errors in medical AI cost lives, not just money.

- Blockchain: Smart contract verification, consensus protocol correctness. Exploits in DeFi protocols have drained billions; formal verification can rule out whole classes of them before deployment.

The Series C was led by Ribbit Capital, a fintech-focused investor. That’s a signal. Harmonic’s valuation jumped from $875 million in July 2025 to $1.45 billion in November 2025, a roughly 66% increase in four months. The market isn’t betting on “maybe formal verification catches on someday.” It’s betting on regulatory requirements and compliance mandates forcing adoption in trillion-dollar sectors.

Current AI hallucination rates show why. While leading models hit sub-1% rates on some benchmarks, OpenAI’s reasoning models perform far worse on others: on the PersonQA benchmark, o3 hallucinated 33% of the time and o4-mini 48%. The industry’s “scale solves hallucinations” narrative is failing. Harmonic’s $1.45 billion valuation says investors believe mathematical proof is the answer.

What Developers Need to Know

Formal verification isn’t staying in academia. It’s integrating into developer tools. Lean4 is becoming a competitive edge in AI, with new benchmarks like FormalStep and VeriBench driving research. DeepSeek released open-source Lean4 theorem proving models in April 2025, democratizing access. AWS published guidance on verified software development, signaling enterprise adoption.

Near-term, expect formal verification in AI coding assistants: verified code snippets for critical paths, mathematical proofs for algorithm correctness, and formal checks before deployment. Medium-term, regulatory requirements may mandate formal verification for critical codebases in aerospace, finance, and healthcare. “Verified by default” could become a compliance checkbox. Long-term, formal verification may become standard for production AI-generated code.

Learning Lean4 basics positions developers for this shift. The official Theorem Proving in Lean 4 tutorial is accessible and well-documented. Understanding formal methods—even at a high level—provides context for evaluating AI tools and understanding their limitations.
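As a taste of what those basics look like, here is the same small fact about logical “and” proved in two styles that introductory Lean 4 material typically covers: a term-style proof and a tactic-style proof. The theorem names are hypothetical, and the example relies only on core Lean.

```lean
-- Term style: build the proof object directly.
-- Given a proof `h` of `p ∧ q`, swap its two components.
theorem and_swap_term (p q : Prop) (h : p ∧ q) : q ∧ p :=
  ⟨h.right, h.left⟩

-- Tactic style: the same fact, proved step by step.
theorem and_swap_tactic (p q : Prop) (h : p ∧ q) : q ∧ p := by
  constructor        -- split the goal `q ∧ p` into goals `q` and `p`
  · exact h.right    -- first goal: `q`
  · exact h.left     -- second goal: `p`
```

Either way, the result is a proof object checked by Lean’s kernel; the choice between the two styles is about readability, not about the strength of the guarantee.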

Some investors remain skeptical. One prominent AI investor stated, “I doubt that most AI generated code is going to end up formally verified.” They’re probably right for general-purpose applications. Most CRUD apps don’t need mathematical proofs of correctness. But for high-risk sectors—aerospace, finance, healthcare—the question isn’t “will formal verification become standard?” It’s “how fast will regulations require it?”

Harmonic’s bet: faster than the skeptics think. The hallucination crisis is forcing enterprises to choose between probabilistic accuracy and provable correctness. For critical applications, proof wins. Every time.

Key Takeaways

- Harmonic AI raised $120M at a $1.45B valuation, using Lean4 formal verification to achieve 0% hallucination rates on verified outputs (vs. OpenAI’s 0.7-0.9%)

- Aristotle model won 2025 IMO gold with machine-verified mathematical proofs, no human verification needed

- High-risk sectors (aerospace, finance, healthcare) demand provable correctness, not probabilistic accuracy

- Formal verification is moving into developer tools: Lean4 theorem provers, FormalStep/VeriBench benchmarks, AWS guidance

- Developers should learn Lean4 basics as regulatory requirements may mandate formal verification for critical codebases