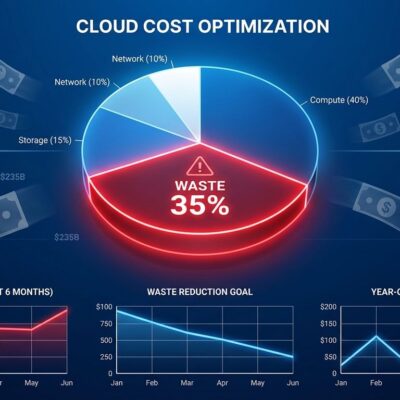

Public cloud spending hit $1.03 trillion in 2026, yet enterprises waste 32% of it—roughly $330 billion thrown away annually. The irony isn’t lost on anyone: organizations pour money into cloud infrastructure while leaving a third of it unused, misconfigured, or forgotten. But here’s the twist: the nature of that waste has fundamentally changed. Traditional culprits like idle VMs and forgotten snapshots are yesterday’s problem. Today’s waste driver is AI—bursty training jobs, always-on inference, and complex hybrid architectures that organizations don’t fully understand yet. The State of FinOps 2026 Report reveals 98% of companies now manage AI costs, up from just 31% two years ago, yet only 34% have mature capabilities. We’re spending faster than we can optimize.

The Old Waste Playbook No Longer Works

Traditional FinOps optimization (shutting down idle VMs, right-sizing instances, cleaning up snapshots) captured the “big rocks” of waste. Breakdowns of cloud waste attribute up to 60% of it to three categories: idle resources (20-25%), overprovisioning (15-20%), and orphaned environments (10-15%). Mature organizations have already addressed most of this and now face diminishing returns.

One practitioner in the State of FinOps report put it bluntly: they’ve “hit the big rocks and now face smaller opportunities requiring more effort.” The low-hanging fruit is gone. What’s left requires sophisticated tooling, granular tracking, and more organizational effort per dollar saved. Meanwhile, AI workloads introduced an entirely new waste category that doesn’t fit the old playbook.

The numbers tell the story. 84% of enterprises report 6% or more gross margin erosion from unexpected AI costs. Practitioners describe managing “something they don’t fully understand yet, with pricing models still being invented.” The FinOps tactics that worked from 2015 to 2024 are hitting their limits. AI workloads require fundamentally different approaches: per-token cost tracking, forecasting bursty GPU usage, navigating pricing models that vary 4.7x for identical hardware—H100 spot at $1.49 per hour versus on-demand at $6.98.

FinOps Expanded Beyond Cloud Cost Management

FinOps in 2026 is no longer synonymous with cloud cost optimization. The State of FinOps report shows 90% of organizations now manage SaaS costs, up 25% year-over-year. 64% manage licensing. 57% manage private cloud. 48% manage data centers. What started as “let’s understand our AWS bill” evolved into “let’s understand all technology spending.”

The organizational signal is clear: 78% of FinOps practices report to the CTO or CIO, not the CFO. This isn’t a finance function anymore; it’s a technology capability. The discipline matured from reactive cost-cutting to proactive value management, and priorities shifted with it. Expanding scope, governance, and organizational alignment now collectively outweigh pure optimization work.

AI cost management adoption exploded. 31% of organizations managed AI costs in 2024. 63% in 2025. 98% in 2026. That’s not gradual adoption—that’s everyone scrambling to understand costs that appeared overnight as AI moved from experimental budgets to operational reality. FinOps teams aren’t just optimizing cloud bills anymore. They’re tracking SaaS license utilization, AI model inference costs, data center amortization, and private cloud spending. The goal shifted from “reduce the cloud bill” to “guide how technology investments are planned, governed, and valued.”

AI Costs Are the New Frontier (And Nobody Has It Figured Out)

AI cost management represents genuinely uncharted territory. Unlike predictable cloud VMs with stable pricing, AI workloads are bursty, unpredictable, and priced on models that are literally still being invented. The State of FinOps report identifies visibility, allocation, and ROI as practitioners’ top three challenges. Translation: teams don’t know what they’re spending, can’t assign it to the right cost centers, and can’t prove it’s worth it. Yet AI spending is exploding.

Only 34% of organizations have mature AI cost management capabilities. That means two-thirds are flying blind on costs that can erode 6% or more of gross margins. GPU pricing illustrates the complexity: H100 instances range from $1.49 per hour for marketplace spot instances to $6.98 for hyperscaler on-demand—a 4.7x spread for identical hardware. GPU spot instances offer 70-80% savings, but require workload design changes most teams haven’t implemented.
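The spread and savings figures above follow directly from the two quoted hourly prices. A minimal sketch of that arithmetic, where the function names and the 1,000 GPU-hour job are illustrative assumptions and spot interruptions are ignored:

```python
# Hourly H100 prices quoted in the text (USD).
SPOT_PER_HOUR = 1.49       # marketplace spot
ON_DEMAND_PER_HOUR = 6.98  # hyperscaler on-demand


def price_spread(low: float, high: float) -> float:
    """Return how many times more expensive `high` is than `low`."""
    return high / low


def savings_fraction(low: float, high: float) -> float:
    """Return the fraction saved by paying `low` instead of `high`."""
    return 1 - low / high


def job_cost(gpu_hours: float, price_per_hour: float) -> float:
    """Return the cost of a job, ignoring interruptions and retries."""
    return gpu_hours * price_per_hour


spread = price_spread(SPOT_PER_HOUR, ON_DEMAND_PER_HOUR)
savings = savings_fraction(SPOT_PER_HOUR, ON_DEMAND_PER_HOUR)

print(f"Spread: {spread:.1f}x")    # about 4.7x
print(f"Savings: {savings:.0%}")   # about 79%, inside the 70-80% range

# Hypothetical 1,000 GPU-hour training job at each price.
print(f"Spot: ${job_cost(1000, SPOT_PER_HOUR):,.0f}")
print(f"On-demand: ${job_cost(1000, ON_DEMAND_PER_HOUR):,.0f}")
```

In practice the realized savings depend on how often spot capacity is reclaimed and how cheaply the workload can checkpoint and resume, which is the design change the paragraph refers to.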

Here’s the paradox: inference costs dropped 280-fold in two years, yet total AI spending is skyrocketing. Organizations face mission-critical AI needs for product differentiation, but the economics are murky. Traditional FinOps tools track monthly VM hours. AI requires per-token tracking, real-time inference cost monitoring, and understanding complex batch versus spot versus on-demand tradeoffs. Vendors claim 25-60% savings with “AI-aware automation,” but that requires instrumenting model pipelines, forecasting bursty workloads, and integrating cost data into product metrics. Most organizations aren’t there yet.
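To make "per-token tracking" concrete, here is a minimal sketch of attributing inference cost to teams. The model names, the per-1,000-token prices, and the `InferenceCall` record are all hypothetical; real pricing and logging schemas will differ by vendor.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens: (input, output).
# These are placeholders, not real vendor rates.
PRICES_PER_1K = {
    "small-model": (0.0005, 0.0015),
    "large-model": (0.01, 0.03),
}


@dataclass(frozen=True)
class InferenceCall:
    """One logged inference request (assumed schema)."""
    team: str
    model: str
    input_tokens: int
    output_tokens: int


def call_cost(call: InferenceCall) -> float:
    """Cost of a single call from its token counts."""
    in_price, out_price = PRICES_PER_1K[call.model]
    return (call.input_tokens / 1000) * in_price + (call.output_tokens / 1000) * out_price


def cost_by_team(calls: list[InferenceCall]) -> dict[str, float]:
    """Sum call costs per team so spend can be allocated."""
    totals: dict[str, float] = defaultdict(float)
    for call in calls:
        totals[call.team] += call_cost(call)
    return dict(totals)


calls = [
    InferenceCall("search", "large-model", 2000, 1000),
    InferenceCall("search", "small-model", 4000, 2000),
    InferenceCall("support", "large-model", 1000, 500),
]
for team, cost in cost_by_team(calls).items():
    print(f"{team}: ${cost:.4f}")
```

Keying the totals by team addresses the allocation challenge; the same aggregation could be keyed by product feature to tie cost to product metrics.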

What Actually Works (When Done Right)

The data on FinOps effectiveness is clear: organizations completing the maturity journey achieve 30-50% savings compared to unmanaged spending. But “completing the maturity journey” is the hard part. It requires executive sponsorship, cross-functional collaboration, organizational change, and continuous iteration. Quick wins are easy. Sustained improvement is hard.

Success isn’t about buying a tool; it’s about embedding financial awareness into engineering workflows. 60% of mature organizations use a centralized enablement model: small central teams set standards and provide tooling while distributed champions execute locally. Mandatory tagging strategies get enforced at resource creation, because tags added later typically do not relabel costs that have already been billed. Cost centers, environments, and projects become required metadata. Engineers see costs in dashboards alongside performance metrics.
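Enforcing tags at creation time amounts to rejecting any resource request that lacks the required metadata. A minimal sketch, assuming tag keys named `cost_center`, `environment`, and `project` (the key names and both functions are illustrative, not any cloud provider's API):

```python
# Required metadata from the text; the exact key names are an assumption.
REQUIRED_TAGS = ("cost_center", "environment", "project")


def missing_tags(tags: dict[str, str]) -> list[str]:
    """Return required tag keys that are absent or blank."""
    return [key for key in REQUIRED_TAGS if not tags.get(key, "").strip()]


def validate_resource(name: str, tags: dict[str, str]) -> None:
    """Raise ValueError if a resource request lacks required tags."""
    missing = missing_tags(tags)
    if missing:
        raise ValueError(f"{name}: missing required tags: {', '.join(missing)}")


# A fully tagged request passes silently.
validate_resource(
    "inference-api",
    {"cost_center": "cc-1042", "environment": "prod", "project": "search"},
)

# An incomplete request is rejected before anything is provisioned.
try:
    validate_resource("scratch-vm", {"environment": "dev"})
except ValueError as err:
    print(err)
```

A check like this would typically run in a CI pipeline or a provisioning policy hook, so untagged resources never reach the bill.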

The proactive governance trend matters most. Strong practitioner demand exists for pre-deployment architecture costing, where financial context is introduced before infrastructure is provisioned and before AI workloads are deployed. Instead of asking “why did costs spike last month?” (reactive), teams ask “what will this architecture cost before we deploy it?” (proactive). Cost becomes an engineering constraint evaluated alongside performance, reliability, and security.
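Pre-deployment costing can be as simple as estimating a plan's monthly cost and comparing it to a budget before anything is provisioned. The sketch below is one way to do that; the `PlannedResource` type, the example plan, and the budget are hypothetical, and the hourly rates reuse the H100 prices quoted earlier purely as sample inputs.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common approximation: 8,760 hours / 12


@dataclass(frozen=True)
class PlannedResource:
    """One line of a proposed architecture (assumed schema)."""
    name: str
    hourly_rate: float        # USD per instance-hour
    count: int = 1
    utilization: float = 1.0  # fraction of the month it runs


def monthly_estimate(resources: list[PlannedResource]) -> float:
    """Estimated monthly cost of the whole plan."""
    return sum(
        r.hourly_rate * r.count * HOURS_PER_MONTH * r.utilization
        for r in resources
    )


def check_budget(resources: list[PlannedResource], monthly_budget: float) -> tuple[bool, float]:
    """Return (within_budget, estimate) for a proposed plan."""
    estimate = monthly_estimate(resources)
    return estimate <= monthly_budget, estimate


plan = [
    PlannedResource("gpu-inference", hourly_rate=6.98, count=2),
    PlannedResource("batch-training", hourly_rate=1.49, count=8, utilization=0.25),
]
ok, estimate = check_budget(plan, monthly_budget=10_000)
print(f"Estimated ${estimate:,.2f}/month, within budget: {ok}")
```

Returning the estimate alongside the pass/fail flag lets a review show how far over budget a design is, rather than only blocking it.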

This shift works. AI-assisted optimization is pushing the waste floor toward 15-20%, down from today’s 28-35% baseline. Organizations that embed financial awareness early, automate governance, and iterate continuously see the 30-50% savings. Those that treat FinOps as a one-time cost-cutting exercise don’t.
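For a sense of scale, the waste rates above can be applied to the total spending figure quoted at the start of the article. This is a rough illustration only: it assumes total spending stays fixed at $1.03 trillion and treats each rate as an industry-wide average.

```python
TOTAL_SPEND_USD = 1.03e12  # public cloud spending quoted in the article


def waste_usd(total_spend: float, waste_rate: float) -> float:
    """Dollars wasted at a given waste rate."""
    return total_spend * waste_rate


current = waste_usd(TOTAL_SPEND_USD, 0.32)     # today's quoted waste rate
floor_high = waste_usd(TOTAL_SPEND_USD, 0.20)  # upper end of projected floor
floor_low = waste_usd(TOTAL_SPEND_USD, 0.15)   # lower end of projected floor

print(f"Waste at 32%: ${current / 1e9:.0f}B")
print(f"Waste at 20%: ${floor_high / 1e9:.0f}B")
print(f"Waste at 15%: ${floor_low / 1e9:.0f}B")
print(
    "Implied reduction: "
    f"${(current - floor_high) / 1e9:.0f}B to ${(current - floor_low) / 1e9:.0f}B"
)
```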

The $330 Billion Question

$330 billion in annual cloud waste isn’t inevitable, but eliminating it requires continuous evolution, not one-time fixes. The traditional FinOps playbook (idle resource cleanup, right-sizing, reserved instance optimization) captured most available savings. Diminishing returns are setting in. AI costs represent the new frontier, and organizations are figuring out the economics in real time while pricing models are still being invented.

FinOps expanded beyond cloud to cover all technology spending. 90% manage SaaS. 98% manage AI costs. The discipline evolved from reactive bill optimization to proactive value governance. Success means embedding financial awareness into engineering workflows before costs are incurred, not after bills arrive. Organizations that evolve their FinOps practices as fast as their spending grows will capture competitive advantage. Those that don’t will keep contributing to that $330 billion waste figure.

The question isn’t whether FinOps delivers ROI—the 30-50% savings prove it does. The question is whether organizations will adapt their practices faster than their spending accelerates. Based on 2026 trends, that race is still very much in play.