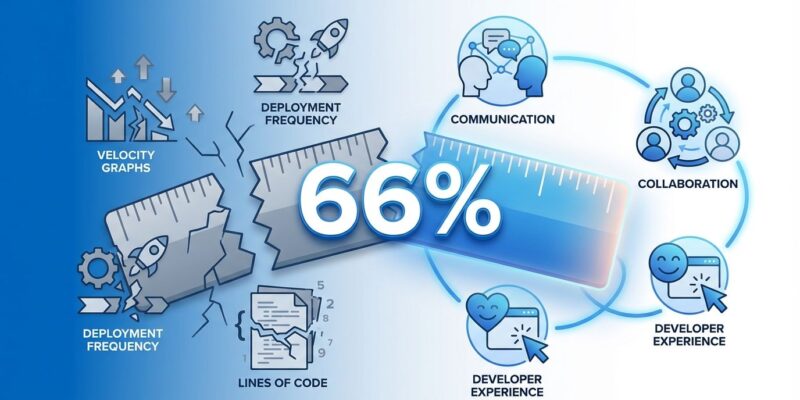

Two out of three developers don’t believe the metrics used to evaluate them actually reflect their work. That’s not a rounding error—that’s 66% of the 24,534 developers surveyed by JetBrains saying the systems measuring their productivity are broken. And this isn’t just an academic complaint. These metrics determine your promotions, your bonuses, and whether you’re first on the layoff list. While engineering organizations spent the last five years obsessing over DORA metrics and velocity tracking, they built measurement systems that the people being measured fundamentally don’t trust.

The Metrics Trust Crisis Hits Critical Mass

When two-thirds of developers tell you the yardstick is broken, maybe examine the yardstick instead of the people. The JetBrains State of Developer Ecosystem 2025 report—surveying developers across 194 countries—reveals a fundamental breakdown in developer productivity measurement: developers consistently request “greater transparency and clarity in measurement processes,” which is corporate-speak for “we have no idea how you’re evaluating us, and we’re pretty sure you’re getting it wrong.”

This isn’t an isolated gripe. Stack Overflow’s 2025 developer survey validates the trust crisis from another angle: only 29% of developers trust AI tool accuracy, down from 40% previously. Positive AI sentiment dropped to 60% from over 70% in 2023-2024. The pattern is clear—developers are losing faith in the systems claiming to measure and augment their work.

The impact is concrete. Developers ranked by PR count. Performance reviews based on story points. Promotion decisions tied to deployment frequency. When the productivity metrics are broken, every decision built on them inherits that failure. Your career advancement becomes a function of how well you game the system, not how much value you create.

AI Productivity Is Invisible to Current Metrics

Here’s the paradox keeping engineering leaders up at night: 85% of developers use AI tools regularly. One in five saves eight or more hours per week, a share that more than doubled from 9% in 2024 to 19% in 2025. Individual developers report massive productivity gains. Yet organizational delivery metrics stay stubbornly flat.

The DORA 2025 report quantified this disconnect: AI coding assistants boost individual output by 21%, with 98% more pull requests merged. But for teams lacking mature measurement practices, those gains vanish at the organizational level. The metrics those organizations rely on simply can’t see the shift.

Why? Because traditional measurements track the wrong things. Lines of code decrease when AI generates more concise solutions. Commit frequency drops when AI enables larger, more complete changes. Story points don’t capture time saved on research, debugging, or writing boilerplate. Velocity stays flat even though developers are working substantially smarter.

You’re flying blind in the AI era, measuring 2025 workflows with 2020 developer productivity metrics. The biggest productivity transformation in a decade is invisible to the systems designed to track it. Organizations can’t optimize what they can’t measure, and right now, they’re measuring the wrong things entirely.

Developers Say Human Factors Matter More Than Tools

Here’s the data that should terrify every VP of Engineering still obsessing over CI/CD speed: 62% of developers cite non-technical factors as critical to their performance. Only 51% say the same about technical factors. Read that again. Collaboration quality, communication effectiveness, goal clarity, and constructive feedback matter more than your pipeline optimization.

This represents a complete reversal from the 2020-2024 DORA era, when technical delivery metrics dominated everything. We spent billions optimizing build times and deployment frequency while developers were telling us—loudly, in every survey—that they needed better collaboration, clearer goals, and actual transparency in how they’re evaluated.

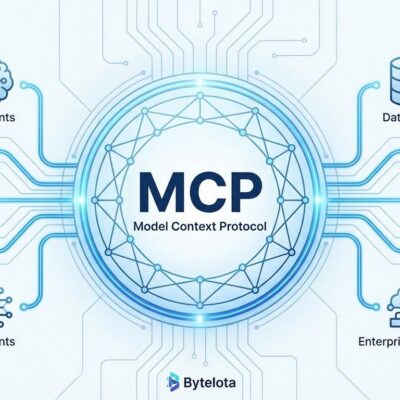

The industry is finally listening. DX Core 4, introduced in January 2025, represents a new measurement paradigm. Alongside Speed and Quality, it measures two dimensions that delivery metrics alone miss: Effectiveness (via the Developer Experience Index, which measures 14 factors of how well processes support engineers) and Impact (alignment between engineering output and business outcomes).

The business case is compelling: improving the Developer Experience Index by just one point saves 13 minutes per developer per week. That works out to roughly 10 to 11 hours per person per year, depending on how many working weeks you count. For a 150-person engineering org, a single point of improvement yields about 33 hours of recovered productivity every week (150 × 13 minutes = 1,950 minutes, or 32.5 hours). And unlike DORA metrics, developers actually trust DXI because it measures things they say matter.
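To make the DXI arithmetic easy to rerun for your own headcount, here is a minimal sketch. It assumes the quoted 13-minute figure scales linearly with both headcount and the number of DXI points gained, and the working-weeks value is an input you choose; the function names are hypothetical, not part of any DX tooling.

```python
# Sketch of the DXI arithmetic quoted above. Assumes (hypothetically) that the
# 13-minute saving scales linearly with headcount and with DXI points gained.
MINUTES_SAVED_PER_DEV_PER_WEEK = 13  # per one-point DXI gain (figure from the text)


def weekly_hours_recovered(headcount: int, dxi_points: float = 1.0) -> float:
    """Team-wide hours recovered per week for a given DXI improvement."""
    return headcount * dxi_points * MINUTES_SAVED_PER_DEV_PER_WEEK / 60


def annual_hours_per_dev(working_weeks: int, dxi_points: float = 1.0) -> float:
    """Hours recovered per developer per year; working_weeks is your assumption."""
    return dxi_points * MINUTES_SAVED_PER_DEV_PER_WEEK * working_weeks / 60


print(f"150 devs, +1 DXI: {weekly_hours_recovered(150):.1f} h/week")  # 32.5
print(f"per dev, 46 weeks: {annual_hours_per_dev(46):.1f} h/year")    # 10.0
print(f"per dev, 52 weeks: {annual_hours_per_dev(52):.1f} h/year")    # 11.3
```

The 46-week and 52-week cases bracket the "about 10 hours a year" figure: it holds if you exclude holidays and leave, and creeps past 11 hours if you count every calendar week.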

What DORA Metrics Got Wrong

DORA metrics weren’t wrong for 2020. Deployment frequency, lead time for changes, change failure rate, and mean time to recover provided a standardized way to benchmark software delivery performance. The problem is we’re not in 2020 anymore, and DORA’s limitations have gone from minor annoyances to fatal flaws.
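For readers who haven’t computed them by hand, the four DORA metrics reduce to simple arithmetic over deployment records. The sketch below is a simplified illustration under stated assumptions: the `Deployment` record shape and the `dora_metrics` function are hypothetical, lead time is measured from first commit to production, and time to restore is averaged only over failed deployments that have a recorded recovery time.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    commit_time: datetime                     # first commit of the change
    deploy_time: datetime                     # when it reached production
    failed: bool = False                      # did it cause a production failure?
    restored_time: Optional[datetime] = None  # when service recovered, if it failed


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_metrics(deploys: list[Deployment], period_days: int) -> dict[str, float]:
    """Simplified versions of the four DORA metrics over one reporting period."""
    if not deploys:
        raise ValueError("need at least one deployment")
    failures = [d for d in deploys if d.failed]
    restore_hours = [hours(d.restored_time - d.deploy_time)
                     for d in failures if d.restored_time is not None]
    return {
        "deployment_frequency_per_day": len(deploys) / period_days,
        "median_lead_time_hours": median(hours(d.deploy_time - d.commit_time)
                                         for d in deploys),
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_restore_hours": (sum(restore_hours) / len(restore_hours)
                                       if restore_hours else 0.0),
    }


# Hypothetical week: four deployments, one of which failed and took an hour to fix.
t0 = datetime(2025, 1, 6, 9, 0)
day = timedelta(days=1)
deploys = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0 + day, t0 + day + timedelta(hours=6),
               failed=True, restored_time=t0 + day + timedelta(hours=7)),
    Deployment(t0 + 2 * day, t0 + 2 * day + timedelta(hours=2)),
    Deployment(t0 + 3 * day, t0 + 3 * day + timedelta(hours=8)),
]
metrics = dora_metrics(deploys, period_days=7)
print(metrics)  # median lead time 5.0 h, failure rate 0.25, time to restore 1.0 h
```

Notice what the inputs are: timestamps and a failure flag. Nothing in the record describes who did the work, how it was done, or whether it mattered to users, which is exactly the blind spot the rest of this section describes.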

The gamification problem hit first. Teams split pull requests to boost deployment frequency. They cherry-picked easy tasks to inflate velocity. They optimized for the metrics instead of outcomes, because that’s what humans do when you measure them—they give you what you measure, whether or not it’s what you actually want.
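The PR-splitting effect is easy to see in a toy model. The sketch below uses entirely hypothetical data and helper names: the same three features ship either as one PR each or sliced into four PRs each over the same two weeks. The dashboard number quadruples while what users receive stays identical.

```python
# Toy illustration (hypothetical data): the same three features shipped either
# as one PR each or split into four PRs each, over the same two-week period.
def split_into_prs(features: list[str], prs_per_feature: int) -> list[tuple[str, int]]:
    """Break each feature into prs_per_feature separately deployed PRs."""
    return [(feature, part) for feature in features
            for part in range(1, prs_per_feature + 1)]


def deploys_per_week(prs: list[tuple[str, int]], weeks: int) -> float:
    """What a deployment-frequency dashboard reports."""
    return len(prs) / weeks


def features_delivered(prs: list[tuple[str, int]]) -> int:
    """What users actually received: distinct features, however they were sliced."""
    return len({feature for feature, _ in prs})


features = ["search", "csv-export", "sso"]
honest = split_into_prs(features, prs_per_feature=1)
gamed = split_into_prs(features, prs_per_feature=4)

for label, prs in [("honest", honest), ("gamed", gamed)]:
    print(label, deploys_per_week(prs, weeks=2), features_delivered(prs))
# honest 1.5 3
# gamed 6.0 3
```

Small PRs are not a problem in themselves. The point is that the metric moved fourfold without any change in delivered value, so a team rewarded on that number has every incentive to slice thinner.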

Then came the AI era gap. DORA can’t capture time saved by AI assistants. It doesn’t measure quality improvements from AI code review. It’s blind to research efficiency gains or reduced cognitive load. It was designed for a world where humans wrote every line of code and productivity meant “how fast can you ship features.” That world is gone.

Organizations are adapting. Atlassian, Google, and Microsoft have shifted toward hybrid approaches combining DORA’s delivery metrics with developer satisfaction surveys and experience measurements. The future isn’t abandoning metrics—it’s acknowledging that speed without context is just noise.

The Compensation Paradox Reveals Value Signals We Miss

Want proof our productivity metrics are broken? Only 2% of developers use Scala as their primary language. Yet 38% of top-paid developers use Scala. If you’re optimizing your career based on language popularity rankings—chasing JavaScript and Python because they top the adoption charts—you’re playing the wrong game.

The metrics we track (adoption rates, usage percentages, GitHub stars) completely miss the value signals that actually drive compensation. Specialization in valuable niches beats broad adoption of mainstream tech. But good luck finding “niche skill value” in your org’s engineering metrics dashboard.

What Happens Next

The developer productivity measurement revolution is already underway. Developers don’t trust the old systems—66% distrust is a mandate for change. AI productivity gains demand new frameworks that can actually see them. And the shift from technical to human-centric factors means the DORA era is definitively over.

If you’re a developer, advocate for transparency in how you’re measured. Push for frameworks like DX Core 4 that include satisfaction and impact, not just velocity. Refuse to let your career be defined by metrics designed for a pre-AI world.

If you’re leading engineering teams, the path is clear: adopt hybrid measurement approaches that combine delivery metrics with developer experience indices. Tie engineering outcomes to actual business impact. And please, for the love of all that’s holy, stop ranking individuals by PR count.

The tools we use to measure developer productivity will define the culture we build and the talent we retain. Right now, those tools are broken. The data is unambiguous, the trust is gone, and the AI era demands better. Time to update the yardstick.