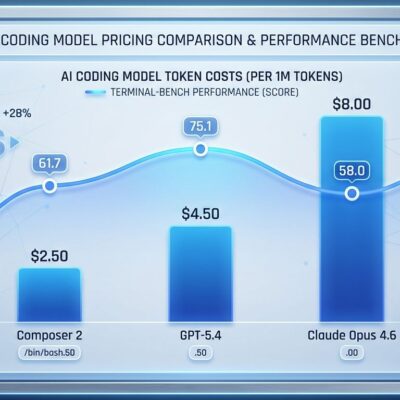

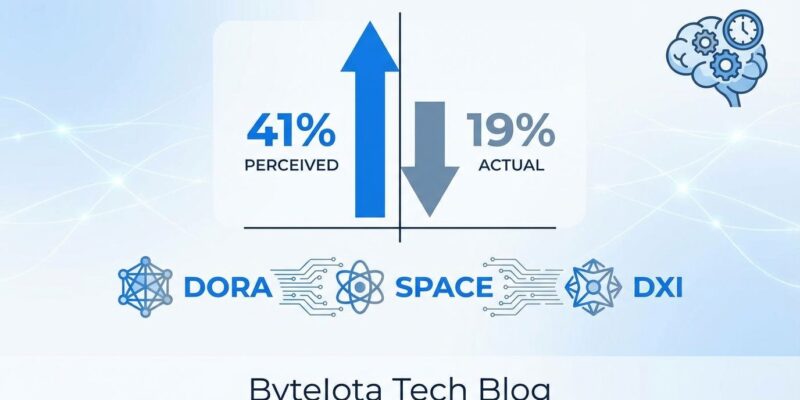

AI tools now generate 41% of all code (256 billion lines in 2024 alone), creating what appears to be a productivity revolution. Except it’s not. Developers expected a 24% productivity boost from AI yet actually worked 19% slower in complex codebases, according to a 2025 METR study. This 43-point expectations gap represents one of the largest perception-versus-reality disconnects in software engineering research. The productivity illusion stems from measuring output (lines of code, commits) rather than outcomes (delivery speed, system stability). With code churn projected to double in 2026 and delivery stability down 7.2% despite AI adoption, organizations face an uncomfortable truth: traditional productivity metrics are obsolete, and most companies are flying blind.

The Productivity Paradox: Why Developers Feel Faster But Measure Slower

The numbers expose a fundamental measurement crisis. AI generates 41% of all code, GitHub Copilot offers a 46% code completion rate, and 76% of professional developers now use AI tools. However, when researchers timed developers on real tasks, the developers took 19% longer to finish with AI assistance, not less. Even after experiencing this slowdown, they still believed AI had accelerated their work by 20%.

This perception gap exists because AI reduces cognitive friction. Syntax recall vanishes. Documentation lookups disappear. Boilerplate scaffolding materializes instantly. Code appearing on screen reinforces a strong feeling of productivity. But delivery metrics tell a different story. Lead time, defect rate, and deployment frequency remain unchanged because bottlenecks migrate downstream to code review and validation stages.

The acceptance gap reveals the quality problem: GitHub Copilot’s 46% completion rate sounds impressive until you learn that developers accept only 30% of that generated code. The other 70% fails review or requires substantial rework. Organizations that invest in AI tools on the strength of perceived gains rather than measured outcomes are setting themselves up for the “18-Month Wall”: the point where delivery cycles stall completely as accumulated technical debt and verification overhead overwhelm the team.

Why Lines of Code and Velocity Metrics Are Obsolete

Activity metrics like lines of code (LOC), commits per day, and velocity seem objective. They’re not: they reward the wrong behaviors. When developers know they’re evaluated on code volume, they optimize for that metric at the expense of code quality, maintainability, and business impact. One engineering lead on Reddit admits as much: “I’ve been heavily pointing all story tickets since management wants to play the velocity game.” Teams break up commits, inflate estimates, and avoid complex issues that might hurt their metrics.

LOC is language-dependent (50 lines in Java can equal 5 in Python) and trivially easy to game. Velocity metrics create even worse incentives. Teams hit their velocity targets while shipping features no one uses or solving problems that don’t matter. This misalignment between engineering metrics and value creation is why traditional measurement often makes teams slower, not faster. When measured on units of output alone, developers disengage: frustration grows, retention suffers, and the best engineers leave for companies that measure what actually matters.

The antidote to gaming metrics is never using a single metric in isolation. Every speed metric must be balanced by a quality metric. Every quantitative metric must be balanced by a qualitative metric. And most critically: measure systems, not people. Focus on the flow of work through the team to identify blockers, not low performers.
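The pairing rule above can be checked mechanically. The following is a minimal sketch, not an established tool: the `Metric` type, the `"speed"`/`"quality"` labels, and the `unbalanced_speed_metrics` helper are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    kind: str  # "speed" or "quality" (hypothetical labels for this sketch)


def unbalanced_speed_metrics(pairs: dict[str, str], metrics: list[Metric]) -> list[str]:
    """Return the names of speed metrics that have no quality metric paired with them."""
    by_name = {m.name: m for m in metrics}
    missing = []
    for m in metrics:
        if m.kind != "speed":
            continue
        # Look up the metric this speed metric is paired with, if any.
        partner = by_name.get(pairs.get(m.name, ""))
        if partner is None or partner.kind != "quality":
            missing.append(m.name)
    return missing


metrics = [
    Metric("deployment_frequency", "speed"),
    Metric("lead_time_for_changes", "speed"),
    Metric("change_failure_rate", "quality"),
]
pairs = {"deployment_frequency": "change_failure_rate"}

print(unbalanced_speed_metrics(pairs, metrics))  # ['lead_time_for_changes']
```

Here lead time is reported without a quality counterweight, so the check flags it before it ends up on a dashboard by itself.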

Modern Frameworks: DORA, SPACE, and DXI Solve the Measurement Crisis

Three frameworks have emerged to replace obsolete metrics, and they share a critical principle: measure systems, not individuals.

DORA metrics, created by Google’s DevOps Research and Assessment team, lead with 40.8% adoption. The framework measures system-level delivery through four core metrics: deployment frequency, lead time for changes, change failure rate, and mean time to recovery. What makes DORA different is its system-level perspective—when lead times increase, you investigate pipeline blockers rather than blaming individual developers. Reddit’s typically skeptical developer community gives DORA positive reviews specifically because it doesn’t target individuals.
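As a rough illustration of how the four metrics fall out of ordinary deployment records, here is a minimal sketch. The `Deployment` record and its field names are assumptions made for this example, not a schema defined by DORA, and real definitions vary (for instance, which timestamp starts the lead-time clock). Note that the input is a team's deployments, not an individual's.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime                  # first commit in the change (assumed start of lead time)
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # change caused an incident or rollback
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deploys: list[Deployment], window_days: int) -> dict[str, float]:
    """Compute the four metrics for one team over a window of `window_days` days."""
    if not deploys:
        raise ValueError("need at least one deployment")
    lead_times = [(d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deploys]
    failures = [d for d in deploys if d.failed]
    recoveries = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deploys),
        "mean_time_to_recovery_hours": sum(recoveries) / len(recoveries) if recoveries else 0.0,
    }


t0 = datetime(2025, 1, 6, 9, 0)
deploys = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=10), failed=True, restored_at=t0 + timedelta(hours=12)),
    Deployment(t0 + timedelta(days=1), t0 + timedelta(days=1, hours=6)),
    Deployment(t0 + timedelta(days=2), t0 + timedelta(days=2, hours=8)),
]
result = dora_metrics(deploys, window_days=7)
print(result)
```

With this sample data the median lead time is 7 hours, one deployment in four fails, and the single failure took 2 hours to recover.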

Meanwhile, the SPACE framework, developed by researchers at Microsoft, GitHub, and the University of Victoria, adds holistic dimensions: Satisfaction and well-being, Performance, Activity, Communication and collaboration, and Efficiency and flow. Teams measuring across all five SPACE dimensions improve productivity by 20-30% compared to those focused solely on activity metrics. Organizations typically select 1-3 metrics from each dimension to create balanced measurement that prevents gaming.
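The "1-3 metrics from each dimension" guideline lends itself to a simple configuration check. The sketch below is one possible way to express it; the dimension keys, the metric names in the sample selection, and the `selection_problems` helper are hypothetical and chosen only for illustration.

```python
SPACE_DIMENSIONS = (
    "satisfaction_and_well_being",
    "performance",
    "activity",
    "communication_and_collaboration",
    "efficiency_and_flow",
)


def selection_problems(selection: dict[str, list[str]], low: int = 1, high: int = 3) -> list[str]:
    """List every SPACE dimension whose metric count falls outside low..high."""
    problems = []
    for dim in SPACE_DIMENSIONS:
        count = len(selection.get(dim, []))
        if not low <= count <= high:
            problems.append(f"{dim}: {count} metrics selected, expected {low}-{high}")
    return problems


# Hypothetical selection: heavy on activity metrics, nothing on satisfaction.
selection = {
    "performance": ["change_failure_rate"],
    "activity": ["deploys", "prs_merged", "commits", "reviews_done"],
    "communication_and_collaboration": ["review_turnaround_hours"],
    "efficiency_and_flow": ["lead_time_for_changes"],
}

for problem in selection_problems(selection):
    print(problem)
```

A selection like this one, weighted toward activity counts with no satisfaction measure, is the imbalance the framework is meant to prevent.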

Additionally, the Developer Experience Index (DXI) quantifies tangible ROI that justifies productivity investments. Built on over four million data points from 800 organizations, DXI measures 14 key drivers including deep work capability, local iteration speed, build and test processes, change confidence, and code maintainability. Each one-point DXI improvement saves 13 minutes per developer per week, or about 11 hours annually. For a 100-person engineering team, gaining just 1 DXI point saves roughly 1,100 hours annually. Teams with top-quartile DXI scores show development speed and quality 4-5x greater than bottom-quartile teams.
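The time-savings arithmetic is easy to reproduce. This sketch takes the 13-minutes-per-week figure from the text and assumes a 52-week year with no adjustment for leave; under that assumption one point works out to roughly 11 hours per developer per year, which lines up with the team figure of about 1,100 hours. The function name is illustrative.

```python
MINUTES_SAVED_PER_POINT_PER_WEEK = 13  # figure quoted in the text
WEEKS_PER_YEAR = 52                    # assumption: no adjustment for holidays or leave


def hours_saved_per_year(dxi_points: float, developers: int) -> float:
    """Estimated hours saved per year for a given DXI gain and team size."""
    minutes = dxi_points * MINUTES_SAVED_PER_POINT_PER_WEEK * WEEKS_PER_YEAR * developers
    return minutes / 60


per_developer = hours_saved_per_year(1, 1)
team_of_100 = hours_saved_per_year(1, 100)

print(round(per_developer, 1))  # 11.3
print(round(team_of_100))       # 1127
```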

Organizations now dedicate 4.7% of engineering headcount to centralized developer productivity teams—roughly one productivity engineer per 21 developers. This investment reflects industry recognition that modern measurement requires dedicated focus, especially as AI reshapes how code gets written.

The Trust Gap: 96% Don’t Trust AI Code, Yet Only 48% Verify It

Sonar’s January 2026 State of Code survey revealed a critical verification gap: 96% of developers don’t fully trust that AI-generated code is functionally correct, yet only 48% verify it before committing. This creates a productivity paradox: the time theoretically saved drafting code must be reinvested into review and debugging, but roughly half of AI-generated code enters codebases unchecked. The result isn’t productivity. It’s technical debt accumulation disguised as speed gains.

The trust problem manifests in concrete costs: 66% of developers cite “AI solutions that are almost right, but not quite” as their biggest frustration, and 45% say debugging AI code takes more time than expected. Developers also now spend 91% more time on code review specifically to verify AI output. The time saved in the editor gets consumed downstream in validation workflows.

The 2025 DORA report confirms AI adoption positively correlates with Throughput (teams do ship code faster), but it also correlates with increased Instability. Code churn is expected to double in 2026, and delivery stability decreased 7.2% according to Google’s DORA data. AI accelerates code production, but without robust control systems, that acceleration exposes weaknesses rather than amplifying strengths. Organizations must therefore implement mandatory verification workflows (pre-commit quality gates, secondary LLM auditing, longitudinal code health tracking) or risk erasing any AI-driven gains with accumulated technical debt. Tools like SonarQube can reduce the Technical Debt Ratio by 22% within six months when verification is actually enforced.
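To make the idea of a quality gate concrete, here is a minimal sketch of the decision logic only. It does not call SonarQube or any other real tool: the `ChangeReport` fields, the 80% coverage threshold, and the rule that AI-assisted changes need human review are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ChangeReport:
    tests_passed: bool
    new_code_coverage: float  # fraction between 0.0 and 1.0
    new_blocker_issues: int   # e.g. from a static analysis run
    ai_assisted: bool
    human_reviewed: bool


def gate_failures(report: ChangeReport, min_coverage: float = 0.8) -> list[str]:
    """Return the reasons a change should be blocked; an empty list means it passes."""
    reasons = []
    if not report.tests_passed:
        reasons.append("tests failing")
    if report.new_code_coverage < min_coverage:
        reasons.append(f"coverage on new code below {min_coverage:.0%}")
    if report.new_blocker_issues > 0:
        reasons.append("new blocker issues from static analysis")
    if report.ai_assisted and not report.human_reviewed:
        reasons.append("AI-assisted change not yet reviewed by a human")
    return reasons


report = ChangeReport(
    tests_passed=True,
    new_code_coverage=0.65,
    new_blocker_issues=0,
    ai_assisted=True,
    human_reviewed=False,
)
failures = gate_failures(report)
print(failures)
```

In a real pipeline a check like this would run in a pre-commit hook or CI job and fail the build whenever the returned list is non-empty; the thresholds would be tuned per team.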

AI as Amplifier, Not Solution: Your Engineering System Determines AI ROI

The 2025 DORA report’s most critical insight gets overlooked in AI hype: AI amplifies the quality of the engineering system it operates within. Organizations with mature DevOps practices, well-defined workflows, strong automated testing, mature version control, and fast feedback loops convert AI-driven productivity gains into measurable improvements. Conversely, organizations lacking these foundations see AI accelerate code production while multiplying chaos downstream.

Without robust control systems (automated testing that catches defects, mature version control practices, fast feedback loops), increased change volume produces instability instead of productivity gains. AI doesn’t fix broken engineering systems. It makes broken systems fail faster.

Engineering leaders now dedicate 4.7% of headcount to centralized developer productivity teams, with technology companies leading at 4.89%, fintech at 4.36%, retail at 3.8%, and large enterprises trailing at 3.32%. This investment reflects recognition that AI alone doesn’t improve productivity: the underlying engineering system determines whether AI helps or hurts. Organizations that invest in AI coding tools without first building measurement maturity waste money. The antidote to both gaming metrics and AI-driven instability is the same: implement multi-dimensional frameworks (DORA + SPACE + DXI), build robust engineering practices, and measure systems, not people. Only then does AI amplify productivity instead of chaos.

Key Takeaways

- AI generates 41% of code, but developers work 19% slower in reality despite expecting 24% gains: a 43-point expectations gap that exposes fundamental measurement flaws

- Traditional metrics (lines of code, velocity, commits) reward wrong behaviors and are easily gamed, creating misalignment between engineering activity and business value

- Modern frameworks (DORA at 40.8% adoption, SPACE at 14.1%, DXI with 13 min/week ROI per point) prevent gaming by using multi-dimensional, system-level measurement

- The verification gap is unsustainable: 96% don’t trust AI code yet only 48% verify it, creating technical debt accumulation disguised as productivity gains

- AI amplifies your engineering system: mature DevOps practices see productivity gains while broken systems just fail faster, which is why organizations dedicate 4.7% of headcount to developer productivity