Cursor launched Automations on March 5, 2026, shifting AI coding from prompt-based to event-driven. Agents now trigger automatically on code commits, Slack messages, PagerDuty incidents, or timers, with no human prompt required. Cursor already runs hundreds of automations per hour internally, including Bugbot (automatic bug detection on every commit) and PagerDuty responders (which investigate incidents through Datadog logs and deliver proposed fixes to Slack). The launch comes as Cursor’s annualized revenue doubled to $2 billion in three months, with 60% now coming from enterprise customers demanding automated code review at scale.

From Prompt-and-Monitor to Event-Driven Automation

The shift is structural. Instead of developers manually prompting agents (“review this code”) and monitoring output, Automations let developers configure policies—trigger conditions plus instructions—and agents execute autonomously. Cursor CEO Michael Truell describes the change: humans are no longer “always initiating” but rather “called in at the right points in this conveyor belt.”
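
A policy in this sense pairs a trigger condition with instructions. The Python sketch below models that pairing and matches incoming events against it. The `Event` and `Policy` types, the source labels such as `"github.push"`, and the matching rule are all hypothetical; this is not Cursor's configuration format.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Event:
    source: str    # hypothetical source label, e.g. "github.push" or "pagerduty.alert"
    payload: dict  # event data delivered by the external system


@dataclass(frozen=True)
class Policy:
    name: str
    source: str                          # the event source this policy listens to
    condition: Callable[[Event], bool]   # extra filter applied to the event payload
    instructions: str                    # natural-language task handed to the agent


def matching_policies(event: Event, policies: list[Policy]) -> list[Policy]:
    """Return every policy whose source and condition both match the event."""
    return [p for p in policies if p.source == event.source and p.condition(event)]


policies = [
    Policy("review-main", "github.push",
           lambda e: e.payload.get("branch") == "main",
           "Review the diff for bugs and security issues; report findings."),
    Policy("triage-sev1", "pagerduty.alert",
           lambda e: e.payload.get("severity") == "sev1",
           "Pull recent error logs, inspect recent commits, and propose a fix."),
]

push = Event("github.push", {"branch": "main", "sha": "abc123"})
print([p.name for p in matching_policies(push, policies)])  # ['review-main']
```

Keeping the condition as a plain predicate lets one event fan out to several policies, or to none, without any prompt from a developer.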

Bugbot exemplifies this. It triggers on every code commit, analyzes the diff for bugs and security vulnerabilities, and posts findings to Slack. No developer prompts. No manual monitoring. The agent runs, verifies its own output, and notifies humans only when issues surface. PagerDuty responders follow the same pattern: alert fires, agent spins up, queries Datadog logs, analyzes recent commits, proposes a fix, and posts to Slack—all without human initiation.
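
The “verify, then notify only if something surfaces” step can be sketched as a filter in front of the notification. As a simple stand-in for self-verification, this hypothetical version discards any candidate finding that points at a line the commit did not change, and returns no message at all when nothing survives. The `Finding` type, the `changed` line map, and the message format are assumptions, not Bugbot's actual verification logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Finding:
    file: str
    line: int
    message: str


def verify(findings: list[Finding], changed: dict[str, set[int]]) -> list[Finding]:
    """Stand-in self-check: keep only findings on lines this commit actually changed."""
    return [f for f in findings if f.line in changed.get(f.file, set())]


def notification(findings: list[Finding], changed: dict[str, set[int]]) -> Optional[str]:
    """Build a message only if something survives verification; otherwise stay silent."""
    confirmed = verify(findings, changed)
    if not confirmed:
        return None
    details = "\n".join(f"{f.file}:{f.line} {f.message}" for f in confirmed)
    return f"{len(confirmed)} issue(s) found:\n{details}"


changed = {"app/auth.py": {12, 13, 14}}  # lines touched by the commit (hypothetical)
candidates = [
    Finding("app/auth.py", 13, "token compared with == (timing side channel)"),
    Finding("app/db.py", 40, "possible SQL injection"),  # file not touched by this commit
]
print(notification(candidates, changed))
```

Returning `None` rather than an empty message is what keeps humans out of the loop until there is something worth reviewing.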

This represents the evolution of AI coding tools: from code generation (GitHub Copilot autocomplete) to autonomous agents (Cursor Cloud Agents generating PRs) to event-driven workflows. Developers shift from author to orchestrator: they configure when agents run, review the output, and approve changes.

Solving the AI Code Verification Crisis

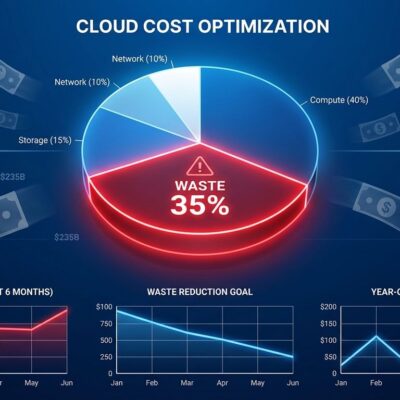

Cursor Automations directly address the industry’s verification bottleneck. Sonar’s 2026 State of Code Developer Survey found 96% of developers don’t fully trust AI-generated code, yet only 48% consistently verify it before committing. AI now accounts for 42% of all committed code, projected to reach 65% by 2027. Manual review doesn’t scale.

Moreover, 59% of developers rate the effort of verifying AI code as “moderate to substantial,” and 38% say reviewing AI code takes more effort than reviewing human-written code. Asked to name the most important skill for the AI era, 47% of respondents chose “reviewing and validating AI-generated code.” The problem isn’t just AI code quality; it’s the mismatch between generation speed and verification capacity.

Automations move verification from a manual process that doesn’t scale to an automated one that runs hundreds of times per hour. Bugbot runs continuously. Security audits happen automatically. Incident response agents investigate without waiting for on-call engineers to start digging through logs. This matters more as AI’s share of committed code grows from 42% toward a projected 65%. Without automated verification, the trust gap widens and technical debt accumulates.

How It Works: Cloud Sandboxes and MCP Integrations

When triggered, Cursor spins up an isolated cloud sandbox—a fresh VM with full codebase access, configured Model Context Protocol (MCP) integrations (Datadog, Slack, GitHub, PagerDuty), and a memory tool that enables learning across runs. Agents follow predefined instructions, verify their own output, and post findings to configured channels.
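
The key property of the sandbox is that every run starts from a fresh copy of the code and leaves nothing behind. Cursor uses a VM for this; the sketch below uses a temporary directory as a lightweight stand-in that shows only the fresh-copy-per-run, discard-afterwards pattern, with none of a VM's isolation guarantees. `run_in_fresh_workspace` is a hypothetical helper.

```python
import shutil
import tempfile
from pathlib import Path
from typing import Callable


def run_in_fresh_workspace(repo: Path, task: Callable[[Path], str]) -> str:
    """Copy the repo into a throwaway directory, run the task there, then delete it."""
    with tempfile.TemporaryDirectory(prefix="agent-run-") as tmp:
        workspace = Path(tmp) / "repo"
        shutil.copytree(repo, workspace)  # each run gets its own copy of the code
        return task(workspace)            # the copy is removed when the block exits


# Demonstration with a throwaway "repository" containing one file.
with tempfile.TemporaryDirectory() as src:
    repo = Path(src)
    (repo / "main.py").write_text("print('hi')\n")

    def agent_task(ws: Path) -> str:
        (ws / "notes.txt").write_text("scratch output")  # written only inside the copy
        return ",".join(sorted(p.name for p in ws.iterdir()))

    print(run_in_fresh_workspace(repo, agent_task))  # main.py,notes.txt
    print((repo / "notes.txt").exists())             # False: the source tree is untouched
```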

Supported triggers include code commits, Slack messages, Linear issues, GitHub pull requests, PagerDuty alerts, webhooks, and scheduled timers. Bugbot’s workflow: commit → sandbox spin-up → code analysis → self-verification → Slack notification → human review. PagerDuty integration follows the same pattern: alert → agent queries Datadog logs via MCP → analyzes recent code changes → proposes fix → posts to Slack.
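
The PagerDuty chain can be sketched as a sequence of tool calls feeding a summary. In this hypothetical Python version, `query_logs` and `recent_commits` are stubs returning canned data where a real agent would call Datadog and GitHub through MCP servers, and the correlation rule (flag commits that touched a file named in the error logs) is an illustrative assumption. The fix-drafting model call and the Slack post are omitted: `handle_alert` returns the text that would be posted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commit:
    sha: str
    files: tuple[str, ...]


def query_logs(service: str) -> list[str]:
    """Stub for a log-search tool call (canned data; a real agent would query Datadog)."""
    return [
        "ERROR checkout KeyError 'currency' at billing/price.py:88",
        "ERROR checkout KeyError 'currency' at billing/price.py:88",
    ]


def recent_commits(service: str) -> list[Commit]:
    """Stub for a source-control tool call listing recent commits (canned data)."""
    return [
        Commit("a1b2c3", ("billing/price.py", "billing/test_price.py")),
        Commit("d4e5f6", ("docs/README.md",)),
    ]


def files_in_errors(logs: list[str]) -> set[str]:
    """Extract the file path from log lines ending in '... at path:line'."""
    return {line.rsplit(" at ", 1)[1].split(":")[0] for line in logs if " at " in line}


def suspect_commits(logs: list[str], commits: list[Commit]) -> list[Commit]:
    """Keep commits that touched at least one file named in the errors."""
    failing = files_in_errors(logs)
    return [c for c in commits if failing & set(c.files)]


def handle_alert(service: str) -> str:
    """Alert -> logs -> recent changes -> summary text for human review."""
    logs = query_logs(service)
    suspects = suspect_commits(logs, recent_commits(service))
    if not suspects:
        return f"[{service}] {len(logs)} error lines; no recent commit touches the failing files."
    shas = ", ".join(c.sha for c in suspects)
    return f"[{service}] {len(logs)} error lines; suspect commit(s): {shas}. Fix draft pending on-call review."


print(handle_alert("checkout"))
```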

The memory tool is critical. Agents store context from previous runs to avoid duplicate alerts and refine detection patterns. If an engineer dismisses a Bugbot alert, the agent learns from it and stops flagging similar patterns. This feedback loop distinguishes Automations from static CI/CD scripts: the agents adapt as they are used.
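
A memory of dismissals can be sketched as a small persistent store consulted before each alert. Here “similar pattern” is assumed to mean the same rule firing in the same directory, and the store is a JSON file so the memory survives between runs. `DismissalMemory` and that similarity rule are hypothetical, not Cursor's memory tool.

```python
import json
import tempfile
from pathlib import Path


class DismissalMemory:
    """Persist dismissed finding patterns between agent runs (hypothetical scheme)."""

    def __init__(self, path: Path):
        self.path = path
        self.dismissed: set[str] = set(json.loads(path.read_text())) if path.exists() else set()

    @staticmethod
    def pattern(rule: str, file: str) -> str:
        # Assumed notion of "similar": the same rule in the same directory.
        directory = file.rsplit("/", 1)[0] if "/" in file else "."
        return f"{rule}@{directory}"

    def dismiss(self, rule: str, file: str) -> None:
        self.dismissed.add(self.pattern(rule, file))
        self.path.write_text(json.dumps(sorted(self.dismissed)))  # persist for later runs

    def should_report(self, rule: str, file: str) -> bool:
        return self.pattern(rule, file) not in self.dismissed


# Demonstration: two "runs" sharing one memory file.
with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "dismissed.json"
    DismissalMemory(store).dismiss("unused-import", "src/utils/a.py")  # run 1: engineer dismisses
    memory = DismissalMemory(store)                                    # run 2: reloads from disk
    print(memory.should_report("unused-import", "src/utils/b.py"))     # False
    print(memory.should_report("sql-injection", "src/utils/b.py"))     # True
```

How broadly “similar” is defined is the real design decision: too narrow and dismissed noise returns, too broad and real issues get silenced.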

Cursor vs GitHub Copilot: Event-Driven vs Prompt-Based

Cursor Automations are fully event-driven, which sets them apart from GitHub Copilot’s human-initiated agent mode and Replit’s prompt-based autonomous development. Copilot requires developers to assign a GitHub issue to trigger its agent, and Replit emphasizes natural-language prompts for full-stack generation; Cursor’s agents, by contrast, react automatically to external events. Commit happens. Agent runs. PagerDuty fires. Agent investigates. No prompts.

The market signal is strong. Cursor reached $2 billion in annual recurring revenue (60% from enterprise) after doubling in three months, while GitHub Copilot reached approximately $100 million ARR in its first year. The two figures come from different years and product stages, but the roughly 20x gap points to enterprise demand for automated workflows over manual prompts. Businesses don’t want developers spending time prompting agents; they want agents working in the background while developers focus on creative problem-solving.

Expect competitive response. GitHub and Microsoft will likely adopt event-driven triggers beyond assigned issues. Replit may add scheduled and webhook-based automations. Event-driven automation represents the next competitive frontier in AI coding tools.

The Trust and Control Debate

Automation raises legitimate concerns. Will developers accept background agents that review code without being prompted? Who’s responsible if Bugbot misses a critical vulnerability or generates false positives? Cursor’s design addresses this with a human-in-the-loop model: agents propose changes (Slack notifications, PR comments), and humans approve them. Bugbot posts findings to Slack for review; PagerDuty responders propose fixes that on-call engineers approve.
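
The propose-then-approve contract can be enforced as a small state machine: an agent can create a proposal, but applying it requires a named human approval first, which also records who signed off. `Proposal`, `Status`, and the transitions are a hypothetical sketch of the pattern, not part of any Cursor API.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PROPOSED = "proposed"
    APPROVED = "approved"
    REJECTED = "rejected"
    APPLIED = "applied"


@dataclass
class Proposal:
    summary: str
    status: Status = Status.PROPOSED
    reviewer: Optional[str] = None  # the human accountable for the decision

    def _decide(self, reviewer: str, outcome: Status) -> None:
        if self.status is not Status.PROPOSED:
            raise ValueError(f"cannot review a {self.status.value} proposal")
        self.status, self.reviewer = outcome, reviewer

    def approve(self, reviewer: str) -> None:
        self._decide(reviewer, Status.APPROVED)

    def reject(self, reviewer: str) -> None:
        self._decide(reviewer, Status.REJECTED)

    def apply(self) -> None:
        # The agent may only merge or deploy after a named human has approved.
        if self.status is not Status.APPROVED:
            raise PermissionError("a human must approve before the change is applied")
        self.status = Status.APPLIED


proposal = Proposal("Guard against a missing 'currency' key in the price lookup")
try:
    proposal.apply()
except PermissionError as err:
    print(err)  # a human must approve before the change is applied
proposal.approve("dana (on-call)")
proposal.apply()
print(proposal.status.value, "by", proposal.reviewer)  # applied by dana (on-call)
```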

However, as automation scales to “hundreds per hour,” developers worry about losing agency and becoming reviewers of agent output rather than primary code authors. This mirrors the DevOps shift from manual deployments to CI/CD pipelines. Developers who embraced automation thrived; those who resisted were left behind. The same pattern is emerging with AI coding tools.

The philosophical shift is profound: from “I write code” to “I configure automation policies and review agent output.” Developers who cling to a romanticized ideal of manual review (“I must personally inspect every line”) face the same fate as sysadmins who refused to adopt CI/CD. The question isn’t whether to automate verification but how to do it responsibly, with appropriate human oversight.

Key Takeaways

- Cursor Automations (launched March 5, 2026) shift AI coding from prompt-based to event-driven—agents trigger automatically on code commits, Slack messages, PagerDuty incidents, and timers without human prompts.

- Targets the verification bottleneck: 96% of developers don’t fully trust AI code, yet only 48% consistently verify it (Sonar survey). Automations make verification itself automatic, scaling to hundreds of checks per hour.

- Technical architecture: Cloud sandboxes provide isolated VMs with codebase access, MCP integrations connect to external services (Datadog, Slack, PagerDuty), and memory tools enable agents to learn from feedback and refine detection patterns.

- Competitive differentiation: Cursor ($2B ARR, 60% enterprise) offers event-driven automation while GitHub Copilot requires human-initiated triggers (assigned issues). Event-driven workflows free developers from constant prompting and monitoring.

- The developer role is evolving from code author to automation orchestrator—configuring policies, reviewing agent output, approving changes. This mirrors the DevOps shift from manual deployments to CI/CD pipelines.

With AI projected to produce 65% of committed code by 2027, event-driven automation isn’t optional. The shift from prompt-based to event-driven tools is inevitable. Developers who embrace automated verification workflows will gain productivity; those who cling to manual review will face mounting technical debt and verification bottlenecks.