On March 6, 2026, OpenAI launched Codex Security, an AI agent that scans code for vulnerabilities, validates them in sandboxes, and generates patches. During beta testing across 1.2 million commits, it found 792 critical and 10,561 high-severity vulnerabilities, including 14 CVEs in major projects like OpenSSH, GnuTLS, PHP, and Chromium, while cutting false positives by more than 50% and noise by 84%. That puts pressure on traditional security scanners like Snyk, GitHub Advanced Security, and SonarQube, whose unvalidated warnings leave developers with alert fatigue.

The False Positive Problem

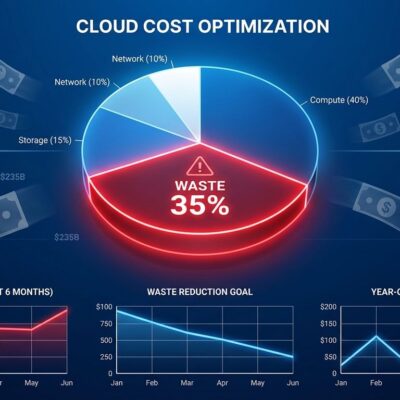

Security scanners have a credibility crisis. Developers ignore 90% of security alerts because traditional tools flood teams with false positives. The result is alert fatigue costing enterprises $500,000 per year in wasted manual validation. When every scan produces dozens of unvalidated warnings, teams learn to treat security tooling as unreliable noise.

Codex Security attacks this problem through sandbox validation. Instead of dumping unverified alerts on developers, it pressure-tests findings in isolated containers before surfacing them. The approach delivered an 84% noise reduction during beta and cut false positives by more than 50%. More importantly, it reduced over-reported severity by 90%, meaning the critical alerts you see are actually critical.

How It Works: Threat Models and Validation

Codex Security operates in three stages, starting with something traditional tools skip entirely: threat modeling. Before scanning a single line of code, it maps your application’s security structure—entry points, trust boundaries, authentication assumptions, risky components. This context informs every subsequent finding.
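To make the idea of a threat model concrete, here is a minimal sketch of how that context could be represented as data. OpenAI has not published Codex Security's internal format, so the `ThreatModel` class, its field names, and the sample endpoints below are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ThreatModel:
    """Hypothetical container for the context a threat model captures."""
    entry_points: list[str] = field(default_factory=list)
    trust_boundaries: list[tuple[str, str]] = field(default_factory=list)
    auth_assumptions: list[str] = field(default_factory=list)
    risky_components: list[str] = field(default_factory=list)

    def exposed_risks(self) -> list[str]:
        """Risky components that are also directly reachable entry points."""
        return [c for c in self.risky_components if c in self.entry_points]


# Illustrative values only; not taken from any real project.
model = ThreatModel(
    entry_points=["POST /login", "GET /files/{path}"],
    trust_boundaries=[("internet", "api-gateway"), ("api-gateway", "database")],
    auth_assumptions=["session cookie is validated before any file access"],
    risky_components=["GET /files/{path}", "legacy-report-generator"],
)

priority = model.exposed_risks()
```

In this sketch, a component that is both risky and reachable from outside is the natural place to look first, which is the kind of prioritization a threat model is meant to enable.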

Stage two uses that threat model to search for vulnerabilities, categorizing each by real-world impact in your specific system. But here’s where it diverges from conventional scanners: suspected issues get reproduced in sandboxed environments. The agent runs exploits, captures logs, and records whether reproduction succeeded or failed. Only validated vulnerabilities surface to your team.
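The validate-before-surfacing step can be sketched as a simple gate. This is an illustration of the pattern, not OpenAI's implementation: `Finding`, `ValidationRecord`, and the `reproduce` callback (standing in for an exploit attempt run inside a sandbox) are assumed names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    title: str
    severity: str
    # Hypothetical hook: runs the exploit attempt in a sandbox, True if it worked.
    reproduce: Callable[[], bool]


@dataclass
class ValidationRecord:
    finding: Finding
    reproduced: bool
    log: str


def validate(findings: list[Finding]) -> list[ValidationRecord]:
    """Try to reproduce each finding and record the outcome either way."""
    records = []
    for finding in findings:
        try:
            reproduced = finding.reproduce()
            log = "reproduced" if reproduced else "did not reproduce"
        except Exception as exc:  # a crashed attempt counts as unvalidated
            reproduced = False
            log = f"reproduction error: {exc}"
        records.append(ValidationRecord(finding, reproduced, log))
    return records


def surfaced(records: list[ValidationRecord]) -> list[Finding]:
    """Only findings that actually reproduced reach the team."""
    return [r.finding for r in records if r.reproduced]


def _sandbox_timeout() -> bool:
    raise RuntimeError("sandbox timeout")


# Illustrative findings; the lambdas stand in for real sandbox runs.
candidates = [
    Finding("SQL injection in /search", "critical", lambda: True),
    Finding("Possible path traversal", "high", lambda: False),
    Finding("Suspected race condition", "high", _sandbox_timeout),
]
records = validate(candidates)
visible = surfaced(records)
```

Every attempt is logged, including the failures, but only the one finding that reproduced would be shown to developers. That separation is what distinguishes this pattern from a scanner that reports every pattern match.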

Stage three generates patches using full system context. The goal isn’t just fixing the vulnerability but minimizing regressions. Traditional tools tell you what’s broken; Codex Security proposes how to fix it without breaking something else.
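One plausible way to read "minimizing regressions" is as an acceptance check on each candidate patch: the exploit must stop working and the existing tests must still pass. The sketch below assumes that reading; `accept_patch` and its two callbacks are hypothetical, not part of any published Codex Security interface.

```python
from typing import Callable


def accept_patch(
    exploit_reproduces: Callable[[], bool],
    tests_pass: Callable[[], bool],
) -> tuple[bool, str]:
    """Accept a patch only if it closes the hole without breaking the test suite.

    Both callbacks are assumed to run against the patched code.
    """
    if exploit_reproduces():
        return False, "vulnerability still reproduces"
    if not tests_pass():
        return False, "patch introduces a regression"
    return True, "accepted"


# Illustrative outcomes for three candidate patches.
good = accept_patch(lambda: False, lambda: True)
incomplete = accept_patch(lambda: True, lambda: True)
breaking = accept_patch(lambda: False, lambda: False)
```

Checking the exploit first means a patch that fails to fix the issue is rejected before any time is spent on the wider test suite.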

Real Vulnerabilities in Real Projects

During beta, Codex Security discovered 14 CVEs across widely used open-source projects. The affected projects include OpenSSH, GnuTLS, GOGS, Thorium, libssh, PHP, and Chromium, projects that underpin critical internet infrastructure. Examples include a GnuTLS heap buffer overflow (CVE-2025-32990), two GOGS authentication bypasses, and two stack buffer overflows in gpg-agent (part of GnuPG).

Chandan Nandakumaraiah, Head of Product Security at NETGEAR and CVE Board Member, described the experience: “Its findings were impressively clear and comprehensive, often giving the sense that an experienced product security researcher was working alongside us.”

Open-source maintainers echoed a consistent theme during feedback: “The challenge isn’t a lack of vulnerability reports, but too many low-quality ones.” Codex Security addresses this by surfacing fewer findings with higher confidence.

OpenAI vs. the Security Tooling Market

OpenAI’s models already helped disrupt code completion by powering tools such as GitHub Copilot and Cursor. Now the company is targeting the multi-billion-dollar security tooling market dominated by Snyk, GitHub Advanced Security, and SonarQube. Anthropic released Claude Code Security last month, signaling an AI security arms race between frontier model companies.

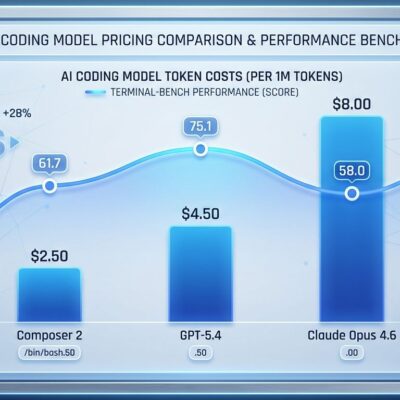

The competitive wedge is AI-native validation. Rule-based scanners like Snyk excel at pattern matching but can’t reason about context. GitHub Advanced Security integrates deeply with GitHub workflows but suffers from the same false positive problem. Codex Security leverages GPT-5.4’s reasoning capabilities to build project-specific threat models and validate findings that legacy tools miss.

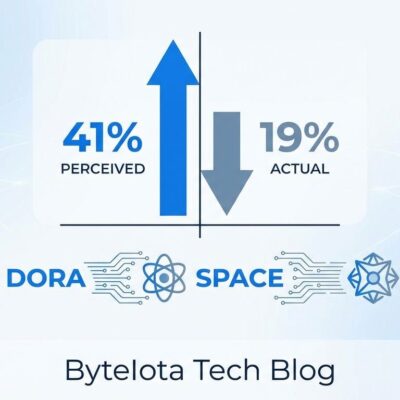

Interestingly, when Anthropic launched Claude Code Security, cybersecurity stocks dropped sharply as investors worried about disruption. Codex Security’s launch provoked no such reaction. The market may be underestimating OpenAI’s threat to incumbents.

Privacy, Access, and Open Questions

Enterprise adoption hinges on trust. Codex Security analyzes your entire codebase context, meaning proprietary code, trade secrets, and sensitive business logic get sent to OpenAI’s infrastructure. For companies with strict data sovereignty requirements, that’s a dealbreaker. GitHub Advanced Security and self-hosted SonarQube don’t require external code transmission.

Access is currently limited to ChatGPT Enterprise, Business, and Education customers. Individual developers, small teams, and open-source projects can’t use it yet. OpenAI offers free usage for the first month, after which it becomes a paid feature within existing subscription tiers. Pricing details for standalone access remain unclear.

Accuracy questions linger. Can AI truly validate complex, context-dependent vulnerabilities? What about false negatives—vulnerabilities Codex Security misses? Over-reliance on AI security agents could create new blind spots if teams trust findings without verification.

What’s Next

Codex Security represents a shift from code generation to code auditing. AI assistants spent the last two years helping developers write code faster. Now they’re validating whether that code is secure. The logical next step is AI agents that detect, validate, and auto-fix vulnerabilities in production systems without human intervention.

For developers drowning in false positives, Codex Security’s 84% noise reduction is compelling. For security vendors, it’s a warning: AI-native tools with frontier model reasoning capabilities are coming for traditional SAST and DAST markets. The question isn’t whether AI will dominate security tooling, but which AI company captures the enterprise budgets.